Token-Efficient Prompting: Compression, Structured Formats, and Measurable Savings (2025–2026)

1. Why Token Efficiency Matters Now

Every LLM API call is priced per token. As of April 2026, input token costs range from $0.10/M (GPT-4.1 nano, Gemini 2.0 Flash) to $15.00/M (Claude Opus 4), while output tokens cost 3–8× as much on the models listed below, from $0.40/M up to $75.00/M [1]. Production systems routinely send 2,000–10,000 tokens per request in system prompts, conversation history, RAG context, and few-shot examples. At 100,000 requests/day on GPT-4.1 ($2.00/$8.00 per M), a 50% input reduction saves ~$100/day or ~$36,500/year even at a modest 1,000 input tokens per request, and proportionally more for longer prompts. Organizations report that systematic token management (compression, caching, prompt optimization, and model routing) can reduce LLM costs by 60–80% while maintaining or improving output quality [2][3].
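The savings figure is simple arithmetic and can be reproduced in a few lines. The sketch below assumes about 1,000 input tokens per request, which is the value that makes the $100/day figure work out; the function name is illustrative only.

```python
def daily_input_savings(requests_per_day: int,
                        input_tokens_per_request: int,
                        input_price_per_million: float,
                        reduction: float) -> float:
    """Dollars saved per day by cutting input tokens by `reduction` (0 to 1)."""
    tokens_per_day = requests_per_day * input_tokens_per_request
    baseline_cost = tokens_per_day / 1_000_000 * input_price_per_million
    return baseline_cost * reduction


if __name__ == "__main__":
    # GPT-4.1 input price of $2.00/M; 1,000 tokens/request is an assumption.
    per_day = daily_input_savings(100_000, 1_000, 2.00, 0.50)
    print(f"${per_day:,.0f}/day, ${per_day * 365:,.0f}/year")
```

Doubling the prompt length doubles the result, so the same 50% reduction is worth about $200/day at 2,000 input tokens per request.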

Current API Pricing Landscape (April 2026)

| Model | Provider | Input ($/M tokens) | Output ($/M tokens) | Context Window |
|---|---|---|---|---|
| GPT-4.1 | OpenAI | $2.00 | $8.00 | 1M |
| GPT-4.1 mini | OpenAI | $0.40 | $1.60 | 1M |
| GPT-4.1 nano | OpenAI | $0.10 | $0.40 | 1M |
| Claude Sonnet 4 | Anthropic | $3.00 | $15.00 | 200K |
| Claude Opus 4 | Anthropic | $15.00 | $75.00 | 200K |
| Gemini 2.5 Pro | Google | $1.25 | $10.00 | 1M |
| Gemini 2.5 Flash | Google | $0.15 | $0.60 | 1M |
| Llama 4 Maverick | Meta (hosted) | $0.20 | $0.60 | 1M |

Source: PE Collective cross-provider comparison, April 2026 [1].

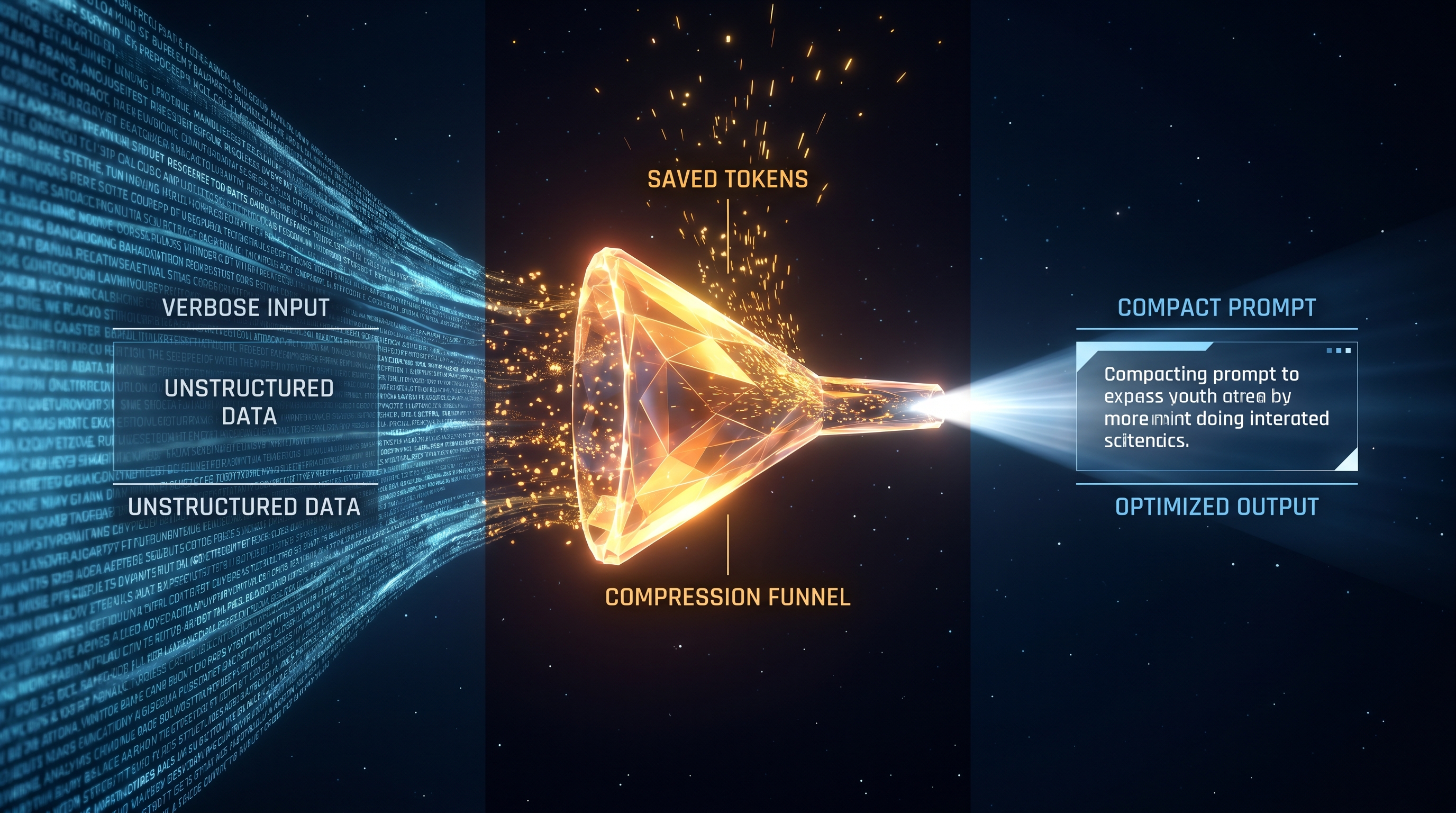

2. Prompt Compression Frameworks

2.1 LLMLingua Family (Microsoft Research)

LLMLingua is the most mature prompt compression framework in production use as of 2026. It uses a small language model (GPT-2 Small or LLaMA-7B) to score tokens by perplexity and remove low-information ones before the prompt reaches the target LLM [4][5].
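The core idea, scoring tokens by how informative they are and dropping the least informative, can be illustrated with a toy example. This is not LLMLingua's implementation: a unigram frequency table stands in for the small neural model's token probabilities, and the function name and `keep_ratio` parameter are assumptions made for this sketch.

```python
import math
from collections import Counter


def prune_low_information(tokens: list[str],
                          reference_counts: Counter,
                          keep_ratio: float) -> list[str]:
    """Keep the `keep_ratio` fraction of tokens with the highest self-information.

    Self-information is -log p(token) under a unigram model built from
    `reference_counts`, a stand-in for a small LM's token probabilities.
    """
    total = sum(reference_counts.values())
    vocab = len(reference_counts)

    def surprisal(tok: str) -> float:
        # Add-one smoothing so unseen tokens get a finite, high score.
        p = (reference_counts[tok.lower()] + 1) / (total + vocab + 1)
        return -math.log(p)

    n_keep = max(1, round(len(tokens) * keep_ratio))
    ranked = sorted(range(len(tokens)),
                    key=lambda i: surprisal(tokens[i]), reverse=True)
    keep = set(ranked[:n_keep])
    # Preserve the original order of the surviving tokens.
    return [tok for i, tok in enumerate(tokens) if i in keep]


if __name__ == "__main__":
    reference = Counter("the a of to is and the the of a to is in the and".split())
    prompt = "the invoice total is 4200 euros and the due date is friday".split()
    print(prune_low_information(prompt, reference, keep_ratio=0.6))
```

With this toy reference table the function words are dropped and the content words survive. A real compressor replaces the unigram table with model probabilities conditioned on context and adds safeguards for structure-critical tokens.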

Benchmark results:

| Variant | Compression | Accuracy Impact | Speed vs. LLMLingua-1 | Key Strength |
|---|---|---|---|---|
| LLMLingua | Up to 20× | −1.5 pts on GSM8K | Baseline | Highest compression ratio |
| LLMLingua-2 | 3–5× | Comparable | 3–6× faster | Task-agnostic, BERT-size encoder |
| LongLLMLingua | 4× | +17.1% on RAG tasks | Similar | Mitigates "lost in the middle" |

LLMLingua-2 is trained via data distillation from GPT-4 and uses a BERT-level encoder for token classification, making it practical for latency-sensitive production workloads. LongLLMLingua reports a 94% cost reduction on the LooGLE long-context benchmark, and on NaturalQuestions it improves GPT-3.5-Turbo performance by up to 21.4% at roughly 4× compression, because compression focuses the model on the relevant tokens [5][6].

Production case study: A SaaS customer-support deployment using RAG reduced monthly LLM costs from $42,000 to $2,100 (95% reduction) by applying LLMLingua to compress retrieved context from ~50K tokens to ~2.5K tokens per request, with no model change [7].

Per-call cost savings (2.1× compression on 1,431 tokens):

| Model | Original Cost/Call | Compressed Cost/Call | Savings/1,000 Calls |
|---|---|---|---|
| GPT-4 | $0.04293 | $0.02010 | $22.83 |
| GPT-4 Turbo | $0.01431 | $0.00670 | $7.61 |
| Claude 3 Opus | $0.02147 | $0.01005 | $11.42 |
| Claude 3 Sonnet | $0.00429 | $0.00201 | $2.28 |

Source: LLMLingua benchmark, Nayak 2025 [8].
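The per-call figures can be checked with a short calculation. The input prices and the compressed length of 670 tokens below are assumptions back-calculated from the table (for example, $0.04293 / 1,431 tokens gives $30 per million for GPT-4); they are not stated in the source.

```python
ORIGINAL_TOKENS = 1_431
COMPRESSED_TOKENS = 670  # assumption: implied by the compressed-cost column

# Assumed input prices in $ per million tokens, back-calculated from the table.
PRICE_PER_MILLION = {
    "GPT-4": 30.00,
    "GPT-4 Turbo": 10.00,
    "Claude 3 Opus": 15.00,
    "Claude 3 Sonnet": 3.00,
}


def cost(tokens: int, price_per_million: float) -> float:
    """Input cost in dollars for one call."""
    return tokens * price_per_million / 1_000_000


def savings_per_1000_calls(price_per_million: float) -> float:
    """Dollars saved over 1,000 calls when the prompt is compressed."""
    saved = (cost(ORIGINAL_TOKENS, price_per_million)
             - cost(COMPRESSED_TOKENS, price_per_million))
    return saved * 1_000


if __name__ == "__main__":
    for model, price in PRICE_PER_MILLION.items():
        print(f"{model}: {cost(ORIGINAL_TOKENS, price):.5f} -> "
              f"{cost(COMPRESSED_TOKENS, price):.5f}, "
              f"saves ${savings_per_1000_calls(price):.2f} per 1,000 calls")
```

Because savings scale linearly with the token price, the same compression is worth ten times more on GPT-4 than on Claude 3 Sonnet under these assumed prices.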

Practical limitations (2026 evaluation): An April 2026 study measuring LLMLingua in realistic self-hosted inference found that end-to-end latency improvements greater than 1.3× only appeared with non-optimized frameworks, high-end GPUs (A100), or very long prompts exceeding 10,000 tokens at 4×+ compression. Among all variants, only LLMLingua-2 proved practical under production conditions. Summarization and QA tasks remained robust under compression, while code completion and few-shot examples were sensitive [9].

2.2 ProCut (EMNLP 2025 Industry Track)

ProCut is an LLM-agnostic, training-free framework that compresses prompts through attribution analysis. It segments prompt templates into semantically meaningful units, quantifies their impact on task performance, and prunes low-utility components [10].

Results:

- 78% fewer tokens in production prompt templates

- Up to 62% better task performance than alternative compression methods on 5 public benchmarks

- An LLM-driven attribution estimator reduces compression latency by over 50%

- Integrates with existing prompt-optimization frameworks

ProCut is particularly effective for bloated production prompts that have grown through iterative additions of instructions, few-shot examples, and heuristic rules.
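Attribution-based pruning can be sketched with the simplest possible estimator, leave-one-out ablation. This is an illustration of the general idea rather than ProCut's actual estimator; `leave_one_out_prune`, `score`, and `tolerance` are hypothetical names, and in practice `score` would run the task on a validation set.

```python
from typing import Callable, Sequence


def leave_one_out_prune(segments: Sequence[str],
                        score: Callable[[list[str]], float],
                        tolerance: float = 0.0) -> list[str]:
    """Drop prompt segments whose removal costs at most `tolerance` in score."""
    kept = set(range(len(segments)))

    def build(indices: set[int]) -> list[str]:
        return [segments[i] for i in sorted(indices)]

    baseline = score(build(kept))
    # Attribution of a segment = score drop when that segment alone is removed.
    attributions = sorted((baseline - score(build(kept - {i})), i) for i in kept)
    # Try removing the least useful segments first, re-checking after each cut.
    for _, i in attributions:
        candidate = kept - {i}
        if candidate and baseline - score(build(candidate)) <= tolerance:
            kept = candidate
    return build(kept)


def toy_score(prompt_segments: list[str]) -> float:
    """Stand-in metric: fraction of required keywords still present."""
    text = " ".join(prompt_segments).lower()
    required = ("json", "refund")
    return sum(word in text for word in required) / len(required)


if __name__ == "__main__":
    template = [
        "You are a helpful assistant.",
        "Always answer in JSON.",
        "Be polite and friendly at all times.",
        "Refund requests go to tier 2.",
    ]
    print(leave_one_out_prune(template, toy_score))
```

Leave-one-out needs one evaluation per segment plus one per removal attempt, which is why cheaper attribution estimators matter for large templates.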

2.3 CompactPrompt (October 2025)

CompactPrompt merges hard prompt compression with file-level data compression. It prunes low-information tokens using self-information scoring and dependency-based phrase grouping, while applying n-gram abbreviation to recurrent textual patterns and uniform quantization to numerical columns [11].

Results: Up to 60% total token and cost reduction on TAT-QA and FinQA benchmarks, with less than 5% accuracy drop for Claude-3.5-Sonnet and GPT-4.1-Mini.
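Of the steps listed above, uniform quantization is the easiest to show in isolation. The sketch below is textbook uniform quantization, not necessarily the exact scheme CompactPrompt uses; the function names and the `levels` parameter are assumptions.

```python
def quantize_column(values: list[float],
                    levels: int = 16) -> tuple[list[int], float, float]:
    """Map each value to an integer code in [0, levels - 1].

    Returns the codes plus the (offset, step) needed to reconstruct values.
    """
    lo, hi = min(values), max(values)
    if hi == lo or levels < 2:
        return [0] * len(values), lo, 0.0
    step = (hi - lo) / (levels - 1)
    codes = [round((v - lo) / step) for v in values]
    return codes, lo, step


def dequantize_column(codes: list[int], lo: float, step: float) -> list[float]:
    """Approximate reconstruction; the error is at most step / 2 per value."""
    return [lo + c * step for c in codes]


if __name__ == "__main__":
    codes, lo, step = quantize_column([10.0, 12.5, 15.0, 20.0], levels=5)
    print(codes, lo, step)
    print(dequantize_column(codes, lo, step))
```

Short integer codes usually tokenize into fewer pieces than long decimals, at the price of bounded rounding error, so this only suits columns where approximate values are acceptable.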

2.4 CROP: Token-Efficient Reasoning (April 2026, Google Research)

CROP (Regularized Prompt Optimization) targets the reasoning-token explosion in agentic AI systems. Rather than compressing context, it optimizes the prompt itself to elicit shorter chain-of-thought traces [12].

Results: 80.6% reduction in token consumption with only a nominal decline in accuracy. This is significant for reasoning-heavy workloads, where output tokens dominate costs because they are priced at a multiple of input tokens.

2.5 Squeez: Tool-Output Pruning for Coding Agents (April 2026)

Squeez is a task-conditioned pruner for coding-agent tool output. A compact LoRA-tuned Qwen 3.5 2B model removes irrelevant lines from structured tool responses (grep results, test output, build logs) [13].

Results: 0.86 recall and 0.80 F1 while removing 92% of input tokens, outperforming the 18× larger Qwen 3.5 35B and all heuristic baselines (BM25 reached only 0.22 recall on tool output). This addresses a major cost driver in agentic coding workflows where tool observations can be tens of thousands of tokens.

3. Structured Output Formats

Switching from verbose prose or standard JSON to compact structured formats is one of the simplest and highest-ROI token optimizations.

3.1 TOON (Token-Oriented Object Notation)

TOON centralizes schema definitions and sends mostly values, eliminating repeated JSON keys [14][15].

Benchmark (user data extraction):

| Format | Tokens | Reduction vs. JSON |
|---|---|---|
| JSON | 88 | n/a |
| TOON | 28 | 68% |

Production benchmark (500 users, Gemini 2.5 Flash):

| Metric | JSON | TOON | Improvement |
|---|---|---|---|
| Estimated tokens | 12,706 | 6,056 | 52.3% |
| Estimated latency | 2.565s | 1.221s | 52.4% |
| Cost per request | $0.001906 | $0.000908 | 52.3% |

However, independent testing found TOON's parsing reliability at only 70% without optimization. With optimized prompts, parsing reached 100% but the token advantage narrowed. For single extractions, JSON with native SDK structured outputs (Pydantic) used fewer total tokens. TOON's advantage compounds in multi-step agent workflows where output becomes the next input — a 24% reduction per tool call across 20 calls saves ~3,040 tokens per session [15].
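To make the schema-once, values-only idea concrete, here is a minimal sketch of a TOON-style tabular encoding for a uniform list of flat records. It is a simplification under stated assumptions: it handles no nesting, quoting, or escaping, the `to_tabular` helper is hypothetical, and character counts are only a rough proxy for token counts.

```python
import json


def to_tabular(name: str, rows: list[dict]) -> str:
    """Encode a uniform list of flat dicts as one header line plus value rows."""
    if not rows:
        return f"{name}[0]:"
    fields = list(rows[0])
    if any(list(row) != fields for row in rows):
        raise ValueError("all rows must share the same keys in the same order")
    # Keys appear once in the header instead of once per record.
    header = f"{name}[{len(rows)}]{{{','.join(fields)}}}:"
    lines = ["  " + ",".join(str(row[f]) for f in fields) for row in rows]
    return "\n".join([header, *lines])


if __name__ == "__main__":
    users = [
        {"id": 1, "name": "Alice", "role": "admin"},
        {"id": 2, "name": "Bob", "role": "user"},
    ]
    compact = to_tabular("users", users)
    print(compact)
    print(len(json.dumps({"users": users})), "chars as JSON vs", len(compact))
```

The gap widens with the number of records, since JSON repeats every key per record while the tabular form pays for the keys once.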

3.2 TSV/CSV Over JSON

A production PDF extraction pipeline on Gemini 2.5 found that switching from JSON to TSV output encoding reduced structural output tokens by 50–60%. Nested JSON objects increase overhead geometrically; repeated keys increase it linearly. TSV eliminates both [16].
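A minimal sketch of the TSV alternative, using only the Python standard library. The field names and the `to_tsv` helper are illustrative assumptions, and the example flattens records to a fixed column list rather than handling nesting.

```python
import csv
import io
import json


def to_tsv(rows: list[dict], fields: list[str]) -> str:
    """Serialize records as TSV: one header row, then one line per record."""
    buf = io.StringIO()
    writer = csv.writer(buf, delimiter="\t", lineterminator="\n")
    writer.writerow(fields)
    for row in rows:
        # Missing fields become empty cells instead of raising.
        writer.writerow([row.get(f, "") for f in fields])
    return buf.getvalue()


if __name__ == "__main__":
    records = [
        {"page": 1, "vendor": "Acme", "amount": "12.50"},
        {"page": 2, "vendor": "Globex", "amount": "99.00"},
    ]
    tsv = to_tsv(records, ["page", "vendor", "amount"])
    print(tsv)
    print(len(json.dumps(records)), "chars as JSON vs", len(tsv), "as TSV")
```

When asking a model to emit TSV, it helps to state the column order explicitly in the prompt and to validate the column count per line, since there are no keys to recover from a misaligned row.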

3.3 General Structured Prompting

Converting verbose prose instructions to bullet points, key-value pairs, or numbered lists typically reduces token count by 15–30% while improving model consistency and reducing ambiguity [17].

4. Few-Shot Example Pruning

Few-shot examples are the second-largest token cost after context documents. Most implementations include too many examples or examples that are too verbose [18].

Optimal example counts by task type:

| Task Type | Optimal Examples | Diminishing Returns Beyond |
|---|---|---|
| Binary classification | 2 | 4 |
| Multi-class classification (5–10 classes) | 3–5 | 8 |
| Structured extraction | 2–3 | 5 |
| Complex reasoning (CoT) | 1–2 | 3 |

Pruning technique: Take the longest few-shot example and cut it by 50%. Test quality. If unchanged, cut the next example. Continue until quality drops, then restore the last cut. Most teams reduce few-shot token usage by 40–60% this way [18].
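The pruning loop can be sketched as follows. How to "cut by 50%" is not specified, so this sketch assumes keeping the first half of each example's words; `quality` is a hypothetical callback that would run an evaluation set and return a score, and `min_quality` is the acceptance threshold.

```python
from typing import Callable


def prune_examples(examples: list[str],
                   quality: Callable[[list[str]], float],
                   min_quality: float) -> list[str]:
    """Halve examples from longest to shortest until quality would drop."""
    current = list(examples)
    # Visit examples from longest to shortest (by word count).
    order = sorted(range(len(current)),
                   key=lambda i: len(current[i].split()), reverse=True)
    for i in order:
        words = current[i].split()
        # Assumption: "cut by 50%" means keeping the first half of the words.
        trimmed = " ".join(words[: max(1, len(words) // 2)])
        candidate = current[:i] + [trimmed] + current[i + 1:]
        if quality(candidate) < min_quality:
            break  # quality dropped: discard this cut and stop
        current = candidate
    return current
```

Stopping at the first failed cut is what "restore the last cut" amounts to here: the failing candidate is never committed. Given the caveat that classification tasks can be sensitive to trimmed examples, the `quality` check should use the real task metric rather than a proxy.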

Concrete example (intent classification):

- Before: 3 examples, 180 tokens (redundant cancellation variants)

- After: 1 example, 60 tokens — 67% reduction, same accuracy [19]

Important caveat: An April 2026 study found that compressing few-shot examples via token pruning caused performance drops of up to 52% on classification tasks (TREC, LSHT-ZH), because compression removed class-indicative patterns. For tasks where zero-shot performance matches the baseline (TriviaQA, SAMSum), skipping few-shot examples entirely is preferable to compressing them [9].

5. Prompt Caching: The Highest-Leverage Optimization

Prompt caching reuses the processed KV-cache of static prompt prefixes across requests, offering the largest single cost reduction for workloads with repeated system prompts, tool definitions, or RAG context [20][21].
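With explicit caching, the static prefix is marked in the request body. The sketch below only builds a request payload in the shape used by Anthropic's Messages API, where a `cache_control` marker on a system block requests caching of the prefix up to that point. The helper name is hypothetical and the model ID is a placeholder to check against current documentation.

```python
import json


def build_cached_request(system_prompt: str,
                         user_message: str,
                         model: str = "claude-sonnet-4-20250514") -> dict:
    """Build a Messages API body whose system prompt is marked cacheable."""
    return {
        "model": model,  # placeholder model ID; verify before use
        "max_tokens": 512,
        "system": [
            {
                "type": "text",
                "text": system_prompt,
                # Marks the end of the static prefix to be cached.
                "cache_control": {"type": "ephemeral"},
            }
        ],
        # The per-request part goes after the cached prefix.
        "messages": [{"role": "user", "content": user_message}],
    }


if __name__ == "__main__":
    body = build_cached_request("You are a support agent. <long policy text>",
                                "Where is my order?")
    print(json.dumps(body, indent=2))
```

The design point is ordering: anything that changes per request (user message, retrieved snippets, timestamps) must come after the cached block, because a change inside the prefix invalidates the cache.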

Provider Comparison (April 2026)

| Feature | Anthropic | OpenAI | Google Gemini |
|---|---|---|---|
| Cache type | Explicit (cache_control) | Automatic | Automatic / Explicit |
| Cache read discount | 90% | 50% | 90% (Gemini 2.5) |
| Cache write premium | 1.25× (5-min TTL), 2× (1-hour TTL) | None | Storage: $4.50/MTok/hr |
| Min tokens | 1,024 | 1,024 | 1,024 (auto) / 32,768 (explicit) |
| TTL | 5 min default (resets on hit), 1 hour optional | 5–60 min (24h for GPT-5.1/4.1) | ~10 min (auto) / user-defined |
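The read discount and write premium combine into a simple cost model. The sketch below is an approximation under stated assumptions: one cache write followed by cache hits for every later request (that is, traffic frequent enough to keep the cache warm), and illustrative token counts that do not come from the source.

```python
def cached_input_cost(prefix_tokens: int,
                      dynamic_tokens: int,
                      requests: int,
                      price_per_million: float,
                      read_discount: float = 0.90,
                      write_multiplier: float = 1.25,
                      cache_writes: int = 1) -> float:
    """Total input cost in dollars for `requests` calls sharing a cached prefix."""
    per_token = price_per_million / 1_000_000
    # Cache writes are billed at a premium over the normal input price.
    writes = cache_writes * prefix_tokens * per_token * write_multiplier
    # Every other request reads the prefix at the discounted rate.
    reads = (requests - cache_writes) * prefix_tokens * per_token * (1 - read_discount)
    # The non-cached part of each request is billed normally.
    dynamic = requests * dynamic_tokens * per_token
    return writes + reads + dynamic


if __name__ == "__main__":
    cached = cached_input_cost(2_000, 200, 1_000, 3.00)
    uncached = cached_input_cost(2_000, 200, 1_000, 3.00,
                                 read_discount=0.0, write_multiplier=1.0)
    print(f"uncached ${uncached:.2f} vs cached ${cached:.2f}")
```

If the cache expires between requests, raise `cache_writes` accordingly; with a 1.25× write premium and a 90% read discount, a write pays for itself after a single cache hit.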

Real-world savings (chatbot, 5,000 daily users, Claude Sonnet):

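| Scenario | Monthly Cost | Savings |
|---|---|---|
| No caching | $2,767 | n/a |
| With Anthropic caching (90%) | $289 | $2,478/mo (89%) |
| With OpenAI caching (50%) | $1,868 | $1,822/mo (49%) |

The OpenAI row does not reconcile with the $2,767 baseline: a $1,868 bill with $1,822 in savings implies a no-cache baseline of about $3,690, so that row likely reflects a different model's pricing.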

Source: AI Cost Check, March 2026 [21].

Production case study: A code-editing agent at a 12-engineer shop migrated from GPT-4.1 (no cache) to Claude Sonnet 4 with 1-hour caching. Monthly bill dropped from $8,900 to $1,350 and p50 latency fell 40% [20].

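Key insight: Combining Anthropic prompt caching with the Batch API can reduce effective spend by up to 95% on eligible workloads, since a 90% cache-read discount stacked on the 50% batch discount leaves 0.10 × 0.50 = 5% of the list price for cached input tokens [22].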

6. Compounding Savings: Layered Optimization

Individual techniques compound because each reduces the base the next operates on [18]:

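| Optimization Layer | Input Tokens/Call | Output Tokens/Call | Monthly Cost | Cumulative Savings |
|---|---|---|---|---|
| Baseline (no optimization) | 1,500 | 500 | $12,000 | n/a |
| Prompt compression (−40% input) | 900 | 500 | $10,200 | 15% |
| Few-shot pruning (−50% examples) | 650 | 500 | $9,450 | 21% |
| Output length control (−60% output) | 650 | 200 | $5,950 | 50% |
| Prompt caching (90% on 500-token prefix) | 650 | 200 | $4,700 | 61% |
| Batch processing (50% discount, 40% of traffic) | 650 | 200 | $3,760 | 69% |

The first three rows are consistent with 1M calls per month at $3.00/$15.00 per million input/output tokens. At those prices, 650 input and 200 output tokens per call would cost $4,950 (59% cumulative savings) rather than $5,950, so the last three rows should be treated as approximate.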

Recommended implementation order (by single-technique impact): output length control → prompt caching → prompt compression → few-shot pruning → batch processing [18].
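The way layers compound can be checked with a small cost model. The call volume and prices below are assumptions inferred from the $12,000 baseline (1M calls per month at $3.00/$15.00 per million input/output tokens); the sketch covers only the token-reduction layers, since the exact caching and batch assumptions are not stated.

```python
CALLS_PER_MONTH = 1_000_000  # assumption: implied by the $12,000 baseline
INPUT_PRICE = 3.00           # assumed $ per million input tokens
OUTPUT_PRICE = 15.00         # assumed $ per million output tokens


def monthly_cost(input_tokens: int, output_tokens: int) -> float:
    """Monthly bill in dollars for a fixed per-call token profile."""
    per_call = (input_tokens * INPUT_PRICE
                + output_tokens * OUTPUT_PRICE) / 1_000_000
    return per_call * CALLS_PER_MONTH


if __name__ == "__main__":
    layers = [
        ("Baseline", 1_500, 500),
        ("Prompt compression", 900, 500),
        ("Few-shot pruning", 650, 500),
    ]
    baseline = monthly_cost(1_500, 500)
    for name, tokens_in, tokens_out in layers:
        bill = monthly_cost(tokens_in, tokens_out)
        print(f"{name}: ${bill:,.0f} ({1 - bill / baseline:.0%} cumulative savings)")
```

Under these assumed prices an output token costs five times as much as an input token, which is why trimming output length tends to move the bill more than any input-side technique.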

7. Emerging Tools and Libraries (2025–2026)

tokenpruner (PyPI, 2026)

Open-source Python library offering composite compression strategies. Claims 70–80% reduction on general prompts via a 6-stage pipeline: template stripping (20–40%), code minification (40–60%), Jaccard deduplication (30–70%), heuristic semantic scoring, sliding window, and hard truncation [23].
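Jaccard deduplication, one of the stages named above, is easy to show in isolation. This is a generic sketch of the technique, not tokenpruner's API; the function names and the 0.8 threshold are assumptions.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard similarity: size of the intersection over size of the union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def deduplicate(chunks: list[str], threshold: float = 0.8) -> list[str]:
    """Keep a chunk only if it is not too similar to one already kept."""
    kept: list[str] = []
    kept_sets: list[set[str]] = []
    for chunk in chunks:
        words = set(chunk.lower().split())
        if all(jaccard(words, seen) < threshold for seen in kept_sets):
            kept.append(chunk)
            kept_sets.append(words)
    return kept


if __name__ == "__main__":
    context = [
        "Refunds are processed within 5 business days",
        "refunds are processed within 5 business days",
        "Shipping takes 2 weeks",
    ]
    print(deduplicate(context))
```

The pairwise comparison is quadratic in the number of chunks, which is fine for a few dozen retrieved passages; larger inputs would need an approximate method such as MinHash.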

OpenCompress (2026)

Commercial proxy service with a 5-stage pipeline (input normalization → semantic deduplication → token pruning → context compression → output shaping). Reports 62% average input reduction and 50–70% total cost reduction with ≥0.80 cosine similarity to original responses, verified on agent traces from Claude Code and Cursor [24].

SimpleTool (Function-Calling Compression)

Reduces tool-definition tokens 4–6× in function-calling prompts, achieving up to 9.6× end-to-end speedup. Relevant because tool definitions are a growing share of prompt tokens in agentic architectures [19].

Cortex (Context Management for Coding Agents)

Content-aware output compression for coding agents. Type-specific summarization of test results, TypeScript errors, lint output, git diffs, and JSON responses. Typical reduction: 60–90% on verbose tool outputs [25].

8. Summary of Techniques and Expected Savings

| Technique | Typical Token Reduction | Quality Risk | Best For |
|---|---|---|---|
| LLMLingua-2 | 3–5× (67–80%) | Low (comparable accuracy) | RAG pipelines, long context |
| ProCut | 78% | Low to none | Bloated production prompts |
| CompactPrompt | Up to 60% | Low (<5% accuracy drop) | Agentic workflows with data |
| CROP (reasoning optimization) | 80.6% | Nominal | CoT-heavy reasoning tasks |
| Structured formats (TOON/TSV) | 50–68% | None if parsed correctly | Extraction, multi-step agents |
| Few-shot pruning | 40–60% | Test per task | Classification, extraction |
| Prompt caching (Anthropic) | 90% lower cost on cached tokens | None | Any repeated prefix of at least 1,024 tokens |
| Output length control | 20–40% | None if calibrated | All workloads |
| Layered optimization (all combined) | 60–80% total cost | Low | Production systems at scale |

The field has matured rapidly: prompt compression moved from research curiosity to production infrastructure between 2024 and 2026. The key insight is that no single technique dominates — maximum savings come from layering compression, structured formats, pruning, and caching, with each technique targeting a different part of the cost stack.

References

[1] PE Collective, "Cross-Provider LLM API Pricing Comparison (April 2026)," https://pecollective.com/blog/llm-pricing-comparison-2026/

[2] JetThoughts, "Cost Optimization for LLM Applications: Token Management," https://jetthoughts.com/blog/cost-optimization-llm-applications-token-management/

[3] Particula Tech, "How to Reduce LLM Costs Through Token Optimization," https://particula.tech/blog/reduce-llm-token-costs-optimization

[4] Microsoft Research, "LLMLingua Series," https://www.microsoft.com/en-us/research/project/llmlingua/

[5] Jiang et al., "LLMLingua: Compressing Prompts for Accelerated Inference of Large Language Models," EMNLP 2023, https://arxiv.org/abs/2310.05736

[6] microsoft/LLMLingua GitHub repository, https://github.com/microsoft/LLMLingua

[7] TokenMix, "LLMLingua 2026: 20x Prompt Compression," https://tokenmix.ai/blog/llmlingua-prompt-compression-2026

[8] Nayak, "Slash Your LLM Costs by 80%: A Deep Dive into Microsoft's LLMLingua," Level Up Coding, Nov 2025

[9] "Prompt Compression in the Wild: Measuring Latency, Rate Adherence, and Quality," arXiv:2604.02985, April 2026, https://arxiv.org/abs/2604.02985

[10] "ProCut: LLM Prompt Compression via Attribution Estimation," EMNLP 2025 Industry Track, https://aclanthology.org/2025.emnlp-industry.20/

[11] "CompactPrompt: A Unified Pipeline for Prompt Data Compression in LLM Workflows," arXiv:2510.18043, Oct 2025, https://arxiv.org/abs/2510.18043

[12] "CROP: Token-Efficient Reasoning in LLMs via Regularized Prompt Optimization," Google Research, arXiv:2604.14214, April 2026, https://arxiv.org/abs/2604.14214

[13] "Squeez: Task-Conditioned Tool-Output Pruning for Coding Agents," arXiv:2604.04979, April 2026

[14] Singha, "TOON vs JSON: 68% Fewer Tokens with the New Structured Format for LLMs," Medium, Nov 2025

[15] Shah, "JSON vs TOON: What We Learned About Token Efficiency in Production LLM Systems," Medium, April 2026

[16] Ashish, "The Hidden Token Trap: How We Reduced Gemini Costs by Half," Medium, Nov 2025

[17] Machine Learning Mastery, "Prompt Compression for LLM Generation Optimization and Cost Reduction," Dec 2025, https://machinelearningmastery.com/prompt-compression-for-llm-generation-optimization-and-cost-reduction/

[18] Tan, "Token Optimization — How to Get the Same Output Quality at 40% Lower Cost," uatgpt.com, April 2026, https://uatgpt.com/prompt-engineering/token-optimization/

[19] Rephrase, "Prompt Compression: Cut Tokens Without Losing Quality," March 2026, https://rephrase-it.com/blog/prompt-compression-cut-tokens-without-losing-quality

[20] TechPlained, "LLM Prompt Caching: Cut API Costs 90% (2026)," https://www.techplained.com/llm-prompt-caching

[21] AI Cost Check, "Prompt Caching: Cut Your AI API Bill by 90%," March 2026, https://aicostcheck.com/blog/ai-prompt-caching-cost-savings

[22] Burnwise, "Prompt Caching: Save 50-90% on LLM API Costs [2026 Guide]," https://www.burnwise.io/blog/prompt-caching-guide

[23] tokenpruner v1.0.0, PyPI, https://pypi.org/project/tokenpruner/

[24] OpenCompress, https://opencompress.ai/

[25] sparn-labs/cortex, GitHub, https://github.com/sparn-labs/cortex