Reasoning Token Costs: The Hidden Economics of AI Thinking

Introduction

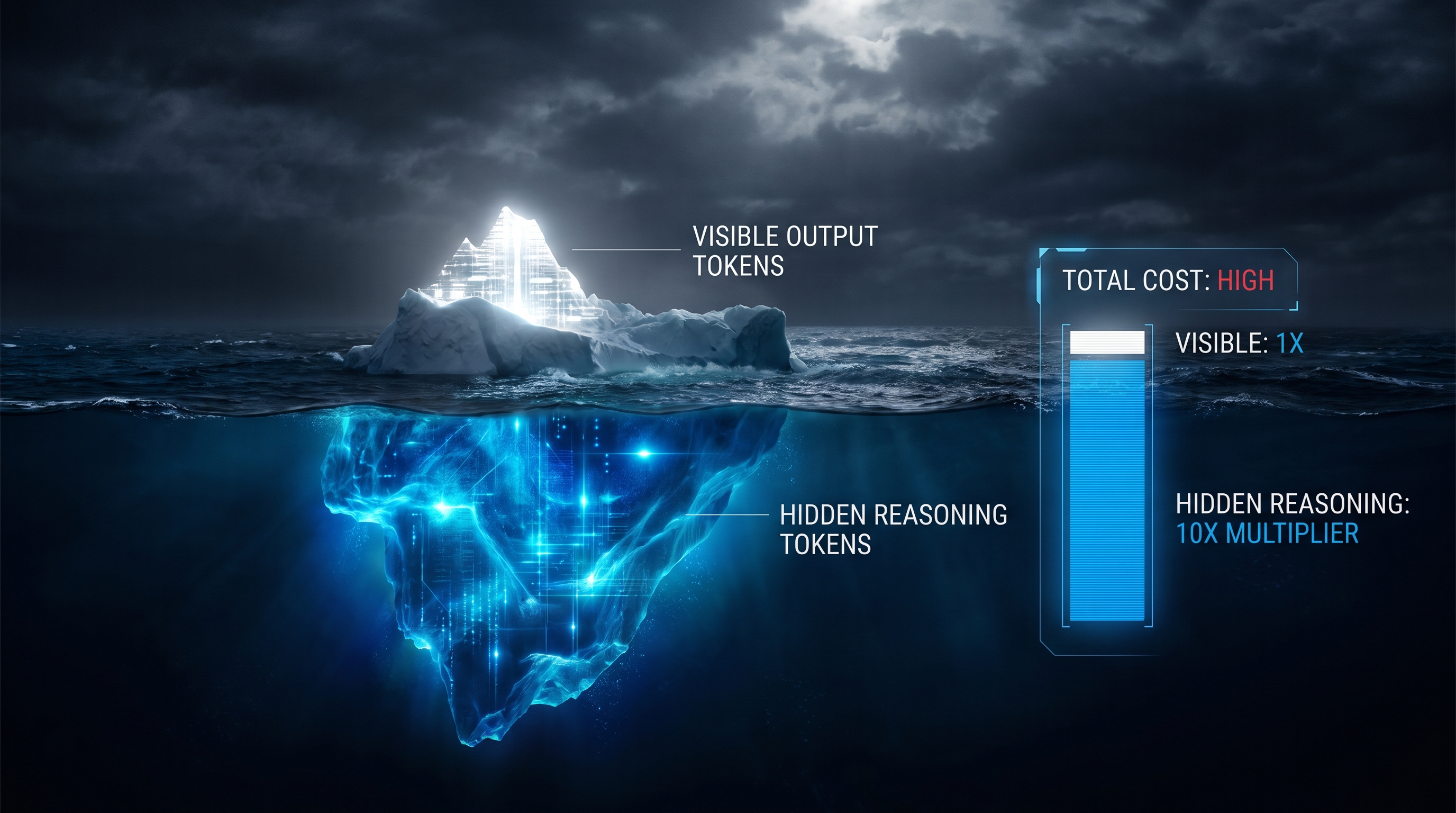

Reasoning models — OpenAI's o-series, Anthropic's Claude with extended thinking, Google's Gemini thinking modes, and DeepSeek R1 — represent a fundamental shift in how LLM inference is priced. Unlike standard models where you pay for visible input and output, reasoning models generate internal chain-of-thought tokens that are billed at output-token rates but never (or only partially) shown to the end user. These "thinking tokens" can multiply the effective cost of a single API call by 3–14× compared to what the visible response length would suggest [1][2]. Understanding this hidden cost layer is essential for any team budgeting AI inference in production.

This document covers provider-by-provider pricing as of Q1–Q2 2026, the mechanics of thinking-token billing, budget controls, effective per-query costs, benchmark-quality tradeoffs, and optimization strategies.

1. How Reasoning Tokens Work

When a reasoning model receives a prompt, it generates an internal chain-of-thought before producing the user-visible answer. These internal tokens are variously called "reasoning tokens" (OpenAI), "thinking tokens" (Anthropic, Google), or simply part of the output stream (DeepSeek).

Key properties shared across all providers [1][3][4]:

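- Billed as output tokens. Every provider charges thinking tokens at the standard output-token rate; there is no separate "thinking surcharge" or discount. The one exception noted in this document is the earlier Gemini 2.5 Flash pricing, which had distinct thinking and non-thinking output rates (Section 2.3).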

- Counted against max_tokens. Thinking tokens consume your output budget. A request with max_tokens: 10 on a reasoning model can return an empty response if all 10 tokens were consumed by internal reasoning [3].

- Variable volume. A complex math problem may generate 18,000–25,000 reasoning tokens before producing a 500-token visible answer. A simple factual question may generate near-zero thinking tokens [2].

- Visibility varies by provider. OpenAI does not expose reasoning token content (only the count in usage metadata). Anthropic returns a thinking content block in the API response. Google exposes thought summaries in streaming mode [4].
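The billing and max_tokens properties above can be sketched in a few lines. This is a minimal illustration rather than any provider's SDK: the function names are hypothetical, and the $8/MTok rate is the o3 output price listed in Section 2.1.

```python
def billed_output_cost(reasoning_tokens: int, visible_tokens: int,
                       output_price_per_mtok: float) -> float:
    """Reasoning and visible tokens are billed together at the output rate."""
    return (reasoning_tokens + visible_tokens) * output_price_per_mtok / 1_000_000


def visible_headroom(max_tokens: int, reasoning_tokens: int) -> int:
    """Tokens left for the visible answer after reasoning has used its share."""
    return max(0, max_tokens - reasoning_tokens)


# A 500-token answer preceded by 20,000 reasoning tokens at $8/MTok.
cost = billed_output_cost(20_000, 500, 8.00)
print(f"${cost:.3f}")            # $0.164
print(visible_headroom(10, 10))  # 0: reasoning consumed the whole budget
```

The second call mirrors the max_tokens: 10 case: once reasoning has used all ten tokens, nothing is left for a visible response.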

2. Provider Pricing Breakdown (April 2026)

2.1 OpenAI o-Series

| Model | Input $/MTok | Cached Input $/MTok | Output $/MTok | Context | Notes |
|---|---|---|---|---|---|
| o3 | $2.00 | $0.50 | $8.00 | 200K | Flagship reasoning; 80% price drop from original $10/$40 [5][6] |
| o4-mini | $1.10 | $0.275 | $4.40 | 200K | Best-value reasoning model [2] |
| o3-mini | $1.10 | $0.275 | $4.40 | 200K | Previous-gen efficient reasoning [7] |
| o1 | $15.00 | — | $60.00 | 200K | Original reasoning model (legacy) [7] |
| o3-pro | $20.00 | — | $80.00 | 200K | Premium reasoning; niche use [7] |

OpenAI reasoning tokens are completely opaque — you can see the token count in the usage metadata but cannot read the reasoning content. The usage dashboard historically only showed input tokens in model-level breakdowns, making cost tracking frustrating [8]. Reasoning tokens and visible output tokens are billed at the identical rate; there is no way to distinguish them in the invoice line item.

The o3 price drop in mid-2025 (from $10/$40 to $2/$8 per MTok) was the single largest reasoning-model price reduction to date, making o3 roughly competitive with Claude Sonnet on a per-token basis — though effective per-query costs remain higher due to reasoning overhead [5][6].

2.2 Anthropic Claude Extended Thinking

| Model | Input $/MTok | Cached Input $/MTok | Output (incl. thinking) $/MTok | Context | Notes |
|---|---|---|---|---|---|
| Claude Sonnet 4.6 | $3.00 | $0.30 | $15.00 | 200K | Production workhorse with thinking [9] |
| Claude Opus 4.6 | $5.00 | $0.50 | $25.00 | 200K | Highest-ceiling reasoning [9] |
| Claude Opus 4 (original) | $15.00 | $1.50 | $75.00 | 200K | Premium tier (legacy pricing) [10] |

Anthropic's extended thinking is controlled via the budget_tokens parameter, which sets the maximum number of tokens the model can spend on internal reasoning before producing its final answer [11][12]:

- Minimum budget: 1,024 tokens (hard floor).

- Maximum budget: Up to 128K tokens on Opus models.

- Budget is a cap, not a target. In practice, the model uses 40–90% of the budget on tasks that genuinely need it, and near-zero on simple tasks [13].

- budget_tokens must be less than max_tokens: the thinking budget is carved out of your total output allocation [12].
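The constraints in the list above can be checked before a request is sent. The sketch below is an assumption-laden illustration: build_thinking_request is a hypothetical helper, the model string is a placeholder, and the payload shape (a thinking object with type and budget_tokens) follows the Anthropic Messages API as commonly documented, so it should be verified against the current API reference.

```python
MIN_THINKING_BUDGET = 1_024  # hard floor cited in the list above


def build_thinking_request(model: str, prompt: str, max_tokens: int,
                           budget_tokens: int) -> dict:
    """Build a request payload, rejecting budgets that break the stated rules."""
    if budget_tokens < MIN_THINKING_BUDGET:
        raise ValueError(f"budget_tokens must be at least {MIN_THINKING_BUDGET}")
    if budget_tokens >= max_tokens:
        raise ValueError("budget_tokens must be less than max_tokens")
    return {
        "model": model,
        "max_tokens": max_tokens,
        # Thinking is carved out of max_tokens, so the visible answer gets
        # at most max_tokens - budget_tokens.
        "thinking": {"type": "enabled", "budget_tokens": budget_tokens},
        "messages": [{"role": "user", "content": prompt}],
    }


request = build_thinking_request("placeholder-model-id", "Plan the migration.",
                                 max_tokens=16_000, budget_tokens=8_000)
print(request["thinking"])
```

Validating locally keeps a misconfigured budget from turning into a rejected call or an unexpectedly truncated answer.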

Claude 4.6 adaptive thinking introduced effort levels (low, medium, high, max) that let the model dynamically decide how much to think based on prompt difficulty. At low effort, Claude may skip thinking entirely on simple tasks [14].

Critical billing gotcha on Claude 4+ models: You are billed for the full internal thinking tokens generated, but the API only returns a summary of the thinking. The visible thinking field may be 500 tokens while you were billed for 10,000 [9][11]. This makes cost estimation from response inspection unreliable — always check usage.output_tokens in the API response.

Per-request cost examples on Opus 4.6 ($25/MTok output) [13]:

| Budget Tier | Thinking Tokens | Cost per Request | Typical Use |
|---|---|---|---|
| Light | 1,024 | ~$0.03 | Quick disambiguation |
| Medium | 5,000 | ~$0.13 | Single-hop reasoning |
| Heavy | 16,000 | ~$0.40 | Multi-step planning |
| Max | 64,000 | ~$1.60 | Research-grade analysis |

On Sonnet 4.6 ($15/MTok), these costs are roughly 60% of the Opus figures.
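The tier figures can be reproduced with simple arithmetic. The sketch below assumes the full budget is consumed and counts thinking tokens only (input and visible output are excluded); the dictionary keys are illustrative labels, not API model identifiers.

```python
# Output prices in $/MTok, taken from the table in Section 2.2.
OUTPUT_PRICE_PER_MTOK = {"opus-4.6": 25.00, "sonnet-4.6": 15.00}
TIERS = {"Light": 1_024, "Medium": 5_000, "Heavy": 16_000, "Max": 64_000}


def thinking_cost(thinking_tokens: int, model: str) -> float:
    """Cost of the thinking tokens alone, billed at the model's output rate."""
    return thinking_tokens * OUTPUT_PRICE_PER_MTOK[model] / 1_000_000


for tier, tokens in TIERS.items():
    opus = thinking_cost(tokens, "opus-4.6")
    sonnet = thinking_cost(tokens, "sonnet-4.6")
    print(f"{tier:<6} {tokens:>6} tokens  opus ${opus:.3f}  sonnet ${sonnet:.3f}")
```

Because the budget is a cap rather than a target, these values are upper bounds for each tier.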

2.3 Google Gemini Thinking Models

| Model | Input $/MTok | Output (incl. thinking) $/MTok | Context | Notes |
|---|---|---|---|---|
| Gemini 2.5 Pro | $1.25 (≤200K) / $2.50 (>200K) | $10.00 (≤200K) / $15.00 (>200K) | 1M–2M | Configurable thinking budget [15] |
| Gemini 2.5 Flash | $0.15 | $3.50 (thinking) / $0.60 (non-thinking) | 1M | Best $/benchmark for reasoning [2] |
| Gemini 3.1 Pro | $1.25 | $5.00 | 2M+ | Latest generation [16] |

Google's billing formula is explicit: Cost = (input_tokens × input_price) + ((output_tokens + thinking_tokens) × output_price) [15]. The thinking_budget parameter (0–32,768 tokens on 2.5 series) controls reasoning depth. Unlike Claude, Gemini 2.5 Flash had separate pricing tiers for thinking vs. non-thinking output, making it the cheapest reasoning option at $3.50/MTok for thinking tokens [2].
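The billing formula translates directly into code. In this sketch, prices are per million tokens, the function names are hypothetical, and the choice of pricing tier by prompt size (input tokens above or below 200K) is an assumption about how the two Gemini 2.5 Pro columns in the table apply.

```python
def gemini_cost(input_tokens: int, output_tokens: int, thinking_tokens: int,
                input_price: float, output_price: float) -> float:
    """Cost = input * input_price + (output + thinking) * output_price, in $/MTok."""
    total = (input_tokens * input_price
             + (output_tokens + thinking_tokens) * output_price)
    return total / 1_000_000


def gemini_25_pro_cost(input_tokens: int, output_tokens: int,
                       thinking_tokens: int) -> float:
    """Apply the Gemini 2.5 Pro tiers, assuming the tier follows prompt size."""
    long_prompt = input_tokens > 200_000
    input_price = 2.50 if long_prompt else 1.25
    output_price = 15.00 if long_prompt else 10.00
    return gemini_cost(input_tokens, output_tokens, thinking_tokens,
                       input_price, output_price)


# 2,000 input tokens, 400 visible output tokens, 10,000 thinking tokens.
print(f"${gemini_25_pro_cost(2_000, 400, 10_000):.4f}")  # $0.1065
```

In this example the thinking tokens account for $0.10 of the $0.1065 total, which is why the thinking_budget parameter matters more than prompt trimming for short inputs.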

Pricing controversy: When Gemini 2.5 Flash reached GA, Google unified pricing and removed the cheaper non-thinking tier, resulting in a ~4× output price increase for users who didn't need thinking mode. Google introduced Flash-Lite ($0.10/$0.40) as a budget alternative [17].

2.4 DeepSeek R1

| Model | Input $/MTok | Cached Input $/MTok | Output $/MTok | Context | Notes |
|---|---|---|---|---|---|
| DeepSeek R1 | $0.55 | $0.14 | $2.19 | 128K | Open-weight; 20–50× cheaper than o1 [18][19] |
| DeepSeek R1 V3.2 | $0.28 | — | $0.42 | 128K | Cheapest reasoning model available [7] |

DeepSeek R1 bills reasoning tokens at the standard output rate with no separate category. The cache-hit discount on input ($0.14/MTok vs. $0.55) is aggressive and makes repeated-context workloads extremely cheap [4][18].

3. Effective Per-Query Costs: The Hidden Multiplier

The per-token price is misleading for reasoning models because the ratio of thinking tokens to visible output is typically 10:1 to 50:1 on complex tasks. A query that produces 500 visible tokens may consume 5,000–25,000 total output tokens including reasoning [1][2].

Measured effective costs per reasoning query (complex task) [2][7]:

| Model | Typical Reasoning Tokens | Visible Output | Total Billed Output | Effective Cost/Query |
|---|---|---|---|---|
| o3 | 18,000–25,000 | 400–600 | 18,400–25,600 | $0.147–$0.205 |
| o4-mini | 10,000–16,000 | 400–600 | 10,400–16,600 | $0.046–$0.073 |
| Claude Opus 4 (thinking) | 5,000–12,000 | 400–700 | 5,400–12,700 | $0.135–$0.318 |
| Gemini 2.5 Pro (thinking) | 8,000–15,000 | 300–500 | 8,300–15,500 | $0.083–$0.155 |
| Gemini 2.5 Flash (thinking) | 6,000–12,000 | 300–500 | 6,300–12,500 | $0.022–$0.044 |
| DeepSeek R1 | 6,000–14,000 | 350–600 | 6,350–14,600 | $0.014–$0.032 |
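The effective-cost column follows from the output prices in Section 2 applied to total billed output (input cost is not included). A minimal sketch with hypothetical helper names, using four of the rows above:

```python
# model: (output $/MTok, (reasoning low, high), (visible low, high))
ROWS = {
    "o3": (8.00, (18_000, 25_000), (400, 600)),
    "o4-mini": (4.40, (10_000, 16_000), (400, 600)),
    "Gemini 2.5 Pro": (10.00, (8_000, 15_000), (300, 500)),
    "DeepSeek R1": (2.19, (6_000, 14_000), (350, 600)),
}


def effective_cost(output_price: float, reasoning: int, visible: int) -> float:
    """Output-side cost of one query: reasoning plus visible tokens."""
    return (reasoning + visible) * output_price / 1_000_000


def hidden_multiplier(reasoning: int, visible: int) -> float:
    """How many times larger the billed output is than the visible answer."""
    return (reasoning + visible) / visible


for model, (price, reasoning, visible) in ROWS.items():
    low = effective_cost(price, reasoning[0], visible[0])
    high = effective_cost(price, reasoning[1], visible[1])
    mult = hidden_multiplier(reasoning[0], visible[0])
    print(f"{model:<15} ${low:.3f}-${high:.3f}  low-end multiplier {mult:.0f}x")
```

The same arithmetic shows that the Claude row reproduces only at $25/MTok (5,400 tokens × $25/MTok = $0.135), which is the Opus 4.6 output rate from Section 2.2 rather than the $75/MTok legacy rate.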

Relative cost per reasoning query (DeepSeek R1 V3.2 as 1× baseline) [7]:

| Model | Cost/Request | Relative Cost |
|---|---|---|
| DeepSeek R1 V3.2 | $0.0018 | 1× |
| o4-mini | $0.0165 | 9.2× |
| o3 | $0.0300 | 16.7× |
| Claude Opus 4 (thinking) | ~$0.15–0.30 | 83–167× |
| o3-pro | $0.3000 | 167× |

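The takeaway: o3 is 16.7× more expensive than DeepSeek R1 V3.2 per reasoning request, and o3-pro is 167× more expensive. The absolute figures here are lower than in the complex-task table above (o3 at $0.03 versus $0.147–$0.205), which implies a lighter assumed workload, so compare ratios within a table rather than across tables. For budget-constrained teams, the cost gap between frontier reasoning models is enormous [7].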

4. Cost vs. Quality: Benchmark Tradeoffs

Higher cost does not always mean proportionally better results. Quality grows sublinearly with reasoning spend: each additional point of benchmark accuracy at the frontier costs disproportionately more than the last.

Key Benchmarks (2025–2026)

AIME 2024 (Competition Mathematics) [10][20]:

- o3: 91.6%

- Gemini 2.5 Pro: 92.0%

- Claude Opus 4 (extended thinking): ~88%

SWE-bench (Software Engineering) [10][20]:

- Claude Opus 4: 72.5% (79.4% with parallel test-time compute)

- o3: 71.7%

- Gemini 2.5 Pro: 63.8%–67.2%

GPQA Diamond (Graduate-Level Science) [2][21]:

- o3: 83–87.7%

- o4-mini: 81.4%

- Gemini 2.5 Pro: ~82.4%

Cost-efficiency observations:

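- Gemini 2.5 Pro matches o3 on AIME at a lower per-query cost (roughly $0.08–$0.16 versus $0.15–$0.21 in the Section 3 table), and its $10/MTok output rate is one-eighth of o3-pro's $80/MTok. For math-heavy workloads, Gemini offers the best price-performance ratio [10][20].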

- Claude Opus 4 leads on SWE-bench coding tasks, but at $15/$75 per MTok (original pricing), it costs 5–10× more per query than o3. The newer Opus 4.6 at $5/$25 narrows this gap significantly [9][10].

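- DeepSeek R1 delivers ~80–85% of o3's benchmark performance at a fraction of the cost: roughly 1/17th using the DeepSeek R1 V3.2 per-request figure from Section 3, and roughly 1/6th to 1/10th using the original R1's effective per-query cost. For most production reasoning tasks that don't require absolute frontier accuracy, R1 is the rational economic choice [7][18].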

- o4-mini captures ~95% of o3's reasoning quality (81.4% vs 83–87.7% on GPQA Diamond) at roughly half the per-query cost, making it the sweet spot within OpenAI's lineup [2].

The Thinking Budget–Quality Curve

Anthropic's extended thinking provides the clearest data on how reasoning budget affects quality. With Claude, you can directly control the thinking budget and observe the impact:

- 1K–5K thinking tokens: Sufficient for single-step reasoning, basic code generation, and disambiguation. Quality improvement over non-thinking mode is modest (~5–10% on hard tasks) [13][14].

- 5K–16K thinking tokens: Sweet spot for multi-step problems. Covers most production use cases — complex debugging, architectural planning, multi-hop Q&A. Quality gains plateau around 10K–16K for most tasks [13].

- 16K–64K thinking tokens: Diminishing returns for all but the hardest problems. Useful for research-grade analysis, novel algorithm design, and tasks requiring exploration of multiple solution paths [13].

- 64K–128K thinking tokens: Extreme budget. Justified only for problems where exhaustive reasoning is critical. Latency becomes the binding constraint (60+ seconds on Opus) [13].

In practice, roughly 77% of tokens in high-reasoning scenarios go toward thinking rather than visible output [22]. A PromptLayer analysis of Claude Opus with a 16K thinking budget found that the model consistently allocated the majority of its token budget to internal deliberation.

5. Optimization Strategies

5.1 Use Thinking Budgets Aggressively

Both Claude (budget_tokens) and Gemini (thinking_budget) let you cap reasoning spend. Start with the minimum (1,024 for Claude, 0 for Gemini Flash) and increase only when quality degrades. Claude's adaptive thinking (effort: "low") can skip thinking entirely on simple tasks [12][14].
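One way to apply the "start low, raise only when needed" rule is a small search over a fixed ladder of budgets. This is a sketch under stated assumptions: the ladder values are the tier sizes from Section 2.2, and passes_quality_bar is a hypothetical callback that would run your own evaluation set at a given budget and report whether results are acceptable.

```python
from typing import Callable, Iterable, Optional

# Tier sizes from the per-request cost table in Section 2.2.
BUDGET_LADDER = (1_024, 5_000, 16_000, 64_000)


def smallest_sufficient_budget(
    passes_quality_bar: Callable[[int], bool],
    ladder: Iterable[int] = BUDGET_LADDER,
) -> Optional[int]:
    """Return the first (cheapest) budget that meets the quality bar, else None."""
    for budget in ladder:
        if passes_quality_bar(budget):
            return budget
    return None


# Stand-in evaluator: pretend this task type needs at least 5,000 thinking tokens.
chosen = smallest_sufficient_budget(lambda budget: budget >= 5_000)
print(chosen)  # 5000
```

Running the search per request type, rather than once globally, keeps simple request types on the cheapest rung.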

5.2 Route by Complexity

Build a tiered architecture: use a cheap non-reasoning model (GPT-4.1 nano at $0.10/MTok input, Gemini Flash-Lite at $0.10/$0.40) for simple tasks, and escalate to reasoning models only when the task genuinely requires multi-step logic [7][16]. A classifier or heuristic that routes 80% of traffic to non-reasoning models can cut aggregate costs by up to roughly 5×, because the remaining 20% of traffic still pays full reasoning prices; approaching 10× requires routing about 90% of traffic away from reasoning models.
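The saving from routing is bounded by the share of traffic that still reaches the reasoning model, which a short calculation makes concrete. The per-request costs below are illustrative assumptions ($0.15 for a reasoning query, in line with the o3 range in Section 3, and $0.001 for a hypothetical cheap-model query), and the function names are hypothetical.

```python
def blended_cost(cheap_share: float, cheap_cost: float,
                 reasoning_cost: float) -> float:
    """Average cost per request when cheap_share of traffic skips reasoning."""
    return cheap_share * cheap_cost + (1 - cheap_share) * reasoning_cost


def savings_factor(cheap_share: float, cheap_cost: float,
                   reasoning_cost: float) -> float:
    """How many times cheaper the routed mix is than all-reasoning traffic."""
    return reasoning_cost / blended_cost(cheap_share, cheap_cost, reasoning_cost)


print(f"{savings_factor(0.80, 0.001, 0.15):.1f}x")  # 4.9x
print(f"{savings_factor(0.90, 0.001, 0.15):.1f}x")  # 9.4x
```

Even with a free cheap tier, routing 80% of traffic caps the saving at 5×, so the routing share is the number to optimize once the cheap model is cheap enough.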

5.3 Leverage Prompt Caching

All major providers offer cached-input discounts that dramatically reduce the input component:

- OpenAI: 75% discount on cached input ($0.50 vs $2.00 for o3) [5]

- Anthropic: 90% discount ($0.30 vs $3.00 for Sonnet 4.6) [9]

- DeepSeek: 75% discount ($0.14 vs $0.55 for R1) [18]

For agentic workflows with repeated system prompts, caching alone can cut total costs by 30–50%.
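A rough way to see where a figure in that range comes from is to price one request with and without a cached system prompt. The sketch uses the Sonnet 4.6 rates from Section 2.2; the token counts are made up for illustration, and cache-write premiums and cache expiry are deliberately ignored, so real savings will be somewhat lower.

```python
def request_cost(cached_input: int, fresh_input: int, output: int,
                 input_price: float, cached_price: float,
                 output_price: float) -> float:
    """Cost of one request in dollars; all prices are $/MTok."""
    total = (cached_input * cached_price
             + fresh_input * input_price
             + output * output_price)
    return total / 1_000_000


# Sonnet 4.6: $3.00 input, $0.30 cached input, $15.00 output.
# Assumed workload: 20,000-token system prompt, 500 new input tokens,
# 6,000 output tokens (thinking plus visible answer).
uncached = request_cost(0, 20_500, 6_000, 3.00, 0.30, 15.00)
cached = request_cost(20_000, 500, 6_000, 3.00, 0.30, 15.00)
saving = 1 - cached / uncached
print(f"uncached ${uncached:.4f}, cached ${cached:.4f}, saving {saving:.0%}")
```

With these assumptions the saving is about 36%. The heavier the thinking output relative to the prompt, the smaller the share of the bill that caching can touch.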

5.4 Batch Processing

OpenAI and Anthropic offer 50% discounts on batch API calls [4][16]. If latency is not critical, batching reasoning requests halves the output-token cost — which is where the majority of reasoning spend occurs.

5.5 Monitor Actual Token Consumption

Never estimate reasoning costs from visible response length. Always check usage.output_tokens (or equivalent) in the API response. On Claude 4+ models, the visible thinking summary is shorter than what was billed [9][11]. On OpenAI, reasoning tokens are invisible in the response body entirely [8]. Build dashboards that track thinking-token ratios per request type.
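A per-request-type tracker for the hidden-to-visible ratio can be very small. In this sketch, ThinkingRatioTracker is a hypothetical class, billed_output_tokens is whatever the API's usage metadata reports, and visible_tokens is assumed to be counted by the caller (for example with a tokenizer over the text actually returned).

```python
from collections import defaultdict


class ThinkingRatioTracker:
    """Accumulates billed vs. visible output tokens per request type."""

    def __init__(self) -> None:
        # request_type -> [billed output tokens, visible output tokens]
        self._totals = defaultdict(lambda: [0, 0])

    def record(self, request_type: str, billed_output_tokens: int,
               visible_tokens: int) -> None:
        totals = self._totals[request_type]
        totals[0] += billed_output_tokens
        totals[1] += visible_tokens

    def ratio(self, request_type: str) -> float:
        """Hidden (thinking) tokens per visible token for this request type."""
        billed, visible = self._totals[request_type]
        if visible == 0:
            return float("inf") if billed else 0.0
        return (billed - visible) / visible


tracker = ThinkingRatioTracker()
tracker.record("debugging", billed_output_tokens=12_000, visible_tokens=600)
tracker.record("debugging", billed_output_tokens=8_000, visible_tokens=400)
print(tracker.ratio("debugging"))  # 19.0
```

Feeding these ratios into a dashboard makes it easy to spot request types whose thinking overhead has drifted upward.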

5.6 Consider Open-Weight Alternatives

DeepSeek R1 is open-weight and can be self-hosted, eliminating per-token API costs entirely for teams with GPU infrastructure. At API prices, DeepSeek is already far cheaper than o3 (about 17× per request for R1 V3.2 in the Section 3 comparison); self-hosted, the gap widens further [18][19].

6. Summary

Reasoning tokens are the defining cost driver of modern AI inference. They are billed at full output-token rates, invisible or only partially visible in API responses, and can constitute 90%+ of total output tokens on complex tasks. The effective per-query cost of reasoning models is 3–14× higher than what per-token pricing suggests at first glance.

Key numbers to remember:

- o3: $2/$8 per MTok, ~$0.15–0.20 effective per complex query

- o4-mini: $1.10/$4.40, ~$0.05–0.07 per query — best value in OpenAI's lineup

- Claude Sonnet 4.6 (thinking): $3/$15, ~$0.05–0.15 per query depending on budget

- Gemini 2.5 Flash (thinking): $0.15/$3.50, ~$0.02–0.04 per query — best $/benchmark ratio

- DeepSeek R1: $0.55/$2.19, ~$0.01–0.03 per query — cheapest frontier reasoning

- Thinking tokens typically outnumber visible output 10:1 to 50:1 on hard problems

- Budget controls (budget_tokens, thinking_budget, effort levels) are the primary lever for managing reasoning costs

The market is moving fast: o3's 80% price drop in 2025, Claude's shift to adaptive thinking, and DeepSeek's continued cost leadership all point toward reasoning becoming cheaper and more controllable. But for now, teams that don't actively manage their thinking-token budgets risk bill shock that scales with problem complexity, not response length.

References

[1] Aumiqx, "OpenAI API Pricing April 2026: Real Cost Per Million Tokens" — https://aumiqx.com/ai-tools/openai-api-pricing-gpt4-costs-guide-2026/

[2] Awesome Agents, "Reasoning Model API Pricing Compared 2026" — https://awesomeagents.ai/pricing/reasoning-model-pricing/

[3] TokenMix, "Thinking Tokens Trap: How Reasoning Models Burn max_tokens (2026)" — https://tokenmix.ai/blog/thinking-tokens-billing-trap-2026

[4] TokenCost, "Reasoning models 2026: o3-pro vs DeepSeek R1 pricing" — https://tokencost.app/blog/reasoning-models-pricing-2026

[5] OpenAI Community, "O3 is 80% cheaper and introducing o3-pro" — https://community.openai.com/t/o3-is-80-cheaper-and-introducing-o3-pro/1284925

[6] CometAPI, "How Much Does OpenAI's o3 API Cost Now?" — https://www.cometapi.com/how-much-does-openais-o3-api-cost-now/

[7] AI Cost Check, "Reasoning Model Pricing: What Thinking Tokens Cost (2026)" — https://aicostcheck.com/blog/ai-reasoning-model-pricing-thinking-tokens

[8] OpenAI Community, "Reasoning tokens hidden price question" — https://community.openai.com/t/reasoning-tokens-hidden-price-question/1353099

[9] APIScout, "Claude Extended Thinking API: Cost & When to Use 2026" — https://apiscout.dev/blog/claude-api-extended-thinking-mode-2026

[10] Composio, "OpenAI o3-pro vs Claude 4 Opus vs Gemini 2.5 Pro" — https://composio.dev/content/openai-o3-pro-vs-claude-4-opus-vs-gemini-2-5-pro

[11] Best Remote Tools, "Claude API Extended Thinking: How Output Tokens Are Billed" — https://bestremotetools.com/claude-api-extended-thinking-cost-how-output-tokens-are-bill/

[12] Anthropic, "Extended thinking tips" — https://docs.anthropic.com/en/docs/build-with-claude/prompt-engineering/extended-thinking-tips

[13] René Zander, "Claude Extended Thinking: When the Budget Pays Off" — https://renezander.com/blog/claude-extended-thinking/

[14] SurePrompts, "Prompt Engineering for Reasoning Models (2026)" — https://sureprompts.com/blog/prompting-reasoning-models-guide

[15] Google AI Dev Forum, "Cost Accounting for Gemini 2.5 Pro" — https://discuss.ai.google.dev/t/cost-accounting-for-gemini-2-5-pro/107226

[16] CalcPro, "AI API Costs Compared: GPT-5 vs Claude 4.6 vs Gemini 3.1 (2026)" — https://calcpro.io/blog/ai-api-costs-compared

[17] Hostbor, "Gemini 2.5 API Gets 4× Pricier" — https://hostbor.com/gemini-25-api-gets-pricieris/

[18] Intuition Labs, "DeepSeek's Low Inference Cost Explained" — https://intuitionlabs.ai/articles/deepseek-inference-cost-explained

[19] LangCopilot, "DeepSeek Reasoner vs o3 Pricing (2026)" — https://langcopilot.com/deepseek-reasoner-vs-o3-pricing

[20] TrendWatch, "The Reasoning Model Wars: OpenAI o3 vs Gemini vs Claude" — https://trends.thicket.sh/reasoning-model-wars

[21] APIScout, "Claude 3.7 vs GPT-5 vs Gemini 2.5 API 2026" — https://apiscout.dev/blog/claude-37-vs-gpt5-vs-gemini-25-llm-api-2026

[22] PromptLayer, "What the Thinking-16k label actually means" — https://blog.promptlayer.com/claude-opus-4-1-20250805-thinking-16k-what-the-thinking-16k-label-actually-means-for-your-workflows/