Quantization and Distillation in 2025–2026

Model compression has moved from a research curiosity to the default path for production LLM serving. Two techniques dominate: quantization (reducing numerical precision of weights and/or activations) and distillation (training a smaller "student" model from a larger "teacher"). Together, they have driven the 10–100× drop in inference cost per token observed between 2023 and 2026, and they now define the economics of both frontier API serving and on-prem deployment.

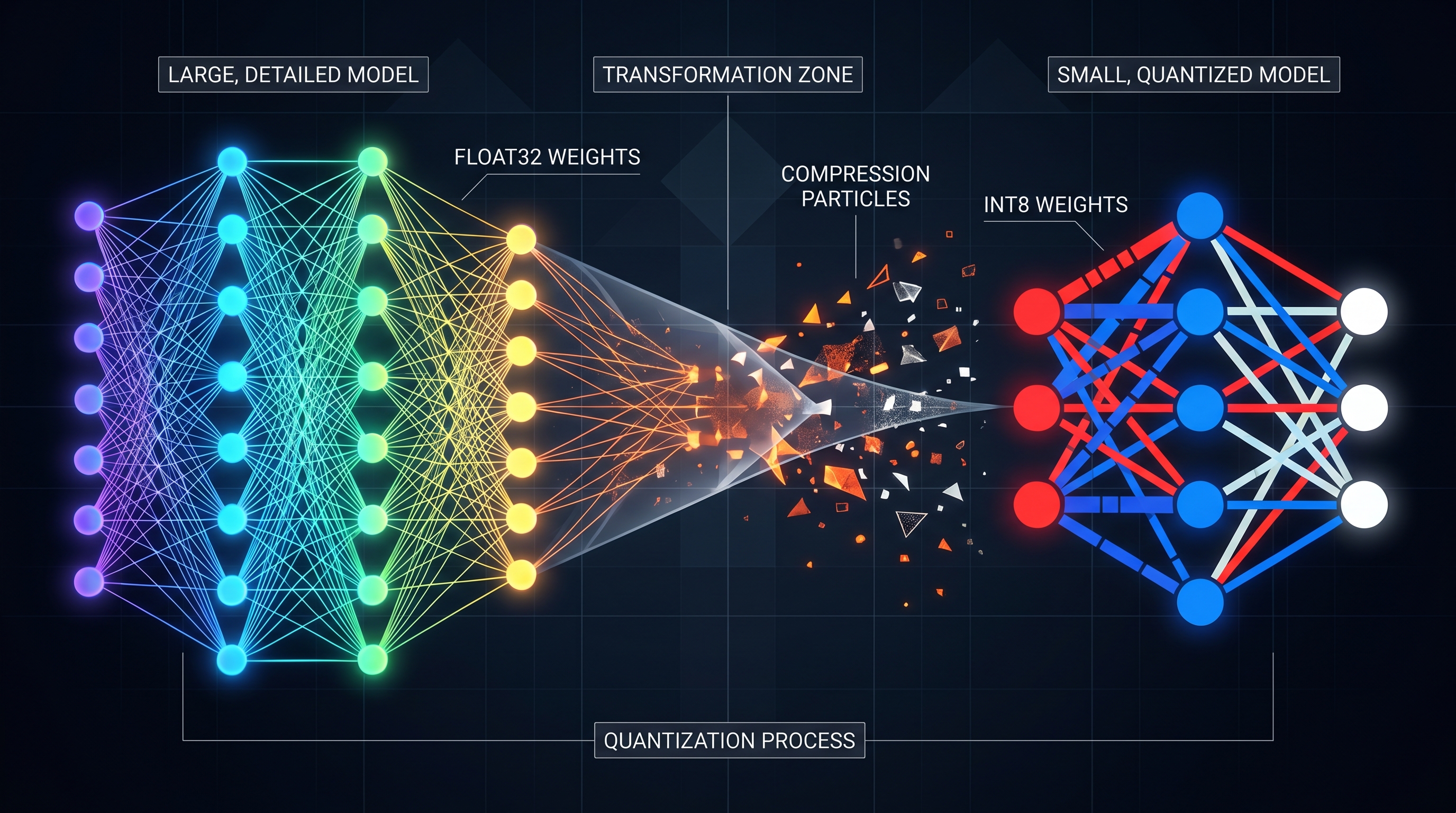

The precision ladder: BF16 → FP8 → INT8 → INT4

By late 2025, the consensus precision ladder on NVIDIA Hopper/Blackwell and AMD MI300-class hardware is well established, with quality deltas converging across independent studies.

FP8 (W8A8-FP) is effectively lossless. Neural Magic/Red Hat's comprehensive Llama-3.1 study found FP8 weight+activation quantization preserves accuracy within noise across all model sizes (8B, 70B, 405B), with average recovery of ~99.75% vs. BF16 on academic and real-world benchmarks [1]. A 2025 EMNLP evaluation across 9,700 examples confirmed FP8 shows only ~0.2% average accuracy drop [2]. On Blackwell and Hopper, FP8 has native Tensor Core support, so it is strictly a throughput win: GPUStack's vLLM benchmarks report FP8-Static delivering +26.7% throughput and –32.4% TPOT latency versus BF16 on the same GPU [3].

INT8 (W8A8-INT) sits just behind FP8 in quality. Well-tuned INT8 incurs only 1–3% accuracy degradation per task, with GPTQ-int8 averaging ~0.8% drop in the EMNLP study [1][2]. INT8 is the safe default on pre-Hopper hardware (A100, L40S) that lacks native FP8.

INT4 (W4A16) is where the real memory savings appear — 0.5 bytes per parameter, a ~75% VRAM reduction vs. BF16 [4]. This is the format that lets a 70B model fit on a single 80GB H100 or a 141GB H200, or a 13B model fit on a 24GB consumer GPU. The two dominant algorithms are GPTQ and AWQ.

- GPTQ quantizes layer by layer using second-order (Hessian) information. At INT4 it typically lands within 1–3% perplexity of FP16 [5].

- AWQ (Activation-aware Weight Quantization) protects the ~1% of weights tied to high-activation channels. It typically reaches within 0.5–1.5% of the original on standard perplexity benchmarks, slightly ahead of GPTQ on academic evals [5].
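To make the W4A16 storage format concrete, here is a minimal sketch of plain round-to-nearest symmetric INT4 quantization with one scale per group of weights. It is neither GPTQ nor AWQ: both add a calibration step on top of this baseline (Hessian-based error compensation and activation-aware channel scaling, respectively). The function names and the group size of 4 are assumptions chosen for readability; production kernels typically use much larger groups, such as 128.

```python
def quantize_int4_groupwise(weights, group_size=4):
    """Symmetric round-to-nearest INT4 with one scale per group of weights."""
    codes, scales = [], []
    for start in range(0, len(weights), group_size):
        group = weights[start:start + group_size]
        max_abs = max(abs(w) for w in group)
        # Map the largest magnitude in the group to the INT4 limit of 7.
        scale = max_abs / 7.0 if max_abs > 0 else 1.0
        scales.append(scale)
        for w in group:
            q = round(w / scale)
            codes.append(max(-8, min(7, q)))  # clamp to the signed 4-bit range
    return codes, scales


def dequantize_int4_groupwise(codes, scales, group_size=4):
    """Rebuild approximate weights from 4-bit codes and per-group scales."""
    return [q * scales[i // group_size] for i, q in enumerate(codes)]


# Illustrative values only; the second group contains one outlier (2.10).
weights = [0.12, -0.50, 0.33, 0.07, 2.10, -0.02, 0.01, 0.90]
codes, scales = quantize_int4_groupwise(weights)
restored = dequantize_int4_groupwise(codes, scales)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

In the second group the outlier sets the scale, so the small weights next to it round to zero. Limiting that kind of damage is what the calibration in GPTQ and AWQ is for.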

Interestingly, the Llama-3.1 study found the two are nearly tied on academic benchmarks (AWQ leads by only 0.23–0.35 points on a 0–100 scale), but GPTQ outperforms AWQ by 2.9 points on real-world tasks like Arena-Hard, which led Neural Magic to adopt GPTQ as their production INT4 default [1]. Qwen's own benchmarks tell a similar story: Qwen2-72B-Instruct scores 81.3 BF16 vs. 81.2 GPTQ-int4 vs. 80.4 AWQ on the MMLU/C-Eval/IFEval average — essentially indistinguishable at the 72B scale [6].

The picture worsens with long context and more aggressive 4-bit formats: BNB-NF4 averages a 6.9% drop and can lose up to 59% on Llama-3.1 70B under the ONERULER long-context benchmark [2]. As a rule of thumb, INT4 is safe for 30B+ models on short/medium context but risky for <10B models or long-context/reasoning-heavy tasks.

Throughput and cost per token

Quantization translates directly to dollars. The GPUStack vLLM benchmarks on a single H100 with Qwen2.5-7B show AWQ reaching 5,653 tok/s vs. 3,869 tok/s BF16 (+46%), and FP8-Static on larger models reaching 16,452 tok/s (+27%) with 32% lower per-token latency [3]. On TensorRT-LLM, NVIDIA reports >10,000 output tok/s on H100 with FP8 and sub-100ms TTFT [7]. A head-to-head on 4×A100 shows TensorRT-LLM at 2,800 tok/s vs. vLLM at 2,200 tok/s on Llama-2 70B (+27% throughput for the NVIDIA stack) [8].

The macro effect on economics is dramatic. As of late 2025, H100 cloud prices fell to $1.49–3.90/hr (down from $7–8/hr in 2024), AWS cut prices 44% in June 2025, and H200 (141GB HBM3e) is now $2.15–6.00/hr — enough to serve a 70B model on a single GPU that previously needed two H100s [9]. Combined with FP8/INT4 quantization, the cost-per-token gap between hyperscalers and self-hosters has narrowed: one analysis pegs OpenAI's marginal cost at ~$0.00012/token while naive self-hosted deployments pay ~$0.001/token, with quantization + batching + paged KV cache closing most of that 8× gap [9]. Blackwell GB200/GB300 promises a further 30× inference improvement for LLMs, with FP4 moving from research to production in 2026 [9].
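The link between throughput and dollars can be sketched with simple arithmetic. The example below combines the single-H100 Qwen2.5-7B throughput figures from [3] with the H100 hourly price range from [9]. Mixing the two sources is purely illustrative, and the calculation assumes the GPU runs at benchmark throughput 100% of the time, which real deployments will not sustain. The function name `cost_per_million_tokens` is hypothetical.

```python
def cost_per_million_tokens(gpu_dollars_per_hour, tokens_per_second):
    """Dollars per 1M generated tokens for one fully utilized GPU."""
    tokens_per_hour = tokens_per_second * 3600
    return gpu_dollars_per_hour / tokens_per_hour * 1_000_000


# Throughput from [3] (Qwen2.5-7B, one H100); price range from [9].
for label, tok_s in [("BF16", 3869), ("AWQ", 5653)]:
    low = cost_per_million_tokens(1.49, tok_s)
    high = cost_per_million_tokens(3.90, tok_s)
    print(f"{label}: ${low:.3f} to ${high:.3f} per 1M tokens")
```

Because cost scales inversely with throughput at a fixed hourly price, the +46% AWQ throughput gain corresponds to roughly a one-third reduction in cost per token under these assumptions. Utilization moves the result just as strongly.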

Distillation: smaller models catching up

Parallel to quantization, distillation has become a first-class pretraining technique, not just a post-hoc compression trick. The Llama-3.2 and Gemma model families are explicitly distilled from larger teachers, and recent work shows distillation improves not just statistical modeling but also test-time scaling and in-context learning behaviors [10].

The breakout case of 2025 was DeepSeek-R1 distillation. DeepSeek released R1-Distill-Qwen-32B and R1-Distill-Llama-70B — students trained on reasoning traces from the 671B R1 teacher. R1-Distill-Qwen-32B outperforms OpenAI o1-mini on math/code benchmarks despite being a dense 32B model [11]. This demonstrated that chain-of-thought reasoning capability itself could be transferred via distillation, not just next-token prediction, and triggered a wave of reasoning-distilled open models through 2025.

Microsoft's Phi-4 (14B) continues the "small model, curated/distilled data" thesis first proven by Phi-2 and Phi-3, reaching GPT-4-class performance on targeted reasoning benchmarks at roughly 1/50th the parameter count. Gemma 2/3 (2B, 9B, 27B) use logit distillation from larger Gemini teachers and consistently outperform similarly sized non-distilled peers. Llama 3.2 1B/3B are distilled from the Llama 3.1 8B/70B models and have become the default edge-deployment targets.
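For readers unfamiliar with logit distillation, the sketch below shows the classic temperature-softened formulation: the student is trained to minimize the KL divergence from the teacher's softened token distribution to its own. This is the textbook objective, not a confirmed description of the exact recipe behind any model named above. The function names and the temperature of 2.0 are assumptions for illustration.

```python
import math


def softmax(logits, temperature=1.0):
    """Numerically stable softmax over temperature-scaled logits."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)  # teacher target distribution
    q = softmax(student_logits, temperature)  # student prediction
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    # The T^2 factor keeps gradient magnitudes comparable across temperatures.
    return kl * temperature ** 2


# Illustrative logits over a three-token vocabulary.
teacher = [4.0, 1.0, 0.5]
student = [2.5, 1.5, 0.2]
loss = distillation_loss(student, teacher)
```

In training, this term is usually averaged over token positions and often mixed with the ordinary next-token cross-entropy loss.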

Beyond official releases, aggressive compression research continues. Lillama compresses Mixtral-8x7B in minutes on a single A100, removing 10B parameters while retaining >95% of original performance, and compresses Phi-2 3B by 40% with just 13M calibration tokens [12]. These techniques are increasingly bundled into inference stacks rather than run as offline pipelines.

Combining quantization + distillation

The production pattern in 2026 is to stack both: distill to a smaller student, then quantize the student. A DeepSeek-R1-Distill-Llama-70B in AWQ-INT4 fits on a single H100 80GB and serves at ~5,000 tok/s on vLLM, delivering o1-mini-class reasoning at single-GPU economics. For edge deployment, Gemma-2-2B distilled + INT4 GGUF runs on a phone-class NPU; Llama-3.2-1B INT4 fits in under 1GB.
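The fit claims above follow from bytes-per-parameter arithmetic, sketched here. The estimate covers weights only and uses nominal parameter counts. Quantization scales and zero-points, the KV cache, and activations all need additional headroom, so treat the results as lower bounds. The helper name `weight_memory_gb` is hypothetical.

```python
# Nominal storage cost per weight for each format discussed in this article.
BYTES_PER_PARAM = {"bf16": 2.0, "fp8": 1.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params, fmt):
    """Approximate weight storage in GB (10^9 bytes), weights only."""
    return num_params * BYTES_PER_PARAM[fmt] / 1e9


for name, params in [("70B", 70e9), ("1B", 1e9)]:
    for fmt in ("bf16", "fp8", "int4"):
        print(f"{name} {fmt}: {weight_memory_gb(params, fmt):.1f} GB")
```

A nominal 70B model needs about 140 GB of weights in BF16 but about 35 GB in INT4, which is why it fits on one 80GB H100 with room left for the KV cache.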

This stacking compounds the cost savings, but the quality loss can be worse than additive. The 1–3% drop from INT4 can matter more on a distilled model than on the original teacher, because distilled students have less representational slack: there is less redundancy to absorb quantization noise. Practitioners report that AWQ-INT4 on a distilled 7B is noticeably worse than AWQ-INT4 on the 70B teacher on the same benchmarks, even after normalizing for the distillation gap itself.

Practical recommendations for 2026

- Frontier serving on Hopper/Blackwell: FP8 weights + activations. Effectively lossless, hardware-native, ~25–30% throughput win over BF16.

- VRAM-constrained serving (single-GPU 70B, consumer-GPU 13B+): AWQ-INT4 or GPTQ-INT4. Expect 1–3% quality drop on standard benchmarks, more on long-context reasoning.

- Edge and on-device: distilled small models (Llama-3.2-1B/3B, Gemma-2-2B, Phi-3.5-mini) + INT4 GGUF via llama.cpp/Ollama.

- Reasoning workloads: prefer distilled reasoning models (R1-Distill family) over quantizing a general teacher to the same footprint — the distilled reasoning signal survives INT4 better than raw long-context recall does.

- Avoid: BNB-NF4 for any long-context workload (up to 59% drop observed) [2]; GGUF Q2_K except for hobbyist use (15–20% quality loss); naive post-training INT4 without GPTQ/AWQ calibration.
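The recommendations above can be condensed into a small decision helper. This is only a sketch of this article's rules of thumb, not a substitute for evaluating on your own workload. The function name, parameters, and returned strings are all hypothetical.

```python
def recommend_format(native_fp8: bool, vram_constrained: bool,
                     on_device: bool, long_context: bool) -> str:
    """Map a deployment scenario to the precision suggested in this article."""
    if on_device:
        # Edge targets: distilled small models in INT4 GGUF.
        return "distilled small model + INT4 GGUF"
    if vram_constrained:
        # INT4 saves the most memory but degrades more on long context.
        note = " (expect a larger quality drop on long context)" if long_context else ""
        return "AWQ-INT4 or GPTQ-INT4" + note
    if native_fp8:
        # Hopper/Blackwell-class hardware with FP8 Tensor Core support.
        return "FP8 weights + activations"
    # Pre-Hopper GPUs such as A100 or L40S lack native FP8.
    return "INT8 (W8A8)"


# Example: a single-GPU 70B deployment serving long prompts.
choice = recommend_format(native_fp8=True, vram_constrained=True,
                          on_device=False, long_context=True)
```

The order of the checks reflects priority: the memory budget decides first, and hardware support for FP8 only matters once the model fits.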

The net effect across 2025–2026 is that serving cost per token for open-weight models at near-frontier quality has dropped roughly an order of magnitude, driven more by the quantization+distillation stack than by raw hardware improvements. The economic gap between proprietary APIs and self-hosted open models is now primarily a function of batch size and utilization, not precision.

References

[1] Kurtic et al. "Give Me BF16 or Give Me Death"? Accuracy-Performance Trade-offs in LLM Quantization. https://arxiv.org/pdf/2411.02355

[2] "How Does Quantization Affect Multilingual LLMs?" EMNLP 2025. https://aclanthology.org/2025.emnlp-main.479.pdf

[3] GPUStack. The Impact of Quantization on vLLM Inference Performance. https://docs.gpustack.ai/latest/performance-lab/references/the-impact-of-quantization-on-vllm-inference-performance/

[4] VRLA Tech. LLM Quantization Explained: INT4, INT8, FP8, AWQ, and GPTQ in 2026. https://vrlatech.com/llm-quantization-explained-int4-int8-fp8-awq-and-gptq-in-2026/

[5] ML Journey. Quantization Techniques for LLM Inference: INT8, INT4, GPTQ, and AWQ. https://mljourney.com/quantization-techniques-for-llm-inference-int8-int4-gptq-and-awq/

[6] Qwen Docs. Performance of Quantized Models. https://qwen.readthedocs.io/en/stable/getting_started/quantization_benchmark.html

[7] Introl. TensorRT-LLM Optimization. https://introl.com/blog/tensorrt-llm-optimization-nvidia-inference-stack-guide

[8] EaseCloud. Quantization Setup Guide (2026). https://blog.easecloud.io/ai-cloud/optimize-gpus-faster-with-tensorrt-llm/

[9] Introl. Cost Per Token Analysis. https://introl.com/blog/cost-per-token-llm-inference-optimization

[10] "Distillation Scaling: A modern lens of Data, In-Context Learning and Test-Time Scaling." arXiv 2509.01649. https://arxiv.org/html/2509.01649v1

[11] Hugging Face. deepseek-ai/DeepSeek-R1-Distill-Llama-70B. https://huggingface.co/deepseek-ai/DeepSeek-R1-Distill-Llama-70B

[12] "Large Language Models Compression via Low-Rank Feature Distillation" (Lillama). arXiv 2412.16719. https://arxiv.org/html/2412.16719v2