Prompt Caching Deep Dive: Economics, Mechanics, and Production Patterns

1. What Prompt Caching Is and Why It Matters

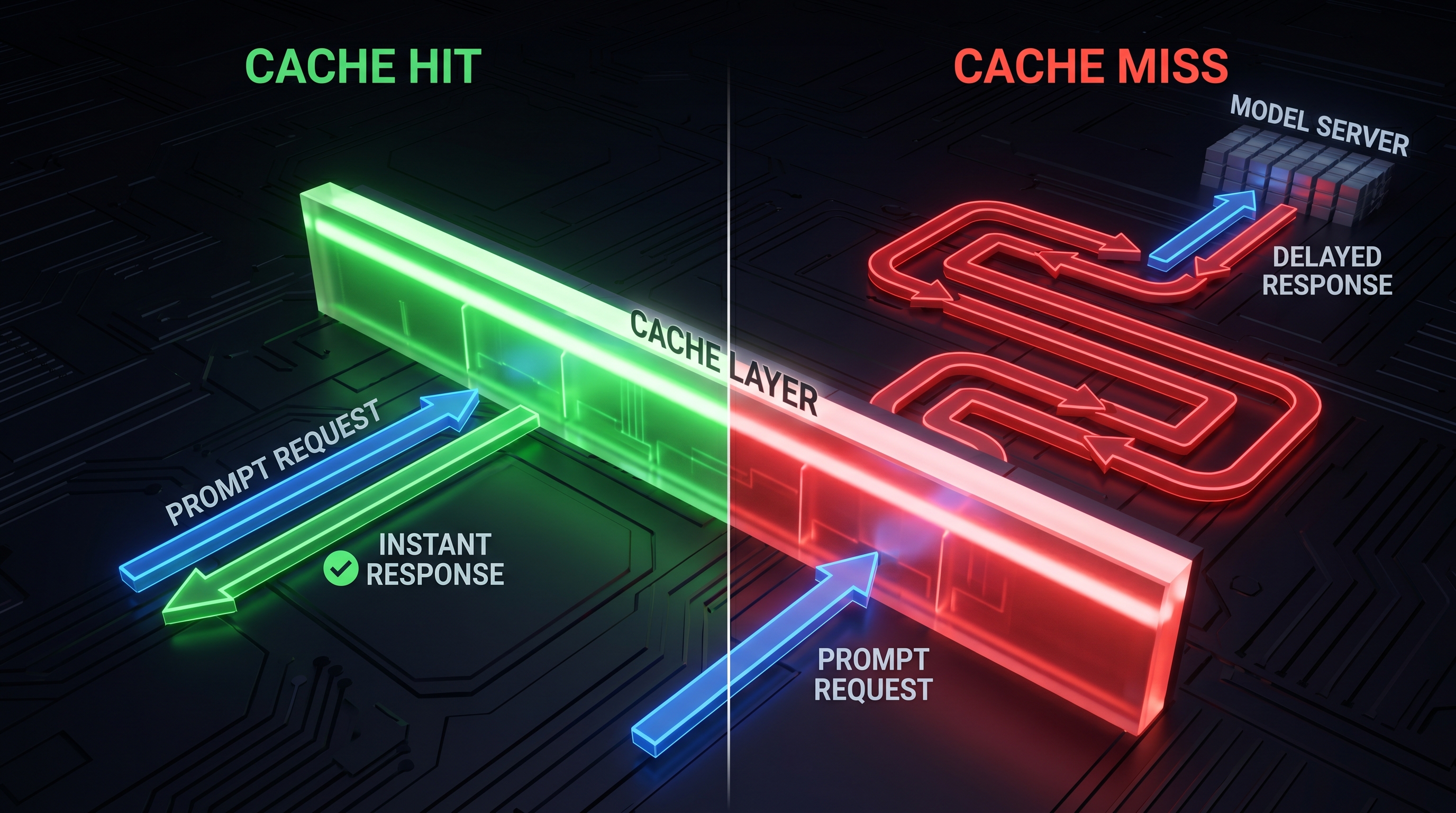

Without caching, every LLM API call processes its entire input from scratch. In multi-turn conversations or agentic workflows, the same system prompt, tool definitions, and conversation history are recomputed on every request. Prompt caching stores the attention key-value (KV) tensors computed for a previously processed prefix server-side, so subsequent requests that share that exact prefix can skip recomputing it. The result is lower latency (up to 85% reduction in time-to-first-token) and lower cost (up to 90% off input token pricing) [1][2].

As of mid-2026, all three major providers — Anthropic, OpenAI, and Google — offer prompt caching, but with fundamentally different design philosophies: explicit control, fully automatic, and a hybrid approach respectively.

2. Anthropic: Explicit Cache Control

Anthropic offers the most granular caching system. Developers place cache_control markers on content blocks to designate what gets cached, or use a top-level automatic caching mode that manages breakpoints as conversations grow [1][3].
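A minimal sketch of a request body with explicit breakpoints, assuming the `cache_control` block shape described in Anthropic's documentation (`{"type": "ephemeral"}`, optionally with `"ttl": "1h"` for the 1-hour cache). The helper `build_cached_request`, the model ID, and the prompt text are hypothetical placeholders; the returned dict is meant to be passed as keyword arguments to the official Python SDK's `client.messages.create(...)`.

```python
from typing import Any, Dict, List, Optional


def build_cached_request(  # hypothetical helper, not part of any SDK
    system_text: str,
    tools: List[Dict[str, Any]],
    user_message: str,
    model: str = "claude-sonnet-4-6",  # placeholder model ID (assumption)
    ttl: Optional[str] = None,  # None = default 5-minute TTL; "1h" = 1-hour TTL
) -> Dict[str, Any]:
    """Build a Messages API payload with cache breakpoints on the stable prefix."""

    def marker() -> Dict[str, str]:
        # A fresh dict per breakpoint so no two blocks share a mutable object.
        m = {"type": "ephemeral"}
        if ttl is not None:
            m["ttl"] = ttl
        return m

    payload: Dict[str, Any] = {
        "model": model,
        "max_tokens": 1024,  # arbitrary output cap for the example
        # Breakpoint on the system block: tools render ahead of the system prompt,
        # so this caches tools + system together.
        "system": [{"type": "text", "text": system_text, "cache_control": marker()}],
        # The user message comes after every breakpoint and is not cached.
        "messages": [{"role": "user", "content": user_message}],
    }
    if tools:
        # Copy the tool dicts so the caller's definitions stay untouched, then mark
        # the last tool so the tool prefix stays reusable if the system text changes.
        cached_tools = [dict(t) for t in tools]
        cached_tools[-1]["cache_control"] = marker()
        payload["tools"] = cached_tools
    return payload
```

Cache activity is then reported in `response.usage` as `cache_creation_input_tokens` (first request) and `cache_read_input_tokens` (later hits). Prefixes shorter than the model's minimum are not cached even when marked.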

Pricing Model

Anthropic uses a write-premium / read-discount model. Cache writes cost more than standard input; cache reads cost 90% less. There are no separate storage fees [1].

| Model | Base Input | 5-min Cache Write | 1-hr Cache Write | Cache Read (Hit) | Output |
|---|---|---|---|---|---|
| Claude Opus 4.7 | $5.00/MTok | $6.25/MTok (1.25×) | $10.00/MTok (2×) | $0.50/MTok (0.1×) | $25.00/MTok |
| Claude Sonnet 4.6 | $3.00/MTok | $3.75/MTok (1.25×) | $6.00/MTok (2×) | $0.30/MTok (0.1×) | $15.00/MTok |
| Claude Haiku 4.5 | $1.00/MTok | $1.25/MTok (1.25×) | $2.00/MTok (2×) | $0.10/MTok (0.1×) | $5.00/MTok |
| Claude Haiku 3 | $0.25/MTok | $0.30/MTok (1.25×) | $0.50/MTok (2×) | $0.03/MTok (0.1×) | $1.25/MTok |

Source: Anthropic official pricing page, April 2026 [1]
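To see how these multipliers combine on a single response, the sketch below prices one request from its usage counts. It assumes the Messages API `usage` fields `input_tokens`, `cache_creation_input_tokens`, `cache_read_input_tokens`, and `output_tokens`, with `input_tokens` counting only input that was neither read from nor written to the cache. Rates are the Sonnet 4.6 row above; `price_request` is a hypothetical helper, and it assumes every cache write used the 5-minute TTL.

```python
from typing import Dict

# $/MTok for Claude Sonnet 4.6, copied from the table above.
SONNET_46_RATES = {
    "input": 3.00,
    "cache_write_5m": 3.75,  # 1.25x base input
    "cache_read": 0.30,  # 0.1x base input
    "output": 15.00,
}


def price_request(usage: Dict[str, int], rates: Dict[str, float] = SONNET_46_RATES) -> float:
    """Dollar cost of one request (hypothetical helper; all writes assumed 5-minute)."""
    per_mtok = 1_000_000
    return (
        usage.get("input_tokens", 0) * rates["input"]
        + usage.get("cache_creation_input_tokens", 0) * rates["cache_write_5m"]
        + usage.get("cache_read_input_tokens", 0) * rates["cache_read"]
        + usage.get("output_tokens", 0) * rates["output"]
    ) / per_mtok
```

For a 100,000-token cached prefix plus 200 fresh input tokens and 500 output tokens, the writing request prices at about $0.383 and each later hit at about $0.038.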

Key Parameters

- Minimum cacheable prefix: 1,024 tokens (varies by model, up to 4,096 for some)

- TTL options: 5 minutes (1.25× write premium) or 1 hour (2× write premium)

- Cache refreshing: Each cache hit refreshes the TTL, keeping active caches alive

- Scope: Organization-level; caches are not shared across organizations

- Latency improvement: Up to 85% TTFT reduction. Anthropic reports a 100,000-token prompt dropping from 11.5 seconds to 2.4 seconds TTFT on a warm cache, a 79% reduction [2][4]

Break-Even Math

For Sonnet 4.6 with a 5-minute cache window [5]:

Write cost: $3.75/MTok

Savings per read: $3.00 - $0.30 = $2.70/MTok

Break-even: $3.75 / $2.70 = 1.39 reads

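Under this accounting, which treats the entire write as overhead, you need approximately 1.4 cache reads within a 5-minute window to recover the write cost; in practice, 2 reads is the minimum viable threshold. For the 1-hour window (2× write), the break-even rises to ~2.2 reads, meaning 3 reads within an hour [5]. Because the first request would have paid the $3.00 base price anyway, only the write premium is truly extra: $0.75 / $2.70 ≈ 0.28 reads for the 5-minute cache, and $3.00 / $2.70 ≈ 1.11 reads (so 2 reads) for the 1-hour cache. The 1.4 and 2.2 figures are therefore conservative upper bounds; a single read already puts a 5-minute cache ahead.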
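The same arithmetic generalizes to any model's rates. A sketch that computes the full-write figure used above next to a premium-only figure, which counts only the surcharge over base input because the first request would have been billed at base price without caching. Both function names are hypothetical.

```python
import math
from typing import Dict


def break_even_reads(base: float, write: float, read: float) -> Dict[str, float]:
    """Cache reads needed to pay back a cache write, under two accountings.

    base, write, read are $/MTok for uncached input, cache write, and cache read.
    """
    savings_per_read = base - read
    return {
        # Whole write cost treated as overhead (the $3.75 / $2.70 calculation).
        "full_write": write / savings_per_read,
        # Only the surcharge over base is extra: the first request pays base anyway.
        "premium_only": (write - base) / savings_per_read,
    }


def whole_reads(fractional: float) -> int:
    """Round a break-even point up to whole reads (at least one read to gain anything)."""
    return max(1, math.ceil(fractional))
```

With OpenAI-style pricing, where a write costs the same as base input, `premium_only` is 0, so any cache read is a net gain.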

Practical Savings

A real-world test with Claude Sonnet 4.5 over 10 requests against a 6,313-token cached prefix showed: first request cost $0.39 (cache write + query), subsequent 9 requests cost $0.04 each, for a total of $0.75 — a 75.9% savings versus uncached [6].

3. OpenAI: Fully Automatic Caching

OpenAI takes the opposite approach: zero configuration. Caching activates automatically on all prompts of 1,024 tokens or more, with no code changes required and no write surcharge [7][8].

Pricing Model

OpenAI charges no premium for cache writes. Cache hits receive a discount that varies by model: 50% for GPT-4o and GPT-4o mini, 75% for the GPT-4.1 series and the o3/o4-mini reasoning models, and 90% for the GPT-5 family [8][9].

| Model | Standard Input | Cached Input | Discount | Output |
|---|---|---|---|---|
| GPT-4o | $2.50/MTok | $1.25/MTok | 50% | $10.00/MTok |
| GPT-4o mini | $0.15/MTok | $0.075/MTok | 50% | $0.60/MTok |
| GPT-4.1 | $2.00/MTok | $0.50/MTok | 75% | $8.00/MTok |
| GPT-4.1 mini | $0.40/MTok | $0.10/MTok | 75% | $1.60/MTok |
| GPT-4.1 nano | $0.10/MTok | $0.025/MTok | 75% | $0.40/MTok |
| o3 | $2.00/MTok | $0.50/MTok | 75% | $8.00/MTok |
| o4-mini | $1.10/MTok | $0.275/MTok | 75% | $4.40/MTok |

Source: OpenAI official pricing page, April 2026 [8]

Key Parameters

- Minimum cacheable prefix: 1,024 tokens

- Cache granularity: 128-token increments after the initial 1,024

- TTL: 5–10 minutes of inactivity; always cleared within 1 hour. Extended caching available for up to 24 hours [7][9]

- Routing: Requests are routed to servers based on a hash of the first ~256 tokens. The prompt_cache_key parameter can be provided to influence routing and improve hit rates for requests sharing long common prefixes [7]

- No write surcharge: The first request pays standard input pricing, not a premium

- Latency improvement: Up to 80% TTFT reduction for prompts over 10,000 tokens [9]

- Scope: Organization-level, zero data retention eligible
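The minimum-plus-increment rule above determines how much of a shared prefix is eligible to be served from cache. This sketch encodes the two rules as described [7]; `cacheable_tokens` is a hypothetical helper, and the authoritative count is whatever the API reports (for example `usage.prompt_tokens_details.cached_tokens` on Chat Completions), not a client-side estimate.

```python
def cacheable_tokens(shared_prefix_len: int, minimum: int = 1024, step: int = 128) -> int:
    """Largest cache-eligible prefix under a minimum-plus-increment rule (hypothetical helper).

    Prefixes shorter than `minimum` are never cached; beyond it, only whole
    `step`-sized increments count.
    """
    if shared_prefix_len < minimum:
        return 0
    extra_steps = (shared_prefix_len - minimum) // step
    return minimum + extra_steps * step
```

A 5,000-token shared prefix, for instance, yields at most 4,992 cacheable tokens under these rules.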

Practical Savings

A test with GPT-4o over 10 requests showed: first request $0.26 (no write surcharge), subsequent 9 requests about $0.13 each, total $1.45, a 53.4% savings [6]. The lower percentage versus Anthropic reflects the 50% discount (vs. 90%). The compensating advantage is that, with no write surcharge, the first request costs no more than an uncached one, so there is no break-even threshold to clear.

For GPT-4.1 series models with 75% cache discounts, the economics improve significantly. A workload processing 55M input tokens/day on GPT-4.1 drops from $110/day to $27.50/day if every input token is served from cache, a monthly savings of ~$2,475 over 30 days [9].
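The figures above correspond to a 100% hit rate. A sketch that makes the hit rate an explicit input, using the GPT-4.1 rates from the table; `daily_input_cost` is a hypothetical helper, and the 30-day month is an assumption.

```python
def daily_input_cost(tokens_per_day: float, base: float, cached: float, hit_rate: float) -> float:
    """Daily input cost in dollars (hypothetical helper).

    base and cached are $/MTok; hit_rate is the fraction of input tokens served from cache.
    """
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    mtok = tokens_per_day / 1_000_000
    return mtok * ((1 - hit_rate) * base + hit_rate * cached)


uncached = daily_input_cost(55_000_000, 2.00, 0.50, 0.0)
fully_cached = daily_input_cost(55_000_000, 2.00, 0.50, 1.0)
monthly_savings = (uncached - fully_cached) * 30  # assumes a 30-day month
```

At an 80% hit rate the same workload comes to about $44/day, which is why the realized savings depend on prompt structure as much as on the discount.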

4. Google Gemini: Hybrid Implicit + Explicit Caching

Google offers two caching modes: implicit caching (automatic, enabled by default since May 2025) and explicit caching (manual, with storage fees) [10][11].

Implicit Caching

Implicit caching works automatically with no code changes and no storage fees. When the system detects a cache hit, you receive a 90% discount on cached tokens for Gemini 2.5+ models [10][11].

- Minimum tokens: 2,048 (Gemini 2.0/2.5), 4,096 (Gemini 3/3.1)

- No storage fees: Cost savings are passed through automatically on hits

- No guaranteed hits: Google does not guarantee cache hits will occur — it's best-effort

- Supported models: Gemini 2.5 Pro, 2.5 Flash, 2.5 Flash-Lite, 3 Flash, 3.1 Pro, 3.1 Flash-Lite

Explicit Caching

Explicit caching gives developers control over what gets cached and for how long, but introduces per-hour storage fees [10][11].

| Model | Standard Input | Cached Read | Discount | Storage Cost |
|---|---|---|---|---|
| Gemini 2.5 Pro (≤200K) | $1.25/MTok | $0.125/MTok | 90% | $4.50/MTok/hr |
| Gemini 2.5 Flash | $0.30/MTok | $0.03/MTok | 90% | $1.00/MTok/hr |
| Gemini 2.5 Flash-Lite | $0.10/MTok | $0.01/MTok | 90% | $0.25/MTok/hr |

Gemini 2.5+ models receive a 90% discount on cached reads; Gemini 2.0 models receive 75% [10].

Key Parameters

- Minimum for explicit caching: 32,768 tokens (~25,000 words) [4]

- Default TTL: 60 minutes (user-configurable, no maximum)

- Storage billing: Per-hour, based on cached token count. A 10M-token cache on Gemini 2.5 Pro costs $45/hour ($1,080/day) [4]

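- Break-even for explicit: With Gemini 2.5 Pro, storage costs $4.50/MTok/hr and each cached read saves ~$1.125/MTok, so every cached token must be read about 4 times per hour to cover its storage. For a 10M-token cache, storage is $45/hr [4] and each full-context read saves ~$11.25, so you need roughly 4 full-context reads (about 40M cached tokens read) per hour to break even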
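Because storage fees and per-read savings both scale with cache size, the explicit-caching break-even can be computed per cached token. A minimal sketch using the Gemini 2.5 Pro rates from this section with the 90% read discount; both function names are hypothetical, and the model ignores any one-time cost of creating the cache.

```python
def explicit_break_even_reads_per_hour(base: float, cached: float, storage_per_hour: float) -> float:
    """Full-context reads per hour at which read savings equal storage fees.

    Prices are $/MTok (storage: $/MTok/hr). Cache size cancels out because both
    storage and per-read savings scale linearly with the number of cached tokens.
    """
    return storage_per_hour / (base - cached)


def net_hourly_savings(cached_mtok: float, reads_per_hour: float, base: float,
                       cached: float, storage_per_hour: float) -> float:
    """Dollars saved per hour versus sending the same context uncached (negative = loss)."""
    return cached_mtok * (reads_per_hour * (base - cached) - storage_per_hour)
```

With these inputs the break-even is 4.0 full-context reads per hour; a 10M-token cache read 40 times an hour would save roughly $405/hour, while one read an hour loses about $33.75.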

When to Use Which Mode

Implicit caching is the right default for most workloads — it's free, automatic, and provides the same 90% discount when hits occur. Explicit caching makes sense only for very high-volume workloads where you need guaranteed cache availability and can justify the storage overhead [10][11].

5. Head-to-Head Comparison

| Feature | Anthropic | OpenAI | Google Gemini |
|---|---|---|---|
| Trigger mechanism | Explicit cache_control or automatic mode | Fully automatic | Implicit (auto) + Explicit (manual) |
| Min tokens | 1,024–4,096 | 1,024 | 2,048–4,096 (implicit), 32,768 (explicit) |
| Cache read discount | 90% | 50–90% (model-dependent) | 90% (Gemini 2.5+) |
| Write cost premium | 1.25× (5-min) / 2× (1-hr) | None | None (implicit) / Storage fees (explicit) |
| TTL | 5 min or 1 hour | 5–10 min auto, up to 24 hr extended | Auto (implicit), user-defined (explicit) |
| Storage fees | No | No | No (implicit) / Yes (explicit) |
| Break-even reads | ~1.4 per window | 1 (no write premium) | 1 (implicit) / ~4 full-context reads per hour (explicit) |
| Max latency reduction | 85% TTFT | 80% TTFT | Not officially published |
| Multimodal caching | Text, images, tools | Text, images, tools | Text, images, audio, video, PDFs |
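Comparing hit rates across providers requires normalizing their usage payloads first, because they count cached tokens differently. A sketch, assuming the field names as we read them in each provider's documentation: Anthropic's `input_tokens` excludes cached tokens, while OpenAI's `prompt_tokens` and Gemini's `prompt_token_count` already include them. The helpers are hypothetical and operate on plain dicts (for example, the output of an SDK response object's `model_dump()`); verify the field names against current docs.

```python
from typing import Any, Dict, Iterable, Tuple


def cached_and_total_input(provider: str, usage: Dict[str, Any]) -> Tuple[int, int]:
    """Return (cached input tokens, total input tokens); field names are assumptions."""
    if provider == "anthropic":
        # input_tokens excludes tokens read from or written to the cache.
        cached = usage.get("cache_read_input_tokens") or 0
        total = (
            (usage.get("input_tokens") or 0)
            + (usage.get("cache_creation_input_tokens") or 0)
            + cached
        )
    elif provider == "openai":
        # Chat Completions: prompt_tokens already includes the cached tokens.
        details = usage.get("prompt_tokens_details") or {}
        cached = details.get("cached_tokens") or 0
        total = usage.get("prompt_tokens") or 0
    elif provider == "gemini":
        # prompt_token_count already includes cached content.
        cached = usage.get("cached_content_token_count") or 0
        total = usage.get("prompt_token_count") or 0
    else:
        raise ValueError(f"unknown provider: {provider}")
    return cached, total


def hit_rate(records: Iterable[Tuple[str, Dict[str, Any]]]) -> float:
    """Token-weighted cache hit rate across (provider, usage) records."""
    cached_sum = total_sum = 0
    for provider, usage in records:
        cached, total = cached_and_total_input(provider, usage)
        cached_sum += cached
        total_sum += total
    return cached_sum / total_sum if total_sum else 0.0
```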

6. Production Hit Rates and Real-World Economics

Benchmark: ProjectDiscovery (Security Tooling)

ProjectDiscovery's AI security pipeline provides one of the best-documented case studies. Their initial implementation achieved only a 7.4% cache hit rate because dynamic content (timestamps, request IDs, session tokens) was embedded near the top of the system prompt, causing every request to hash differently. Moving dynamic content after the stable prefix — a purely structural change — pushed hit rates to 84% [4][5].

On their most demanding task (1,225 steps, 67.5 million input tokens), they achieved a 91.8% cache hit rate and reduced total LLM costs by 59–70% [4].

Benchmark: Claude Code Sessions

Without prompt caching, a long Claude Opus coding session (100 turns with compaction cycles) can cost $50–100 in input tokens. With caching, that drops to $10–19, making the $20/month Claude Pro plan economically viable for Claude Code use [3].

Industry Averages (25 Production Deployments)

Data synthesized from multiple 2025–2026 production reports [12]:

| Workload Type | Typical Hit Rate | Cost Reduction |
|---|---|---|
| RAG-based customer support (Anthropic) | 85%+ | ~85% on input costs |
| Code analysis pipeline (OpenAI) | 70–80% | ~60% |
| Multi-step agent tasks | 80–92% | 59–70% |
| Single-step tasks | 30–40% | 15–25% |
| General SaaS (50% hit rate, 100K daily requests) | 50% | ~49% |
| Target for healthy implementations | >70–80% | n/a |

7. Architecture Patterns That Maximize Hit Rates

The Golden Rule: Static First, Dynamic Last

The single most impactful optimization is prompt structure. Place all stable content (system instructions, tool definitions, few-shot examples, reference documents) at the beginning of the prompt. Place all variable content (user query, timestamps, session-specific data) at the end [3][4][5].

[Cached block 1: System prompt — never changes]

[Cached block 2: Tool definitions — rarely changes]

[Cached block 3: Conversation history — grows each turn]

[Uncached: Current user message]
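A minimal sketch of this layout: stable instructions go in the system field, earlier turns are only ever appended, and per-request data such as the current date is folded into the newest user message. The function `assemble_request` and its arguments are hypothetical, and tools are omitted for brevity; on a provider that needs explicit breakpoints, they would sit on the system block and the last history message.

```python
from typing import Any, Dict, List


def assemble_request(  # hypothetical helper
    static_system: str,
    history: List[Dict[str, str]],
    dynamic_context: str,
    user_message: str,
) -> Dict[str, Any]:
    """Order prompt parts so everything that changes per request comes last."""
    return {
        # Identical on every request, so it can form the cached prefix.
        "system": static_system,
        # Append-only history keeps earlier turns an exact prefix; `+` builds a new
        # list, so the caller's history is not mutated.
        "messages": history
        + [
            {
                "role": "user",
                # Timestamps, request IDs, etc. travel with the newest message only.
                "content": f"{dynamic_context}\n\n{user_message}",
            }
        ],
    }
```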

Cache-Breaking Anti-Patterns

Any of these will invalidate the cache from the point of change onward and force a full-price recomputation of everything after it [3][5]:

- Timestamps in system prompts: "Today is April 27, 2026" at the top of a prompt breaks caching for everything after it

- Dynamic IDs before stable content: Request IDs, session tokens, or user-specific data placed before the cached block

- Changing tool order: Reordering MCP tools or function definitions between requests

- Model switching mid-session: Changing models invalidates the entire cache

- Adding/removing tools: Any change to the tool set breaks the prefix match
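A rough diagnostic for these anti-patterns: compare two consecutive request payloads and report the first prefix section that changed. It assumes the tools, system, messages prefix order that Anthropic documents for its cache and that payloads are plain dicts; `cache_break_point` is a hypothetical helper, and a provider's own tokenization can differ from this JSON comparison. Calling `json.dumps` without `sort_keys` preserves dict insertion order, so tool schemas whose keys come out in a different order also register as a break.

```python
import json
from typing import Any, Dict, Optional


def cache_break_point(prev: Dict[str, Any], curr: Dict[str, Any]) -> Optional[str]:
    """Name the first part of the prompt prefix that changed (hypothetical helper).

    Returns None when curr extends prev's prefix unchanged (only new turns appended).
    """
    # A different model never shares a cache with the previous one.
    if prev.get("model") != curr.get("model"):
        return "model"
    # Assumed render order: tools, then system, then messages.
    for section in ("tools", "system"):
        # json.dumps keeps insertion order, so reordered keys or tools count as a change.
        if json.dumps(prev.get(section)) != json.dumps(curr.get(section)):
            return section
    prev_msgs = prev.get("messages", [])
    curr_msgs = curr.get("messages", [])
    for i, old in enumerate(prev_msgs):
        # Earlier turns must reappear verbatim and in the same position.
        if i >= len(curr_msgs) or json.dumps(old) != json.dumps(curr_msgs[i]):
            return f"messages[{i}]"
    return None
```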

The Parallel Request Race Condition

When firing many parallel requests simultaneously, the first request triggers a cache write that takes 2–4 seconds to materialize. All concurrent requests arrive before the cache is available, so every request pays full price (or write premium) with zero reads. The fix: issue a single warm-up request and wait for it to complete before launching the parallel batch [5].
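A sketch of the warm-up fix using asyncio. The `send` callable is a hypothetical wrapper around whichever SDK call you use: it takes the per-request suffix, sends it behind the shared cached prefix, and returns the response. The concurrency cap of 8 is an arbitrary example value.

```python
import asyncio
from typing import Awaitable, Callable, List, TypeVar

T = TypeVar("T")


async def warm_then_fan_out(  # hypothetical helper
    send: Callable[[str], Awaitable[T]],
    questions: List[str],
    max_concurrency: int = 8,
) -> List[T]:
    """Send one request to populate the cache, then run the rest concurrently."""
    if not questions:
        return []
    # Awaiting the full first response gives its cache write time to land
    # before any sibling request reuses the prefix.
    first = await send(questions[0])
    semaphore = asyncio.Semaphore(max_concurrency)

    async def bounded(q: str) -> T:
        async with semaphore:
            return await send(q)

    rest = await asyncio.gather(*(bounded(q) for q in questions[1:]))
    return [first, *rest]
```

Results come back in input order, since `asyncio.gather` preserves the order of the awaitables it is given.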

8. Economic Decision Framework

When Caching Pays Off

- Cacheable prefix ≥ 1,024 tokens

- Expected requests per cache window ≥ 3 (conservative)

- Stable portion of prompt > 30% of total input tokens

- Traffic density sufficient to hit break-even within TTL
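These criteria can be encoded as a quick pre-flight check. A minimal sketch; the thresholds are the ones listed above, the last two criteria are merged into a single traffic check, and estimating requests per window as arrival rate times TTL is a simplifying assumption that ignores burstiness and TTL refresh on hits. `Workload` and `caching_checklist` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class Workload:  # hypothetical description of a workload
    prefix_tokens: int  # stable prefix shared by every request
    input_tokens: int  # average total input tokens per request
    requests_per_minute: float  # traffic sharing that prefix
    ttl_minutes: float = 5.0  # cache lifetime being evaluated


def caching_checklist(w: Workload, min_prefix: int = 1024, min_requests: float = 3.0) -> Dict[str, bool]:
    """Evaluate the rule-of-thumb criteria for whether caching is likely to pay off."""
    # Simplification: expected requests per window = arrival rate x TTL.
    requests_per_window = w.requests_per_minute * w.ttl_minutes
    return {
        "prefix_long_enough": w.prefix_tokens >= min_prefix,
        "stable_share_over_30pct": w.prefix_tokens / w.input_tokens > 0.30,
        "dense_enough_traffic": requests_per_window >= min_requests,
    }
```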

When Caching Costs More

- Requests arrive at intervals longer than the cache TTL

- Prompts are mostly unique per request (changing document injections, tool outputs)

- Low daily request volume where write premiums don't amortize

- Explicit Gemini caching with insufficient read volume to cover storage fees

Provider Selection Guide

| Scenario | Best Provider | Why |

|---|---|---|

| Zero-config prototyping | OpenAI | Automatic, no write surcharge, immediate benefit |

| High-volume production with stable prompts | Anthropic | 90% discount + granular control = maximum savings |

| Multimodal caching (video/audio) | Google Gemini | Native support for caching video, audio, PDFs |

| Cost-sensitive with variable traffic | OpenAI | No write premium means no penalty for cache misses |

| Very large context (>32K tokens), high reuse | Google Gemini (explicit) | Long TTLs, guaranteed cache availability |

| Agent loops / multi-turn conversations | Anthropic | 90% savings on growing conversation prefixes |

9. The Stacking Effect: Caching + Batch + Model Selection

Discounts can be combined. On Anthropic, prompt caching multipliers stack with the Batch API's 50% discount and data residency pricing [1]. On OpenAI, cached input pricing stacks with the Batch API's 50% discount [8].

Example — Maximum savings on Anthropic Opus 4.7:

- Base input: $5.00/MTok

- Batch discount (50%): $2.50/MTok

- Cache read on batch (0.1×): $0.25/MTok

- Total: 95% off base input pricing

Example — Maximum savings on OpenAI GPT-4.1:

- Base input: $2.00/MTok

- Batch discount (50%): $1.00/MTok

- Cache read on batch (75% off): $0.25/MTok

- Total: 87.5% off base input pricing
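Because the discounts are multiplicative, the stacked price is the base price times each multiplier in turn. A small sketch reproducing both examples; it assumes the multipliers are independent, as [1] and [8] describe, and `stacked_price` is a hypothetical helper.

```python
def stacked_price(base: float, *multipliers: float) -> float:
    """Apply independent pricing multipliers in sequence (hypothetical helper)."""
    price = base
    for m in multipliers:
        price *= m
    return price


opus_batch_cached = stacked_price(5.00, 0.5, 0.1)  # batch 50%, then 0.1x cache read
gpt41_batch_cached = stacked_price(2.00, 0.5, 0.25)  # batch 50%, then 75% off cached input
```

Both come to $0.25/MTok, which is 95% off Opus 4.7's base input and 87.5% off GPT-4.1's.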

10. Key Takeaways

- Anthropic offers the deepest discounts (90% on reads) but charges a write premium (1.25–2×). Best for high-volume, stable-prefix workloads where the write cost amortizes quickly.

- OpenAI is the safest default: automatic caching with no write surcharge means you never pay more than standard pricing. The 50–90% read discount (model-dependent) is generally lower than Anthropic's flat 90%, but the zero-risk model is compelling for variable workloads.

- Gemini's implicit caching is the best free option: a 90% discount with no storage fees when hits occur. Explicit caching's storage fees ($4.50/MTok/hr on 2.5 Pro) make it viable only for very high-throughput workloads.

- Hit rate is an architectural property, not a configuration toggle. The difference between 7% and 84% hit rates is where you place dynamic content in the prompt, not whether you enable caching.

- Break-even is remarkably low: ~1.4 reads per cache window on Anthropic, 1 read on OpenAI (no write premium). At scale, caching almost always pays off. At low volume, verify before shipping.

References

[1] Anthropic. "Pricing." https://docs.anthropic.com/en/docs/about-claude/pricing (accessed April 2026).

[2] Anthropic. "Token-saving updates on the Anthropic API." https://www.anthropic.com/news/token-saving-updates (October 2024).

[3] ClaudeCodeCamp. "How Prompt Caching Actually Works in Claude Code." https://www.claudecodecamp.com/p/how-prompt-caching-actually-works-in-claude-code (July 2026).

[4] AppXLab. "Prompt Caching LLM Cost Savings: Claude vs GPT vs Gemini." https://blog.appxlab.io/2026/04/13/prompt-caching-llm-cost-savings/ (April 2026).

[5] Tian Pan. "The Exact Math on When Provider-Side Prefix Caching Actually Pays Off." https://tianpan.co/blog/2026-04-17-prompt-cache-break-even-math (April 2026).

[6] Will McGinnis. "I Tested LLM Prompt Caching With Anthropic and OpenAI." https://mcginniscommawill.com/posts/2025-11-17-llm-prompt-caching-comparison/ (November 2025).

[7] OpenAI. "Prompt Caching Guide." https://developers.openai.com/api/docs/guides/prompt-caching (accessed April 2026).

[8] OpenAI. "API Pricing." https://www.openai.com/api/pricing (accessed April 2026).

[9] TokenMix. "How to Reduce OpenAI API Cost by 80%." https://tokenmix.ai/blog/how-to-reduce-openai-api-cost (April 2026).

[10] Google Cloud. "Context Caching Overview — Vertex AI." https://cloud.google.com/vertex-ai/docs/generative-ai/context-cache/context-cache-overview (accessed April 2026).

[11] Google Developers Blog. "Gemini 2.5 Models now support implicit caching." https://developers.googleblog.com/en/gemini-2-5-models-now-support-implicit-caching/ (May 2025).

[12] Zylos Research. "Prompt Caching and KV Cache Optimization for Long-Running AI Agent Sessions." https://zylos.ai/research/2026-03-27-prompt-caching-kv-cache-optimization-long-running-ai-agents (March 2026).