Pricing-Efficient Model Selection in 2026

Price-per-million-tokens comparison across Anthropic, OpenAI, Google, DeepSeek, and Qwen — with cost-quality frontiers and task-based routing strategies.

1. Executive Summary

The 2026 LLM market is defined by a bimodal pricing curve. Frontier closed models from Anthropic, OpenAI, and Google have largely held premium pricing stable through Q1–Q2 2026, while Chinese providers (DeepSeek, Alibaba's Qwen, Xiaomi's MiMo, MiniMax) have compressed the low end by another 5–10×. The result is a 60× to 1000× price spread across viable production models, but a quality spread of only ~8 points on the Artificial Analysis Intelligence Index (roughly 49–57 at the top tier) [1][2].

Academic analysis of price-performance curves finds that the price for a given level of benchmark performance has dropped 5–10× per year across knowledge, reasoning, math, and software engineering tasks on frontier models [3]. This compression means most production workloads are now served best by a routing architecture — not a single model — with reported savings of 40–85% versus naive single-model deployments [4][5][6].

2. The 2026 Price Landscape, Tier by Tier

Prices below are USD per 1M tokens (input / output), using April 2026 published pricing on OpenRouter and provider direct APIs [1][7][8].

2.1 Premium tier ($3+ input)

| Model | Provider | Input $/M | Output $/M | Context | Quality (AA Index) |
|---|---|---|---|---|---|
| GPT-5.4 Pro | OpenAI | $30.00 | $180.00 | 1.05M | ~58 |
| Claude Opus 4.6 | Anthropic | $5.00 | $25.00 | 200K / 1M beta | 53.0 |
| Claude Sonnet 4.6 | Anthropic | $3.00 | $15.00 | 200K / 1M beta | 51.7 |
| GPT-5.4 | OpenAI | $2.50 | $15.00 | 1.05M | 57.2 |
| Gemini 3.1 Pro | Google | $2.00 | $12.00 | 1M+ | 57.2 |

Gemini 3.1 Pro Preview currently leads the Artificial Analysis Intelligence Index while costing roughly $892 to run the full index test, versus $2,304 for GPT-5 and $2,486 for Opus 4.6 at max effort, which is less than half the cost of either rival [9][10]. Opus is the single biggest revenue driver for Anthropic's direct API at an estimated $25.1M/month, which suggests that capability-bound spend is not price-elastic at this tier [1][11].

2.2 Mid-tier ($0.50–$3 input)

| Model | Provider | Input $/M | Output $/M | Notes |
|---|---|---|---|---|
| Qwen3.5-Max | Alibaba | $1.20–$1.60 | $6.00 | Strong multimodal [12] |
| MiMo V2 Pro | Xiaomi | $1.00 | $3.00 | 1.04M context; #1 on OpenRouter by volume at 4.79T weekly tokens [1] |
| Qwen 3 Max Thinking | Alibaba | $0.78 | $3.90 | Reasoning variant |
| Claude Haiku 4.5 | Anthropic | $1.00 | $5.00 | Anthropic's low-cost tier [13] |

2.3 Economy tier ($0.15–$0.50 input)

| Model | Provider | Input $/M | Output $/M | Context / notes |
|---|---|---|---|---|
| GPT-5 Mini | OpenAI | $0.25 | $2.00 | [14] |
| Gemini 2.5 Flash | Google | $0.30 | $2.50 | [14] |
| MiniMax M2.7 | MiniMax | $0.30 | $1.20 | 205K; 56.2% SWE-Bench Pro [1] |
| DeepSeek V3.2 | DeepSeek | $0.28 | $0.42 | 90% cache discount → $0.028 cache-hit [12][15] |
| Qwen 3 Coder Next | Alibaba | $0.12 | $0.75 | 256K; coding-tuned |

2.4 Ultra-low tier (<$0.15 input)

| Model | Provider | Input $/M | Output $/M | Context / notes |
|---|---|---|---|---|
| DeepSeek-V3 | DeepSeek | $0.27 ($0.07 cache-hit) | $1.10 | [16] |
| Gemini 3 Flash | Google | $0.10 | $0.40 | 1M [7] |
| MiMo V2 Flash | Xiaomi | $0.09 | $0.29 | 262K |
| Qwen 3.5 Flash | Alibaba | $0.065 | $0.26 | 1M context [1] |
| Qwen 3.5 9B | Alibaba | $0.05 | $0.15 | 256K [1] |
| GPT-5 Nano | OpenAI | $0.05 | $0.40 | [17] |
| Llama 3.1 8B (via Groq) | Meta/Groq | $0.05 | n/a | 274 tok/s [2] |

The cheapest viable input price ($0.05/M on Qwen 3.5 9B or GPT-5 Nano) is a full 60× below Claude Sonnet 4.6 input and 600× below GPT-5.4 Pro input [1]. A further layer of free-tier models (Qwen 3.6 Plus Preview at 1M context, Nemotron 3 Super 120B, Step 3.5 Flash) now covers non-critical background workloads at zero marginal token cost [1].

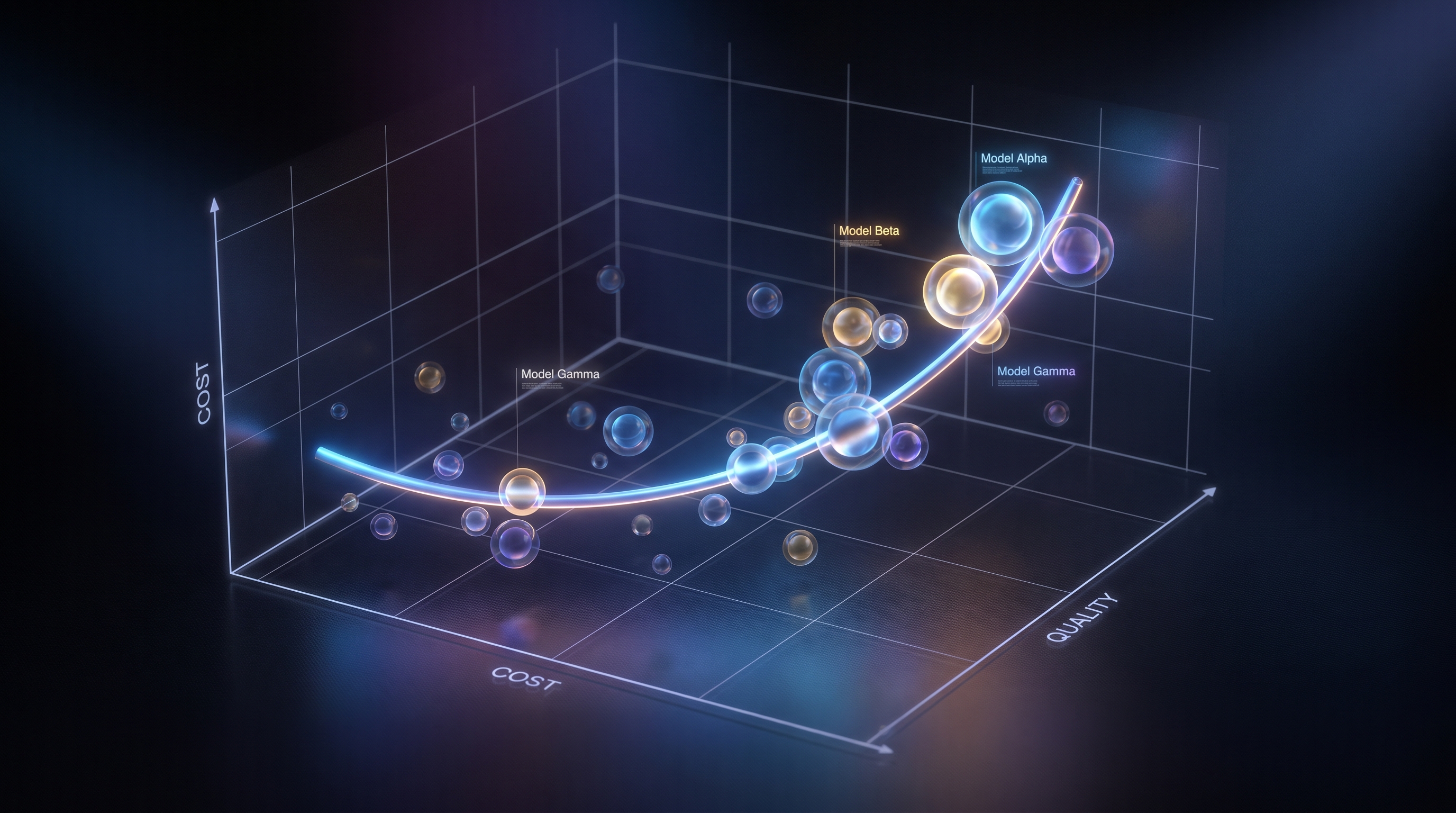

3. The Cost-Quality Frontier

Artificial Analysis's Intelligence Index v4.0 (February 2026) places Opus 4.6 (max) at 53 and the leading group of GPT-5.2 (xhigh), Gemini 3.1 Pro, and GPT-5.4 at roughly 57.2 [18][19]. Using a blended price weighted 3:1 input to output, only six of the top 20 tracked models sit on the Q2 2026 Pareto frontier, meaning no sibling beats them on both cost and quality [2]:

| Frontier model | Blended $/1M | Quality | Best use |
|---|---|---|---|
| GPT-5.4 Pro | $67.50 | ~58 | Hardest reasoning, irreversible decisions |
| GPT-5.4 | $5.63 | 57.2 | Agent tooling, broad ecosystem |
| Gemini 3.1 Pro | $4.50 | 57.2 | Long context, multimodal, best $/quality at top |
| Claude Opus 4.6 | $10.00 | 53.0 | Terminal reasoning, legal/research depth |
| Claude Sonnet 4.6 | $6.00 | 51.7 | Production default for reasoning |
| MiniMax M2.7 | $0.53 | ~49.6 | Opus-class reasoning at 1/20th the price |
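The blended column can be reproduced from the Section 2 list prices. The following is a minimal sketch, assuming "3:1 blend" means a token-weighted average of three parts input to one part output; the function and dictionary names are illustrative, not taken from the cited analysis.

```python
def blended_price(input_per_m: float, output_per_m: float,
                  input_parts: int = 3, output_parts: int = 1) -> float:
    """Weighted-average USD per 1M tokens for an input:output token mix."""
    total_parts = input_parts + output_parts
    return (input_parts * input_per_m + output_parts * output_per_m) / total_parts


# (input, output) list prices in USD per 1M tokens, copied from Section 2.
LIST_PRICES = {
    "GPT-5.4 Pro": (30.00, 180.00),
    "GPT-5.4": (2.50, 15.00),
    "Gemini 3.1 Pro": (2.00, 12.00),
    "Claude Opus 4.6": (5.00, 25.00),
    "Claude Sonnet 4.6": (3.00, 15.00),
    "MiniMax M2.7": (0.30, 1.20),
}

BLENDED = {name: blended_price(inp, out) for name, (inp, out) in LIST_PRICES.items()}

for name, price in BLENDED.items():
    print(f"{name}: ${price:.3f} per 1M blended tokens")
```

Changing `input_parts` and `output_parts` shows how sensitive the ranking is to the assumed mix: output-heavy workloads penalize models whose output price is a large multiple of their input price.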

Notably dominated (beaten on every axis by a cheaper or better sibling) are: Claude Opus 4.5 (superseded by Sonnet 4.6), GPT-5.2 xhigh, DeepSeek V3.2 (beaten by Nemotron 3 Super on SWE-Bench + cost), Grok 4.20, Gemini 3 Flash Preview (beaten by free Qwen 3.6 Plus), and GPT-5.4 Mini (beaten by MiMo V2 Pro) [2]. Procurement inertia keeps these in production, but greenfield deployments should skip them.
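A dominance check of this kind is straightforward to run against your own shortlist. The sketch below applies the standard two-axis Pareto test (at least as good on cost and quality, strictly better on one) to the six rows of the frontier table, treating the "~" values as exact. The `Model` type and function names are hypothetical.

```python
from typing import NamedTuple


class Model(NamedTuple):
    name: str
    cost: float     # blended USD per 1M tokens (lower is better)
    quality: float  # AA Index score (higher is better)


def dominates(a: Model, b: Model) -> bool:
    """True if a is at least as good as b on both axes and strictly better on one."""
    return (a.cost <= b.cost and a.quality >= b.quality
            and (a.cost < b.cost or a.quality > b.quality))


def pareto_frontier(models: list[Model]) -> list[Model]:
    """Keep only the models that no other model dominates."""
    return [m for m in models if not any(dominates(other, m) for other in models)]


# Rows from the frontier table; approximate scores are taken at face value.
MODELS = [
    Model("GPT-5.4 Pro", 67.50, 58.0),
    Model("GPT-5.4", 5.63, 57.2),
    Model("Gemini 3.1 Pro", 4.50, 57.2),
    Model("Claude Opus 4.6", 10.00, 53.0),
    Model("Claude Sonnet 4.6", 6.00, 51.7),
    Model("MiniMax M2.7", 0.53, 49.6),
]

frontier_names = [m.name for m in pareto_frontier(MODELS)]
print(frontier_names)
```

On the two tabulated axes alone, this sketch keeps only GPT-5.4 Pro, Gemini 3.1 Pro, and MiniMax M2.7. That suggests the cited frontier rests on inputs beyond the rounded blended price and index score shown here, such as the strengths listed in the "Best use" column, so it is worth adding the dimensions that matter to your workload (latency, context length, tool support) before dropping a model.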

3.1 The quality-vs-price decoupling

The headline finding from Digital Applied's Q2 2026 efficient-frontier analysis is that a ~1000× price spread compresses into an ~8-point quality spread (49–57 on AA Index) [2]. Put bluntly, the difference between spending $0.05 per million input tokens and $30 per million is not a 600× quality difference — it is roughly 16% on a reasoning benchmark. That gap is real for frontier reasoning tasks but nonexistent for extraction, classification, and most structured-output work.

Academic work in The Price of Progress (arXiv, late 2025) quantifies this directly: for a fixed performance level on MMLU, MATH, SWE-Bench, and GPQA, the frontier price has fallen 5–10× per year and is projected to continue [3].
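To see what that rate implies, here is a minimal sketch assuming constant compound deflation, so that a fixed capability level costs `price_today / factor ** years`. The constant-rate model and the function name are assumptions for illustration, not the paper's method.

```python
def projected_price(price_today: float, annual_factor: float, years: float) -> float:
    """Price of a fixed capability level after `years` of constant annual deflation."""
    if annual_factor <= 0:
        raise ValueError("annual_factor must be positive")
    return price_today / (annual_factor ** years)


# Example: a capability priced at $3.00 per 1M input tokens today.
fast_case = projected_price(3.00, annual_factor=10, years=1)  # 10x per year
slow_case = projected_price(3.00, annual_factor=5, years=1)   # 5x per year

print(f"After one year: ${fast_case:.2f} to ${slow_case:.2f} per 1M tokens")
```

Under these assumptions, a $3.00 price point lands between $0.30 and $0.60 after one year, and the range widens quickly: after two years the same capability would cost between $0.03 and $0.12.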

3.2 Chinese models and the 30× cost gap

DeepSeek-V3.2 at $0.28/$0.42 delivers GPT-5-level reasoning and coding — reportedly 90%+ on HumanEval and competitive on SWE-Bench — while running up to 30× cheaper than Claude Sonnet 4.6 ($3/$15) on the blended rate, and 90× cheaper when context-cache hits apply ($0.028 input) [12][15]. Qwen3.5-Max at $1.20–$1.60 input / $6.00 output is 5–10× cheaper than Western flagships while matching them on MATH and GPQA [12].

Three structural drivers explain the gap [12]:

- Mixture-of-Experts efficiency — DeepSeek-V3 activates only 37B of 671B parameters per token, cutting inference FLOPs ~80% versus dense models.

- Training under export constraints — DeepSeek reportedly trained V3 for ~$5.6M on ~2,000 H800-equivalent GPUs versus hundreds of millions for Western flagships.

- Context-cache aggression — automatic prefix caching grants a flat 90% discount on cache-hit input tokens, turning multi-turn agents and RAG into near-free operations.

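For a typical 3:1 input-to-output agent workload, DeepSeek lands at ~$0.35 blended per million tokens versus $5–$7 for Western flagships. Against Claude Sonnet 4.6's $6.00 blended rate, that is a saving of about $5.65 per million tokens: roughly $57/month at 10M tokens/month, or about $3,500/month at roughly 620M tokens/month [12].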
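How much the cache discount matters depends on the share of input tokens that hit the cache. The sketch below is a rough model, assuming a 3:1 input-to-output token mix and the DeepSeek V3.2 and Claude Sonnet 4.6 list prices from Section 2. The hit rates are hypothetical inputs, not measured values.

```python
def effective_input_price(miss_price: float, hit_price: float, hit_rate: float) -> float:
    """Average input price when `hit_rate` of input tokens are served from cache."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * hit_price + (1.0 - hit_rate) * miss_price


def blended(input_price: float, output_price: float, input_share: float = 0.75) -> float:
    """Blended USD per 1M tokens; 0.75 input share corresponds to a 3:1 mix."""
    return input_share * input_price + (1.0 - input_share) * output_price


# USD per 1M tokens, from Section 2.
DEEPSEEK_MISS, DEEPSEEK_HIT, DEEPSEEK_OUT = 0.28, 0.028, 0.42
SONNET_BLENDED = blended(3.00, 15.00)


def deepseek_blended(hit_rate: float) -> float:
    return blended(effective_input_price(DEEPSEEK_MISS, DEEPSEEK_HIT, hit_rate), DEEPSEEK_OUT)


for hit_rate in (0.0, 0.5, 0.9):
    price = deepseek_blended(hit_rate)
    print(f"hit rate {hit_rate:.0%}: ${price:.4f} blended, "
          f"{SONNET_BLENDED / price:.1f}x below Sonnet 4.6")
```

With no cache hits the blended rate is about $0.315, roughly 19× below Sonnet 4.6's $6.00. The multiple grows as the hit rate rises, which is why stable system prompts and shared prefixes matter more than the headline input price.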

4. Task-Based Routing: Where the Real Savings Live

Token sticker price captures only 40–60% of true production cost [1]. The rest is driven by workload classification and routing. Published production data consistently shows 40–85% cost reduction from routing versus single-model deployment [4][5][6].

4.1 The four-stage routing stack

A reference routing architecture in production as of Q2 2026 [1][2]:

- Classification (ultra-low tier, ~$0.05/M) — Qwen 3.5 9B or Qwen 3.5 Flash tags every incoming request with intent, complexity, and required-capability labels. Classification at $0.05/M is effectively free relative to the downstream spend it unlocks.

- Planning (economy tier, ~$0.15–$0.50/M) — MiniMax M2.5/M2.7 or Qwen 3 Coder Next handle structured planning and tool selection below mid-tier pricing.

- Execution (mid-tier, ~$1–$3/M) — MiMo V2 Pro or Qwen 3 Max Thinking run reasoning or tool-chain-deep steps that don't yet require premium capability.

- Terminal reasoning (premium tier, $3–$25/M) — Claude Sonnet 4.6 or Opus 4.6 are reserved for the last-mile reasoning and irreversible output, typically 5–15% of total tokens but 40–60% of perceived quality [1].
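The four stages above can be sketched as a small router. This is an illustrative skeleton only: `classify` is a keyword heuristic standing in for the cheap classifier model call, and the `Labels` fields, complexity scale, and thresholds are all hypothetical. The stage-to-model mapping picks one model per stage from the list above.

```python
from dataclasses import dataclass
from enum import IntEnum


class Stage(IntEnum):
    CLASSIFICATION = 0  # ultra-low tier
    PLANNING = 1        # economy tier
    EXECUTION = 2       # mid-tier
    TERMINAL = 3        # premium tier


# One model per stage, taken from the four-stage list.
STAGE_MODELS = {
    Stage.CLASSIFICATION: "Qwen 3.5 9B",
    Stage.PLANNING: "MiniMax M2.7",
    Stage.EXECUTION: "MiMo V2 Pro",
    Stage.TERMINAL: "Claude Sonnet 4.6",
}


@dataclass(frozen=True)
class Labels:
    complexity: int     # hypothetical scale: 1 (trivial) to 4 (hardest)
    irreversible: bool  # output cannot easily be undone


def classify(request: str) -> Labels:
    """Stand-in for the cheap classifier call; a keyword heuristic, illustrative only."""
    text = request.lower()
    irreversible = any(word in text for word in ("contract", "payment", "delete"))
    if irreversible:
        complexity = 4
    elif len(text.split()) > 40:
        complexity = 3
    elif "plan" in text or "steps" in text:
        complexity = 2
    else:
        complexity = 1
    return Labels(complexity=complexity, irreversible=irreversible)


def route(labels: Labels) -> Stage:
    """Pick the cheapest stage judged sufficient; thresholds are assumptions."""
    if labels.irreversible or labels.complexity >= 4:
        return Stage.TERMINAL
    if labels.complexity == 3:
        return Stage.EXECUTION
    if labels.complexity == 2:
        return Stage.PLANNING
    return Stage.CLASSIFICATION


def pick_model(request: str) -> str:
    """Classify a request, then return the model name for its stage."""
    return STAGE_MODELS[route(classify(request))]
```

For example, `pick_model("Tag this support ticket")` stays on the ultra-low tier, while a request that mentions deleting an account is sent to the premium tier. In a real deployment the heuristic would be replaced by a call to the classification-tier model, and the mapping would live in configuration so models can be swapped without code changes.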

4.2 Production routing rules by workload

Mapping each workload to its frontier point [2][5]:

| Workload | Recommended model | Rationale |
|---|---|---|
| Irreducible judgment / legal / high-stakes | Claude Opus 4.6 ($5/$25) | Top of quality; error cost > token cost |
| Production RAG + agents | Claude Sonnet 4.6 ($3/$15) | Near-Opus quality at 60% of Opus price |
| High-volume code generation | MiMo V2 Pro ($1/$3) | Best cost-per-quality at scale; 1M context |
| Budget agent workloads | MiniMax M2.7 ($0.30/$1.20) | 56.2% SWE-Bench Pro at $0.53 blended |
| Multimodal, long-context research | Gemini 3.1 Pro ($2/$12) | Leads AA Intelligence Index at ~half rival cost [9] |
| Bulk classification / tagging | Qwen 3.6 Plus (free preview) | Free floor dominates all paid sub-$0.50 models [2] |
| OCR post-processing / RAG re-rank | DeepSeek V3.2 ($0.28/$0.42, 90% cache) | Cheapest capable structured output |
| Interactive UX / voice | gpt-oss-120b on Cerebras (920 tok/s) | Speed frontier; latency-bound workloads |
| On-prem / regulated | Nemotron 3 Super 120B (self-host) | Best open-weight SWE-Bench (60.47%) |

4.3 Concrete savings math

For a chatbot handling 1,000 conversations/day at roughly 2K input tokens and 500 output tokens each, or ~2M input and ~500K output tokens per day [7]:

- GPT-5 (single-model): $20 input + $7.50 output ≈ $27.50/day → ~$825/month

- Claude Sonnet 4.6 (single-model): $6 input + $7.50 output = $13.50/day → ~$405/month

- Routed stack (70% Gemini 3 Flash, 20% Sonnet 4.6, 10% Opus 4.6): ≈ $3.85/day → ~$115/month — a ~86% reduction vs GPT-5 and ~72% reduction vs Sonnet single-model baseline.

Particula.tech and tianpan.co independently report 50–85% routing savings in live production, corroborating this math [4][5].
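The scenario can be re-derived from the Section 2 list prices. The sketch below assumes those list prices and that each model's traffic share applies equally to input and output tokens; both are assumptions rather than the cited source's method, and the names are illustrative.

```python
INPUT_M, OUTPUT_M = 2.0, 0.5  # millions of tokens per day, from the scenario above

# (input, output) list prices in USD per 1M tokens, from Section 2.
PRICES = {
    "Gemini 3 Flash": (0.10, 0.40),
    "Claude Sonnet 4.6": (3.00, 15.00),
    "Claude Opus 4.6": (5.00, 25.00),
}

# Share of daily traffic handled by each model in the routed stack.
MIX = {"Gemini 3 Flash": 0.70, "Claude Sonnet 4.6": 0.20, "Claude Opus 4.6": 0.10}


def daily_cost(model: str, share: float = 1.0) -> float:
    """Daily USD cost if `share` of all traffic goes to `model`."""
    input_price, output_price = PRICES[model]
    return share * (INPUT_M * input_price + OUTPUT_M * output_price)


sonnet_only = daily_cost("Claude Sonnet 4.6")
routed = sum(daily_cost(model, share) for model, share in MIX.items())
saving_vs_sonnet = 1.0 - routed / sonnet_only

print(f"Sonnet only: ${sonnet_only:.2f}/day")
print(f"Routed mix:  ${routed:.2f}/day ({saving_vs_sonnet:.0%} below Sonnet only)")
```

Under these assumptions the Sonnet baseline matches the $13.50/day figure, while the 70/20/10 mix comes to about $5.23/day, roughly 61% below Sonnet alone. That is higher than the ≈$3.85/day quoted above, which presumably reflects different rates or a different token split in [7]. The 10% Opus share accounts for a large part of the routed bill, so the premium-tier share is the most sensitive parameter to measure.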

5. Hidden-Cost Factors That Reshape Sticker Prices

Token sticker pricing misses seven factors that collectively swing true cost 2–5× against headline $/M numbers [1][20]:

- Prompt caching — 5–10× cheaper input on cache hits. Anthropic, OpenAI, Google, and DeepSeek all offer it, but only if system prompts are designed to be stable [12][16]. DeepSeek's cache takes input from $0.28 to $0.028/M — a 90% cut without code changes [15].

- Batch API discounts — OpenAI and Anthropic offer 50% off for 24-hour async turnaround; Alibaba Model Studio offers 50% off both input and output on batch [8][21]. Catalog tagging, enrichment, and synthetic data work belong here.

- Tool-use overhead — every tool call re-serializes definitions and returns on the next turn. Agentic workloads routinely 2–3× nominal input spend. GPT-5.4's tool-search reportedly trims 47% of these tokens [1].

- Reasoning-token bloat — OpenAI's o-series and GPT-5 reasoning variants charge for hidden chain-of-thought tokens; real bills inflate 20–30% on agentic workloads versus nominal output pricing [12].

- Tokenizer drift — new model tokenizers can map identical English text to 1.0–1.35× more tokens, silently shifting cost on the same traffic [1].

- Retry and fallback traffic — outages, rate limits, and quality retries typically add 5–15% uplift that baseline models miss [1].

- Observability and eval cost — properly instrumented agent deployments add 5–10% of API cost in eval/telemetry spend [1].
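The seven factors above can be folded into a rough effective-cost model. The sketch below assumes the factors are independent and compose multiplicatively, which is a simplification. The field names and example values are hypothetical and chosen from within the ranges quoted in the list.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    input_m: float                 # millions of nominal input tokens
    output_m: float                # millions of nominal output tokens
    input_price: float             # USD per 1M input tokens
    output_price: float            # USD per 1M output tokens
    cache_hit_rate: float = 0.0    # share of input tokens served from cache
    cache_discount: float = 0.90   # discount on cache-hit input tokens
    batch_share: float = 0.0       # share of traffic sent through a batch API
    batch_discount: float = 0.50   # discount on batched traffic
    tool_overhead: float = 1.0     # multiplier on input tokens from tool calls
    reasoning_bloat: float = 1.0   # multiplier on output tokens from hidden reasoning
    tokenizer_drift: float = 1.0   # multiplier on all tokens from a new tokenizer
    retry_uplift: float = 0.0      # extra traffic from retries and fallbacks
    observability: float = 0.0     # eval and telemetry spend as a share of API cost


def sticker_cost(w: Workload) -> float:
    """Cost implied by headline prices and nominal token counts."""
    return w.input_m * w.input_price + w.output_m * w.output_price


def effective_cost(w: Workload) -> float:
    """Sticker cost adjusted for the hidden factors (assumed independent)."""
    input_tokens = w.input_m * w.tool_overhead * w.tokenizer_drift
    output_tokens = w.output_m * w.reasoning_bloat * w.tokenizer_drift
    input_price = w.input_price * (1.0 - w.cache_hit_rate * w.cache_discount)
    cost = input_tokens * input_price + output_tokens * w.output_price
    cost *= 1.0 - w.batch_share * w.batch_discount
    cost *= 1.0 + w.retry_uplift
    return cost * (1.0 + w.observability)


# Hypothetical agentic workload on Sonnet-class pricing, no caching or batching.
agent = Workload(input_m=2.0, output_m=0.5, input_price=3.00, output_price=15.00,
                 tool_overhead=2.5, reasoning_bloat=1.25,
                 retry_uplift=0.10, observability=0.05)

ratio = effective_cost(agent) / sticker_cost(agent)
print(f"effective / sticker = {ratio:.2f}x")
```

With these example values the effective cost is a little over twice the sticker cost. Setting `cache_hit_rate` to a high value pulls it back down, which illustrates why caching and batching are the first levers to reach for on agentic traffic.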

6. Strategic Takeaways for 2026 Model Selection

- The frontier is a cluster, not a line. Only ~6 of the top 20 production models sit on the Pareto frontier; the rest are dominated. Audit your stack quarterly against the current frontier rather than defaulting to incumbents [2].

- Premium capability is not price-elastic, but volume is. Anthropic's $3/$15 and $5/$25 bands have held through Q2 2026 despite matching-quality Chinese competitors because buyers pay for reliability, safety alignment, and ecosystem — not just IQ [1]. Don't expect frontier prices to fall; expect the middle to keep hollowing out.

- Free-tier models are an infrastructure subsidy. Qwen 3.6 Plus Preview (free, 1M context), Nemotron 3 Super 120B (free tier), and Step 3.5 Flash (free tier) compress the entire bottom of the curve. Any paid model below quality-44 and above $0 has no niche [2].

- Route first, select second. The single highest-leverage decision is building a classification + routing tier. Models are configuration values; routing is architecture. Every month of single-provider operation is a month of lost arbitrage at a 60× spread [1][5].

- Chinese models are production-ready for most tasks. DeepSeek V3.2 and Qwen 3.5 match GPT-5 class on HumanEval, MATH, and GPQA at 5–30× lower cost. Hybrid stacks (DeepSeek for 80% of traffic, Claude for irreducible reasoning) are now the cost-rational default for greenfield deployments not gated by data-residency rules [12][22].

- Measure cost-per-correct-answer, not cost-per-token. A model at $0.15/M that fails 30% of the time can end up more expensive than one at $3/M that succeeds on the first try once retries, human review, and the downstream cost of wrong answers are counted [23]. Quality-weighted token economics are the only honest metric at the application layer.
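The cost-per-correct-answer metric can be made concrete with a simple retry model. The sketch below assumes independent attempts repeated until one succeeds, plus a fixed downstream cost per failed attempt (review, escalation, rework). Both the model and the `failure_cost` parameter are assumptions for illustration, and each attempt is treated as consuming 1M tokens so that prices can be compared directly.

```python
def cost_per_correct_answer(cost_per_attempt: float, success_rate: float,
                            failure_cost: float = 0.0) -> float:
    """Expected spend to obtain one correct answer when retrying until success.

    With independent attempts, expected attempts = 1 / success_rate and
    expected failures = 1 / success_rate - 1.
    """
    if not 0.0 < success_rate <= 1.0:
        raise ValueError("success_rate must be in (0, 1]")
    attempts = 1.0 / success_rate
    return attempts * cost_per_attempt + (attempts - 1.0) * failure_cost


def break_even_failure_cost(cheap_cost: float, cheap_success: float,
                            premium_cost: float) -> float:
    """Failure cost at which the cheap model matches an always-correct premium one."""
    attempts = 1.0 / cheap_success
    return (premium_cost - attempts * cheap_cost) / (attempts - 1.0)


cheap_tokens_only = cost_per_correct_answer(0.15, 0.70)
premium = cost_per_correct_answer(3.00, 1.00)
threshold = break_even_failure_cost(0.15, 0.70, 3.00)

print(f"cheap, tokens only: ${cheap_tokens_only:.3f}")
print(f"premium:            ${premium:.2f}")
print(f"break-even failure cost: ${threshold:.2f} per failed attempt")
```

On token cost alone the cheap model still wins in this model (about $0.21 versus $3.00). It becomes the more expensive choice only when each failure costs more than about $6.50 in the same units, which is easy to exceed once a person has to review or redo the work.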

7. Outlook to 2027

The arXiv price-performance analysis projects continued 5–10× annual deflation for fixed capability levels, suggesting today's $3 Sonnet-equivalent quality will cost $0.30–$0.60 by early 2027 [3]. Three drivers:

- Hardware differentiation (Cerebras, Groq, SambaNova) keeps reshaping the cost-vs-speed frontier for open-weight models — 900+ tok/s at $0.35 blended is already production reality [2].

- MoE architectural gains compound: DeepSeek V4 pricing is signaled to maintain the 20–50× gap versus Western flagships [24].

- Context windows at 1M+ are now the baseline, not a premium feature. The 10M-token era is entering production for agentic workloads [2].

The net: model selection becomes a dynamic routing problem, not a purchase decision. Teams that invest now in observability, provider diversification via gateways (OpenRouter, LiteLLM, Vercel AI Gateway), and workload classification will compound the savings as the frontier moves.

References

[1] Digital Applied. LLM API Pricing Index Q2 2026: Cost Per Token Delta. https://www.digitalapplied.com/blog/llm-api-pricing-index-q2-2026-cost-per-token

[2] Digital Applied. AI Model Efficient Frontier Q2 2026: Performance vs Price. https://www.digitalapplied.com/blog/ai-model-performance-vs-price-efficient-frontier-q2

[3] arXiv. The Price of Progress: Price, Performance and the Future of AI. https://arxiv.org/html/2511.23455v2

[4] Particula. LLM Model Routing: Cheap First, Expensive Only When Needed. https://particula.tech/blog/llm-model-routing-cheap-first-reduce-api-costs

[5] Tian Pan. How to Stop Paying Frontier Model Prices for Simple Queries. https://tianpan.co/blog/2025-10-19-llm-routing-production

[6] Propelius. 7 Proven Techniques to Cut AI Inference Costs by 40-80% in 2026. https://propelius.tech/blogs/llm-cost-optimization-techniques-2026/

[7] Zen van Riel. Complete Pricing Guide for Production AI. https://zenvanriel.nl/ai-engineer-blog/llm-api-cost-comparison-2026/

[8] Alibaba Cloud. Model Studio Model Pricing. https://www.alibabacloud.com/help/en/model-studio/model-pricing

[9] The Decoder. Google's Gemini 3.1 Pro Preview Tops Artificial Analysis Intelligence Index at Less Than Half the Cost. https://the-decoder.com/googles-gemini-3-1-pro-preview-tops-artificial-analysis-intelligence-index-at-less-than-half-the-cost-of-its-rivals/

[10] Artificial Analysis. Gemini 3.1 Pro Preview: New Leader in AI. https://artificialanalysis.ai/articles/gemini-3-1-pro-preview-new-leader-in-ai

[11] Winbuzzer. Anthropic's Claude Opus 4.6 Leads AI Intelligence Index. https://winbuzzer.com/2026/02/08/anthropic-claude-opus-46-leads-ai-intelligence-index-xcxwbn/

[12] AICost. Chinese AI Models 2026: 90% Cheaper Than GPT-5 Yet Matching Performance. https://aicost.org/blog/chinese-ai-models-cost-advantage-2026

[13] IntuitionLabs. Low-Cost LLMs: An API Price & Performance Comparison. https://intuitionlabs.ai/articles/low-cost-llm-comparison

[14] LangCopilot. Gemini 2.5 Flash vs GPT-5 mini Pricing (2026). https://langcopilot.com/gemini-2.5-flash-vs-gpt-5-mini-pricing

[15] AIPricing.guru. DeepSeek API Pricing 2026: The Cheapest AI API. https://www.aipricing.guru/deepseek-pricing

[16] AI-Primer. DeepSeek-V3 Pricing & Cache Details. https://www.ai-primer.com/en/engineer/explore/tools/deepseek-v3

[17] Nailed It. ChatGPT vs Claude vs Gemini vs Grok (2026). https://nailedit.ai/compare/ai-model-pricing-comparison

[18] Artificial Analysis. Opus 4.6: Everything You Need to Know. https://artificialanalysis.ai/articles/opus-4-6-everything-you-need-to-know

[19] Piefed. Artificial Analysis Intelligence Index v4.0 - Top Models Analysis. https://piefed.ee/c/localllama/p/118007/

[20] AIMagicX. LLM API Pricing in 2026: The Complete Cost Comparison. https://www.aimagicx.com/blog/llm-api-pricing-comparison-2026

[21] TLDL. DeepSeek API Pricing 2026 - Cheapest LLM. https://www.tldl.io/resources/deepseek-api-pricing

[22] Spheron. DeepSeek V3.2 vs Llama 4 vs Qwen 3: Best Open-Source LLM for Production 2026. https://www.spheron.network/blog/deepseek-vs-llama-4-vs-qwen3/

[23] Grizzly Peak Software. LLM Provider Pricing in 2026: What It Actually Costs Per Task. https://www.grizzlypeaksoftware.com/library/comparing-llm-provider-pricing-and-performance-19oanku0

[24] Macaron. DeepSeek V4 Pricing: Why It Costs 20-50× Less Than OpenAI. https://macaron.im/blog/deepseek-v4-pricing
