Multimodal Token Costs: Image, Audio, Video & PDF Economics in 2025–2026

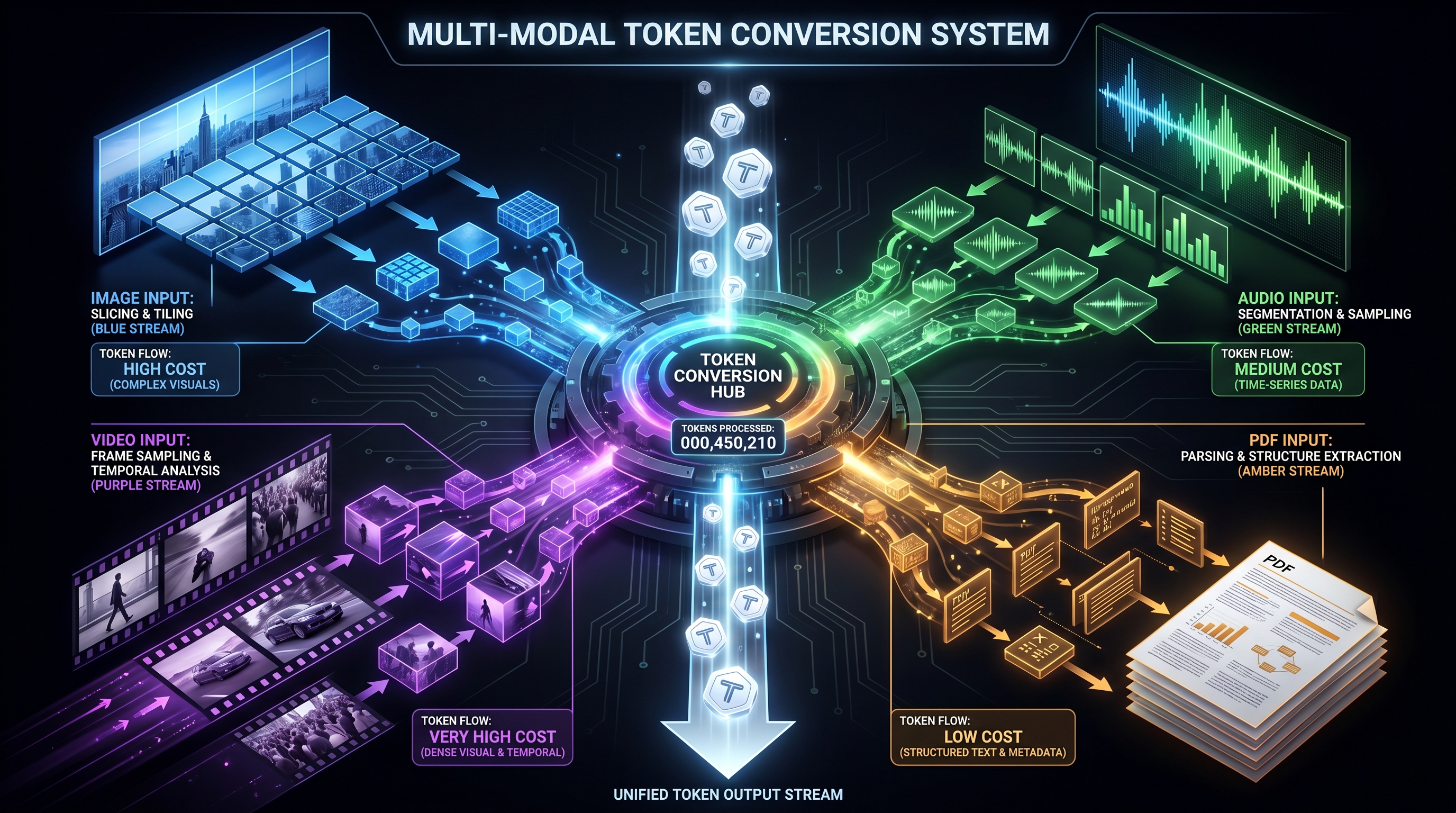

Multimodal AI has moved from demo to production, but the billing mechanics are still poorly understood. Each provider (OpenAI, Anthropic, Google) converts images, audio, video, and PDFs into tokens using fundamentally different formulas, and a single configuration parameter — frame rate, detail mode, tile count — can quietly multiply inference cost by 10× or more. This brief compiles the concrete 2025–2026 numbers: USD-per-million-tokens, per-tile formulas, and the savings levers that matter.

1. Headline Text Pricing as the Cost Anchor

Multimodal inputs are billed as input tokens at the same per-token rate as text, so the base text pricing is the anchor for every downstream calculation.

- OpenAI GPT-5 (launched Aug 7, 2025): $1.25 / 1M input, $10 / 1M output [9]. GPT-5.4 is listed at $2.50/$15 per 1M tokens, with cached input at $0.25; GPT-5 mini at $0.25 in / $2.00 out; GPT-5 nano at $0.05 in / $0.40 out [3][13].

- OpenAI GPT-4o: cut to $2.50 input / $10 output per 1M in October 2024, with pricing trackers reporting an ~83% cumulative drop over 16 months [14][17]. GPT-4o-mini remained $0.15 / $0.60 per 1M [15].

- Anthropic Claude (2025–2026 family): Sonnet 4.6 at $3 / $15, Opus 4.6 at $5 / $25, Haiku 4.5 at $1 / $5 per 1M input/output tokens [6][10]. Cache-read tokens are 10% of base ($0.30 for Sonnet) and cache-writes are 25% over base.

- Google Gemini: Gemini 2.5 Pro at $1.25 in / $10 out per 1M tokens (rising to $2.50/$15 above 200K context) [7]; Gemini 2.5 Flash at roughly $0.30 in / $2.50 out; Gemini 3 Pro at $2 / $12; Flash-Lite at $0.10 / $0.40 [1][18]. Audio inputs are billed at a higher rate: ~$0.50–$1.00 / 1M input tokens vs. ~$0.30 for text/image/video on the 2.5 Flash tier [8].

These rates are the denominator; what follows is how many tokens each modality actually consumes.

2. Image Tokenization Formulas

2.1 GPT-4o / GPT-5 vision (tiled, 512×512)

OpenAI uses the most transparent formula: tokens = 85 + 170 × n_tiles, where tiles are 512×512 pixel chunks after the image is scaled to fit [2][11].

- 512×512 image → 1 tile → 255 tokens

- 1024×1024 image → 4 tiles → 765 tokens

- 2048×2048 image → 16 tiles → 2,805 tokens

- 4000×6000 document scan → 16,000+ tokens ($0.041/image at GPT-4o input pricing) [2]

Low-detail mode flattens every image to a fixed 85 tokens regardless of dimensions, a ~9× reduction relative to a 765-token 1024×1024 image that most teams don't discover until after their first bill shock [2]. For classification or presence detection, low-detail mode is often accurate enough, which makes the saving essentially free.
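The tile formula and the low-detail shortcut can be sketched in a few lines. This is a minimal estimate, assuming tiles are counted directly on the dimensions passed in; the provider's resize step is not modelled, and the function names are illustrative rather than part of any SDK.

```python
import math

BASE_TOKENS = 85        # fixed per-image overhead in the formula
TOKENS_PER_TILE = 170   # cost of each 512x512 tile
TILE_PX = 512


def openai_image_tokens(width: int, height: int, low_detail: bool = False) -> int:
    """Estimate tokens for one image as 85 + 170 * n_tiles.

    Assumption: tiles are counted on the given dimensions. Pass the
    post-scaling size if you know it; resizing is not simulated here.
    """
    if low_detail:
        return BASE_TOKENS  # low-detail mode is a flat 85 tokens
    tiles = math.ceil(width / TILE_PX) * math.ceil(height / TILE_PX)
    return BASE_TOKENS + TOKENS_PER_TILE * tiles


def input_cost_usd(tokens: int, usd_per_million: float) -> float:
    """Convert a token count to dollars at a per-million input rate."""
    return tokens * usd_per_million / 1_000_000


for w, h in [(512, 512), (1024, 1024), (2048, 2048), (4000, 6000)]:
    high = openai_image_tokens(w, h)
    low = openai_image_tokens(w, h, low_detail=True)
    print(f"{w}x{h}: high={high} tokens (${input_cost_usd(high, 2.50):.4f}), low={low} tokens")
```

Running the estimate per image size before choosing a detail mode makes the trade-off explicit instead of leaving it to the first invoice.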

GPT-4o-mini has an unusual quirk: it bills far more tokens per image than GPT-4o (5,667 tokens per 512×512 tile in Azure's calculation), so the absolute cost of an image is roughly the same as on GPT-4o-2024-05-13 despite the cheaper text rate [4][5]. One widely cited report measured ~25M input tokens per 1,000 pages of document processing on GPT-4o-mini [16].

2.2 Claude (pixel-area formula)

Claude uses tokens ≈ (width × height) / 750, with auto-scaling if the long edge exceeds 1568 px or the image would exceed ~1,600 tokens [19][2]. Claude rejects images above 2000 px on an edge, which has caused real production failures when pipelines submitted unscaled App Store screenshots [20].

- 1092×1092 → ~1,590 tokens (near the cap)

- Typical "capped" image → ~1,333–1,600 tokens

- Cost at Haiku 4.5 ($1/M): ~$0.0016/image

- Cost at Sonnet 4.6 ($3/M): ~$0.0048/image

- Cost at Opus 4.6 ($5/M): ~$0.008/image
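The pixel-area rule and the per-image costs listed above can be reproduced with a short sketch. It assumes the auto-scaling can be approximated by clamping the estimate at 1,600 tokens; the actual resize logic is not modelled, and the function and dictionary names are illustrative.

```python
TOKEN_CAP = 1600  # approximate ceiling after auto-scaling, per the text above

# Input rates in USD per 1M tokens, taken from the pricing section
RATES = {"Haiku 4.5": 1.00, "Sonnet 4.6": 3.00, "Opus 4.6": 5.00}


def claude_image_tokens(width: int, height: int, cap: int = TOKEN_CAP) -> int:
    """Estimate tokens as (width * height) / 750, clamped at the cap.

    Assumption: clamping stands in for the provider's downscaling step.
    """
    return min(round(width * height / 750), cap)


def cost_per_image(tokens: int, usd_per_million: float) -> float:
    return tokens * usd_per_million / 1_000_000


print(claude_image_tokens(1092, 1092))  # near the cap
for model, rate in RATES.items():
    print(f"{model}: ${cost_per_image(TOKEN_CAP, rate):.4f} per capped image")
```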

Claude Sonnet is widely reported as the most resilient model for document extraction under image-quality degradation, which matters more than nominal token cost for invoices and forms [2].

2.3 Gemini (hybrid: flat-rate below 384 px, tiled above)

Gemini's rule: if both dimensions ≤ 384 px, 258 tokens flat; otherwise the image is tiled at min(width, height) / 1.5, and each tile = 258 tokens [21]. Empirically, a standard ~1024×1024 photograph lands around 560 tokens [2]. That makes Gemini vision input the cheapest of the three at comparable quality: on Gemini 2.5 Flash at $0.30/1M input, a typical image costs ~$0.00017, roughly 29× cheaper than Claude Sonnet (~$0.0048 for a capped image) and 11× cheaper than GPT-4o (~$0.0019 at $2.50/1M).

2.4 Image generation (output tokens)

Gemini 2.5 Flash Image (aka "Nano Banana") charges $30 per 1M output tokens, with each generated image fixed at 1,290 output tokens → $0.039/image [22][23]. Nano Banana Pro (Gemini 3 Pro Image) scales up to $0.134/image for standard and $0.24/image at 4K [24]. Third-party proxies undercut this: Kie.ai lists $0.020/image (49% cheaper) and laozhang.ai $0.025/image (36% cheaper) [25].

OpenAI's gpt-image-2 (inside GPT-5.5 omnimodal) is priced at $5–$30 per 1M tokens for image generation [26], while the older gpt-image-1 and DALL-E 3 sit around $0.04/image.

2.5 Back-of-envelope at volume

At 10,000 images/day on GPT-4o high-detail (765 tokens/image avg), monthly input over 30 days = 229.5M tokens → ≈$574/month just for image input at $2.50/M, before output, text context, or system prompts [2]. The same workload on Gemini 2.5 Flash (560 tokens/image avg, $0.30/M) is 168M tokens, or ~$50/month, a roughly 11× cost delta.
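A volume estimate like this is easy to recompute for any workload. The sketch below assumes a 30-day month and a constant average token count per image; the function name and the workload dictionary are illustrative.

```python
def monthly_image_input_cost(images_per_day: int,
                             tokens_per_image: int,
                             usd_per_million: float,
                             days: int = 30) -> tuple[int, float]:
    """Return (monthly input tokens, monthly USD) for image input only."""
    monthly_tokens = images_per_day * days * tokens_per_image
    return monthly_tokens, monthly_tokens * usd_per_million / 1_000_000


# Averages and rates quoted in this section
workloads = {
    "GPT-4o high-detail": (765, 2.50),
    "Gemini 2.5 Flash": (560, 0.30),
}

for name, (tokens_per_image, rate) in workloads.items():
    tokens, usd = monthly_image_input_cost(10_000, tokens_per_image, rate)
    print(f"{name}: {tokens / 1e6:.1f}M tokens/month, ${usd:,.2f}/month")
```

Output tokens, text context, and system prompts are deliberately left out, so the result is a floor rather than a full bill.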

3. Audio: The Batch-vs-Realtime Cliff

Audio billing looks simple — until you switch from batch transcription to realtime conversation.

3.1 Batch transcription

- OpenAI Whisper / GPT-4o Transcribe: $0.006 / minute [27][28].

- Deepgram Nova: ~$0.0077 / minute [2].

- Gemini (audio through Gemini Flash): billed as input tokens at 32 tokens per second = 1,920 tokens/minute [29]. At Gemini 2.5 Flash's audio input rate of ~$1.00/1M tokens, that's ~$0.0019/minute — ~3× cheaper than Whisper in pure dollar terms, and the transcript is delivered by a full LLM that can reason over the audio. Earlier Gemini 2.0 Flash documentation cited ~25 audio tokens/sec [30].

- Gemini 1.5 Flash (older): as low as $0.012/hour for audio-in, widely benchmarked as the cheapest speech pipeline in 2024–2025 [31].
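To compare token-billed audio with flat per-minute pricing, convert tokens per second into dollars per minute. This is a small sketch using the figures listed above; the 1,000-hour monthly workload is a hypothetical value chosen for illustration.

```python
GEMINI_AUDIO_TOKENS_PER_SEC = 32  # audio tokenization rate cited above


def token_billed_cost_per_minute(tokens_per_sec: float, usd_per_million: float) -> float:
    """Dollars per minute of audio when audio is billed as input tokens."""
    return tokens_per_sec * 60 * usd_per_million / 1_000_000


PER_MINUTE_USD = {
    "OpenAI Whisper / GPT-4o Transcribe": 0.006,
    "Deepgram Nova": 0.0077,
    "Gemini 2.5 Flash (audio tokens at $1.00/M)": token_billed_cost_per_minute(
        GEMINI_AUDIO_TOKENS_PER_SEC, 1.00
    ),
}

HOURS_PER_MONTH = 1_000  # hypothetical workload, not from the source

for name, per_minute in PER_MINUTE_USD.items():
    monthly = per_minute * 60 * HOURS_PER_MONTH
    print(f"{name}: ${per_minute:.5f}/min, ${monthly:,.2f}/month")
```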

3.2 Realtime audio (voice agents)

This is where the math collapses. OpenAI's Realtime API originally charged $100/1M input audio tokens, then cut prices to $40/1M input / $80/1M output audio tokens in Dec 2024 [32]. Cached audio input is $20/1M [33]. GPT-5.5's realtime variant (gpt-realtime-1.5) is listed at $32–$64/1M tokens [26].

Translated to minutes:

- Batch STT → LLM → TTS pipeline: $0.07–$0.22/minute total [2].

- Realtime LLM audio: $0.30 – $2+/minute depending on system-prompt size.

- A 1,000-word system prompt re-sent every turn adds ~$1.63/minute alone — more than the audio itself [2].

A 15-minute conversation on the Realtime API dropped ~30% in total cost after Nov 2024 caching updates [34], but it's still an order of magnitude more expensive than batch STT + chat completion. The architectural decision (realtime vs. batch) dominates everything else.

3.3 Accuracy-per-dollar

Word error rate roughly doubles as SNR drops from 15 dB to 5 dB; 95%-accurate models on clean audio fall below 70% in call-center environments [2]. Spectral-subtraction preprocessing can improve SNR by ~8 dB while increasing WER by 15% because it strips speech harmonics [2]. The practical win: pay slightly more for end-to-end models trained on noisy data, not for preprocessing.

4. Video: Where a Single Parameter Costs $780K/Year

Gemini's video API is the dominant option for native video understanding. It tokenizes at ~258 visual tokens per frame + 32 audio tokens per second [29][2]. The default sampling rate is 1 frame per second.

- 60-second video @ 1 FPS default: 60 frames × 258 + 60 × 32 = 17,400 tokens (~$0.022 on Gemini 2.5 Pro input).

- 60-second video @ 24 FPS override: 1,440 × 258 + 60 × 32 = 373,440 tokens → a 21.5× cost jump from one parameter [2].

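At 100 videos/day, the source puts the default vs. override difference at ~$104/day vs. $2,235/day, a swing of roughly **$780K/year** from a single FPS flag [2]. Those daily totals imply videos much longer than the 60-second example, but the ~21.5× ratio is the same. On the Gemini 2.5 Live API, one user calculated 258 video tokens/sec × 3600 = 0.93M tokens per hour for continuous sessions [35].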
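The frame-rate sensitivity follows directly from the per-frame and per-second token counts. Here is a minimal sketch, assuming the 258 and 32 token figures quoted above and simple rounding of the frame count; the function name is illustrative and the rate used is the Gemini 2.5 Pro input price from the pricing section.

```python
def gemini_video_tokens(duration_s: float,
                        fps: float = 1.0,
                        tokens_per_frame: int = 258,
                        audio_tokens_per_s: int = 32) -> int:
    """Visual tokens for the sampled frames plus audio tokens for the full duration."""
    frames = round(duration_s * fps)
    return frames * tokens_per_frame + round(duration_s * audio_tokens_per_s)


RATE_USD_PER_MILLION = 1.25  # Gemini 2.5 Pro input, from the pricing section

baseline = gemini_video_tokens(60, fps=1.0)
for fps in (0.1, 1.0, 24.0):
    tokens = gemini_video_tokens(60, fps=fps)
    usd = tokens * RATE_USD_PER_MILLION / 1_000_000
    print(f"{fps:>4} FPS: {tokens:>7} tokens, ${usd:.4f}, {tokens / baseline:.2f}x baseline")
```

Because audio tokens do not scale with FPS, the cost multiplier is slightly below the raw frame-rate multiplier.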

Mitigations that actually work in production:

- Content-aware frame selection (perceptual hashing + scene detection) cuts tokens 13–45% on typical content, up to 83% on demo videos with long static segments [2].

- Implicit context caching in Gemini (on by default since mid-2025) gives a 90% discount on cached tokens for repeat analysis. A 5-minute video: $0.18 first query → $0.018 per cached re-query [2].

- Lower FPS — 0.1 FPS is often sufficient for lectures, product demos, and static-camera content.
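Content-aware frame selection can be approximated with a simple perceptual hash. The sketch below uses an average hash over small grayscale thumbnails and keeps a frame only when it differs enough from the last kept frame. It is an illustration of the idea, not the pipeline from the cited source: it assumes frames are already decoded and downscaled (for example to 8×8), it does no separate scene detection, and the `min_distance` threshold is a hypothetical value that needs tuning.

```python
from typing import List, Optional, Sequence

Frame = Sequence[Sequence[int]]  # grayscale pixels 0-255, already downscaled


def average_hash(frame: Frame) -> int:
    """Pack one bit per pixel: 1 if the pixel is brighter than the frame mean."""
    pixels = [p for row in frame for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


def select_frames(frames: List[Frame], min_distance: int = 5) -> List[int]:
    """Return indices of frames whose hash differs enough from the last kept frame."""
    kept: List[int] = []
    last_hash: Optional[int] = None
    for i, frame in enumerate(frames):
        h = average_hash(frame)
        if last_hash is None or hamming(h, last_hash) >= min_distance:
            kept.append(i)
            last_hash = h
    return kept


# Toy clip: five identical frames, then a hard cut to three identical frames
scene_a = [[0] * 4 + [255] * 4 for _ in range(8)]
scene_b = [[255] * 4 + [0] * 4 for _ in range(8)]
clip = [scene_a] * 5 + [scene_b] * 3
print(select_frames(clip))  # indices of the frames worth sending
```

Only the selected frames would then be submitted, so token count scales with visual change rather than clip length.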

5. PDF Handling: Native vs. OCR-First

5.1 Claude native PDFs

Claude processes PDFs by extracting text and rendering each page as an image, feeding both to the model [36][37]. Each page typically consumes 1,500–3,000 tokens depending on density [36][38], with no extra fee beyond standard token rates. Limits: 100 pages on 200K-context models, 600 pages on 1M-context Sonnet 4.6 / Opus 4.6 [39]; 10 MB per file, up to 5 files per message [40]. Above 1,000 pages Claude processes text only, losing visual reasoning.

Cost example: a 50-page PDF at 2,250 tokens/page avg = 112,500 tokens → $0.34 on Sonnet 4.6, or $0.11 on Haiku 4.5. For 10,000 documents/month that's ~$3,375 on Sonnet, comparable to per-page dedicated-OCR pricing but with full reasoning included.
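The cost example generalizes to any page count and density. This short sketch assumes a uniform average token count per page and counts input tokens only; the function name is illustrative.

```python
def pdf_input_cost(pages: int, tokens_per_page: int, usd_per_million: float) -> tuple[int, float]:
    """Return (total input tokens, USD) for one native-PDF request."""
    tokens = pages * tokens_per_page
    return tokens, tokens * usd_per_million / 1_000_000


RATES = {"Sonnet 4.6": 3.00, "Haiku 4.5": 1.00}  # USD per 1M input tokens
DOCS_PER_MONTH = 10_000

for model, rate in RATES.items():
    # Low, average, and high page densities from the range quoted above
    for tokens_per_page in (1_500, 2_250, 3_000):
        tokens, usd = pdf_input_cost(50, tokens_per_page, rate)
        print(f"{model} @ {tokens_per_page} tok/page: {tokens} tokens, "
              f"${usd:.4f}/doc, ${usd * DOCS_PER_MONTH:,.0f}/month")
```

Sweeping the 1,500 to 3,000 range shows that page density alone moves the bill by 2×, which is worth measuring on a sample of real documents.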

5.2 GPT-4o/5 PDFs

OpenAI PDFs route through vision: each page is rendered as an image, tiled, and priced by the 85+170×n formula. A dense page rendered at 1024×1536 is 6 tiles, or 1,105 tokens. A 1,000-page batch ran ~25M input / 0.4M output tokens on GPT-4o-mini in one widely-cited benchmark [16], which works out to roughly $3.75 input + $0.24 output = ~$4/1,000 pages on mini.
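The batch figure is a plain input-plus-output calculation, sketched here with the GPT-4o-mini rates from the pricing section ($0.15 in / $0.60 out per 1M). The function name is illustrative.

```python
def batch_cost_usd(input_tokens: float, output_tokens: float,
                   in_rate_per_million: float, out_rate_per_million: float) -> float:
    """Total USD for a batch given token counts and per-million rates."""
    return (input_tokens * in_rate_per_million
            + output_tokens * out_rate_per_million) / 1_000_000


PAGES = 1_000
total = batch_cost_usd(25_000_000, 400_000, 0.15, 0.60)
print(f"${total:.2f} per {PAGES} pages, ${total / PAGES:.5f} per page")
```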

5.3 OCR-first vs. native LLM vision

For structured extraction (invoices, forms, receipts):

- LLM vision: $0.20 – $1.00 per document [2].

- Dedicated OCR APIs: $0.01 – $0.05 per page.

- Hybrid pipeline (OCR → LLM post-process) often achieves equivalent accuracy at 10–20× lower cost [2].

Reserve native LLM vision for layout reasoning, table interpretation, and tasks where visual context genuinely matters.

6. Prompt Caching: The Single Biggest Multimodal Lever

All three providers now support input caching with aggressive discounts [41][42]:

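- Anthropic Claude: cached reads at 10% of base (90% discount); writes at 125% (25% premium). At those multipliers a cached prefix pays for itself on its first re-use within the cache lifetime: one write plus one read costs 1.35× base, versus 2× uncached [42][10].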

- OpenAI: 50% discount on cached input tokens for the GPT-4o generation, and deeper on newer models: GPT-5.4 cached input is $0.25 vs. $2.50 base, a 90% discount [13]. Cached audio at $20/1M vs. $40/1M base.

- Google Gemini: implicit context caching active by default on 2.5 family; explicit caching offers up to 90% off cached tokens [2][43].

An arXiv evaluation across all three providers found prompt caching reduces API cost by 45–80% and TTFT by 13–31% on long-horizon agent tasks [44]. For multimodal workloads — where a 50-page PDF or 5-minute video dominates token count — caching flips the economics of re-query workflows (Q&A over the same document, iterative video QA, multi-turn voice agents).
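The effect of caching on a re-query workflow can be estimated from the write and read multipliers. The sketch below uses the Anthropic multipliers quoted above (1.25× write, 0.10× read) and assumes every request after the first hits the cache, i.e. all requests arrive within the cache lifetime; only the shared prefix is costed, and the function name is illustrative.

```python
def cached_vs_uncached(prefix_tokens: int,
                       requests: int,
                       usd_per_million: float,
                       write_mult: float = 1.25,
                       read_mult: float = 0.10) -> tuple[float, float]:
    """Return (uncached USD, cached USD) for repeated requests over one prefix.

    Assumption: the first request writes the cache and every later one reads it.
    """
    base = prefix_tokens * usd_per_million / 1_000_000
    uncached = requests * base
    cached = base * write_mult + max(requests - 1, 0) * base * read_mult
    return uncached, cached


# A 112,500-token PDF prefix on Sonnet 4.6 at $3/M input
for n in (1, 2, 5, 10):
    uncached, cached = cached_vs_uncached(112_500, n, 3.00)
    saving = 1 - cached / uncached
    print(f"{n:>2} requests: uncached ${uncached:.4f}, cached ${cached:.4f}, saving {saving:.0%}")
```

A single request is slightly more expensive with caching because of the write premium, so the lever only pays off when the same prefix is actually reused.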

7. Cross-Provider Cost Matrix (mid-2026)

Approximate cost per standard input (one image, one minute of audio or video, one 50-page PDF), blending published formulas with current pricing:

| Modality | GPT-4o / GPT-5 | Claude Sonnet 4.6 | Gemini 2.5 Flash |
|---|---|---|---|
| 1024×1024 image (high detail) | ~$0.0019 / img | ~$0.0048 / img | ~$0.00017 / img |
| 1024×1024 image (low detail) | ~$0.0002 / img | n/a (no mode) | n/a |
| 1-min audio (batch) | $0.006 (Whisper) | n/a (text and vision input only) | ~$0.002 |
| 1-min audio (realtime) | ~$0.30–$2+ | n/a | ~$0.10+ (Live API) |
| 1-min video @ 1 FPS | via frame submit | via frame submit | ~$0.022 native (at the 2.5 Pro input rate) |
| 50-page PDF (dense) | ~$0.20–$0.40 vision | ~$0.34 native | ~$0.05–$0.10 |

Gemini 2.5 Flash consistently wins on raw multimodal input cost; Claude wins on document-extraction accuracy under degradation; GPT-4o/5 wins on general reasoning but pays a premium for vision tiles.

8. Takeaways

- Always compute multimodal cost from token formulas, not marketing pages. A 4000×6000 document is 16K+ tokens on GPT-4o, so factor that in before shipping.

- Default to low-detail image mode for classification; it's a ~9× cost reduction for a one-parameter change.

- Never default to 24 FPS for video without testing content-aware sampling first; 0.1 FPS + scene detection is usually enough.

- Price realtime vs. batch audio separately. The two differ by 10–30×; choose the architecture before the integration.

- Enable prompt caching immediately for any workload re-using system prompts, long PDFs, or videos — it's 45–90% savings for ~0 engineering effort.

- Route by modality. Gemini 2.5 for native video/audio, Claude Sonnet for document extraction, GPT-5 for mixed reasoning. No single model is cheapest and best at everything.

The teams that avoid billing shocks didn't find a secret; they ran the arithmetic in a spreadsheet before writing the first integration line [2].

References

[1] costgoat.com. Gemini API Pricing Calculator & Cost Guide (Apr 2026). https://costgoat.com/pricing/gemini-api

[2] Tian Pan. Multimodal LLMs in Production: The Cost Math Nobody Runs Upfront (Apr 2026). https://tianpan.co/blog/2026-04-10-multimodal-llms-production-cost-math

[3] The Rogue Marketing. OpenAI API Pricing October 2025: GPT-5, Realtime & Image Generation. https://the-rogue-marketing.github.io/openai-api-pricing-comparison-october-2025/

[4] Microsoft Learn. Azure OpenAI GPT-4o Mini image input token limits. https://learn.microsoft.com/en-us/answers/questions/2126187/azure-open-ai-gpt-4o-mini

[5] OpenAI Community. GPT-4o-mini Vision API: High Prompt Token Usage. https://community.openai.com/t/gpt-4o-mini-vision-api-high-prompt-token-usage/1149227

[6] MetaCTO. Full Anthropic Cost Breakdown (Mar 2026). https://www.metacto.com/blogs/anthropic-api-pricing-a-full-breakdown-of-costs-and-integration

[7] aifreeapi.com. Gemini API Pricing Guide 2025. https://aifreeapi.com/en/posts/gemini-api-pricing-guide

[8] ai-primer.com. Gemini pricing tier breakdown. https://www.ai-primer.com/engineer/tools/gemini

[9] thesyntaxdiaries.com. What's New in GPT-5 (2025). https://thesyntaxdiaries.com/whats-new-in-gpt-5

[10] claude.com / docs.anthropic.com. Claude API Pricing. https://docs.anthropic.com/en/docs/about-claude/pricing

[11] OpenAI Community. How do I calculate image tokens in GPT-4 Vision? https://community.openai.com/t/how-do-i-calculate-image-tokens-in-gpt4-vision/492318

[12] OpenAI Community. Vision Pricing calculator: inaccurate resizing/rounding. https://community.openai.com/t/vision-pricing-calculator-inaccurate-resizing-rounding-vs-api-costs/1281361

[13] OpenAI. API Pricing page. https://openai.com/api/pricing/

[14] PE Collective. GPT-4o pricing tracker. https://pecollective.com/tools/gpt-4o-pricing/

[15] OpenAI. GPT-4o mini: advancing cost-efficient intelligence. https://openai.com/index/gpt-4o-mini-advancing-cost-efficient-intelligence/

[16] Hacker News. GPT-4o-mini image request token benchmark. https://news.ycombinator.com/item?id=41051038

[17] cursor-ide.com. ChatGPT API Prices in July 2025. https://www.cursor-ide.com/blog/chatgpt-api-prices

[18] aifreeapi.com. Gemini API Pricing & Quotas 2026. https://aifreeapi.com/en/posts/gemini-api-pricing-and-quotas

[19] Anthropic. Vision docs (width × height / 750 formula). https://console.anthropic.com/docs/en/build-with-claude/vision

[20] GitHub. Claude Code image-dimension bug (2000 px limit). https://github.com/anthropics/claude-code/issues/15730

[21] Google AI Developers Forum. Gemini Pro image pricing by tile. https://discuss.ai.google.dev/t/gemini-pro-image-pricing-by-tile-or-fixed/40839

[22] Google Developers Blog. Introducing Gemini 2.5 Flash Image. https://developers.googleblog.com/es/introducing-gemini-2-5-flash-image/

[23] Vertex AI docs. Gemini 2.5 Flash Image: 1,290 tokens per image. https://docs.cloud.google.com/vertex-ai/generative-ai/docs/models/gemini/2-5-flash-image

[24] yingtu.ai. Nano Banana API Pricing 2025. https://yingtu.ai/en/blog/nano-banana-api-pricing

[25] fastgptplus.com. Cheapest Gemini Image API (Nov 2025). https://fastgptplus.com/en/posts/cheapest-gemini-image-api

[26] lushbinary.com. GPT-5.5 Omnimodal API Guide. https://lushbinary.com/blog/gpt-5-5-omnimodal-api-text-image-audio-video-guide/

[27] OpenAI Community. Audio Model Pricing. https://community.openai.com/t/audio-model-pricing-is-unclear/1149806

[28] OpenAI Community. Realtime API vs Whisper pricing. https://community.openai.com/t/realtime-api-vs-whisper-pricing/968409

[29] geminibyexample.com. Calculating multimodal input tokens (32 tok/sec audio). https://geminibyexample.com/027-calculate-input-tokens/

[30] Google AI forum. Gemini 2.0 Flash Audio Input Pricing. https://discuss.ai.google.dev/t/gemini-2-0-flash-audio-input-pricing/64734

[31] Kenneth Tibow. Gemini > Whisper?? ($0.012/hour audio). https://ktibow.github.io/blog/geminiaudio/

[32] OpenAI Community. WebRTC gpt-4o-audio cost: $100→$40/1M token cut. https://community.openai.com/t/webrtc-gpt-4o-audio-cost-per-minute-of-conversation/1069092

[33] OpenAI. Introducing the Realtime API (cached pricing). https://openai.com/index/introducing-the-realtime-api/

[34] OpenAI Community. New Realtime API voices and cache pricing. https://community.openai.com/t/new-realtime-api-voices-and-cache-pricing/998238

[35] Google AI forum. Gemini Live 2.5 token counting for long video. https://discuss.ai.google.dev/t/gemini-live-2-5-token-counting/99245

[36] Towards Data Science. Introducing the New Anthropic PDF Processing API. https://towardsdatascience.com/introducing-the-new-anthropic-pdf-processing-api-0010657f595f

[37] datastudios.org. Claude PDF Reading Capabilities and Limits. https://www.datastudios.org/post/claude-ai-pdf-uploading-pdf-reading-capabilities-text-extraction-accuracy-layout-support-and-fil

[38] The Decoder. Claude 3.5 Sonnet PDF analysis (1,500–3,000 tokens/page). https://the-decoder.com/anthropics-claude-3-5-sonnet-adds-pdf-analysis-capabilities-including-embedded-images/

[39] Anthropic. PDF Support docs (page limits). https://docs.claude.com/en/docs/build-with-claude/pdf-support

[40] datastudios.org. Claude and PDF Documents Technical Overview. https://www.datastudios.org/post/claude-and-pdf-documents-technical-complete-overview

[41] redresscompliance.com. AI API Cost Reduction: Prompt Caching. https://redresscompliance.com/ai-api-cost-reduction-prompt-caching-routing.html

[42] Anthropic. Prompt caching with Claude. https://www.anthropic.com/news/prompt-caching

[43] Artificial Analysis. Prompt Caching: Cost & Performance Across Providers. https://artificialanalysis.ai/models/caching

[44] arXiv 2601.06007. An Evaluation of Prompt Caching for Long-Horizon Agentic Tasks. https://arxiv.org/html/2601.06007v1
