Model Routing and Cascades: Cheap-First, Expensive-Fallback Architectures for Production LLM Cost Savings (2025–2026)

Executive Summary

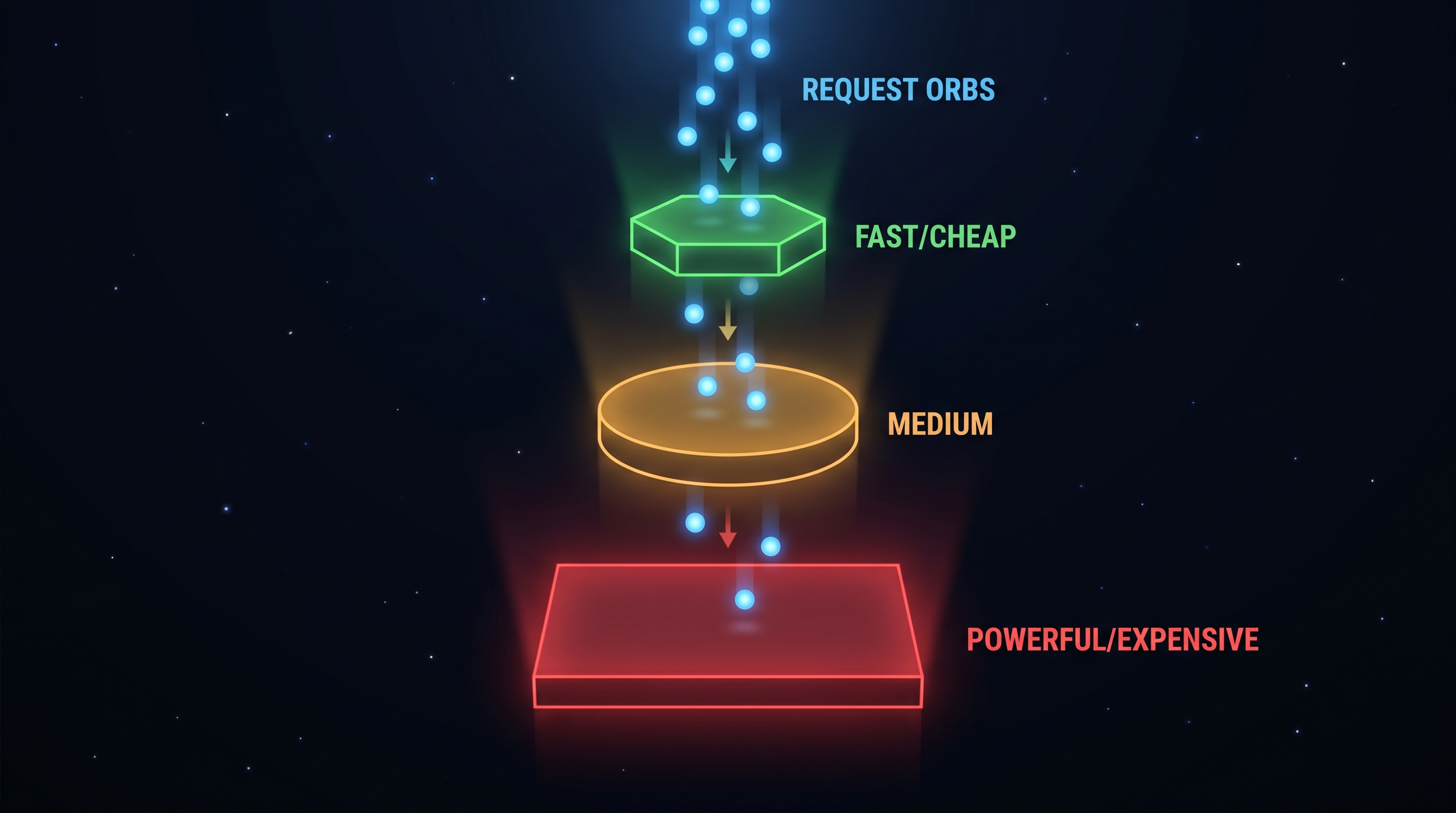

Model routing and cascading have emerged as the single most tractable lever for controlling LLM inference costs in production. The thesis is simple: a price gap that reaches two orders of magnitude or more separates frontier models from efficient ones. Claude Opus 4.6 costs $5/$25 per million input/output tokens versus $1/$5 for Claude Haiku 4.5, GPT-5.2 Pro costs $21/$168 versus $0.05/$0.40 for GPT-5 nano, and DeepSeek V3.2 costs $0.28/$0.42 [1][2]. Yet for 60–70% of production traffic (classification, extraction, short answers, and routine summarization) the cheap tier produces output that is functionally indistinguishable from the expensive one [3]. Routing simple queries to cheap models and escalating only the hard ones captures that gap. Reported production savings cluster in the 30–70% range for mixed workloads, with benchmark-level numbers reaching 85% (RouteLLM on MT Bench) and 98% (FrugalGPT on classification) under favorable conditions [4][5][6].

This document covers the academic foundations (FrugalGPT, RouteLLM), the production gateway ecosystem (Portkey, LiteLLM, OpenRouter, Martian, vLLM Semantic Router), concrete cost math with 2025–2026 pricing, and the failure modes that separate teams that capture the savings from teams that introduce silent quality regressions.

1. The Price Gap That Makes Routing Worthwhile

As of late 2025/early 2026, the pricing landscape has settled into a clear four-tier shape, with a roughly 100× spread between the cheapest and most expensive models [1][2][7]:

| Tier | Representative Models | Input $/M tokens | Output $/M tokens |
|---|---|---|---|
| Premium | Claude Opus 4.6, GPT-5.2 Pro, o3-pro | $5–$21 | $25–$168 |
| Mid | Claude Sonnet 4.6, GPT-5.4, Gemini 3 Pro | $2–$3 | $12–$15 |
| Budget | Claude Haiku 4.5, GPT-5 mini, Gemini 2.0 Flash | $0.25–$1 | $1.25–$5 |
| Ultra-budget | DeepSeek V3.2, Gemini 2.0 Flash-Lite, Mistral Nemo | $0.02–$0.28 | $0.04–$0.42 |

DeepSeek V3.2 notably halved its prices in late 2025 and offers a 90% cache-hit discount that can bring effective input cost to $0.028/M [1]. The practical implication: if even 30% of queries can be served by a $0.28/M model instead of a $15/M model, the cost of that slice falls by a factor of roughly 50 (about 98%), and for typical chatbot, extraction, and classification workloads the shiftable fraction is far higher than 30%.

2. FrugalGPT: The Cascade Foundation

Chen, Zaharia, and Zou's FrugalGPT (Stanford, 2023; updated TMLR 2024) formalized the LLM cascade pattern and set the ceiling numbers the rest of the field benchmarks against [5][6]. The cascade strategy sends a query to a list of LLM APIs in ascending price order; each response is scored by a lightweight scorer model, and the pipeline stops as soon as the scorer's confidence exceeds a per-task threshold. Only the residual hard queries reach the most expensive model.
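The cascade loop described above can be sketched in a few lines. This is a minimal illustration and not the FrugalGPT implementation: the `Tier` class, the flat `cost_per_call` figures, and the toy scorer are all hypothetical stand-ins chosen so the example runs without network access.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Tier:
    name: str
    cost_per_call: float            # illustrative flat cost, not real pricing
    generate: Callable[[str], str]  # wraps one LLM API (stubbed below)


def cascade(query: str, tiers: List[Tier],
            scorer: Callable[[str, str], float],
            threshold: float) -> Tuple[str, str, float]:
    """Try tiers in ascending price order and stop at the first answer whose
    score clears the threshold. The most expensive tier is accepted as-is."""
    ordered = sorted(tiers, key=lambda t: t.cost_per_call)
    spent = 0.0
    for i, tier in enumerate(ordered):
        answer = tier.generate(query)
        spent += tier.cost_per_call  # every attempted tier is billed
        is_last = i == len(ordered) - 1
        if is_last or scorer(query, answer) >= threshold:
            return tier.name, answer, spent
    raise ValueError("tiers must not be empty")


# Toy stand-ins (assumptions): a cheap model that sometimes gives up,
# a strong model, and a scorer that distrusts the answer "unsure".
cheap = Tier("cheap", 0.001, lambda q: "positive" if "good" in q else "unsure")
strong = Tier("strong", 0.100, lambda q: "negative")
toy_scorer = lambda q, a: 0.2 if a == "unsure" else 0.9

easy = cascade("good earnings", [strong, cheap], toy_scorer, threshold=0.8)
hard = cascade("mixed signals", [strong, cheap], toy_scorer, threshold=0.8)
```

In this toy run the easy query stops at the cheap tier, while the hard query is billed on both tiers before the strong model's answer is returned.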

Headline FrugalGPT results on HEADLINES, OVERRULING, and COQA benchmarks [5][6]:

- Cost savings range 50%–98% while matching the best individual LLM API's accuracy.

- On the HEADLINES 4-way news classification task, FrugalGPT matched GPT-4 accuracy at $0.6 vs. $33.1 per test set — a 98.3% cost reduction.

- On OVERRULING, FrugalGPT achieved a 1% accuracy gain while reducing costs by 73% versus pure GPT-4.

- In aggregate, FrugalGPT reduced cost by ~80% while improving accuracy by ~1.5% on mixed tasks.

The non-obvious finding is that a cascade can beat the frontier model, because a cheap-first cascade exploits model diversity. Different models fail on different queries; when a strong scorer accepts the cheap model's answer where it is correct and escalates only where it is wrong, the cascade's accuracy can exceed that of any single component.

FrugalGPT's limitation is task specificity: the scorer has to be trained (or carefully prompted) per task, so the gains attenuate on open-ended generation where "correctness" is hard to score. This is exactly the gap RouteLLM targets.

3. RouteLLM: Learned Preference-Based Routing

RouteLLM (UC Berkeley / LMSYS, ICLR 2025) reframes cascading as a pre-call classification problem: train a BERT-scale router (~110M parameters) on Chatbot Arena preference data to predict, before any LLM call, whether a cheap model will suffice [4][8][9]. It is the canonical open-source reference implementation — a drop-in replacement for OpenAI's client that routes between a strong and weak model based on a tunable cost threshold.
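The pre-call decision can be illustrated with a short sketch. It does not use the RouteLLM package: `route`, `toy_win_prob`, and the model names are hypothetical, and the keyword heuristic merely stands in for a learned router trained on preference data.

```python
from typing import Callable


def route(query: str,
          strong_win_prob: Callable[[str], float],
          threshold: float,
          strong_model: str = "strong-model",
          weak_model: str = "weak-model") -> str:
    """Pick a model before any LLM call is made.

    strong_win_prob estimates how likely the strong model's answer would be
    preferred over the weak model's. Raising the threshold sends more
    traffic to the weak model (cheaper, but riskier).
    """
    return strong_model if strong_win_prob(query) >= threshold else weak_model


def toy_win_prob(query: str) -> float:
    # Placeholder heuristic, NOT a learned router: longer queries and
    # reasoning keywords are treated as harder.
    score = min(len(query.split()) / 50.0, 0.6)
    if any(k in query.lower() for k in ("prove", "derive", "debug")):
        score += 0.4
    return min(score, 1.0)


queries = ["What is the capital of France?",
           "Prove that the sum of two even integers is even."]
choices = [route(q, toy_win_prob, threshold=0.3) for q in queries]
```

The threshold is the single cost knob: sweeping it over a sample of real traffic traces out the cost/quality curve from which an operating point is chosen.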

Four router architectures are provided: similarity-weighted ranking, matrix factorization, BERT classifier, and causal LLM classifier. Benchmark numbers against GPT-4 (strong) and Mixtral 8x7B (weak) [4][8][9]:

- MT Bench: up to 85% cost reduction while maintaining 95% of GPT-4 quality.

- MMLU: 45% cost reduction (with 2% augmentation from validation data, the best causal LLM router needs only 54% GPT-4 calls to hit 95% of GPT-4 quality).

- GSM8K: 35% cost reduction at the same quality bar.

- Overall best routers achieve 3.66× cost savings (≈73% reduction) against GPT-4.

- RouteLLM matches commercial routers (Martian, Unify AI) on MT Bench while being >40% cheaper [4].

The paper estimates average GPT-4 cost at $24.7/M tokens and Mixtral 8x7B at $0.24/M tokens — a ~100× ratio that makes even imperfect routing lucrative [8]. The router itself adds only 10–30ms latency and <0.4% extra cost [3][8].

At ICLR 2025, the matrix factorization router demonstrated 95% of GPT-4 quality while routing only 26% of queries to the expensive model; with data augmentation that dropped to 14%, a 75% cost reduction relative to a random-routing baseline [4][10].

4. Production Numbers from Real Deployments

Academic benchmarks over-represent easy cases. Production deployments reveal a tighter but still substantial band of savings [3][11][12][13]:

- Particula Tech three-tier deployment (2026): A client spending $38K/month on LLM APIs dropped to $15.2K/month — a 60% reduction with no measurable quality decline. Traffic distribution was 62% Haiku-class ($0.25/M), 27% mid-tier ($3/M), 11% premium ($15/M), with 8% of cheap-model responses getting re-routed upward via confidence-based escalation. Implementation took four weeks [11].

- Portkey (delivery platform case study): Smart fallback across Anthropic, OpenAI, and Vertex rescued nearly 500K failed requests and saved over $500,000 in LLM spend at tens of millions of requests per quarter, across 1,000+ engineers and 350+ workspaces at 99.99% uptime [14].

- TokenMix.ai aggregate data: Mixed-workload deployments see 45–60% savings. One SaaS chatbot routing 60% of simple queries to Gemini Flash instead of GPT-4o saved 87% on monthly API costs [12].

- ROUTENLP pilot (8 weeks, ~5K queries/day): 58% inference cost reduction, 91% response acceptance, p99 latency dropping from 1,847ms → 387ms. Six-task benchmark: 40–85% cost reduction (volume-weighted mean 62%) while retaining 96–100% quality on structured tasks and 96–98% on generation [9].

- Markaicode 3-tier worked example (1M requests/month): Single-model GPT-4o costs $5,000/month; 3-tier router (60% cheap / 35% standard / 5% complex) drops it to $2,400/month — a 52% reduction [13].

- Martian financial-services agent: A 50-step workflow's end-to-end success rate jumped 6× (from 5.98% to 35.99%) when routing replaced pure GPT-4, because per-step quality gains compound multiplicatively across long agent chains [15].

- Industry baseline: Teams running multi-model architectures routinely report 30–70% cost reductions as the default expectation [3][11][12].
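The compounding effect in the Martian example can be made concrete. The sketch below assumes every step succeeds independently with the same probability, which is a simplification; the per-step rates it derives are implied by the reported end-to-end figures and are not numbers stated in the source.

```python
def end_to_end_success(per_step: float, steps: int) -> float:
    """Probability that all steps succeed, assuming independent steps."""
    return per_step ** steps


def implied_per_step(end_to_end: float, steps: int) -> float:
    """Invert the relationship: per-step rate implied by an end-to-end rate."""
    return end_to_end ** (1.0 / steps)


STEPS = 50
before = implied_per_step(0.0598, STEPS)  # reported end-to-end rate, pure GPT-4
after = implied_per_step(0.3599, STEPS)   # reported end-to-end rate, with routing
print(f"implied per-step success: {before:.3f} -> {after:.3f}")
```

Under that independence assumption, a per-step improvement from roughly 94.5% to roughly 98.0% is enough to produce the reported 6× end-to-end gain over 50 steps.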

5. The Production Gateway Ecosystem

Three categories of infrastructure have matured around this pattern in 2025–2026; Section 5.4 then breaks the savings down by mechanism.

5.1 Open-source routers and proxies

- RouteLLM — reference implementation from UC Berkeley / LMSYS, drop-in OpenAI client replacement, includes pretrained routers [4].

- LiteLLM — the de-facto Python ecosystem choice; proxy + SDK with built-in fallback chains; reported 30–50% savings for typical deployments [12][13].

- vLLM Semantic Router (Red Hat / IBM, Sept 2025) — an open-source Envoy ext_proc router that classifies by intent and selectively applies reasoning. On MMLU-Pro with Qwen3 30B, it delivered +10.2 pp accuracy, −48.5% token usage, −47.1% latency versus direct vLLM inference. Knowledge-intensive domains (business, economics) saw >20 pp accuracy improvements [16][17].

- OpenRouter — managed aggregator over 1600+ models with a 5% markup; simplest entry point but markup dominates at scale ($5K/month for a team spending $100K) [18].

5.2 Enterprise gateways

- Portkey — processed 2+ trillion tokens across 650+ organizations in 2024; observed peak failure rates >20% from individual providers during incidents, underscoring the reliability motivation for multi-provider routing [14][19].

- Bifrost (Maxim AI) — benchmarked at 11µs overhead at 5K RPS, the lowest-latency gateway measured in 2025 comparison studies; semantic caching reduces inference costs 40–60% without quality degradation [18].

- Helicone, TensorZero — observability-first and GitOps-first variants of the same pattern.

5.3 Commercial routers

- Martian and Unify AI — routing-as-a-service; RouteLLM benchmarks show the open-source routers match their quality while being >40% cheaper, but commercial offerings bundle prompt optimization, automatic prompt selection per model, and SLAs that matter for agentic workloads [4][15].

5.4 Cost-savings breakdown by mechanism

Across the gateway ecosystem, the 30–70% savings band comes from a stack of compounding techniques rather than a single trick [18][19]:

- Query-complexity routing (the core cheap-first mechanism): 30–60%.

- Semantic caching (serve cached responses for queries with similar meaning): an additional 40–60% reduction in inference cost [18].

- Provider arbitrage (DeepSeek V3.2 @ $0.28/M vs. GPT-5.2 @ $1.75/M for comparable quality on many tasks): 50–90% on the shifted slice.

- Reasoning-mode gating (only invoke chain-of-thought when needed): −48.5% tokens per vLLM Semantic Router data [16].

- Failover / reliability: not a cost saving per se, but rescues revenue — Portkey's data shows individual providers hitting >20% failure rates during peak incidents [19].
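One way to reason about how these mechanisms stack is to treat each as removing a fraction of whatever spend remains after the previous one. That is an assumption made here for illustration (real mechanisms overlap and apply to different traffic slices), and the input fractions below are hypothetical rather than measured.

```python
from functools import reduce
from typing import Iterable


def stacked_savings(fractions: Iterable[float]) -> float:
    """Combined saving when each mechanism removes a fraction of the spend
    left over by the previous ones (assumes the mechanisms are independent)."""
    remaining = reduce(lambda acc, s: acc * (1.0 - s), fractions, 1.0)
    return 1.0 - remaining


# Illustrative inputs only: 40% from routing, then 20% of the remainder
# from caching.
combined = stacked_savings([0.40, 0.20])
print(f"combined saving: {combined:.0%}")
```

The point of the model is that savings do not add linearly: 40% followed by 20% yields 52%, not 60%, because the second mechanism only acts on spend the first one left behind.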

6. Concrete Cost Math: A 10M-Request Workload

Take a hypothetical chatbot serving 10M requests/month at ~1K tokens per request, split 3:1 between input and output. That is 10B tokens/month: 7.5B input and 2.5B output. All prices below are per million tokens.

Baseline: all traffic on Claude Sonnet 4.6 ($3/$15 per MTok) [2][7]:

- Input: 7.5B tokens × $3 = $22,500

- Output: 2.5B tokens × $15 = $37,500

- Total: $60,000/month

Two-tier router: 70% Haiku 4.5 ($1/$5), 30% Sonnet 4.6:

- Haiku: (5.25B × $1) + (1.75B × $5) = $5.25K + $8.75K = $14,000

- Sonnet: (2.25B × $3) + (0.75B × $15) = $6.75K + $11.25K = $18,000

- Total: $32,000/month — 47% savings

Three-tier router: 50% DeepSeek V3.2 ($0.28/$0.42), 40% Haiku 4.5, 10% Sonnet 4.6:

- DeepSeek: (3.75B × $0.28) + (1.25B × $0.42) = $1,050 + $525 = $1,575

- Haiku: (3B × $1) + (1B × $5) = $3K + $5K = $8,000

- Sonnet: (0.75B × $3) + (0.25B × $15) = $2.25K + $3.75K = $6,000

- Total: $15,575/month — 74% savings
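The arithmetic above can be reproduced with a short script, which also makes it easy to try other traffic mixes. The model keys and the `monthly_cost` helper are names invented for this sketch; the prices and workload parameters are the ones quoted in this section.

```python
# Prices in USD per million tokens (input, output), as quoted in this section.
PRICES = {
    "sonnet-4.6": (3.00, 15.00),
    "haiku-4.5": (1.00, 5.00),
    "deepseek-v3.2": (0.28, 0.42),
}

REQUESTS = 10_000_000
TOKENS_PER_REQUEST = 1_000
INPUT_SHARE = 0.75  # 3:1 input-to-output split


def monthly_cost(mix: dict) -> float:
    """mix maps model name -> share of traffic; shares must sum to 1."""
    if abs(sum(mix.values()) - 1.0) > 1e-9:
        raise ValueError("traffic shares must sum to 1")
    total_mtok = REQUESTS * TOKENS_PER_REQUEST / 1_000_000  # millions of tokens
    cost = 0.0
    for model, share in mix.items():
        price_in, price_out = PRICES[model]
        blended = INPUT_SHARE * price_in + (1 - INPUT_SHARE) * price_out
        cost += total_mtok * share * blended
    return cost


baseline = monthly_cost({"sonnet-4.6": 1.0})
two_tier = monthly_cost({"haiku-4.5": 0.7, "sonnet-4.6": 0.3})
three_tier = monthly_cost(
    {"deepseek-v3.2": 0.5, "haiku-4.5": 0.4, "sonnet-4.6": 0.1})

for name, cost in [("baseline", baseline), ("two-tier", two_tier),
                   ("three-tier", three_tier)]:
    print(f"{name}: ${cost:,.0f} ({1 - cost / baseline:.0%} savings)")
```

Running it yields $60,000, $32,000, and $15,575 per month, matching the figures above.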

The cheap-first cascade variant, where every query is tried on DeepSeek first and a scorer escalates ~30% of responses, can be even more favorable in theory. It only works, however, when the scorer is reliable: every escalated query is billed on both the cheap and the expensive tier, so an over-cautious or mis-calibrated scorer erodes the savings, while an over-confident one lets bad answers through. In practice, production teams prefer pre-call routing because the cost of a mis-scored cascade (paying both tiers) can exceed the savings.
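The double-billing trade-off can be quantified with a small model. The sketch assumes the cascade escalates from DeepSeek V3.2 directly to Sonnet 4.6 (the text does not specify the target), that both tiers consume the same token counts, and that scorer cost is negligible unless supplied; the function names are hypothetical.

```python
def cascade_cost_per_mtok(cheap: float, expensive: float,
                          escalation_rate: float, scorer: float = 0.0) -> float:
    """Expected cost when every query hits the cheap tier first and a
    fraction is re-run on the expensive tier (so it is billed twice)."""
    return cheap + scorer + escalation_rate * expensive


def break_even_escalation(cheap: float, expensive: float,
                          scorer: float = 0.0) -> float:
    """Escalation rate above which the cascade costs more than sending
    everything straight to the expensive tier."""
    return 1.0 - (cheap + scorer) / expensive


# Blended $/Mtok at the 3:1 input:output mix used in this section.
DEEPSEEK = 0.75 * 0.28 + 0.25 * 0.42   # 0.315
SONNET = 0.75 * 3.00 + 0.25 * 15.00    # 6.00

at_30 = cascade_cost_per_mtok(DEEPSEEK, SONNET, escalation_rate=0.30)
limit = break_even_escalation(DEEPSEEK, SONNET)
print(f"cost at 30% escalation: ${at_30:.3f}/Mtok, break-even at {limit:.1%}")
```

Under these assumptions the expected cost is dominated by the escalation term, so each additional percentage point of escalation costs far more than the cheap tier itself; that is why scorer calibration matters more than the cheap model's price.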

7. When Cascades Beat Routers, and Vice Versa

- Pre-call routers (RouteLLM, Bifrost, LiteLLM): lower latency (10–30ms overhead), predictable cost, but wrong-way routing produces quality regressions because you never get the expensive model's answer.

- Cascades (FrugalGPT, ROUTENLP): higher ceiling accuracy (can exceed frontier models), deterministic quality floor via the scorer, but latency adds on escalation and mis-calibrated thresholds double-bill.

- Hybrid (ROUTENLP's "closed loop" with targeted distillation and automatic router retraining): the 2025 state of the art. Reports 2× more cost reduction than random distillation and statistically significant gains over RouteLLM (cost ratio 0.159 vs. 0.246, p<0.001) [9].

The failure mode to avoid is what a 2026 Tianpan post calls "optimizing cost without tracking quality": the dashboard shows "95% quality maintained" while contract analysis accuracy drops from 94% to 79%, because the aggregate metric averages over query types with very different quality requirements [3][10]. The fix is quality-aware routing with per-task evaluation, not just a single quality threshold.
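A small example shows how an aggregate metric can hide exactly this kind of regression. The 94% to 79% drop is taken from the text; the traffic shares and the score for the remaining traffic are invented for illustration, as are the function names.

```python
from typing import Dict, List, Tuple

TaskStats = Dict[str, Tuple[float, float]]  # task -> (traffic_share, quality)


def aggregate_quality(per_task: TaskStats) -> float:
    """Traffic-weighted mean of per-task quality scores."""
    return sum(share * quality for share, quality in per_task.values())


def regressions(before: TaskStats, after: TaskStats,
                max_drop: float = 0.02) -> List[str]:
    """Tasks whose quality fell by more than max_drop after routing."""
    return sorted(task for task in before
                  if before[task][1] - after[task][1] > max_drop)


# Hypothetical shares: contract analysis is 5% of traffic.
before = {"contract_analysis": (0.05, 0.94), "everything_else": (0.95, 0.96)}
after = {"contract_analysis": (0.05, 0.79), "everything_else": (0.95, 0.96)}

print(f"aggregate: {aggregate_quality(before):.3f} -> "
      f"{aggregate_quality(after):.3f}")
print("regressed tasks:", regressions(before, after))
```

With these illustrative shares the aggregate stays above 95% while the low-volume, high-stakes task loses 15 points, which is why the alerting check operates per task rather than on the weighted mean.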

8. Practical Guidance

- Start with 2–3 tiers, not 10. The marginal savings from a fourth tier rarely justify the operational complexity [10].

- Instrument quality before you route. A routing dashboard that doesn't track per-task acceptance is worse than no routing, because silent regressions churn users [3][10].

- Set fallback chains, not just routes. Individual providers hit >20% failure rates during incidents; transparent failover to a secondary provider is a reliability win and a cost win when the failover target is cheaper [19].

- Use prompt caching aggressively. Anthropic's prompt caching and DeepSeek's 90% cache-hit discount compound with routing: a cache-hit DeepSeek call at $0.028/M input is roughly 100× cheaper than uncached Sonnet 4.6 input at $3/M [1][2].

- Calibrate thresholds on your traffic, not public benchmarks. RouteLLM's 85% reduction number is from MT Bench; on MMLU and GSM8K the same framework yields 35–45%. Your traffic distribution, not the paper's, determines the realistic ceiling [4][8].

- Break even at ~$1K/month. Below that, the engineering investment exceeds the savings. Above that, routing almost always pays back within weeks [3][11].
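The fallback-chain advice above can be sketched without committing to any particular gateway. The helper and exception names are hypothetical, and the providers are stub functions; a real deployment would wrap actual SDK calls and catch provider-specific error types rather than a bare `Exception`.

```python
from typing import Callable, List, Sequence, Tuple


class AllProvidersFailed(RuntimeError):
    """Raised when every provider in the chain has errored."""


def call_with_fallback(
    prompt: str,
    providers: Sequence[Tuple[str, Callable[[str], str]]],
) -> Tuple[str, str]:
    """Try providers in order and return (provider_name, response) from the
    first one that succeeds."""
    errors: List[str] = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # narrow this to provider errors in production
            errors.append(f"{name}: {exc}")
    raise AllProvidersFailed("; ".join(errors))


# Stub providers (assumptions) simulating an incident on the primary.
def flaky_primary(prompt: str) -> str:
    raise TimeoutError("simulated provider incident")


def healthy_secondary(prompt: str) -> str:
    return f"echo: {prompt}"


used, reply = call_with_fallback(
    "ping", [("primary", flaky_primary), ("secondary", healthy_secondary)])
```

Ordering the chain is itself a routing decision: placing a cheaper provider second turns an outage into a cost saving, at the price of whatever quality gap separates the two models.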

9. Conclusion

The cheap-first / expensive-fallback pattern is now the default architecture for serious production LLM deployments. The open-source tooling (RouteLLM, LiteLLM, vLLM Semantic Router) is mature, the academic foundations (FrugalGPT's 50–98% cost savings with accuracy gains, RouteLLM's 85% on MT Bench) are solid, and production case studies consistently land in the 30–70% savings band for mixed workloads [3][4][5][11][12]. With 100× price spreads between frontier and efficient models still persisting in 2026 — and DeepSeek, Gemini Flash-Lite, and Mistral Nemo pushing the floor even lower — the arbitrage opportunity is not closing. What separates the teams that capture it from the teams that introduce quality regressions is not the routing logic itself; it's the monitoring infrastructure, quality-aware thresholds, and per-task evaluation that surround it.

References

[1] IntuitionLabs, "LLM API Pricing Comparison (2025): OpenAI, Gemini, Claude," Oct 31, 2025. https://intuitionlabs.ai/articles/llm-api-pricing-comparison-2025

[2] AI Cost Check, "AI API Pricing Guide 2026: Cheapest Models, Best Defaults, and Long-Context Picks," Feb 10, 2026. https://aicostcheck.com/blog/ai-api-pricing-guide-2026

[3] NeuralRouting.io, "What Is an LLM Router? The Engineering Guide," Apr 10, 2026. https://neuralrouting.io/blog/what-is-an-llm-router

[4] LMSYS Org, "RouteLLM: An Open-Source Framework for Cost-Effective LLM Routing," Jul 1, 2024. https://lmsys.org/blog/2024-07-01-routellm/

[5] Chen, Zaharia, Zou, "FrugalGPT: How to Use Large Language Models While Reducing Cost and Improving Performance," arXiv:2305.05176 / TMLR 2024. http://arxiv.org/pdf/2305.05176

[6] Stanford FutureData, "FrugalGPT GitHub repository," 2023–2024. https://github.com/stanford-futuredata/FrugalGPT

[7] APIScout, "LLM API Pricing 2026: GPT-5 vs Claude vs Gemini," Mar 16, 2026. https://apiscout.dev/blog/llm-api-pricing-comparison-2026

[8] Ong et al., "RouteLLM: Learning to Route LLMs with Preference Data," arXiv:2406.18665 (ICLR 2025). https://arxiv.org/pdf/2406.18665

[9] OpenReview, "ROUTENLP: Closed-Loop Cost-Aware Routing" (anonymous submission). https://openreview.net/pdf?id=H9KBJXHoA8

[10] Tianpan, "Quality-Aware Model Routing: Why Optimizing for Cost Alone Fails," Apr 14, 2026. https://tianpan.co/blog/2026-04-14-quality-aware-model-routing

[11] Particula Tech, "LLM Model Routing: Cheap First, Expensive Only When Needed," Feb 26, 2026. https://particula.tech/blog/llm-model-routing-cheap-first-reduce-api-costs

[12] TokenMix, "How to Use Multiple AI Models: Routing, Failover, and the Unified API Approach (2026)," Apr 13, 2026. https://tokenmix.ai/blog/how-to-use-multiple-ai-models

[13] Markaicode, "The LLM Router Pattern: Dynamically Switching Models by Task Complexity and Cost," Mar 2, 2026. https://markaicode.com/llm-router-pattern-model-switching/

[14] Portkey, "Build AI agents with Portkey's MCP client — delivery platform case study." https://portkey.ai/case-studies/leading-delivery-platform

[15] Martian, "Routing for AI Agents — financial services case study." https://www.withmartian.com/solutions/routing-for-ai-agents

[16] Wang et al., "When to Reason: Semantic Router for vLLM," OpenReview/HuggingFace, Sept 2025. https://huggingface.co/papers/2510.08731

[17] Red Hat Developer, "vLLM Semantic Router: Improving efficiency in AI reasoning," Sept 11, 2025. https://developers.redhat.com/articles/2025/09/11/vllm-semantic-router-improving-efficiency-ai-reasoning

[18] Maxim AI, "Top 5 LLM Gateways in 2025," Dec 4, 2025. https://www.getmaxim.ai/articles/top-5-llm-gateways-in-2025-the-definitive-guide-for-production-ai-applications/

[19] Portkey, "LLMs in Prod 2025: Insights from 2 Trillion+ Tokens," Jan 21, 2025. https://portkey.ai/blog/report
