How Tokens Are Consumed

Tokens are the fundamental unit of measurement in large language models. Every character of text you send, every word the model generates, every image you attach — all of it is metered in tokens. Understanding how tokens are produced, counted, and billed is essential for anyone building on LLM APIs in 2026.

1. Tokenization: From Text to Numbers

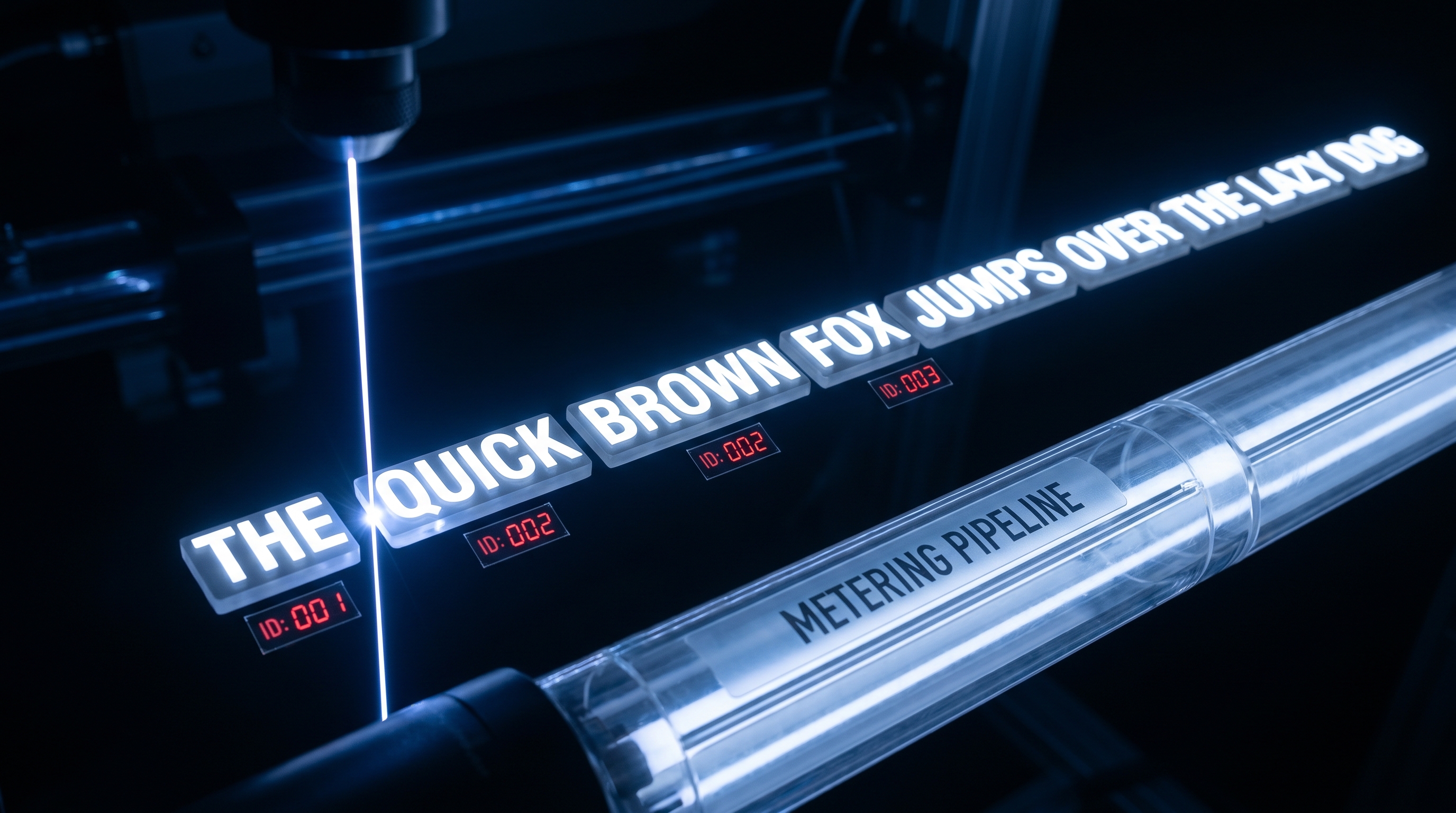

LLMs do not process raw text. Before any inference begins, a tokenizer splits input into smaller units called tokens and maps each to a unique integer ID. A token roughly corresponds to 3–4 characters of English text, or about 0.75 words. Code, non-Latin scripts, and emoji consume significantly more tokens per character [1][2].
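Exact counts require the model's own tokenizer, but the rules of thumb above can be turned into a quick estimator. The sketch below is a rough heuristic, not a real tokenizer. The function name estimate_tokens is illustrative, and the 4-characters and 0.75-words ratios come from the paragraph above. The UTF-8 byte count is included only to hint at why byte-level tokenizers tend to spend more tokens on non-Latin text: Japanese kana take 3 bytes each in UTF-8, and many emoji take 4.

```python
def estimate_tokens(text: str) -> dict[str, int]:
    """Rough token estimates for English text (heuristics, not a real tokenizer)."""
    chars = len(text)
    words = len(text.split())
    return {
        "by_chars": round(chars / 4),             # ~4 characters per token
        "by_words": round(words / 0.75),          # ~0.75 words per token
        "utf8_bytes": len(text.encode("utf-8")),  # raw input to a byte-level tokenizer
    }


for sample in ["The quick brown fox jumps over the lazy dog", "こんにちは", "👋"]:
    print(repr(sample), estimate_tokens(sample))
```

For "こんにちは" the character heuristic gives about 1 token even though the string is 15 UTF-8 bytes, which is exactly where the English rule of thumb stops being reliable.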

Byte Pair Encoding (BPE)

BPE is the dominant tokenization algorithm in modern LLMs. The algorithm works in four steps [1][3]:

- Initialize with individual bytes (0–255), giving universal coverage of any Unicode input.

- Count how often each adjacent pair of tokens appears across the training corpus.

- Merge the most frequent pair into a single new token (e.g., t + h → th).

- Repeat until the vocabulary reaches a target size, typically 32,000 to 100,000+ tokens.
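The four steps can be sketched in a few lines of Python. This is a toy trainer under simplifying assumptions: it learns from a single string rather than a corpus of pre-split words, it breaks frequency ties by first occurrence, and the names train_bpe and merge_pair are illustrative rather than any library's API.

```python
from collections import Counter


def merge_pair(ids: list[int], pair: tuple[int, int], new_id: int) -> list[int]:
    """Replace every non-overlapping occurrence of `pair` with `new_id`, left to right."""
    out, i = [], 0
    while i < len(ids):
        if i + 1 < len(ids) and (ids[i], ids[i + 1]) == pair:
            out.append(new_id)
            i += 2
        else:
            out.append(ids[i])
            i += 1
    return out


def train_bpe(text: str, num_merges: int) -> tuple[dict[tuple[int, int], int], list[int]]:
    ids = list(text.encode("utf-8"))        # step 1: base vocabulary is the 256 byte values
    merges: dict[tuple[int, int], int] = {}
    next_id = 256
    for _ in range(num_merges):             # step 4: repeat up to the target size
        pairs = Counter(zip(ids, ids[1:]))  # step 2: count adjacent pairs
        if not pairs:
            break
        best = max(pairs, key=pairs.get)    # step 3: most frequent pair (first seen wins ties)
        merges[best] = next_id
        ids = merge_pair(ids, best, next_id)
        next_id += 1
    return merges, ids


merges, ids = train_bpe("the the the", num_merges=2)
print(merges)
print(ids)
```

On "the the the", the first merge joins t and h (bytes 116 and 104) into ID 256, mirroring the t + h → th example, and the second merge joins that new token with e, so each "the" ends up as a single ID.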

The result: common English words like "hello" become a single token, while less frequent or invented words decompose into subword pieces (e.g., "tokenization" → "token" + "ization"). This subword approach eliminates the "unknown token" problem of older word-level tokenizers while keeping sequences compact [1][3].
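Applying learned merges to new text uses them in the order they were learned: repeatedly merge the adjacent pair with the lowest merge rank until no ranked pair remains. The merge table below is hand-written and hypothetical, chosen so the output reproduces the two examples above; a real tokenizer learns tens of thousands of merges over bytes rather than characters, and bpe_encode is an illustrative name.

```python
def bpe_encode(word: str, merges: list[tuple[str, str]]) -> list[str]:
    """Greedy BPE encoding: always apply the earliest-learned (lowest-rank) merge first."""
    ranks = {pair: rank for rank, pair in enumerate(merges)}
    parts = list(word)
    while len(parts) > 1:
        candidates = [p for p in zip(parts, parts[1:]) if p in ranks]
        if not candidates:
            break  # no learned merge applies; remaining pieces stay as they are
        best = min(candidates, key=ranks.__getitem__)
        merged, i = [], 0
        while i < len(parts):
            if i + 1 < len(parts) and (parts[i], parts[i + 1]) == best:
                merged.append(parts[i] + parts[i + 1])
                i += 2
            else:
                merged.append(parts[i])
                i += 1
        parts = merged
    return parts


# Hypothetical merge table, listed in the order the merges were "learned".
MERGES = [
    ("t", "o"), ("k", "e"), ("to", "ke"), ("toke", "n"),
    ("i", "z"), ("a", "t"), ("at", "i"), ("ati", "o"), ("atio", "n"), ("iz", "ation"),
    ("h", "e"), ("l", "l"), ("he", "ll"), ("hell", "o"),
]

print(bpe_encode("hello", MERGES))
print(bpe_encode("tokenization", MERGES))
print(bpe_encode("hellish", MERGES))
```

A word the table was not built for, such as "hellish", shows the fallback behavior: the merges carry it as far as "hell", and the rest stays as single characters, so nothing is ever unknown.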

tiktoken

OpenAI's tiktoken is the standard tokenizer library for GPT-family models; its core is written in Rust for speed, with a thin Python wrapper on top. Key encodings include cl100k_base (GPT-4, GPT-3.5-Turbo) and o200k_base (GPT-4o). tiktoken operates on UTF-8 bytes after a regex pre-split that prevents merges from crossing word boundaries. This is why "don't" splits as ["don", "'t"] rather than merging the apostrophe with adjacent letters [1][4].
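The effect of the pre-split can be reproduced with Python's standard re module. This is a simplified approximation of the GPT-2-style pattern, not tiktoken's actual regex: the real cl100k_base and o200k_base patterns use Unicode property classes such as \p{L} that the built-in re module does not support, and they handle digits and whitespace differently. PRE_SPLIT and pre_split are illustrative names.

```python
import re

# Simplified, approximate GPT-2-style pre-tokenizer (NOT tiktoken's exact pattern).
PRE_SPLIT = re.compile(
    r"'(?:s|t|re|ve|m|ll|d)"   # English contractions become their own chunk
    r"| ?[^\W\d_]+"            # optional leading space + a run of letters
    r"| ?\d+"                  # optional leading space + a run of digits
    r"| ?(?:[^\s\w]|_)+"       # optional leading space + punctuation/symbols
    r"|\s+(?!\S)"              # whitespace not followed by a word, so the last space stays with the next word
    r"|\s+"                    # any remaining whitespace
)


def pre_split(text: str) -> list[str]:
    """Split text into chunks; BPE merges are then applied within each chunk only."""
    return PRE_SPLIT.findall(text)


print(pre_split("I don't know"))
```

Because the contraction alternative matches "'t" as its own chunk, no merge can ever join the apostrophe to "don", and concatenating the chunks always gives back the original string.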

SentencePiece

SentencePiece, used by models such as T5, Gemma, and the first two generations of LLaMA, takes a different approach: it operates directly on raw Unicode text without assuming whitespace-delimited words. This makes it better suited to multilingual and noisy corpora, including languages such as Japanese, Chinese, and Thai where spaces do not separate words. SentencePiece supports both BPE and Unigram algorithms. The Unigram variant starts with a large vocabulary and iteratively removes the tokens that contribute least to overall likelihood, a probabilistic approach in contrast to BPE's greedy, frequency-based merging [2][3][5].

The practical difference: tiktoken encodes text to UTF-8 bytes and then merges; SentencePiece works on Unicode code points and, when byte fallback is enabled, breaks characters outside its vocabulary into UTF-8 byte tokens [3].
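The Unigram idea and byte fallback can be combined in one small sketch. Assume a trained Unigram model is just a table of piece log-probabilities (the table below is invented for illustration). Segmentation is then a Viterbi search for the highest-scoring split of the text, and every character also has a heavily penalized path that emits its UTF-8 bytes as <0xXX> pieces, so byte pieces win only where no reasonably likely vocabulary piece is available. The "▁" space marker follows SentencePiece's convention, but the function name, the penalty value, and the vocabulary are assumptions, and the training loop that prunes the vocabulary is not shown.

```python
import math


def unigram_segment(text: str, log_probs: dict[str, float],
                    byte_penalty: float = -20.0) -> list[str]:
    """Highest-scoring segmentation under a Unigram model (Viterbi), with byte fallback."""
    text = "▁" + text.replace(" ", "▁")  # SentencePiece-style space marker (U+2581)
    n = len(text)
    max_len = max(len(piece) for piece in log_probs)
    best = [-math.inf] * (n + 1)  # best[i]: best total log-prob for text[:i]
    back = [None] * (n + 1)       # back[i]: (start index, pieces covering text[start:i])
    best[0] = 0.0
    for end in range(1, n + 1):
        # Option 1: a vocabulary piece ending at position `end`.
        for start in range(max(0, end - max_len), end):
            score = best[start] + log_probs.get(text[start:end], -math.inf)
            if score > best[end]:
                best[end], back[end] = score, (start, [text[start:end]])
        # Option 2: byte fallback for the single character ending at `end`.
        score = best[end - 1] + byte_penalty
        if score > best[end]:
            raw = text[end - 1].encode("utf-8")
            best[end], back[end] = score, (end - 1, [f"<0x{b:02X}>" for b in raw])
    # Walk the back-pointers from the end to recover the pieces.
    pieces: list[str] = []
    end = n
    while end > 0:
        start, chunk = back[end]
        pieces = chunk + pieces
        end = start
    return pieces


VOCAB = {"▁hello": -5.0, "▁he": -4.0, "llo": -4.5, "▁": -3.0, "▁world": -6.0}
print(unigram_segment("hello world", VOCAB))
print(unigram_segment("hello \u2603", VOCAB))  # U+2603 (snowman) is not in VOCAB
```

Here "▁hello" beats "▁he" + "llo" because one piece at -5.0 outscores two pieces totaling -8.5, which is the probabilistic trade-off Unigram makes; the snowman is not in the vocabulary, so it falls back to its three UTF-8 bytes.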

2. Input Tokens vs. Output Tokens

Every API call has two billable components [6][7][8]:

- Input tokens: Everything you send to the model — system prompt, user message, conversation history, tool definitions, retrieved documents, and images.

- Output tokens: Everything the model generates back — the completion text, tool calls, and (for reasoning models) internal chain-of-thought tokens.

Output tokens are universally more expensive because generation is sequential and autoregressive (each token depends on all previous tokens), while input can be processed in parallel. Across all major providers in 2026, output tokens cost 3–5× more than input tokens [7][8]:

| Model | Input (per 1M tokens) | Output (per 1M tokens) | Context Window |
|---|---|---|---|
| GPT-4o | $2.50 | $10.00 | 128K |
| GPT-4o mini | ~$0.15 | ~$0.60 | 128K |
| Claude 3.5/4 Sonnet | $3.00 | $15.00 | 200K |
| Gemini 2.0 Flash | ~$0.15 | ~$0.60 | 1M |
| GPT-4.6 (2026) | $5.00 | $25.00 | 128K |

Prices as of mid-2026; confirm on provider pricing pages. [6][9][10]

This asymmetry means that verbose output is the most expensive part of any API call. A system generating long responses burns money on the costliest token class [8].
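The asymmetry is easy to see with a small cost function. This sketch assumes simple linear per-token pricing with no caching or batch discounts; Pricing and request_cost are hypothetical names, and the GPT-4o rates are the list prices from the table above, which may change.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_m: float   # USD per 1M input tokens
    output_per_m: float  # USD per 1M output tokens


def request_cost(p: Pricing, input_tokens: int, output_tokens: int) -> float:
    """Cost in USD of one call under linear per-token pricing."""
    return (input_tokens * p.input_per_m + output_tokens * p.output_per_m) / 1_000_000


GPT_4O = Pricing(input_per_m=2.50, output_per_m=10.00)

# Equal token counts, very unequal spend: output is billed at 4x the input rate here.
cost = request_cost(GPT_4O, input_tokens=2_000, output_tokens=2_000)
output_share = request_cost(GPT_4O, 0, 2_000) / cost
print(f"${cost:.4f} total, {output_share:.0%} of it from output")
```

With 2,000 tokens each way, the output side accounts for 80% of the bill at these rates, so trimming response length saves more per token than trimming the prompt.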

3. System Tokens, Tool Tokens, and Hidden Overhead

Not all tokens in a request are visible in the user's message:

- System prompt tokens: The system message that sets model behavior. In production agents, this is typically 500–2,000 tokens. It is sent with every request and billed as input [6].

- Conversation history tokens: If you send the last 20 turns of conversation as context, that adds 3,000–10,000 input tokens per call [6].

- Tool/function definition tokens: When using function calling or tool-use APIs, the JSON schemas for available tools are serialized into the prompt. A set of 10–20 tools can easily add 1,000–3,000 tokens of overhead per request.

- Reasoning tokens: Models like OpenAI's o1/o3 and Claude's extended thinking produce internal chain-of-thought tokens that are billed as output but may not be shown to the user. These can multiply output token counts by 2–10× compared to standard completions.

All of these accumulate silently. A "simple" chatbot request that looks like 50 tokens of user text may actually consume 5,000+ tokens once system prompts, history, and tool definitions are included.
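The arithmetic behind that 5,000+ figure, and the way resent history adds up over a conversation, can be sketched as follows. The component sizes are illustrative values picked from the ranges above, the history model (a fixed number of tokens per prior exchange, all resent on every call) is a simplifying assumption, and the function names are hypothetical.

```python
def request_input_tokens(system: int, tools: int, history: int, user: int) -> int:
    """Everything sent with one call is billed as input."""
    return system + tools + history + user


def conversation_input_tokens(system: int, tools: int, per_exchange: int,
                              user: int, calls: int) -> int:
    """Total input billed over `calls` requests when each call resends all prior exchanges."""
    return sum(
        request_input_tokens(system, tools, history=per_exchange * k, user=user)
        for k in range(calls)
    )


# One request: 1,000-token system prompt, 1,000 tokens of tool schemas,
# 3,000 tokens of history, and a 50-token user message.
print(request_input_tokens(system=1_000, tools=1_000, history=3_000, user=50))

# Twenty calls where each prior exchange adds ~300 tokens of history.
print(conversation_input_tokens(system=1_000, tools=1_000, per_exchange=300, user=50, calls=20))
```

The single request comes to 5,050 input tokens even though the user typed 50. Over 20 calls the conversation bills 98,000 input tokens, of which the user's own messages account for only 1,000.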

4. Prompt Caching: The 50–90% Discount

Prompt caching is one of the most impactful cost optimizations available in 2026. When the beginning of a prompt matches a recently seen prefix, providers can reuse the cached computation and charge a reduced rate [11][12]:

- OpenAI: Cached input tokens cost $1.25/1M for GPT-4o, a 50% discount on the standard $2.50/1M input price. Caching is applied automatically to repeated prefixes in prompts of 1,024 tokens or more [6][11].

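- Anthropic: Claude offers up to 90% savings on cached prompt prefixes (cache reads are billed at 10% of the base input price), with caching enabled explicitly by marking cache breakpoints with cache_control in the request [12].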

- Google: Gemini provides context caching for long documents with similar discount tiers.

The key insight: structure your prompts so that the static prefix (system prompt, tool definitions, reference documents) comes first and the variable part (user query) comes last. This maximizes cache hit rates. For agentic workloads that make many calls with the same system prompt, caching alone can cut input-token costs by 50–90% [12].
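Why ordering matters can be shown with a toy model of prefix caching. This sketch treats a prompt as a list of token stand-ins and assumes the cache covers exactly the longest common prefix with the previous request, billed at the GPT-4o rates quoted above ($2.50 and $1.25 per 1M). Real providers add details such as minimum prompt lengths and cache-block granularity that are ignored here, and the helper names are hypothetical.

```python
def shared_prefix_len(prev: list[str], curr: list[str]) -> int:
    """Number of leading tokens two requests have in common."""
    n = 0
    for a, b in zip(prev, curr):
        if a != b:
            break
        n += 1
    return n


def input_cost(total: int, cached: int, price_per_m: float, cached_price_per_m: float) -> float:
    """USD cost when `cached` of `total` input tokens hit the cache."""
    return ((total - cached) * price_per_m + cached * cached_price_per_m) / 1_000_000


static = [f"static_{i}" for i in range(8_000)]  # system prompt, tools, reference docs
query_a = [f"a_{i}" for i in range(2_000)]      # first user query
query_b = [f"b_{i}" for i in range(2_000)]      # next user query

static_first = shared_prefix_len(static + query_a, static + query_b)
query_first = shared_prefix_len(query_a + static, query_b + static)

for label, cached in [("static first", static_first), ("query first", query_first)]:
    cost = input_cost(10_000, cached, price_per_m=2.50, cached_price_per_m=1.25)
    print(f"{label}: {cached} cached tokens, ${cost:.4f}")
```

With the static block first, 8,000 of 10,000 tokens are cached and the input bill drops from $0.025 to $0.015. That 40% saving is below the headline 50% because the discount applies only to the cached portion; putting the query first caches nothing.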

5. Multimodal Token Costs

Images and audio are converted to token equivalents before processing:

- Images: OpenAI's GPT-4o bills images by resolution and detail level. In low-detail mode the image is processed at 512×512 and costs a flat 85 tokens. In high-detail mode the image is divided into 512×512 tiles, each costing ~170 tokens, plus a base of 85 tokens, so a typical high-resolution image consumes 765–1,105 tokens depending on the number of tiles [9].

- Audio: OpenAI's audio input/output models bill audio at token-equivalent rates, typically higher than text.

- Video: Gemini processes video by sampling frames and converting each to tokens. A minute of video can consume tens of thousands of tokens.

Multimodal inputs are particularly expensive because they inflate the input token count dramatically. A single high-res image attached to a short text prompt can double or triple the total input tokens.
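The tile arithmetic for GPT-4o images can be written out directly. This sketch assumes the scaling steps OpenAI describes for high-detail mode (fit the image within 2048×2048, then scale so the shortest side is 768 px) and additionally assumes small images are never scaled up. It reproduces the 765- and 1,105-token endpoints above, but the function name and the no-upscale rule are assumptions, and other models use different constants.

```python
import math

BASE_TOKENS = 85   # flat per-image cost; the entire cost in low-detail mode
TILE_TOKENS = 170  # cost per 512x512 tile in high-detail mode


def gpt4o_image_tokens(width: int, height: int, detail: str = "high") -> int:
    if detail == "low":
        return BASE_TOKENS
    w, h = float(width), float(height)
    # Step 1: fit within a 2048x2048 square, preserving aspect ratio.
    if max(w, h) > 2048:
        scale = 2048 / max(w, h)
        w, h = w * scale, h * scale
    # Step 2: scale down so the shortest side is 768 px (assumption: never scale up).
    if min(w, h) > 768:
        scale = 768 / min(w, h)
        w, h = w * scale, h * scale
    tiles = math.ceil(w / 512) * math.ceil(h / 512)
    return BASE_TOKENS + TILE_TOKENS * tiles


print(gpt4o_image_tokens(1024, 1024))  # 4 tiles after scaling to 768x768
print(gpt4o_image_tokens(2048, 4096))  # 6 tiles after scaling to 768x1536
print(gpt4o_image_tokens(4096, 8192, detail="low"))
```

Attached to a hypothetical 500-token text prompt, a single 765-token image brings input to 1,265 tokens, about 2.5 times the text-only count.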

6. Context Window Accounting

Every model has a hard context window — the maximum total tokens (input + output) it can handle in a single request [1][3]:

- GPT-4o: 128K tokens (roughly 96,000 English words at ~0.75 words per token, shared with the output)

- Claude 3.5/4 Sonnet: 200K tokens

- Gemini 2.0 Pro: 1M tokens

- Gemini 2.0 Flash: 1M tokens

The context window is shared between input and output. If you send 120K tokens of input to GPT-4o, only ~8K tokens remain for the model's response. Teams that do not count tokens tend to discover this limit in production, during incidents [1].
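A pre-flight check makes this failure visible before the request is sent. The sketch assumes you already have an input token count from the model's tokenizer; output_budget is a hypothetical helper, and the optional max_output_cap stands in for a per-model output limit that some providers impose on top of the context window, with its value taken from the provider's documentation.

```python
from typing import Optional


def output_budget(context_window: int, input_tokens: int,
                  max_output_cap: Optional[int] = None) -> int:
    """Tokens left for the response, since input and output share one window."""
    remaining = context_window - input_tokens
    if remaining <= 0:
        raise ValueError(
            f"input of {input_tokens:,} tokens does not fit a {context_window:,}-token window"
        )
    return remaining if max_output_cap is None else min(remaining, max_output_cap)


print(output_budget(128_000, 120_000))                       # the GPT-4o example above
print(output_budget(128_000, 10_000, max_output_cap=4_096))  # hypothetical per-model cap
```

Raising an error instead of returning zero forces the caller to trim history or documents before sending, rather than receiving a truncated or rejected response.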

Practical accounting: by the ~0.75 words-per-token rule of thumb, a 250-word passage comes to roughly 330 tokens. A 50-page PDF might consume 30,000–50,000 tokens, and a full codebase dump can easily exceed 100K tokens. The 2026 pricing spread runs from $0.15/1M tokens (Gemini Flash input) to $75/1M tokens (frontier model output), a 500× range that makes token accounting a first-class engineering concern [7][10].

Key Takeaways

- Tokenizers (BPE/tiktoken/SentencePiece) convert text to integer sequences; token counts vary by language, content type, and encoding.

- Output tokens cost 3–5× more than input tokens across all providers.

- Hidden overhead from system prompts, tool definitions, conversation history, and reasoning tokens can dominate total consumption.

- Prompt caching delivers 50–90% savings on repeated prefixes — structure prompts accordingly.

- Multimodal inputs (images, audio, video) inflate token counts substantially.

- Context windows are measured in tokens and shared between input and output; exceeding them is a production failure mode.

References

[1] Selva Prabhakaran, "How LLM Tokenization Works: Build a BPE Tokenizer." https://machinelearningplus.com/gen-ai/build-bpe-tokenizer/

[2] DigitalOcean, "LLM Tokenizers Simplified: BPE, SentencePiece, and More." https://www.digitalocean.com/community/conceptual-articles/llm-tokenizers-bpe-sentencepiece-custom-vs-pretrained

[3] fast.ai / Andrej Karpathy, "Let's Build the GPT Tokenizer." https://www.fast.ai/posts/2025-10-16-karpathy-tokenizers

[4] machinelearningplus, "tiktoken vs HuggingFace Tokenizers: Benchmark Guide." https://machinelearningplus.com/gen-ai/tiktoken-vs-huggingface-tokenizers/

[5] MyEngineeringPath, "Tokenization Guide — BPE, SentencePiece & Token Counting (2026)." https://myengineeringpath.dev/genai-engineer/tokenization/

[6] mem0.ai, "Claude, Gemini & OpenAI Compared." https://mem0.ai/blog/llm-api-cost-breakdown-claude-gemini-openai-compared

[7] wring.co, "LLM Inference Cost Optimization." https://wring.co/blog/llm-inference-cost-optimization

[8] ATXP, "How LLM Token Pricing Works: A Developer's Guide (2026)." https://atxp.ai/blog/how-llm-token-pricing-works

[9] LangCopilot, "Official GPT-4o Pricing (2026)." https://langcopilot.com/llm-pricing/openai/gpt-4o

[10] Vibe Coder Blog, "AI API Costs: OpenAI, Anthropic, Google." https://blog.vibecoder.me/ai-api-costs-openai-anthropic-google-budget

[11] OpenAI, "Prompt Caching in the API." https://openai.com/index/api-prompt-caching/

[12] AI Superior, "LLM Cost Optimization Strategies 2026." https://aisuperior.com/llm-cost-optimization-strategies-2026/