How LLMs Work Under the Hood

Overview

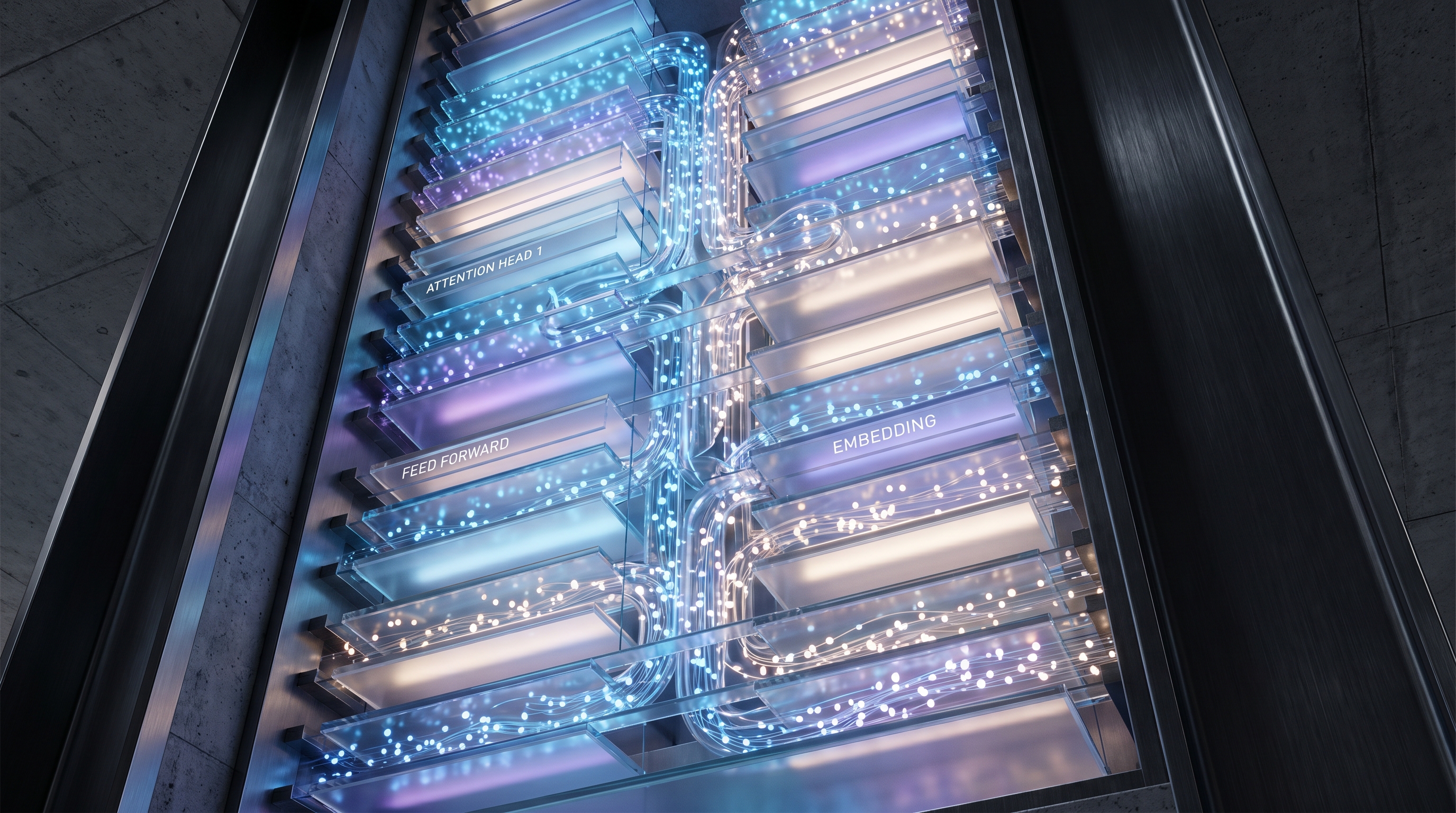

Large Language Models (LLMs) such as GPT-4o, Claude, Gemini, Llama 4, DeepSeek-V4, and Qwen all share a common lineage: the Transformer architecture introduced in 2017's Attention Is All You Need. What has changed dramatically since then — and especially through 2025–2026 — is not the core skeleton but everything around it: how text is tokenized, how attention is computed, how models are scaled through sparse Mixture-of-Experts (MoE), how they are aligned with human intent, and how inference is squeezed for every last FLOP using KV caches, speculative decoding, PagedAttention, and test-time compute scaling [1][2][5].

This document walks the stack from the bottom up: tokenization → embeddings → attention → the Transformer block → training (pretraining, SFT, RLHF/DPO) → inference optimization → the 2026 frontier (MoE at scale, reasoning models, linear and hybrid attention).

flowchart LR
    A[Raw Text] --> B[Tokenizer<br/>BPE / Byte-level]
    B --> C[Token Embeddings + RoPE]
    C --> D[Transformer Block × N<br/>Attention + MoE FFN]
    D --> E[LM Head / Softmax]
    E --> F[Next-Token Distribution]
    F -->|autoregressive| C

1. Tokenization: From Bytes to Tokens

Before a model sees anything, raw UTF-8 text is chopped into tokens. Nearly every frontier model in 2026 uses some variant of Byte-Pair Encoding (BPE): starting from individual bytes, the most frequent adjacent pair of symbols is merged repeatedly until a vocabulary of ~100K–200K tokens is built. GPT-4o uses ~200K tokens; Llama 3 uses a 128K tiktoken-based BPE vocabulary (Llama 2 used a 32K SentencePiece one); DeepSeek-V3/V4 uses ~129K [2][11].
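The merge loop described above can be sketched in a few lines. This is a toy illustration only: the function names `train_bpe`, `merge_pair`, and `encode` are made up for this example, and production tokenizers add regex pre-tokenization, integer token IDs, and far faster data structures.

```python
from collections import Counter

def merge_pair(seq, left, right):
    """Replace each non-overlapping occurrence of (left, right) with left + right."""
    out, i = [], 0
    while i < len(seq):
        if i + 1 < len(seq) and seq[i] == left and seq[i + 1] == right:
            out.append(left + right)
            i += 2
        else:
            out.append(seq[i])
            i += 1
    return out

def train_bpe(text, num_merges):
    """Learn up to num_merges merges, starting from raw UTF-8 bytes."""
    seq = [bytes([b]) for b in text.encode("utf-8")]
    merges = []
    for _ in range(num_merges):
        pairs = Counter(zip(seq, seq[1:]))
        if not pairs:
            break
        (left, right), count = pairs.most_common(1)[0]
        if count < 2:
            break  # no pair repeats, so another merge would not compress anything
        merges.append((left, right))
        seq = merge_pair(seq, left, right)
    return merges, seq

def encode(text, merges):
    """Tokenize new text by replaying the learned merges in training order."""
    seq = [bytes([b]) for b in text.encode("utf-8")]
    for left, right in merges:
        seq = merge_pair(seq, left, right)
    return seq

merges, tokens = train_bpe("low low low lower", num_merges=10)
print(tokens)
print(encode("lower slow", merges))
```

Because the base alphabet is all 256 byte values, any input string can be encoded; unseen text simply falls back to shorter tokens.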

Tokenization matters far more than it looks:

- Throughput bottleneck. Crusoe and NVIDIA Dynamo's 2026 fastokens Rust BPE implementation delivers a 9.1× average speedup over HuggingFace tokenizers and up to 40% faster time-to-first-token (TTFT) on long-context agentic workloads; tokenization had quietly become a CPU bottleneck on H100-class inference [11].

- Safety and hallucinations. Vocabulary quality affects jailbreak resistance and numerical reasoning; the infamous "SolidGoldMagikarp" glitch-token class stems from tokens present in the vocabulary but essentially unseen in training [11].

- Tokenizer-free models. Byte-level approaches (ByT5, Byte Language Models, Meta's Byte Latent Transformer) skip the vocabulary entirely, processing raw bytes through patch-based or hierarchical architectures with dynamic compression. They trade slight throughput loss for script-agnostic, open-vocabulary robustness — especially strong on code, multilingual text, and noisy inputs [11][12].

2. Embeddings and Positional Encoding

Each token ID is looked up in an embedding matrix of shape [vocab_size, d_model] (e.g., 128K × 8192 in a Llama-3.1-70B). Because self-attention is permutation-invariant, the model needs positional information. The 2017 sinusoidal encodings are long gone. In 2026 the dominant choices are:

- RoPE (Rotary Position Embeddings) — used by Llama, Qwen, Mistral, DeepSeek. Positions are encoded by rotating query/key vectors in 2D subspaces. RoPE's killer feature is length extrapolation via base frequency scaling (YaRN, NTK-aware, Llama 3.1's "RoPE scaling") enabling 128K–1M context windows without retraining from scratch [5][9].

- ALiBi — linear bias added to attention scores; simpler, good extrapolation, used in MPT and BLOOM variants.

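- NoPE / implicit positions. Some 2026 research shows that decoder-only models can learn position implicitly from the causal mask. DeepSeek's Multi-Head Latent Attention takes a partial step in this direction: it applies RoPE only to a small decoupled slice of each query/key and leaves the compressed latent position-free [7].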
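To make the RoPE bullet concrete, here is a minimal NumPy sketch of the rotation, assuming the interleaved pairing of dimensions (2i, 2i+1) and the conventional base of 10000. The helper names `rope` and `rope_score` are illustrative; some real implementations pair dimension i with i + d/2 instead, which is equivalent up to a permutation.

```python
import numpy as np

def rope(x, positions, base=10000.0):
    """Rotate consecutive dimension pairs of x by position-dependent angles.

    x: [seq_len, d_head] with even d_head; positions: [seq_len].
    """
    d = x.shape[-1]
    inv_freq = base ** (-np.arange(0, d, 2) / d)       # [d/2] rotation speeds
    angles = positions[:, None] * inv_freq[None, :]    # [seq_len, d/2]
    cos, sin = np.cos(angles), np.sin(angles)
    x_even, x_odd = x[:, 0::2], x[:, 1::2]
    out = np.empty_like(x)
    out[:, 0::2] = x_even * cos - x_odd * sin
    out[:, 1::2] = x_even * sin + x_odd * cos
    return out

def rope_score(q, k, m, n):
    """Dot product of q placed at position m with k placed at position n."""
    qm = rope(q, np.array([m]))
    kn = rope(k, np.array([n]))
    return (qm @ kn.T).item()

rng = np.random.default_rng(0)
q = rng.standard_normal((1, 8))
k = rng.standard_normal((1, 8))
# Same offset (3) at very different absolute positions gives the same score.
print(rope_score(q, k, 5, 2), rope_score(q, k, 105, 102))
```

The attention score depends only on the offset m - n, which is why rescaling `base` (the idea behind NTK-aware and YaRN-style scaling) stretches the usable context without changing the mechanism.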

3. Attention: The Core Mechanism

Self-attention lets each token attend to every other token in the sequence. For query Q, key K, value V matrices of shape [seq_len, d_head]:

$$\text{Attention}(Q, K, V) = \text{softmax}\!\left(\frac{QK^T}{\sqrt{d_k}} + M\right) V$$

where M is the causal mask (−∞ on future positions). Cost is O(n²) in sequence length — the central pain point of the entire field.
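The formula maps almost line for line onto NumPy. The sketch below covers a single head with no batching, and the function name `causal_attention` is just for illustration.

```python
import numpy as np

def causal_attention(Q, K, V):
    """Single-head scaled dot-product attention with a causal mask.

    Q, K: [seq_len, d_k]; V: [seq_len, d_v]. Returns (output, weights).
    """
    n, d_k = Q.shape
    scores = Q @ K.T / np.sqrt(d_k)                      # [n, n], the O(n^2) term
    mask = np.triu(np.full((n, n), -np.inf), k=1)        # -inf strictly above the diagonal
    scores = scores + mask
    scores = scores - scores.max(axis=-1, keepdims=True) # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ V, weights

rng = np.random.default_rng(0)
Q, K, V = (rng.standard_normal((5, 16)) for _ in range(3))
out, weights = causal_attention(Q, K, V)
print(np.round(weights, 2))  # lower-triangular: no token attends to the future
```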

Multi-Head → GQA → MLA

The 2026 lineage of attention variants:

| Variant | Models | Idea |
|---|---|---|
| Multi-Head Attention (MHA) | GPT-2, GPT-3 | h separate Q/K/V heads |
| Multi-Query Attention (MQA) | PaLM | 1 shared K/V head |
| Grouped-Query Attention (GQA) | Llama 3/4, Mistral, Qwen | g groups of shared K/V; sweet spot for quality vs KV-cache size |
| Multi-Head Latent Attention (MLA) | DeepSeek-V2/V3/V4 | Compress K/V into a low-rank latent vector; 8–16× KV memory reduction with near-MHA quality [6][7] |
| Lightning / Linear Attention | MiniMax, RWKV, Mamba-hybrids | Kernelize softmax, O(n) cost; hybrids (e.g., Jamba, MiniMax-01) interleave linear and softmax layers [9] |

MLA, introduced in DeepSeek-V2 (2024) and carried into DeepSeek-V3, is one of the most impactful recent architectural tweaks: it is what lets a 671B-parameter model serve long contexts at GPT-4-class quality on a fraction of the memory [6][7].
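The reason the number of K/V heads matters so much is plain arithmetic. The sketch below assumes a Llama-3.1-70B-like shape (80 layers, 64 query heads, 8 KV heads, head dimension 128) and 16-bit cache entries; treat these as illustrative values, and the helper name `kv_bytes_per_token` as hypothetical.

```python
def kv_bytes_per_token(n_layers, n_kv_heads, d_head, bytes_per_elem=2):
    """Bytes of KV cache one token occupies: a K and a V vector per layer per KV head."""
    return 2 * n_layers * n_kv_heads * d_head * bytes_per_elem

# Assumed model shape (illustrative, Llama-3.1-70B-like).
LAYERS, Q_HEADS, KV_HEADS, D_HEAD = 80, 64, 8, 128
CONTEXT = 128 * 1024  # tokens

for name, kv_heads in [("MHA", Q_HEADS), ("GQA", KV_HEADS), ("MQA", 1)]:
    per_token = kv_bytes_per_token(LAYERS, kv_heads, D_HEAD)
    total_gib = per_token * CONTEXT / 2**30
    print(f"{name}: {per_token / 1024:.0f} KiB per token, {total_gib:.0f} GiB at 128K context")
```

Under these assumptions a single 128K-token sequence needs 320 GiB of cache with full MHA, 40 GiB with 8-group GQA, and 5 GiB with MQA, which is why KV-head sharing and latent compression dominate long-context design.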

FlashAttention

FlashAttention (Dao et al., with FlashAttention-3 in 2024 and FlashAttention-4 refinements through 2026) doesn't change the math — it changes the I/O pattern. By tiling Q/K/V into SRAM-sized blocks and fusing the softmax, it eliminates the materialization of the full n×n attention matrix in HBM, giving 2–4× wall-clock speedups and enabling much longer contexts on the same hardware [13][14].
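The trick that makes the tiling exact is an online softmax: keep a running row maximum and row sum, and rescale the partial output whenever the maximum changes. The NumPy sketch below shows only that idea (non-causal, tiling over K/V blocks only, function names made up here); the real kernels also tile Q and run fused on the GPU.

```python
import numpy as np

def reference_attention(Q, K, V):
    """Standard attention that materializes the full [n, n] score matrix."""
    S = Q @ K.T / np.sqrt(Q.shape[-1])
    P = np.exp(S - S.max(axis=-1, keepdims=True))
    return (P / P.sum(axis=-1, keepdims=True)) @ V

def tiled_attention(Q, K, V, block=4):
    """Same result, but only an [n, block] slice of scores exists at any time."""
    n, d = Q.shape
    out = np.zeros((n, V.shape[-1]))
    row_max = np.full((n, 1), -np.inf)   # running max of scores per query row
    row_sum = np.zeros((n, 1))           # running softmax denominator
    for start in range(0, K.shape[0], block):
        Kb, Vb = K[start:start + block], V[start:start + block]
        S = Q @ Kb.T / np.sqrt(d)
        new_max = np.maximum(row_max, S.max(axis=-1, keepdims=True))
        scale = np.exp(row_max - new_max)          # shrink old accumulators to the new max
        P = np.exp(S - new_max)
        row_sum = row_sum * scale + P.sum(axis=-1, keepdims=True)
        out = out * scale + P @ Vb
        row_max = new_max
    return out / row_sum

rng = np.random.default_rng(0)
Q, K, V = (rng.standard_normal((10, 8)) for _ in range(3))
print(np.allclose(tiled_attention(Q, K, V), reference_attention(Q, K, V)))
```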

4. The Transformer Block

A modern decoder-only block looks like:

flowchart TD
    X[Input Hidden State] --> N1[RMSNorm]
    N1 --> Att[GQA / MLA Attention<br/>with RoPE + KV Cache]
    Att --> R1[+ Residual]
    X --> R1
    R1 --> N2[RMSNorm]
    N2 --> FFN[FFN: SwiGLU<br/>or MoE Router → Experts]
    FFN --> R2[+ Residual]
    R1 --> R2
    R2 --> Y[Output Hidden State]

Key 2026 defaults: RMSNorm (cheaper than LayerNorm), pre-norm placement, SwiGLU activation in the FFN, and — increasingly — a sparse MoE FFN instead of a dense one.
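As a rough sketch of how those defaults fit together, the block below wires RMSNorm, pre-norm residuals, and a dense SwiGLU FFN in NumPy. The attention sublayer is passed in as a callable, and the parameter names (`norm1`, `W_gate`, and so on) are placeholders chosen for this example rather than any framework's naming.

```python
import numpy as np

def rms_norm(x, weight, eps=1e-6):
    """Scale each vector to unit root-mean-square, then apply a learned gain."""
    return x / np.sqrt(np.mean(x ** 2, axis=-1, keepdims=True) + eps) * weight

def silu(x):
    return x / (1.0 + np.exp(-x))

def swiglu_ffn(x, W_gate, W_up, W_down):
    """SwiGLU: a SiLU-activated gate multiplies a linear 'up' projection."""
    return (silu(x @ W_gate) * (x @ W_up)) @ W_down

def transformer_block(x, attn_fn, p):
    """Pre-norm block: normalize, apply the sublayer, add the residual."""
    h = x + attn_fn(rms_norm(x, p["norm1"]))
    return h + swiglu_ffn(rms_norm(h, p["norm2"]), p["W_gate"], p["W_up"], p["W_down"])

rng = np.random.default_rng(0)
d_model, d_ff = 8, 32
params = {
    "norm1": np.ones(d_model),
    "norm2": np.ones(d_model),
    "W_gate": rng.standard_normal((d_model, d_ff)) * 0.1,
    "W_up": rng.standard_normal((d_model, d_ff)) * 0.1,
    "W_down": rng.standard_normal((d_ff, d_model)) * 0.1,
}
x = rng.standard_normal((5, d_model))
# Stand-in attention that returns zeros, just to exercise the wiring.
y = transformer_block(x, lambda h: np.zeros_like(h), params)
print(y.shape)
```

Pre-norm keeps the residual stream itself un-normalized, so each sublayer only ever adds a correction to it.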

5. Mixture of Experts (MoE)

MoE replaces the dense FFN with N parallel "expert" FFNs plus a lightweight router that picks the top-k experts per token (classically k=1 or k=2; fine-grained designs route to more). Only the selected experts run, so active parameters ≪ total parameters.
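A token-choice top-k router can be sketched as follows. This is a simplified illustration: gate weights here are a softmax over the selected logits only, which is one common choice but varies between models, and `moe_forward` and its arguments are names invented for this example.

```python
import numpy as np

def moe_forward(x, W_router, experts, k=2):
    """Route each token to its top-k experts and mix their outputs.

    x: [tokens, d]; W_router: [d, n_experts]; experts: list of callables [m, d] -> [m, d].
    """
    logits = x @ W_router                                   # [tokens, n_experts]
    topk = np.argsort(logits, axis=-1)[:, -k:]              # ids of the k largest logits
    top_logits = np.take_along_axis(logits, topk, axis=-1)
    gates = np.exp(top_logits - top_logits.max(axis=-1, keepdims=True))
    gates = gates / gates.sum(axis=-1, keepdims=True)       # softmax over chosen experts
    out = np.zeros_like(x)
    for e, expert in enumerate(experts):
        token_idx, slot = np.nonzero(topk == e)             # tokens that picked expert e
        if token_idx.size:                                  # unselected experts never run
            out[token_idx] += gates[token_idx, slot][:, None] * expert(x[token_idx])
    return out, topk

rng = np.random.default_rng(0)
d, n_experts = 4, 8
weights = [rng.standard_normal((d, d)) for _ in range(n_experts)]
experts = [lambda h, W=W: h @ W for W in weights]
x = rng.standard_normal((6, d))
out, chosen = moe_forward(x, rng.standard_normal((d, n_experts)), experts, k=2)
print(chosen)  # two expert ids per token
```

With 8 experts and k=2, each token touches a quarter of the expert parameters, which is the "active ≪ total" effect in miniature.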

Concrete 2026 numbers:

- DeepSeek-V3 / R1: 671B total, 37B active per token [6][7].

- DeepSeek-V4 (Nov 2025): scales the family further with 1M-token context, V4-Pro beating rivals on Codeforces and IMO-AnswerBench, V4-Flash optimized for speed [7].

- Mixtral 8×7B: 46.7B total, ~13B active — runs at 13B speed with much higher quality.

- Llama 4 Maverick/Scout, Qwen3-MoE, Kimi-K2: all sparse-MoE, spanning roughly 100B to 1T total parameters.

The DeepSeekMoE recipe introduced two refinements that became standard:

- Fine-grained experts — instead of 8 big experts, use 64–256 small ones, routing top-8. Increases specialization and combinatorial expressivity.

- Shared experts — 1–2 always-on experts absorb common-knowledge patterns, so routed experts don't waste capacity redundantly encoding the same basics [6][7].

Routing is typically token-choice top-k with an auxiliary-loss-free load-balancing trick (DeepSeek-V3) that avoids the quality hit of traditional aux losses. Training MoE at scale requires expert parallelism (experts sharded across GPUs) + all-to-all communication, which is why MoE infrastructure became a first-class topic in 2026 [6].

In 2026, MoE is practically the default choice for any serious frontier model [6].

6. Training: Pretraining → SFT → RLHF/DPO

Pretraining

The objective is next-token prediction — cross-entropy loss over ~15–30 trillion tokens. 2026 recipes (Llama 3.1, DeepSeek-V3, phi-4, Step Law) have formalized several principles [3][4]:

- Chinchilla-optimal and beyond. Chinchilla (2022) said ~20 tokens per parameter is compute-optimal. 2026 practice overtrains aggressively (100–300 tokens/param) because inference cost dominates total-cost-of-ownership: a smaller model trained longer is cheaper to serve forever [3].

- Step Law (StepFun, 2025). An empirical investigation training 3,700+ models across 100T tokens on ~1M H800 GPU-hours yielded a universal scaling law for optimal learning rate and batch size as a function of model size and data [4].

- Data quality scaling laws. 2025–2026 work introduced a dimensionless data-quality parameter Q extending Chinchilla; higher Q sublinearly reduces required compute, formalizing why curation matters [3].

- Curriculum and annealing. Constant-LR + cooldown schedules (replacing cosine) and final-phase data re-weighting toward high-quality math/code are now standard [3].
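The tokens-per-parameter trade-off in the first bullet is easy to put numbers on. The sketch below uses the common rule of thumb that dense training costs about 6 FLOPs per parameter per token; that approximation and the 8B model size are assumptions brought in for illustration, not figures from the cited sources.

```python
def training_flops(n_params, n_tokens):
    """Rule-of-thumb dense training cost: about 6 FLOPs per parameter per token."""
    return 6 * n_params * n_tokens

def tokens_for_ratio(n_params, tokens_per_param):
    return n_params * tokens_per_param

N = 8_000_000_000  # hypothetical 8B-parameter model

for label, ratio in [("Chinchilla-style", 20), ("overtrained", 200)]:
    D = tokens_for_ratio(N, ratio)
    print(f"{label}: {D / 1e12:.2f}T tokens, {training_flops(N, D):.2e} training FLOPs")
```

Overtraining by 10× costs 10× the training compute for the same parameter count, but every later inference call still pays only for the small model, which is the total-cost-of-ownership argument made above.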

Post-Training: Alignment

A raw pretrained model is a fluent autocomplete, not an assistant. Alignment turns it into one, typically in three stages [8][15]:

- Supervised Fine-Tuning (SFT). Train on curated (prompt, response) demonstrations — the quickest, cheapest alignment lever. Often sufficient for narrow domains [8].

- Preference optimization. Given pairs (chosen, rejected) of responses:

  - RLHF (PPO). Train a reward model on preferences, then run PPO on the policy with a KL penalty against a frozen reference. Four models sit in memory (policy, reference, reward model, and value critic); it is notoriously finicky, but it is the original recipe behind ChatGPT and Claude [8][15].

  - DPO (Direct Preference Optimization). Derives a closed-form loss that optimizes the policy directly against the reference model, with no separate reward model and no RL loop. Dominant in open-source for its simplicity; works well when preference data is clean [8][15].

  - KTO, IPO, ORPO, SimPO, GRPO. A 2024–2026 zoo of variants trading off data requirements, stability, and off-policy handling. GRPO (Group Relative Policy Optimization, from DeepSeek-Math/R1) became prominent for reasoning-model training.

- RLAIF / Constitutional AI / RLVR. Replace or augment human labelers with AI judges (Anthropic) or with verifiable rewards: unit tests passing, math answer correct, proof checker accepting. RLVR is the engine behind DeepSeek-R1 and, reportedly, OpenAI's o1/o3 [15][16].
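Two of the objectives named above are compact enough to write out. The sketch below shows the DPO loss on precomputed sequence log-probabilities and the group-normalized advantage used by GRPO; the function names, `beta=0.1`, and the toy inputs are assumptions for illustration, and a real trainer would compute the log-probabilities from the models and backpropagate through the policy terms.

```python
import numpy as np

def dpo_loss(pi_chosen, pi_rejected, ref_chosen, ref_rejected, beta=0.1):
    """DPO loss from summed sequence log-probs (each array has shape [batch]).

    margin = beta * [(log pi(y_w) - log ref(y_w)) - (log pi(y_l) - log ref(y_l))]
    loss   = mean(-log sigmoid(margin))
    """
    margin = beta * ((pi_chosen - ref_chosen) - (pi_rejected - ref_rejected))
    return float(np.mean(np.logaddexp(0.0, -margin)))   # stable -log(sigmoid(margin))

def grpo_advantages(rewards, eps=1e-8):
    """GRPO-style advantage: normalize each reward against its own sample group."""
    r = np.asarray(rewards, dtype=float)
    return (r - r.mean()) / (r.std() + eps)

# Policy identical to the reference: no preference signal yet, loss is log(2).
z = np.zeros(4)
print(dpo_loss(z, z, z, z))

# Four sampled answers to one prompt, scored by a verifier (1 = correct).
print(grpo_advantages([1, 0, 0, 1]))
```

DPO needs no reward model because the log-ratio against the reference plays that role; GRPO needs no value critic because the group mean serves as the baseline.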

Reasoning Models and Test-Time Compute

OpenAI's o1 (2024) and DeepSeek-R1 (early 2025) changed the narrative: instead of only scaling pretraining, let the model think longer at inference. R1 matched o1 quality at ~70% lower cost by running large-scale RL with verifiable rewards directly on a base model, producing long chain-of-thought traces [16]. Through 2026 a full "test-time compute" scaling law emerged — doubling inference compute via longer reasoning chains, best-of-N sampling, or tree search yields predictable quality gains on math, coding, and scientific reasoning [16]. ByteDance's P1 winning physics-olympiad gold and ThreadWeaver achieving 1.5× speedup on reasoning workflows are 2025–2026 landmarks of this paradigm [16].

7. Inference: Where the Magic (and the Cost) Lives

Serving an LLM has two phases:

flowchart LR
    P[Prompt] --> PF[Prefill<br/>Compute-bound<br/>Parallel over all tokens]
    PF --> KV[(KV Cache<br/>grows with seq len)]
    KV --> DEC[Decode<br/>Memory-bound<br/>One token at a time]
    DEC -->|append K,V| KV
    DEC --> OUT[Streamed tokens]

KV Cache

Autoregressive decoding would recompute attention over all past tokens every step — quadratic waste. The KV cache stores each layer's K and V tensors so only the new token's Q attends against them. This turns per-step cost from O(n²) to O(n) — but the cache itself grows linearly with sequence length × batch size and often exceeds GPU HBM, becoming the bottleneck for long-context serving [17][18][19].
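The equivalence between cached and uncached decoding can be checked directly. The sketch below covers a single attention head with random projection matrices; the function names are illustrative, and real caches are preallocated tensors rather than arrays grown with `vstack`.

```python
import numpy as np

def softmax(s):
    e = np.exp(s - s.max())
    return e / e.sum()

def decode_with_cache(X, Wq, Wk, Wv):
    """Process tokens one at a time, projecting only the new token each step."""
    d_head = Wk.shape[1]
    K_cache = np.empty((0, d_head))
    V_cache = np.empty((0, d_head))
    outputs = []
    for x in X:
        q, k, v = x @ Wq, x @ Wk, x @ Wv        # O(1) projections per step
        K_cache = np.vstack([K_cache, k])       # cache grows by one row per token
        V_cache = np.vstack([V_cache, v])
        w = softmax(K_cache @ q / np.sqrt(d_head))
        outputs.append(w @ V_cache)
    return np.array(outputs)

def decode_without_cache(X, Wq, Wk, Wv):
    """Baseline: re-project the entire prefix at every step."""
    outputs = []
    for t in range(1, len(X) + 1):
        Q, K, V = X[:t] @ Wq, X[:t] @ Wk, X[:t] @ Wv
        w = softmax(K @ Q[-1] / np.sqrt(K.shape[1]))
        outputs.append(w @ V)
    return np.array(outputs)

rng = np.random.default_rng(0)
X = rng.standard_normal((6, 16))
Wq, Wk, Wv = (rng.standard_normal((16, 8)) for _ in range(3))
print(np.allclose(decode_with_cache(X, Wq, Wk, Wv), decode_without_cache(X, Wq, Wk, Wv)))
```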

KV-cache management is now a research subfield of its own. 2025–2026 techniques include [17][18][19]:

- PagedAttention (vLLM). Treat KV cache like OS virtual memory: fixed-size blocks, on-demand allocation, near-zero fragmentation. Cut waste from 60–80% down to ~4% and enabled 2–4× throughput improvements [20][21].

- KV-cache eviction and compression. H2O, SnapKV, Quest, and 2026's KVP (Learning to Evict, RL-trained per-head eviction policies) selectively drop or quantize less-useful tokens [17][18].

- MLA (DeepSeek). Bakes compression into the architecture — the KV cache stores only a low-rank latent [6][7].

- Disaggregated KV caches. 2025–2026 CXL-SpecKV and similar work pushes KV memory off the GPU onto CXL-attached pools or FPGAs, decoupling model FLOPs from memory capacity [17].

- Prefix caching. System prompts and RAG contexts are cached across requests — huge TTFT wins in production.
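The paging idea from the first bullet can be sketched as a small block allocator. The class below is a hypothetical illustration of the bookkeeping only (it is not vLLM's API): each sequence owns a block table mapping logical positions to physical blocks, and blocks are handed out one at a time as tokens arrive.

```python
class PagedKVAllocator:
    """Toy block-table allocator in the spirit of PagedAttention (illustrative only)."""

    def __init__(self, num_blocks, block_size):
        self.block_size = block_size
        self.free = list(range(num_blocks))   # physical block ids not in use
        self.tables = {}                      # seq_id -> list of physical block ids
        self.lengths = {}                     # seq_id -> tokens stored so far

    def append_token(self, seq_id):
        """Reserve a slot for one more token; returns (physical_block, slot_in_block)."""
        n = self.lengths.get(seq_id, 0)
        table = self.tables.setdefault(seq_id, [])
        if n % self.block_size == 0:          # no block yet, or the last one is full
            if not self.free:
                raise MemoryError("no free KV blocks")
            table.append(self.free.pop())
        self.lengths[seq_id] = n + 1
        return table[-1], n % self.block_size

    def release(self, seq_id):
        """Return all of a finished sequence's blocks to the free pool."""
        self.free.extend(self.tables.pop(seq_id, []))
        self.lengths.pop(seq_id, None)

alloc = PagedKVAllocator(num_blocks=8, block_size=16)
for _ in range(17):
    location = alloc.append_token("request-a")
print(alloc.tables["request-a"], location)   # two blocks used; 17th token sits in slot 0
alloc.release("request-a")
print(len(alloc.free))
```

Waste is bounded by the unused tail of each sequence's last block, instead of by a worst-case contiguous reservation made up front.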

Continuous Batching and Chunked Prefill

Static batching pads every request to the longest sequence and waits for all to finish — average GPU utilization on production workloads was historically ~40% [20][21]. Continuous (iteration-level) batching (Orca/vLLM) lets new requests join mid-flight and finished ones leave immediately. Chunked prefill splits long prompt prefills into small pieces that interleave with decode steps, preventing a single long prompt from stalling everyone else. Together on an H100, these yield 3–5× more traffic than a naive PyTorch loop [20][21].

Speculative Decoding

Decode is memory-bound: each forward pass is dominated by streaming weights and KV cache from memory, so the GPU emits one token per pass with compute to spare. Speculative decoding exploits the slack: a small draft model proposes k tokens cheaply, then the large target model verifies all k in parallel with one forward pass. Accepted tokens cost almost nothing extra; at the first rejection, the remaining draft tokens are discarded and one token is resampled from a corrected target distribution. The scheme is mathematically lossless (same output distribution), with typical speedups of 2–3×, stacking to 10× with KV-cache tricks [22][23].
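The verification rule is what makes the scheme lossless, and it fits in a short function. The sketch below works on explicit probability tables rather than real models: `q_probs` and `p_probs` stand in for the draft and target distributions at each drafted position, and the name `speculative_step` is invented for this example.

```python
import numpy as np

def speculative_step(draft_tokens, q_probs, p_probs, rng):
    """Verify k drafted tokens against the target model's distributions.

    draft_tokens: k token ids, each sampled from the matching row of q_probs.
    q_probs: [k, vocab] draft distributions; p_probs: [k + 1, vocab] target
    distributions (the extra row supplies a bonus token if every draft is accepted).
    """
    output = []
    for i, tok in enumerate(draft_tokens):
        # Accept with probability min(1, p/q); q > 0 because tok was sampled from q.
        if rng.random() < min(1.0, p_probs[i, tok] / q_probs[i, tok]):
            output.append(int(tok))
            continue
        # First rejection: resample from the normalized residual max(p - q, 0)
        # and drop the remaining drafts.
        residual = np.maximum(p_probs[i] - q_probs[i], 0.0)
        residual = residual / residual.sum()
        output.append(int(rng.choice(len(residual), p=residual)))
        return output
    # Every draft accepted: the same target pass also yields one extra token.
    output.append(int(rng.choice(p_probs.shape[1], p=p_probs[len(draft_tokens)])))
    return output

rng = np.random.default_rng(0)
q = np.array([[0.5, 0.3, 0.2], [0.5, 0.3, 0.2]])
p = np.array([[0.2, 0.3, 0.5], [0.2, 0.3, 0.5], [0.2, 0.3, 0.5]])
drafts = [int(rng.choice(3, p=row)) for row in q]
print(drafts, speculative_step(drafts, q, p, rng))
```

Each call emits between 1 and k + 1 tokens for a single target forward pass; the closer the draft distribution is to the target, the more tokens are accepted per pass.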

2026 variants [22][23]:

- Self-speculative / Medusa / EAGLE-2/3. The same model drafts using lightweight heads or early-exit layers — no separate draft model needed.

- Hierarchical / tree-based speculation. Draft multiple branches, verify a tree of candidates in one pass.

- SpeCache (2025). Speculatively prefetch KV pairs the next token is likely to attend to, overlapping CPU↔GPU transfer with compute [18].

- CXL-SpecKV (late 2025). Datacenter-scale speculative KV architecture combining CXL interconnects with FPGA accelerators for disaggregated serving [17].

Quantization

INT8, INT4 (GPTQ, AWQ), FP8, and FP4 on Blackwell/B200 hardware are now routine. DeepSeek-V3 was natively trained in FP8. 2026 inference stacks combine FP8 weights + KV cache + FP4 activations for 4–6× throughput on the same silicon, with sub-1% quality loss on well-calibrated models [3].
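The simplest member of this family is symmetric round-to-nearest INT8 with one scale per output row. The sketch below shows only that baseline, under assumed names `quantize_int8` and `dequantize`; GPTQ and AWQ add calibration-driven error correction on top, and FP8/FP4 use floating-point formats instead of integers.

```python
import numpy as np

def quantize_int8(W):
    """Symmetric per-row quantization: W is approximated by scale * q, q in [-127, 127]."""
    scale = np.abs(W).max(axis=1, keepdims=True) / 127.0
    scale = np.where(scale == 0, 1.0, scale)             # avoid dividing by zero on all-zero rows
    q = np.clip(np.round(W / scale), -127, 127).astype(np.int8)
    return q, scale

def dequantize(q, scale):
    return q.astype(np.float32) * scale

rng = np.random.default_rng(0)
W = rng.standard_normal((4, 64)).astype(np.float32)
q, scale = quantize_int8(W)
error = np.abs(dequantize(q, scale) - W)
print(q.dtype, W.nbytes, q.nbytes)          # int8 storage is 4x smaller than float32
print(bool((error <= scale / 2 + 1e-6).all()))  # rounding error is at most half a step
```

A per-row scale keeps one outlier row from wasting the integer range of every other row, which is the same motivation behind the finer-grained block scales used in FP8 and FP4 formats.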

8. The 2026 Frontier: Where Things Are Going

- Hybrid and linear attention. MiniMax-01, Jamba, RWKV-7, Mamba-2 hybrids interleave O(n) state-space or linear-attention layers with a few softmax layers for needle-in-haystack recall. 1M–10M token contexts are becoming feasible at reasonable cost [9][10].

- MoE-everywhere. DeepSeek-V4's 1M-token MoE, Llama 4 Maverick, Qwen3-Next MoE — sparse activation is the default frontier architecture, and expert parallelism is a first-class infrastructure concern [6][7].

- Reasoning + agents as default. Post-R1, every serious lab ships a reasoning variant. RL with verifiable rewards (math, code, proofs, tool use) has overtaken RLHF as the dominant late-stage training paradigm for frontier capability [15][16].

- Multimodal by construction. Vision, audio, and video tokens are interleaved with text tokens; DeepSeek-OCR (Oct 2025) even treats text itself as a compressible visual signal, using a vision encoder to pack long documents into far fewer tokens than BPE [6].

- Inference-time scaling laws. Alongside pretraining scaling laws, a formalized test-time compute scaling law now guides cost/quality tradeoffs for reasoning budgets [16].

- Tokenizer-free and architectural unification. Byte Latent Transformer, hierarchical byte models, and OCR-as-compression all point toward reducing the tokenizer's central role [11][12].

Key Takeaways

- The Transformer decoder has been remarkably stable; innovation in 2025–2026 is concentrated in attention variants (GQA, MLA, linear/hybrid), the FFN (sparse MoE), and the serving stack.

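- MoE is the default frontier architecture. DeepSeek-V3/V4, Llama 4, Qwen3, Mixtral, Kimi-K2 all activate only a fraction of their parameters per token, from roughly 5% (DeepSeek-V3: 37B of 671B) to just under 30% (Mixtral 8×7B: ~13B of 46.7B). Fine-grained + shared experts is the winning recipe [6][7].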

- Post-training now rivals pretraining in importance. SFT → DPO/GRPO → RLVR pipelines, especially with verifiable rewards for math and code, are what turn base models into o1/R1-class reasoners [15][16].

- Inference is an engineering-dominated field. PagedAttention, continuous batching, chunked prefill, speculative decoding, FP8/FP4 quantization, and KV-cache compression compound to 10×+ throughput improvements over naive serving [20][21][22].

- Test-time compute is a new scaling axis. Longer reasoning chains and tree-search have their own predictable, logarithmic-return scaling law that complements pretraining scale [16].

- The quadratic-attention wall is being attacked from four angles simultaneously — better kernels (FlashAttention), better memory layout (Paged/MLA), linear alternatives (Mamba, linear attention), and hierarchical hybrids — and 1M-token contexts at production cost are now real in DeepSeek-V4 and similar models [7][9].

References

[1] Starmorph, The Complete Technical Guide to Transformers, Training, and Inference (2026). https://blog.starmorph.com/blog/how-llms-work-complete-technical-guide

[2] Starmorph, From Attention Mechanism to GPT-4o, Claude, and Open-Source LLMs (2026). https://blog.starmorph.com/blog/intro-to-transformers

[3] youngju.dev, LLM Pretraining & Scaling Laws: From Chinchilla to Flash Attention and MoE (2026). https://www.youngju.dev/blog/llm/2026-03-17-llm-pretraining-scaling-laws-guide.en

[4] arXiv 2503.04715, Step Law – Optimal Hyperparameter Scaling Law in LLM Pre-training. https://arxiv.org/html/2503.04715

[5] Stanford, Next Generation LLM Architecture. http://web.stanford.edu/~jksun/blog/llm-architecture.html

[6] Introl, Mixture of Experts Infrastructure: Scaling Sparse Models for Production AI. https://introl.com/uk/blog/mixture-of-experts-moe-infrastructure-scaling-sparse-models-guide

[7] The NextGen Tech Insider, DeepSeek Launches V4 Mixture-of-Experts AI Model Family. https://www.thenextgentechinsider.com/pulse/deepseek-launches-v4-mixture-of-experts-ai-model-family

[8] PremAI, Which LLM Alignment Method? RLHF vs DPO vs KTO Tradeoffs Explained. https://blog.premai.io/which-llm-alignment-method-rlhf-vs-dpo-vs-kto-tradeoffs-explained/

[9] GetMaxim, The Attention Arms Race: How Modern Open-Source LLMs Are Reinventing the Transformer's Core. https://www.getmaxim.ai/blog/the-attention-arms-race-how-modern-open-source-llms-are-reinventing-the-transformers-core/

[10] BuildFastWithAI, Attention Mechanism in LLMs Explained (2026). https://www.buildfastwithai.com/blogs/attention-mechanism-llm-explained

[11] Crusoe, Reducing TTFT by CPUMaxxing Tokenization. https://www.crusoe.ai/resources/blog/reducing-ttft-by-cpumaxxing-tokenization

[12] EmergentMind, Byte-Level Tokenization / Byte Language Models. https://www.emergentmind.com/topics/byte-level-tokenization

[13] Reintech, Flash Attention & PagedAttention Guide. https://reintech.io/blog/llm-inference-optimization-flash-attention-pagedattention

[14] BuildFastWithAI, What Is Mixture of Experts (MoE)? How It Works (2026). https://www.buildfastwithai.com/blogs/mixture-of-experts-moe-explained

[15] Tianpan, The Alignment Method Decision Matrix for Narrow Domain Applications (April 2026). https://tianpan.co/blog/2026-04-16-sft-rlhf-dpo-alignment-method-decision-matrix

[16] Introl, Inference-Time Scaling: Research and Reasoning Models (Dec 2025). https://introl.com/blog/inference-time-scaling-research-reasoning-models-december-2025

[17] arXiv 2512.11920, A Disaggregated FPGA Speculative KV-Cache for Datacenter LLM Serving. https://arxiv.org/html/2512.11920

[18] arXiv 2503.16163, SpeCache: Speculative Key-Value Caching for Efficient Generation of LLMs. https://arxiv.org/html/2503.16163v1

[19] arXiv 2603.20397, KV Cache Optimization Strategies for Scalable and Efficient LLM Inference. https://arxiv.org/html/2603.20397v1

[20] Spheron, LLM Serving Optimization: Continuous Batching, PagedAttention, and Chunked Prefill on H100 (2026). https://www.spheron.network/blog/llm-serving-optimization-continuous-batching-paged-attention/

[21] RunPod, PagedAttention, Continuous Batching, and Deploying High-Throughput LLM Inference in Production. https://www.runpod.io/articles/guides/vllm-pagedattention-continuous-batching

[22] Substack/BoringBot, KV Caching and Speculative Decoding. https://boringbot.substack.com/p/kv-caching-and-speculative-decoding

[23] arXiv 2404.11912, Lossless Acceleration of Long Sequence Generation with Hierarchical Speculative Decoding. https://arxiv.org/html/2404.11912v3
