Cost Observability and Governance for LLMs in 2026

1. Why AI FinOps Became a Board-Level Problem

Between 2024 and 2026, AI spending moved from a line item under R&D to a top-three infrastructure cost for most software organizations. The FinOps Foundation's State of FinOps 2026 survey of 1,192 practitioners found that 98% of FinOps teams now manage AI spend, up from just 31% two years earlier, and that "AI Cost Management" is the #1 skill teams are trying to add (58% of respondents) [1][2]. Spending estimates vary by scope: Gartner puts GenAI spending at roughly $644 billion in 2025, other cited figures put global AI investment at about $632 billion in 2025, and forecasts point to trajectories above $2 trillion by 2028 [3][4].

The underlying economics are brutal. A business unit running uncontrolled frontier-model inference can burn 10–50× more per request than a GPT-4o-class workload depending on context-window usage and reasoning tokens [3]. Kong cites the 2025 State of AI Cost Governance Report showing 84% of companies take a >6% hit to gross margin from AI costs [5], and CloudZero reports that 40% of companies now spend $10M+ per year on AI, most without knowing if it's worth it [6]. Flexera 2026 puts wasted cloud spend back at 29%, reversing five years of decline — AI is the single biggest cause [7]. Only 15% of GenAI deployments have any LLM observability at all; the rest are flying blind on tokens [8].

This document covers how the 2026 tooling stack — Helicone, Langfuse, Portkey, Braintrust, plus FinOps-native platforms — addresses cost observability, budgets, alerts, and chargeback.

2. The 2026 LLM Price Landscape (per million tokens)

Dashboards are hard to interpret without pricing context. Published list prices per million tokens (MTok) as of Q1–Q2 2026:

| Model | Input $/MTok | Output $/MTok | Notes |
|---|---|---|---|
| GPT-5 | $1.25 | ~$10 | 400K context at launch [9] |
| GPT-5.2 / 5.4 | $1.75–$2.50 | $14–$15 | Flagship tier [10][11] |
| GPT-5.2 Pro / 5.4 Pro | $21–$30 | $168–$180 | >272K context doubles prices [10][11] |
| GPT-5 mini | $0.25 | $2.00 | Cost-tier workhorse [10] |
| Claude Sonnet 4.x | $3.00 | $15.00 | [11] |
| Claude Opus 4.5/4.6 | $5.00 | $25.00 | Opus 4.5 cut prices 66% vs. its $15/$75 predecessor and scored 80.9% on SWE-bench [9] |
| Gemini 2.5 Pro | ~$1.25 | ~$10 | Parity with GPT-5 at the flagship tier [12] |
| Gemini Flash | $0.30 | not listed | [11] |
| DeepSeek R1 | $0.55 | not listed | Reasoning-class at a fraction of the cost [9] |
| DeepSeek V3 | $0.14 | not listed | Commodity tier [11] |

The critical observation: list prices span more than 200× between the cheapest tier (DeepSeek V3 at $0.14/MTok input) and the most expensive flagship (GPT-5.4 Pro long-context at $60/MTok input, $180/MTok output) [11]; on input price alone the gap is roughly 430×. A dashboard that cannot attribute which team is consuming which tier is a dashboard that cannot control cost.
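To make the spread concrete, the sketch below prices one request shape against several rows of the table. The prices are copied from the table (collapsing ranges and "~" values to a single number), and the dictionary keys are illustrative labels rather than official API model IDs; treat it as a back-of-envelope calculator, not a billing engine.

```python
# Illustrative list prices per million tokens (input, output), taken from the
# table above; where the table gives a range or "~", one value is picked.
# Keys are human-readable labels, not official API model IDs.
PRICES_PER_MTOK = {
    "deepseek-v3": (0.14, None),  # output price not listed in the table
    "gpt-5-mini": (0.25, 2.00),
    "gpt-5": (1.25, 10.00),
    "claude-sonnet-4.x": (3.00, 15.00),
    "gpt-5.4-pro-long-context": (60.00, 180.00),
}


def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """List-price cost in USD of one request, ignoring caching and batch discounts."""
    input_price, output_price = PRICES_PER_MTOK[model]
    cost = input_tokens * input_price / 1_000_000
    if output_tokens:
        if output_price is None:
            raise ValueError(f"no output price on file for {model!r}")
        cost += output_tokens * output_price / 1_000_000
    return cost


# Same workload (10K input, 1K output tokens) priced on three tiers.
for label in ("gpt-5-mini", "gpt-5", "gpt-5.4-pro-long-context"):
    print(f"{label:<26} ${request_cost(label, 10_000, 1_000):.4f}")
```

At this request shape the long-context Pro tier costs about 173× the mini tier per call ($0.78 vs $0.0045), which is the kind of gap per-team tier attribution needs to surface.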

3. The Four Dominant Dashboards

3.1 Helicone — Gateway-first, cheapest to start

Helicone is a proxy-based observability platform: change one configuration value (the client's base_url) and every request gets logged with tokens, cost, latency, and user metadata. It supports 300+ models via an open-source cost repository [13].
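As a sketch of what that one-line change looks like with the OpenAI Python SDK, the helper below builds the client keyword arguments for routing through Helicone. The proxy URL and header names (`Helicone-Auth`, `Helicone-User-Id`, `Helicone-Property-*`, `Helicone-Cache-Enabled`) follow Helicone's documented conventions as we understand them; the helper function itself is hypothetical, so verify the names against current Helicone docs before relying on them.

```python
from typing import Dict, Optional

# Helicone's OpenAI-compatible proxy endpoint (verify against current Helicone docs).
HELICONE_OPENAI_BASE_URL = "https://oai.helicone.ai/v1"


def helicone_client_kwargs(
    helicone_api_key: str,
    user_id: Optional[str] = None,
    properties: Optional[Dict[str, str]] = None,
    enable_cache: bool = False,
) -> dict:
    """Hypothetical helper: keyword arguments for an OpenAI-SDK client routed via Helicone."""
    headers = {"Helicone-Auth": f"Bearer {helicone_api_key}"}
    if user_id:
        # Per-user cost attribution in the Helicone dashboard.
        headers["Helicone-User-Id"] = user_id
    for name, value in (properties or {}).items():
        # Custom properties become filterable dimensions (team, feature, tenant, ...).
        headers[f"Helicone-Property-{name}"] = value
    if enable_cache:
        # Opt in to Helicone's gateway-side response cache.
        headers["Helicone-Cache-Enabled"] = "true"
    return {"base_url": HELICONE_OPENAI_BASE_URL, "default_headers": headers}
```

Usage would look like `OpenAI(api_key=OPENAI_KEY, **helicone_client_kwargs(HELICONE_KEY, user_id="u-42", properties={"Team": "search"}))`; requests made through that client should then be logged with the attached user and property tags.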

Pricing (2026): the free tier covers 10K–100K requests/month, Pro costs $20–$79/month depending on volume, Enterprise runs $50–$500/month, and self-hosting is free under the MIT license [14][15][16]. Helicone's built-in caching "typically saves 20–30% on LLM costs," and its own analysis claims the platform "pays for itself" even at modest production scale [14]. For 5–10 developers with mid-volume traffic, TokenMix benchmarks Helicone at $20–50/month versus LangSmith at $95–390/month [16].

Strengths: fastest setup (one-line integration, under 30 minutes per Latitude [17]), cost tracking is native, caching is built in. Weakness: eval tooling is basic and prompt management is minimal compared to Langfuse or Braintrust.

3.2 Langfuse — Open-source all-in-one

Langfuse is MIT-licensed and can be self-hosted for free with no feature limitations; the managed cloud tier includes 50K observations/month free, and paid plans bill overage at $8 per 100,000 additional units [18][19][20]. In December 2025 Langfuse shipped a pricing-tier engine that supports multiple price points per model with conditions (e.g., >200K context triggers Tier 2 pricing), so cost calculations match providers' actual long-context surcharges [21]. Langfuse estimates token-based cost for every trace and span.
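The idea behind conditional pricing tiers can be sketched in a few lines. This is not Langfuse's API or data model: `PriceTier`, `select_tier`, and `tiered_cost` are hypothetical names, the prices are illustrative, and the sketch assumes the higher rate applies to the whole request once the threshold is crossed.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class PriceTier:
    above_input_tokens: int  # tier applies when the prompt exceeds this size
    input_per_mtok: float
    output_per_mtok: float


def select_tier(tiers: Sequence[PriceTier], input_tokens: int) -> PriceTier:
    """Pick the highest-threshold tier whose condition the request meets.

    tiers must be sorted by threshold; tiers[0] is the default tier.
    """
    chosen = tiers[0]
    for tier in tiers[1:]:
        if input_tokens > tier.above_input_tokens:
            chosen = tier
    return chosen


def tiered_cost(tiers: Sequence[PriceTier], input_tokens: int, output_tokens: int) -> float:
    # Assumption: once the threshold is crossed, the higher rate applies to the
    # whole request (input and output), not only to the tokens above it.
    tier = select_tier(tiers, input_tokens)
    return (input_tokens * tier.input_per_mtok + output_tokens * tier.output_per_mtok) / 1_000_000


# Hypothetical two-tier model: a long-context surcharge above 200K input tokens.
EXAMPLE_TIERS = (
    PriceTier(above_input_tokens=0, input_per_mtok=1.25, output_per_mtok=10.00),
    PriceTier(above_input_tokens=200_000, input_per_mtok=2.50, output_per_mtok=15.00),
)
```

A flat-rate calculation would report $0.3225 for a 250K-input, 1K-output request instead of $0.64, under-counting it by roughly half, which is the error tier-aware cost tracking is meant to remove.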

Langfuse wins when teams want: deep trace trees (ideal for multi-step agents), prompt versioning/registry, evaluation framework with scoring, and GDPR/data-residency control via self-hosting [22]. The primary weakness is that dedicated budget alerts and finance-grade reporting are secondary to its trace/eval focus — which is why some teams pair Langfuse with a dedicated cost-governance layer like AI Cost Board [23].

3.3 Portkey — AI gateway with hard budget enforcement

Portkey raised a $15M Series A in 2026 and is positioned as the governance-heavy AI gateway routing to 200+ LLMs with 20–40ms added latency [8][24]. Pricing: Developer free tier (10K logs/month, 3-day retention), Production $49/month (100K logs, +$9 per additional 100K up to 3M, 30-day log retention, 90-day metric retention, role-based access control, simple + semantic caching, alerts), Enterprise custom with SOC2 Type 2, GDPR, HIPAA, BAAs, VPC deployment, 10M+ logs, and "granular budget & rate limits" [25].

Portkey's differentiator is enforcement. Virtual keys can have monthly or custom budget limits set at provider or Portkey-key level, with rate limits applied per-workspace or per-virtual-key [24][26]. When a team's key hits its monthly cap, requests are blocked — not merely logged. This is the mechanism that makes chargeback actually work. Qoala, a Portkey customer running 30 million policies per month across 25+ GenAI use cases, uses it specifically for per-use-case cost tracking and key governance [25]. Another Portkey user (Ario) reported it "saved thousands of dollars by caching tests that don't require reruns" in GitHub CI workflows [25].
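The enforcement model described above, where a key's cap blocks requests rather than merely logging them, can be sketched as a pre-flight check plus a post-call ledger update. `VirtualKeyBudget` and `BudgetExceeded` are hypothetical names, not Portkey's API, and the sketch keeps state in memory for a single process.

```python
from dataclasses import dataclass


class BudgetExceeded(Exception):
    """Raised when a request would push a key past its hard monthly cap."""


@dataclass
class VirtualKeyBudget:
    team: str
    monthly_cap_usd: float
    spent_usd: float = 0.0

    def authorize(self, estimated_cost_usd: float) -> None:
        # Check before forwarding to the provider: blocking, not logging,
        # is what turns a budget into a hard cap.
        if self.spent_usd + estimated_cost_usd > self.monthly_cap_usd:
            raise BudgetExceeded(
                f"{self.team}: ${self.spent_usd:.2f} of ${self.monthly_cap_usd:.2f} used"
            )

    def record(self, actual_cost_usd: float) -> None:
        # Called after the response arrives, with cost computed from real token usage.
        self.spent_usd += actual_cost_usd

    def reset_for_new_month(self) -> None:
        self.spent_usd = 0.0
```

Because the true cost is only known after the response arrives, the pre-flight check is only as tight as its estimate; pricing prompt tokens plus `max_tokens` at list rates errs on the safe side. A multi-replica gateway would also need the ledger in shared storage with atomic updates, which this sketch leaves out.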

3.4 Braintrust — Evaluation-first with cost attribution

Braintrust is the highest-priced of the four: Free tier includes 1M trace spans, Pro $249/month with unlimited spans, Enterprise custom (typically $2,000–$5,000/month for mid-size teams) [27][14]. Its niche is evaluation + regression testing; cost attribution is excellent (per-request breakdowns by user/feature/model with tag-based attribution and budget-overrun alerts [27]) but you're paying mostly for the eval engine. Verdict across multiple comparisons: "Worth it when prompt regression is a real cost driver," otherwise overkill [14].

3.5 Feature Matrix

| Capability | Helicone | Langfuse | Portkey | Braintrust |
|---|---|---|---|---|
| Starting price | Free / $20 | Free self-host / $29 | Free / $49 | Free / $249 |
| Free tier | 10K–100K req/mo | 50K obs/mo | 10K logs/mo | 1M spans |
| Integration | 1-line proxy | SDK / OTEL | Gateway + SDK | SDK |
| Cost tracking | Native (300+ models) | Token est. w/ tiers | Native + budgets | Native + tags |
| Budget enforcement | Limited | None native | Hard limits | Alerts |
| Caching (built-in) | Yes (20–30% savings) | No | Simple + semantic | No |
| Evaluation | Basic | Strong | Basic | Best-in-class |
| Self-host | Yes (MIT OSS) | Yes (MIT OSS) | Partial OSS | Limited |
| Latency overhead | Minimal | SDK only | 20–40ms | SDK only |

Sources: [14][15][16][17][19][22][24][25][27].

4. Caching — The Single Biggest Cost Lever

Every governance story starts with caching because the math is unambiguous. All three major providers now discount cached tokens aggressively, and dashboards that measure cache-hit rate directly expose savings opportunities.

- Anthropic prompt caching: cache writes cost 25% more than standard input tokens, but cache reads cost only 10% of the base rate, a 90% discount. Caching requires explicit `cache_control` markers and a minimum prefix length [28][29].

- OpenAI automatic prompt caching: enabled by default, delivers 50% cost savings on eligible prefixes [30].

- Google Gemini: offers both implicit and explicit caching modes [31].
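For Anthropic, the explicit marker goes on the content block that ends the reusable prefix. The sketch below only builds the request body as a plain dictionary (so it runs without network access); the model ID is a placeholder and `build_cached_request` is a hypothetical helper. The field layout (`system` as a list of text blocks carrying `cache_control: {"type": "ephemeral"}`) follows Anthropic's Messages API.

```python
import json


def build_cached_request(model: str, system_prompt: str, user_message: str,
                         max_tokens: int = 1024) -> dict:
    """Messages API request body with the system prompt marked as a cacheable prefix."""
    return {
        "model": model,  # placeholder: pass the Claude model ID you actually use
        "max_tokens": max_tokens,
        "system": [
            {
                "type": "text",
                "text": system_prompt,  # large, stable content goes first
                # Cache breakpoint: the prefix up to and including this block is cached.
                "cache_control": {"type": "ephemeral"},
            }
        ],
        # Per-request content comes after the breakpoint so it does not bust the cache.
        "messages": [{"role": "user", "content": user_message}],
    }


payload = build_cached_request("YOUR_CLAUDE_MODEL_ID", "<long compliance document>", "Summarize clause 4.")
print(json.dumps(payload, indent=2))
```

With the official SDK the body would be sent as `anthropic.Anthropic().messages.create(**payload)`; the response's `usage.cache_creation_input_tokens` and `usage.cache_read_input_tokens` fields show whether a call wrote or read the cache, which is the raw signal a per-template cache-hit view needs.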

Published benchmarks on real workloads:

- An arXiv evaluation of long-horizon agentic tasks found prompt caching reduces API costs 45–80% and improves time-to-first-token 13–31% [32].

- Morph reports up to 90% cut in input token costs and 80% latency reduction on identical prompt prefixes [33].

- Particula Tech's production case study: prompt caching cut input token costs 40%, and layered exact-match cache served 22% of queries from cache with zero quality risk, for a combined 75% AI API cost reduction [34].

- Mercury Labs reports a customer with a 12,000-token compliance document in every system prompt: bill dropped 82% within a week of implementing prompt caching [35].

- The three-tier model (exact-match → prefix → semantic) stacks per-layer reductions of 30–40%, 50–70%, and 70–90% respectively [36]. Semantic caching (vector-based) is reported to cut OpenAI/Anthropic bills 40–70% on FAQ-heavy workloads [37].

- Redress Compliance consolidates the industry position: prompt caching + intelligent model routing + batch APIs achieve 70–95% cost reduction on suitable workloads without quality loss [38].
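The exact-match and semantic tiers can be layered in front of the provider call. The sketch below is a minimal in-memory version under stated assumptions: the class and function names are hypothetical, `toy_embed` is a bag-of-words stand-in for a real embedding model, and the 0.9 cosine threshold is arbitrary. Provider prefix caching (the middle tier) happens server-side and is not modeled here.

```python
import hashlib
import math
from typing import Callable, List, Optional, Tuple

Embedder = Callable[[str], List[float]]


def cosine(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class TwoTierCache:
    """Exact-match lookup first, then nearest-neighbour semantic lookup."""

    def __init__(self, embed: Embedder, threshold: float = 0.9):
        self.embed = embed
        self.threshold = threshold  # minimum cosine similarity for a semantic hit
        self.exact: dict = {}
        self.entries: List[Tuple[str, List[float], str]] = []  # (model, vector, response)

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        normalized = " ".join(prompt.lower().split())  # case/whitespace-insensitive
        return hashlib.sha256(f"{model}\x00{normalized}".encode()).hexdigest()

    def get(self, model: str, prompt: str) -> Optional[str]:
        hit = self.exact.get(self._key(model, prompt))
        if hit is not None:
            return hit
        query = self.embed(prompt)
        best, best_sim = None, self.threshold
        for entry_model, vector, response in self.entries:
            if entry_model != model:
                continue  # never serve one model's answer for another
            sim = cosine(query, vector)
            if sim >= best_sim:
                best, best_sim = response, sim
        return best

    def put(self, model: str, prompt: str, response: str) -> None:
        self.exact[self._key(model, prompt)] = response
        self.entries.append((model, self.embed(prompt), response))


# Toy bag-of-words embedder so the sketch runs without an embedding model.
VOCAB = ["reset", "password", "refund", "order", "how", "do", "i", "my"]


def toy_embed(text: str) -> List[float]:
    words = text.lower().replace("?", "").split()
    return [float(words.count(w)) for w in VOCAB]


cache = TwoTierCache(toy_embed, threshold=0.9)
cache.put("faq-model", "How do I reset my password?", "Use Settings > Security.")
```

Exact-match hits return a response generated for the identical (normalized) prompt, which is why that tier carries no quality risk; semantic hits return an answer to a merely similar prompt, so the threshold has to be tuned per endpoint, and a production version would use a vector index rather than the linear scan shown.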

The caveat: caches only pay off past a break-even point — Anthropic's 25% write surcharge means a prefix must be reused enough times to recoup the markup, so dashboards need cache-hit-rate views per prompt template, not just aggregate hit rate [39].
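The break-even arithmetic is simple for back-to-back reuse: with writes at 1.25× and reads at 0.10× the base input rate, n uses of a prefix cost 1.25 + 0.10(n − 1) base units cached versus n uncached, so caching wins once n > 1.15 / 0.9 ≈ 1.28, i.e. from the second use. What breaks the math in practice is expiry. The sketch below, which assumes a five-minute TTL refreshed on every hit and uses a hypothetical `prefix_costs` helper, shows how the same three requests flip from a saving to a loss when they are spread out.

```python
from typing import Iterable, Optional, Tuple


def prefix_costs(
    request_times_s: Iterable[float],
    prefix_tokens: int,
    base_price_per_mtok: float,
    ttl_s: float = 300.0,            # assumed five-minute cache lifetime
    write_multiplier: float = 1.25,  # 25% write surcharge
    read_multiplier: float = 0.10,   # reads at 10% of the base rate
) -> Tuple[float, float]:
    """Return (uncached_cost, cached_cost) in USD for one shared prompt prefix."""
    per_token = base_price_per_mtok / 1_000_000
    times = sorted(request_times_s)
    uncached = len(times) * prefix_tokens * per_token
    cached = 0.0
    expires_at: Optional[float] = None
    for t in times:
        if expires_at is not None and t <= expires_at:
            cached += prefix_tokens * per_token * read_multiplier   # cache hit
        else:
            cached += prefix_tokens * per_token * write_multiplier  # miss: (re)write
        expires_at = t + ttl_s  # assume every use refreshes the TTL
    return uncached, cached
```

With a 10,000-token prefix at $3/MTok, three requests one minute apart cost $0.0435 cached versus $0.09 uncached; the same three requests ten minutes apart all miss and cost $0.1125, 25% more than not caching at all. That difference is invisible in an aggregate hit-rate chart.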

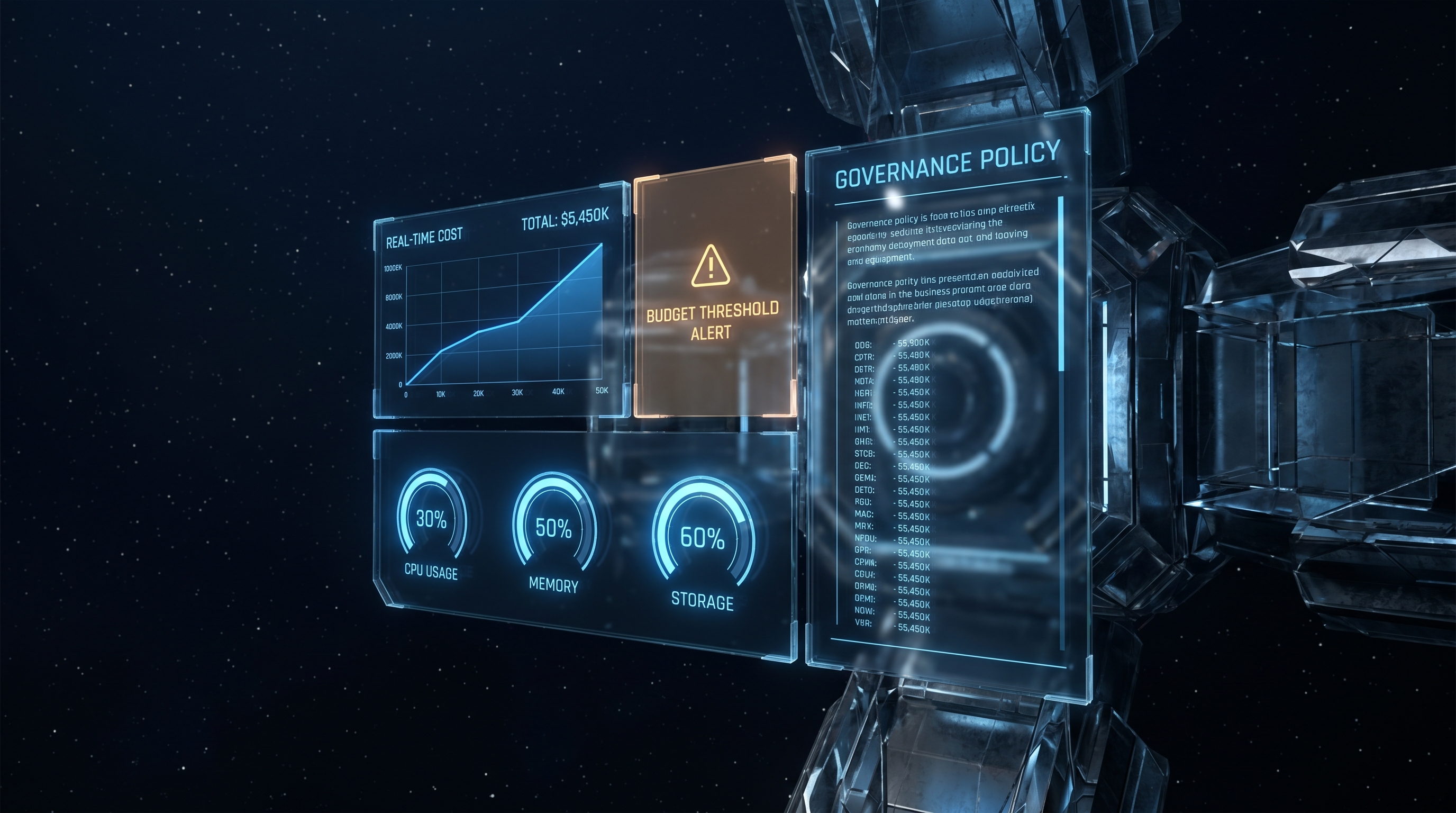

5. Budgets, Alerts, and Chargeback Mechanics

5.1 Showback vs Chargeback for AI

Showback = finance publishes weekly reports of each team's AI usage; no money moves. Chargeback = costs are actually moved into the consuming team's budget, creating direct incentive alignment [40]. FinOps Foundation data: 53.4% of organizations cannot see the full scope of what they spend on AI, and GPU workloads now account for 18% of cloud spend, up from 4% in 2023 [41].

Chargeback requires three mechanical capabilities that 2026 dashboards increasingly provide:

- Tag propagation at request creation time. Retroactive tagging from logs always under-reports long agentic traces [42]. Gateways like Portkey auto-propagate `x-portkey-user`, `x-portkey-trace-id`, and arbitrary `x-portkey-metadata-*` headers so every span in an agent graph inherits team/tenant tags.

- Hierarchical budget controls. Portkey enforces monthly/custom budgets at virtual-key, workspace, or provider level with hard cutoffs [24][26]. Braintrust supports tag-based attribution with alerts before overages hit [27]. AWS Bedrock Projects (2026) enables per-project tagging that flows into Cost Explorer [43].

- Multi-dimensional attribution. Per-user, per-task, and per-tenant breakdowns are now table stakes for multi-tenant SaaS [44]. The metadata-at-gateway pattern is standard: attach `tenant_id` to every request, rely on the gateway/proxy to log it, then roll up in the dashboard.
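The three capabilities above can be illustrated with Python's `contextvars`: tags are set once when a request (or agent run) starts, every nested call inherits them, untagged calls are rejected up front, and spend is rolled up along any tag dimension afterwards. This is a single-process sketch with hypothetical names (`tagged`, `log_llm_call`, `rollup`, `COST_LOG`); a real deployment would carry the same tags as gateway headers.

```python
import contextvars
from collections import defaultdict
from contextlib import contextmanager
from typing import Dict, Iterator, List, Tuple

# Tags active for the current request/agent run; nested calls inherit them.
_TAGS: contextvars.ContextVar = contextvars.ContextVar("llm_cost_tags")

COST_LOG: List[dict] = []  # stand-in for a gateway's request log


@contextmanager
def tagged(**tags: str) -> Iterator[Dict[str, str]]:
    """Merge new tags over inherited ones for the duration of the block."""
    merged = {**_TAGS.get({}), **tags}
    token = _TAGS.set(merged)
    try:
        yield merged
    finally:
        _TAGS.reset(token)


def log_llm_call(model: str, cost_usd: float) -> None:
    tags = _TAGS.get({})
    if "tenant_id" not in tags:
        # Reject at creation time instead of trying to re-tag from logs later.
        raise ValueError("untagged LLM call: tenant_id is required")
    COST_LOG.append({**tags, "model": model, "cost_usd": cost_usd})


def rollup(records: List[dict], *dims: str) -> Dict[Tuple, float]:
    totals: Dict[Tuple, float] = defaultdict(float)
    for record in records:
        totals[tuple(record.get(d) for d in dims)] += record["cost_usd"]
    return dict(totals)


# An agent run: the nested "rerank" step inherits tenant and feature tags.
with tagged(tenant_id="acme", feature_id="search"):
    log_llm_call("gpt-5-mini", 0.002)
    with tagged(step="rerank"):
        log_llm_call("gpt-5", 0.010)
with tagged(tenant_id="globex", feature_id="chat"):
    log_llm_call("gpt-5", 0.020)

print(rollup(COST_LOG, "tenant_id"))
```

The same `rollup` call with `("tenant_id", "model")` or `("feature_id",)` yields the per-tenant, per-model, or per-feature views that a showback report or chargeback journal draws on.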

5.2 Budget Alert Patterns

Mature 2026 stacks implement tiered alerts: 50% soft notice → 80% team warning → 100% hard block. Portkey and Braintrust both ship this natively. For organizations running Langfuse OSS without native budgets, the common pattern is to export traces to ClickHouse (Langfuse's 2025 data-stack migration [45]) and wire alerts through Grafana or a dedicated layer such as AI Cost Board ($9.99/month for 10K requests) or Vantage (which describes itself as the "market leader for FinOps-native LLM cost workflows" [46]).
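The 50% / 80% / 100% ladder needs one detail that is easy to miss: each level should fire once per budget period, not on every request after it is crossed. A minimal sketch, with hypothetical class and level names:

```python
from typing import List, Set

# (fraction of budget, alert level) for the 50% / 80% / 100% pattern.
THRESHOLDS = ((0.50, "soft_notice"), (0.80, "team_warning"), (1.00, "hard_block"))


class BudgetAlerter:
    """Fires each threshold at most once per budget period."""

    def __init__(self, budget_usd: float):
        self.budget_usd = budget_usd
        self.fired: Set[str] = set()

    def check(self, spend_usd: float) -> List[str]:
        """Return the levels newly crossed since the last check, lowest first."""
        newly_crossed = []
        for fraction, level in THRESHOLDS:
            if spend_usd >= fraction * self.budget_usd and level not in self.fired:
                self.fired.add(level)
                newly_crossed.append(level)
        return newly_crossed

    def start_new_period(self) -> None:
        self.fired.clear()
```

In a gateway setting the `hard_block` level would be the signal to switch the team's key to blocking, while the two lower levels would typically go to chat or email.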

5.3 Throttling and Model Tiering

Clarifai reports that enterprise AI budgets more than doubled between early 2024 and early 2026 as workloads shifted from one-time training to continuous inference [47]. The dominant cost-control response is automatic model-tier routing: send cheap queries to Gemini Flash ($0.30/MTok) or GPT-5 nano, and escalate to Claude Opus or GPT-5.4 Pro only when a retrieval or reasoning gate fires. Automate & Deploy reports 40–60% cost reductions from combined caching, routing, and token optimization [48]. Redress Compliance notes that coding-assist adoption went from 11% to 50% of enterprise LLM usage between 2024 and 2026, and that without a routing policy this category alone can inflate a line item 10× [38].
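A rule-based version of tier routing fits in a few lines. Everything here is an assumption for illustration: the tier labels stand in for real model IDs, the keyword gate is a placeholder for whatever retrieval or reasoning check a team actually uses, and refusing to escalate past the 80% budget warning is one possible policy choice, not a documented vendor behavior.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional, Set

# Hypothetical tier labels; map them to whatever model IDs your gateway exposes.
COMMODITY_MODEL = "commodity-tier"  # e.g. a Flash/nano-class model
FLAGSHIP_MODEL = "flagship-tier"    # e.g. an Opus/Pro-class model


@dataclass
class LLMRequest:
    prompt: str
    tags: Set[str] = field(default_factory=set)


def keyword_reasoning_gate(req: LLMRequest) -> bool:
    # Placeholder gate; a real one might be a classifier or a retrieval-confidence check.
    return any(w in req.prompt.lower() for w in ("prove", "step by step", "multi-hop"))


def route(
    req: LLMRequest,
    gate: Optional[Callable[[LLMRequest], bool]] = keyword_reasoning_gate,
    budget_used_fraction: float = 0.0,
) -> str:
    """Commodity by default; flagship only on an explicit tag or a firing gate."""
    wants_flagship = "flagship" in req.tags or (gate is not None and gate(req))
    # Once a team is past its 80% warning, stop escalating and stay on the cheap tier.
    if wants_flagship and budget_used_fraction < 0.80:
        return FLAGSHIP_MODEL
    return COMMODITY_MODEL
```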

6. Integrated Vendors (Gateway + FinOps)

Two trends define 2026:

- Gateway-as-control-plane. Portkey, LiteLLM, Helicone, and LLMGateway all offer hard budget enforcement because the gateway sits on the request path. FinOps teams prefer this over log-only tools because a showback report you can't enforce is advisory.

- Traditional FinOps vendors extend to tokens. Vantage, CloudZero, Cloudchipr, nOps, and Ternary now ingest OpenAI and Anthropic invoices, normalize them against cloud spend, and expose token-level cost attribution alongside EC2/GPU spend [46][6][49]. Vantage positions itself as the unified view — "real, trustworthy visibility into OpenAI and Anthropic costs alongside your broader cloud bill" [46].

AWS Bedrock Projects (2026) closes the loop on the cloud side: each project gets a tag; pass project_id in Bedrock API calls; cost-allocation tags activate in AWS Billing and surface in Cost Explorer and Data Exports [43]. This is the native pattern for teams standardized on a single hyperscaler.

7. Reference Architecture for 2026

A defensible governance stack for a Series-B-and-up SaaS company:

- Gateway layer: Portkey (enterprise) or self-hosted Helicone/LiteLLM for budget enforcement, routing, caching, virtual keys per team.

- Observability layer: Langfuse OSS (self-hosted on ClickHouse) for deep traces + prompt registry; or Helicone Cloud for low-friction teams.

- Evaluation layer: Braintrust if prompt regression is material; otherwise Langfuse evals.

- Caching strategy: Provider prefix caching ON for all system prompts >1,024 tokens; semantic cache layer (Redis + embeddings) in front of FAQ-heavy endpoints.

- FinOps integration: Vantage or CloudZero to consolidate OpenAI/Anthropic/Bedrock invoices with AWS/GCP spend; weekly showback to engineering leads; monthly chargeback journals to finance.

- Policy: Per-team virtual keys with 50%/80%/100% budget alerts, per-model routing rules (commodity tier by default, flagship only on explicit tags), mandatory `tenant_id` + `feature_id` headers on every request, and retention policies aligned to log volumes.
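The policy bullet can be enforced as an admission check that runs at the gateway before any provider call. The sketch below uses hypothetical allowlists and function names; it rejects requests missing `tenant_id` or `feature_id`, requires an explicit `flagship` tag for flagship models, and rejects models that are on neither list.

```python
from typing import Dict, FrozenSet, Tuple

REQUIRED_METADATA = ("tenant_id", "feature_id")
# Hypothetical allowlists; in practice these would come from the gateway config.
COMMODITY_MODELS = frozenset({"gpt-5-mini", "gemini-flash"})
FLAGSHIP_MODELS = frozenset({"claude-opus", "gpt-5.4-pro"})


def admit(model: str, metadata: Dict[str, str],
          tags: FrozenSet[str] = frozenset()) -> Tuple[bool, str]:
    """Gateway-side policy check run before any provider call."""
    missing = [key for key in REQUIRED_METADATA if not metadata.get(key)]
    if missing:
        return False, "missing required metadata: " + ", ".join(missing)
    if model in FLAGSHIP_MODELS:
        if "flagship" not in tags:
            return False, f"{model} requires an explicit 'flagship' tag"
    elif model not in COMMODITY_MODELS:
        return False, f"{model} is not on the model allowlist"
    return True, "ok"
```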

Teams that implement even half of this are positioned to capture the 70–95% reductions the caching and routing literature reports for suitable workloads; teams that don't typically show up among the 84% reporting a >6% gross-margin hit from AI costs [5][38].

8. Closing Observation

The tooling is no longer the bottleneck. A four-developer team can run Helicone OSS + Langfuse OSS + provider prompt caching for $0/month in software and capture 90%+ of the visibility a $10K/month enterprise stack provides. What's scarce is the organizational discipline: tagging at request time, enforcing budget caps at the gateway, and treating cache-hit rate as a first-class production SLO. The 2026 data is unambiguous — 98% of FinOps teams now own AI spend [1] — and the gap between organizations that have wired up chargeback and those still doing showback-only correlates directly with gross-margin outcomes.

References

[1] FinOps Foundation — State of FinOps 2026 Report, https://data.finops.org/
[2] Ternary — State of FinOps 2026: Key Takeaways, https://ternary.app/blog/state-of-finops-2026/
[3] Redress Compliance — AI Cost Allocation: Showback vs Chargeback, https://redresscompliance.com/ai-showback-chargeback-genai-cost-allocation.html
[4] Iternal.ai — Chargeback, Showback, Budgets (2026), https://iternal.ai/ai-cost-allocation
[5] Kong HQ — LLM Cost Management: AI Showback and Chargeback, https://konghq.com/blog/enterprise/llm-cost-management-ai-showback-and-chargeback
[6] CloudZero — FinOps Strategy For Managing Claude API & Anthropic Costs, https://www.cloudzero.com/blog/finops-for-claude/
[7] nOps — How to Tag AI Cloud Spend, https://www.nops.io/blog/tag-ai-cloud-spend/
[8] Implicator.ai — Best LLM Token Monitoring Tools in 2026, https://www.implicator.ai/the-best-llm-token-monitoring-tools-in-2026-and-which-one-you-actually-need-2/
[9] FutureAGI (Substack) — Top 11 LLM API Providers in 2026, https://futureagi.substack.com/p/top-11-llm-api-providers-in-2026
[10] IntuitionLabs — LLM API Pricing Comparison (2025): OpenAI, Gemini, Claude, https://intuitionlabs.ai/articles/llm-api-pricing-comparison-2025
[11] TLDL.io — LLM API Pricing 2026, https://www.tldl.io/resources/llm-api-pricing-2026
[12] LLMGateway — OpenAI vs Anthropic vs Google: Real Cost Comparison 2026, https://llmgateway.io/blog/openai-vs-anthropic-vs-google-cost-comparison
[13] Helicone Docs — Cost Tracking, https://docs.helicone.ai/guides/cookbooks/cost-tracking
[14] TokenMix — LangSmith vs Helicone vs Braintrust: LLM Observability 2026, https://tokenmix.ai/blog/langsmith-vs-helicone-vs-braintrust-observability-2026
[15] Braintrust — 5 Best Tools for Monitoring LLM Applications in 2026, https://www.braintrust.dev/articles/best-llm-monitoring-tools-2026
[16] TokenMix — 5 LLM Monitoring Tools 2026, https://tokenmix.ai/blog/ai-api-monitoring-tools
[17] Latitude — Best Helicone Alternatives for LLM Monitoring (2026), https://latitude.so/blog/helicone-alternatives
[18] Langfuse — Self-Hosted Pricing, https://langfuse.com/pricing-self-host
[19] Langfuse — Cloud Pricing, https://langfuse.com/pricing
[20] Cekura — Langfuse Pricing Plans 2026, https://www.cekura.ai/blogs/langfuse-pricing
[21] Langfuse — Pricing Tiers for Accurate Model Cost Tracking, https://langfuse.com/changelog/2025-12-02-model-pricing-tiers
[22] AppScale — Langfuse vs LangSmith vs Braintrust vs Helicone (2026), https://appscale.blog/en/blog/langfuse-vs-langsmith-vs-braintrust-vs-helicone-2026
[23] AI Cost Board — Helicone vs Langfuse, https://aicostboard.com/comparisons/helicone-vs-langfuse
[24] Portkey Docs — Enterprise Offering, https://portkey.ai/docs/virtual_key_old/product/enterprise-offering
[25] Portkey — Pricing, https://portkey.ai/pricing
[26] Portkey Docs — Enforce Budget Limits and Rate Limits, https://portkey.ai/docs/product/administration/enforce-budget-and-rate-limit
[27] AI Cost Board — Best LLM Cost Tracking Tools 2026, https://aicostboard.com/guides/best-llm-cost-tracking-tools-2026
[28] Tianpan — The Optimization That Cuts LLM Costs by 90%, https://tianpan.co/blog/2025-10-13-prompt-caching-cut-llm-costs
[29] Stackviv — Prompt Caching and KV Cache, https://stackviv.ai/blog/prompt-caching-kv-cache-explained
[30] Introl — Prompt Caching Infrastructure, https://introl.com/blog/prompt-caching-infrastructure-llm-cost-latency-reduction-guide-2025
[31] Athenic — Cut LLM Costs by 60% Without Sacrificing Quality, https://getathenic.com/blog/prompt-caching-cost-optimisation-ai-agents
[32] arXiv — An Evaluation of Prompt Caching for Long-Horizon Agentic Tasks, https://arxiv.org/html/2601.06007v1
[33] MorphLLM — Prompt Caching: How Anthropic, OpenAI, and Google Cut LLM Costs by 90%, https://www.morphllm.com/prompt-caching
[34] Particula Tech — How Smart Caching Cut Our AI API Costs by 75%, https://particula.tech/blog/cut-ai-api-costs-smart-caching-architecture
[35] Mercury Labs — Understanding Prompt Caching, https://mercurylabs.io/posts/prompt-caching
[36] GPTPrompts — Prompt Caching: Reduce AI Costs 90%, https://gptprompts.ai/prompt-caching
[37] Maxim AI — Reducing Your OpenAI and Anthropic Bill with Semantic Caching, https://www.getmaxim.ai/articles/reducing-your-openai-and-anthropic-bill-with-semantic-caching/
[38] Redress Compliance — AI API Cost Reduction: Prompt Caching, https://redresscompliance.com/ai-api-cost-reduction-prompt-caching-routing.html
[39] Tianpan — When Provider-Side Prefix Caching Actually Pays Off, https://tianpan.co/blog/2026-04-17-prompt-cache-break-even-math
[40] Redress Compliance — FinOps for AI: Governing GenAI and ML, https://redresscompliance.com/finops-ai-genai-ml-spend-governance-enterprise.html
[41] ViviScape — Why Your AI Spend Is Out of Control, https://viviscape.com/news/ai-finops-enterprise-cost-management
[42] DigitalApplied — LLM Agent Cost Attribution Production Guide 2026, https://www.digitalapplied.com/blog/llm-agent-cost-attribution-guide-production-2026
[43] AWS — Manage AI costs with Amazon Bedrock Projects, https://aws.amazon.com/blogs/machine-learning/manage-ai-costs-with-amazon-bedrock-projects/
[44] Particula Tech — Per-Tenant LLM Cost Attribution for Multi-Tenant SaaS, https://particula.tech/blog/per-tenant-llm-cost-attribution-multi-tenant-saas
[45] ClickHouse — A new data stack for modern LLM applications (Langfuse), https://clickhouse.com/blog/langfuse-and-clickhouse-a-new-data-stack-for-modern-llm-applications
[46] Vantage — Best FinOps Tools for Tracking AI Costs, https://www.vantage.sh/blog/best-finops-tools-for-ai
[47] Clarifai — Budgets, Throttling & Model Tiering, https://www.clarifai.com/blog/ai-cost-controls
[48] Automate & Deploy — Token Optimization, Caching, and Routing, https://automateanddeploy.com/knowledge/ai-machine-learning/llm-cost-engineering-tokens-caching-routing/
[49] Cloudchipr — How to Know Which Team Is Actually Spending, https://cloudchipr.com/blog/ai-cost-allocation
