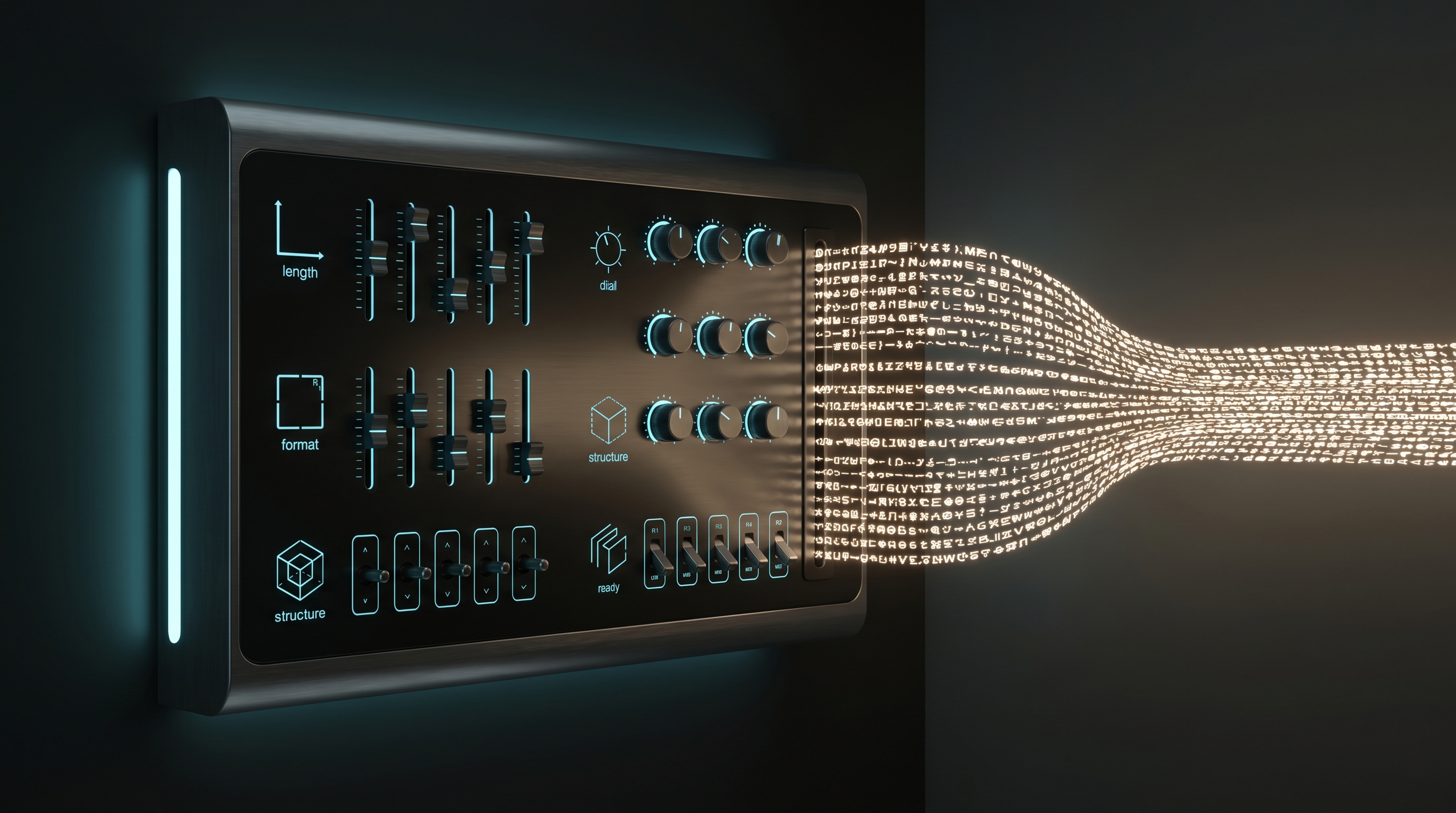

Controlling Output Tokens

How to govern what an LLM produces — length, format, structure, and reasoning depth — through the parameters every major API exposes in 2025-2026.

1. max_tokens / max_completion_tokens

The most fundamental output control is a hard cap on the number of tokens the model may generate.

OpenAI uses different parameters depending on the API surface. The Chat Completions API accepts max_completion_tokens; the older max_tokens field is deprecated there and is not accepted by o-series reasoning models. The Responses API uses max_output_tokens instead. For reasoning models (o-series), max_completion_tokens covers both visible output and internal reasoning tokens, a critical distinction [1][2].

Anthropic (Claude) uses max_tokens on the Messages API. When extended thinking is enabled, max_tokens acts as a hard limit on total output: thinking tokens plus response text combined. On Claude Opus 4.6 and Sonnet 4.6, the maximum is 128K and 64K respectively; the Batch API raises this to 300K with a beta header [7][8].

Google (Gemini) uses max_output_tokens in the generation config. For Gemini 2.5 Pro and Flash with thinking enabled, thinking tokens count against this budget, which can lead to empty responses if the budget is too small for the model's internal reasoning [1][3].

Practical guidance: For reasoning models, set max_tokens to roughly 3-4× your expected visible output to leave headroom for thinking tokens. For standard models, set it to the expected response length plus a small buffer [1].
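The rule of thumb above can be sketched as a small helper. This is an illustration only: the function name, the default multiplier of 4, and the 100-token buffer are assumptions chosen for the example, not values prescribed by any provider.

```python
def suggested_max_tokens(expected_visible: int, reasoning: bool,
                         multiplier: int = 4, buffer_tokens: int = 100) -> int:
    """Return a max_tokens value following the headroom rule of thumb.

    multiplier and buffer_tokens are illustrative defaults (assumptions).
    """
    if expected_visible <= 0:
        raise ValueError("expected_visible must be positive")
    if reasoning:
        # Thinking tokens share the cap, so scale the visible estimate.
        return expected_visible * multiplier
    # Standard model: expected length plus a small fixed buffer.
    return expected_visible + buffer_tokens


print(suggested_max_tokens(500, reasoning=True))
print(suggested_max_tokens(500, reasoning=False))
```

Tuning the multiplier per workload from observed thinking-token counts is likely to be more accurate than any fixed default.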

2. Stop Sequences

Stop sequences are developer-defined strings that cause the model to halt generation immediately upon producing them. They act as custom end-of-output markers, giving fine-grained control over where a response terminates [4][5].

Common uses include:

- Terminating at a delimiter like \n\n or --- to prevent runaway generation

- Stopping at closing tags (e.g., </answer>) in structured prompt templates

- Halting at function-call boundaries in agent loops

All major providers (OpenAI, Anthropic, Google, Cohere) support stop sequences, passed as an array of strings; the maximum count varies by provider (OpenAI accepts up to 4). The stop string itself is excluded from the output. Combined with max_tokens, stop sequences provide a dual safety net: generation ends at whichever limit is reached first.
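The "whichever limit is reached first" behaviour can be simulated locally. The sketch below is not any provider's implementation: it treats each string in a list as one token, and the reason labels it returns are illustrative names chosen for this example.

```python
def apply_limits(tokens, stop_sequences, max_tokens):
    """Simulate generation that halts on a stop string or on the token cap.

    Returns (text, reason). Each list item counts as one token (a simplification).
    """
    text = ""
    for count, token in enumerate(tokens, start=1):
        text += token
        # Position of every stop string present in the text so far.
        hits = [text.find(s) for s in stop_sequences if s in text]
        if hits:
            # Cut at the earliest match; the stop string itself is excluded.
            return text[:min(hits)], "stop_sequence"
        if count >= max_tokens:
            return text, "max_tokens"
    return text, "end_of_stream"


stream = ["<answer>", "42", "</answer>", " extra text"]
print(apply_limits(stream, ["</answer>"], max_tokens=10))
print(apply_limits(stream, ["</answer>"], max_tokens=2))
```

With a generous cap the stop string ends the output; with a cap of 2 the token limit wins before the closing tag is ever produced.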

3. Structured Outputs and Forced JSON

Getting LLMs to produce machine-readable output reliably has evolved from fragile prompt engineering ("please return JSON") to native, guaranteed schema conformance [6][9].

Constrained Decoding

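The underlying technique is constrained decoding: at every generation step, tokens that would violate the target schema are masked out of the model's probability distribution, so they can never be sampled. Every token the model emits is therefore consistent with the schema, which removes the need for retry loops on malformed output. The guarantee covers only what is actually generated: a response cut off by the token limit can still be incomplete JSON [10].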
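A toy version of the masking step may make this concrete. The vocabulary, logits, and allowed set below are invented for illustration; real implementations derive the allowed set from a compiled grammar at each step rather than from a hand-written set.

```python
import math


def masked_softmax(logits: dict, allowed: set) -> dict:
    """Softmax restricted to allowed tokens; every other token gets probability 0."""
    kept = {tok: val for tok, val in logits.items() if tok in allowed}
    if not kept:
        raise ValueError("no allowed token available at this step")
    peak = max(kept.values())  # subtract the max for numerical stability
    weights = {tok: math.exp(val - peak) for tok, val in kept.items()}
    total = sum(weights.values())
    return {tok: weights.get(tok, 0.0) / total for tok in logits}


# The unconstrained model prefers "Sure", but a JSON object must start with "{" or "[".
step_logits = {"{": 1.0, "Sure": 3.0, "[": 0.5}
probs = masked_softmax(step_logits, allowed={"{", "["})
print(probs)
```

The highest-scoring token is removed from contention, and the remaining probability mass is renormalised over the tokens the schema permits.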

Provider Implementations

OpenAI offers Structured Outputs via response_format: { type: "json_schema", json_schema: {...} }. The schema is compiled into a constraint mask applied during decoding. Available since August 2024, it guarantees schema-valid JSON for any supported schema when strict mode is enabled [6][9].

Anthropic supports forced JSON via tool-use mechanics: you define a tool with a JSON schema and force the model to call it. A response_format: { type: "json" } JSON mode is sometimes attributed to Claude as well, but confirm it against Anthropic's current API reference before relying on it; forced tool use is the route described here.

Google Gemini supports response_mime_type: "application/json" with an optional response_schema for schema-constrained generation.

Open-source and self-hosted stacks (vLLM, NVIDIA NIM) support structured outputs via JSON schema, regex patterns, context-free grammars, and choice constraints. vLLM uses libraries like outlines and lm-format-enforcer under the hood [11][12][13].
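As a rough sketch of two of the hosted options above, the payload fragments below show an OpenAI-style response_format and an Anthropic-style forced tool call built from one schema. The invoice schema, the tool name record_invoice, and the MODEL_NAME placeholder are hypothetical; only plain dictionaries are built, and no request is sent.

```python
import json

# Hypothetical schema, used only for illustration.
invoice_schema = {
    "type": "object",
    "properties": {
        "vendor": {"type": "string"},
        "total": {"type": "number"},
    },
    "required": ["vendor", "total"],
    "additionalProperties": False,
}

# OpenAI Chat Completions: the schema is wrapped inside response_format.
openai_response_format = {
    "type": "json_schema",
    "json_schema": {"name": "invoice", "strict": True, "schema": invoice_schema},
}

# Anthropic Messages: the schema becomes a tool, and tool_choice forces the call.
anthropic_request = {
    "model": "MODEL_NAME",  # placeholder, not a real model identifier
    "max_tokens": 1024,
    "tools": [{
        "name": "record_invoice",  # hypothetical tool name
        "description": "Record the extracted invoice fields.",
        "input_schema": invoice_schema,
    }],
    "tool_choice": {"type": "tool", "name": "record_invoice"},
    "messages": [{"role": "user", "content": "Extract the invoice fields."}],
}

print(json.dumps(openai_response_format, indent=2))
```

Keeping one schema object and wrapping it per provider avoids drift between the two definitions.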

Limitations

Structured outputs guarantee syntactic validity, not semantic correctness — the model can still hallucinate values within a valid schema. OpenAI's implementation historically had a 4,096 max output token limit for structured outputs, though this has been relaxed in newer models [14].
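Because schema validity says nothing about whether the values make sense, a semantic check after parsing is still worthwhile. The sketch below assumes a hypothetical invoice object with vendor and total fields; the specific rules are examples, not a recommended rule set.

```python
import json


def check_invoice(raw: str) -> list:
    """Return semantic problems found in JSON that is already schema-valid."""
    data = json.loads(raw)
    problems = []
    if data["total"] < 0:
        problems.append("total is negative")
    if not data["vendor"].strip():
        problems.append("vendor is empty")
    return problems


# Both inputs satisfy a {vendor: string, total: number} schema.
print(check_invoice('{"vendor": "Acme", "total": 12.5}'))
print(check_invoice('{"vendor": "Acme", "total": -5}'))
```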

4. Verbosity Control

Beyond hard token limits, several softer mechanisms influence output length and detail:

- System prompt instructions: Directives like "respond in under 100 words" or "be concise" remain effective, especially with instruction-tuned models.

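- Temperature and top_p: These control sampling randomness rather than length, so any effect on verbosity is indirect. Lower temperature tends to produce more focused, often shorter responses; higher temperature can lead to more verbose, exploratory output.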

- Presence/frequency penalties: Positive frequency penalties discourage repetition, indirectly reducing verbosity in long outputs.

- Model selection: Smaller models (GPT-4o-mini, Claude Haiku, Gemini Flash) tend toward shorter responses by default. Choosing the right model tier is itself a verbosity control.

For production systems, the most reliable approach combines a system-prompt length directive with a max_tokens hard cap and stop sequences as a safety net.
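The layered approach can be sketched as a single request payload. The example uses Anthropic-style field names (system, max_tokens, stop_sequences); the MODEL_NAME placeholder, the delimiter, and the assumption of fewer than three tokens per word are illustrative choices, not provider guidance.

```python
def build_concise_request(question: str, word_limit: int = 100) -> dict:
    """Assemble a Messages-style payload with three layers of length control."""
    return {
        "model": "MODEL_NAME",  # placeholder, not a real model identifier
        # Soft control: the model is asked to stay short.
        "system": f"Respond in under {word_limit} words.",
        # Hard cap: assumes fewer than 3 tokens per word (an assumption).
        "max_tokens": word_limit * 3,
        # Safety net: halt if the model starts a new delimited section.
        "stop_sequences": ["\n\n---"],
        "messages": [{"role": "user", "content": question}],
    }


request = build_concise_request("What is a token?", word_limit=50)
print(request["system"], request["max_tokens"])
```

The hard cap is deliberately looser than the prompt directive so that a compliant response is never truncated mid-sentence.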

5. Reasoning-Token Budgets

Reasoning models — OpenAI o-series, Claude extended thinking, Gemini thinking mode, DeepSeek R1 — generate internal "thinking" tokens before producing visible output. These tokens are billed at output-token rates across all providers, making budget control essential [1][2][15].

OpenAI o-series

OpenAI's o3, o4-mini, and o1 models expose reasoning_effort (values: low, medium, high) to control how much internal reasoning the model performs; the earlier o1-mini does not accept it. Reasoning tokens are reported in usage.completion_tokens_details.reasoning_tokens on the Chat Completions API (usage.output_tokens_details.reasoning_tokens on the Responses API) but are not visible in the response body. The max_completion_tokens parameter caps the combined total of reasoning and visible tokens. There is no way to fully disable reasoning on o-series models; to avoid reasoning costs, use a non-reasoning model like GPT-4o instead [1][2].
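To see how much of a completion went to hidden reasoning, the usage block can be split as below. This sketch assumes the usage object has been converted to a plain dictionary; it accepts the Chat Completions field names (completion_tokens, completion_tokens_details) and falls back to the Responses API names (output_tokens, output_tokens_details). The sample numbers are made up.

```python
def split_output_tokens(usage: dict) -> tuple:
    """Return (reasoning_tokens, visible_tokens) from a usage dictionary."""
    total = usage.get("completion_tokens", usage.get("output_tokens", 0))
    details = (usage.get("completion_tokens_details")
               or usage.get("output_tokens_details")
               or {})
    reasoning = details.get("reasoning_tokens", 0)
    return reasoning, total - reasoning


# Illustrative numbers, not real API output.
sample_usage = {
    "prompt_tokens": 120,
    "completion_tokens": 1500,
    "completion_tokens_details": {"reasoning_tokens": 1100},
}
reasoning, visible = split_output_tokens(sample_usage)
print(f"reasoning={reasoning} visible={visible}")
```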

Claude Extended Thinking

Anthropic's approach has evolved through three phases:

- Manual budget (Claude 3.7 Sonnet, Opus 4): Set thinking: { type: "enabled", budget_tokens: N } where N ≥ 1,024. The budget_tokens parameter is a soft ceiling: the model may exceed it by up to 20% on complex tasks. It must be strictly less than max_tokens [7][8][15].

- Adaptive thinking (Opus 4.6, Sonnet 4.6): The recommended approach uses thinking: { type: "adaptive" } with an effort parameter (low, medium, high). Claude dynamically decides how much to think based on problem complexity. Manual budget_tokens is deprecated on these models [16].

- Adaptive-only (Opus 4.7+): Manual budget_tokens is rejected entirely. Adaptive thinking is the only supported mode [16].
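The constraints on the manual budget can be checked before a request is sent. The helper names below are hypothetical. The adaptive variant returns only the thinking block, since the text above does not say where the effort setting is placed in the request.

```python
def manual_thinking_config(budget_tokens: int, max_tokens: int) -> dict:
    """Build a manual thinking block, enforcing the two documented constraints."""
    if budget_tokens < 1024:
        raise ValueError("budget_tokens must be at least 1,024")
    if budget_tokens >= max_tokens:
        raise ValueError("budget_tokens must be strictly less than max_tokens")
    return {"type": "enabled", "budget_tokens": budget_tokens}


def adaptive_thinking_config() -> dict:
    """Build an adaptive thinking block; effort is configured separately."""
    return {"type": "adaptive"}


print(manual_thinking_config(budget_tokens=4000, max_tokens=8000))
print(adaptive_thinking_config())
```

Failing fast on the client side avoids spending a round trip on a request the API would reject.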

Budget calibration guidelines [8][17]:

| Budget range (tokens) | Use case |
|---|---|
| 1,024–5,000 | Light logical reasoning, simple multi-step |
| 5,000–16,000 | Complex debugging, architecture decisions |
| 16,000–40,000 | Mathematical proofs, deep analysis |
| 60,000+ | Research-level exploration |
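The calibration table can be encoded as a lookup so that budgets chosen in code stay traceable to a tier. This is a sketch: treating the upper bounds as inclusive and resolving shared boundaries to the lower tier are assumptions, and the 40,000 to 60,000 gap is preserved from the table rather than filled in.

```python
# (low, high, use case); high=None means open-ended.
BUDGET_TIERS = [
    (1_024, 5_000, "light logical reasoning, simple multi-step"),
    (5_000, 16_000, "complex debugging, architecture decisions"),
    (16_000, 40_000, "mathematical proofs, deep analysis"),
    (60_000, None, "research-level exploration"),
]


def describe_budget(budget_tokens: int) -> str:
    """Return the use case of the first tier containing budget_tokens."""
    for low, high, use_case in BUDGET_TIERS:
        if budget_tokens >= low and (high is None or budget_tokens <= high):
            return use_case
    return "outside the listed ranges"


print(describe_budget(2_000))
print(describe_budget(50_000))
```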

Billing note: On Claude 4+ models, you are billed for the full internal thinking tokens, but the API only returns a summarized thinking block. The visible thinking field may be much shorter than what was actually computed and billed [8].

Gemini Thinking Mode

Google's Gemini 2.5 Pro and Flash support thinking via thinking_config: { thinking_budget: N }. Setting thinking_budget: 0 disables thinking on Flash; 2.5 Pro does not allow thinking to be turned off. Without explicit configuration, Flash uses a lighter reasoning pass that produces fewer thinking tokens and worse results on hard tasks. Thinking tokens are returned separately under thoughts_token_count and priced at the output rate [1][2][15].
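Since thinking tokens count against the output cap, it helps to check the two values together before sending a request. The sketch below builds a plain dictionary using the field names from the text; the helper name and the rule that the budget must be smaller than the cap are assumptions made for this example.

```python
def gemini_generation_config(max_output_tokens: int, thinking_budget: int) -> dict:
    """Build a generation config, refusing budgets that leave no visible output."""
    if thinking_budget >= max_output_tokens:
        raise ValueError("thinking_budget leaves no room for visible output")
    return {
        "max_output_tokens": max_output_tokens,
        "thinking_config": {"thinking_budget": thinking_budget},
    }


config = gemini_generation_config(max_output_tokens=4096, thinking_budget=1024)
print(config)
```

A check like this catches the empty-response failure mode at configuration time rather than in production.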

DeepSeek R1

DeepSeek R1 always reasons — there is no parameter to disable it. The model returns reasoning_content alongside content, and both are billed. To avoid reasoning costs, use DeepSeek V3 instead [1][2].

Cross-Provider Cost Comparison (2026)

| Model | Output rate (USD per MTok) | Thinking rate | Budget control |
|---|---|---|---|
| OpenAI o4-mini | ~$12 | Same as output | reasoning_effort |
| Claude Sonnet 4.6 | $15 | Same as output | effort (adaptive) |
| Claude Opus 4.6 | $25 | Same as output | effort (adaptive) |
| Gemini 2.5 Pro | $10 | Same as output | thinking_budget |
| DeepSeek R1 | $2.19 | Same as output | None (always on) |

Sources: [2][15]
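Because thinking and visible tokens share one rate, the output cost of a request is simple arithmetic. The sketch below copies four rates from the table above; the token counts are invented, and input-token charges are ignored.

```python
# Output rates in USD per million tokens, taken from the table above.
OUTPUT_RATE_USD_PER_MTOK = {
    "Claude Sonnet 4.6": 15.00,
    "Claude Opus 4.6": 25.00,
    "Gemini 2.5 Pro": 10.00,
    "DeepSeek R1": 2.19,
}


def output_cost_usd(model: str, thinking_tokens: int, visible_tokens: int) -> float:
    """Cost of one response's output, with thinking billed at the output rate."""
    rate = OUTPUT_RATE_USD_PER_MTOK[model]
    return (thinking_tokens + visible_tokens) * rate / 1_000_000


# 2,000 thinking tokens plus 500 visible tokens (illustrative counts).
for name in OUTPUT_RATE_USD_PER_MTOK:
    print(f"{name}: ${output_cost_usd(name, 2_000, 500):.6f}")
```

In this example four fifths of the output bill comes from tokens the caller never sees, which is why the thinking share is worth tracking separately.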

6. Practical Recommendations

- Always set max_tokens explicitly. Relying on provider defaults wastes money and risks unexpectedly long responses.

- For reasoning models, budget 3-4× visible output. Thinking tokens consume the same budget and cost the same per token.

- Use structured outputs for machine-consumed responses. Constrained decoding eliminates schema-violating output; still handle truncated responses and validate the values themselves.

- Route by complexity. Use standard inference for simple tasks; escalate to extended thinking only when initial confidence is low [17].

- Monitor thinking-token usage. Providers now expose thinking token counts in usage metadata — track them to catch cost surprises [1][2].

- Prefer adaptive thinking on latest Claude models. Manual budget_tokens is deprecated; adaptive mode with effort levels provides better cost-quality tradeoffs [16].
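The "route by complexity" recommendation can be sketched as a small decision function. How the initial confidence score is obtained is left open here, and the 0.7 threshold and the mode labels are hypothetical values chosen for illustration.

```python
def choose_mode(initial_confidence: float, threshold: float = 0.7) -> str:
    """Pick standard inference unless the first-pass confidence is low."""
    if not 0.0 <= initial_confidence <= 1.0:
        raise ValueError("initial_confidence must be between 0 and 1")
    if initial_confidence >= threshold:
        return "standard"
    # Low confidence: escalate to the more expensive reasoning path.
    return "extended_thinking"


print(choose_mode(0.9))
print(choose_mode(0.4))
```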

References

[1] TokenMix. Thinking Tokens Trap: How Reasoning Models Burn max_tokens (2026). https://tokenmix.ai/blog/thinking-tokens-billing-trap-2026

[2] Cycles. Budgeting Reasoning Tokens: Governing Extended Thinking. https://runcycles.io/blog/budgeting-reasoning-tokens-governing-extended-thinking-before-it-bills

[3] googleapis/python-genai Issue #782. Thinking models unreliable with max_output_tokens. https://github.com/googleapis/python-genai/issues/782

[4] StackViv. Max Tokens and Stop Sequences. https://stackviv.ai/blog/max-tokens-stop-sequences-llm

[5] Rohan Paul. Stop Sequences in LLMs: Concept and Implementation. https://www.rohan-paul.com/p/stop-sequences-in-llms-concept-and

[6] dida. Structured Outputs with OpenAI and Pydantic. https://dida.do/blog/structured-outputs-with-openai-and-pydantic

[7] Claude Code Guides. Claude Extended Thinking API Guide. https://claudecodeguides.com/claude-extended-thinking-api-guide/

[8] APIScout. Claude Extended Thinking API: Cost & When to Use 2026. https://apiscout.dev/blog/claude-api-extended-thinking-mode-2026

[9] SuperJSON. Complete Guide to Structured Outputs. https://superjson.ai/blog/2025-08-17-json-schema-structured-output-apis-complete-guide/

[10] Let's Data Science. How Structured Outputs and Constrained Decoding Work. https://letsdatascience.com/blog/structured-outputs-making-llms-return-reliable-json

[11] Red Hat. Structured Outputs in vLLM. https://developers.redhat.com/articles/2025/06/03/structured-outputs-vllm-guiding-ai-responses

[12] HPE. Using Structured Outputs in vLLM. https://developer.hpe.com/blog/using-structured-outputs-in-vllm/

[13] NVIDIA. Structured Generation with NIM for LLMs. https://docs.nvidia.com/nim/large-language-models/latest/structured-generation.html

[14] OpenAI Community. Structured Outputs Deep-dive. https://community.openai.com/t/structured-outputs-deep-dive/930169

[15] Awesome Agents. Reasoning Model API Pricing Compared 2026. https://awesomeagents.ai/pricing/reasoning-model-pricing/

[16] Anthropic Docs. Adaptive Thinking. https://platform.claude.com/docs/en/build-with-claude/adaptive-thinking

[17] Claude Lab. When Extended Thinking Actually Pays Off. https://claudelab.net/en/articles/claude-ai/claude-extended-thinking-advanced-problem-solving