Context Window Economics: Pricing, KV Cache Costs, and When Big Windows Pay Off

Last updated: April 2026. All pricing reflects publicly available API rates as of Q1–Q2 2026.

1. The 1M+ Context Pricing Landscape

Context windows have expanded dramatically since 2023. GPT-4 launched with 8K tokens at $30/MTok input; by early 2026, flagship models routinely offer 1M–2M token windows at a fraction of that per-token price [1][2]. The pricing spread across providers is enormous: filling a model's full window ranges from $0.40 (Grok 4.1 Fast, 2M tokens) to $31.50 (GPT-5.4 Pro, 1.05M tokens), a roughly 78× difference, with the cheaper option holding almost twice as much context [2].

Current Flagship Pricing (per million tokens, Q2 2026)

| Model | Provider | Context Window | Input $/MTok | Output $/MTok | Long-Context Surcharge |
|---|---|---|---|---|---|
| Claude Opus 4.6 | Anthropic | 1M | $5.00 | $25.00 | None (flat rate across full window) [3][4] |
| Claude Sonnet 4.6 | Anthropic | 1M | $3.00 | $15.00 | None (flat rate) [3][4] |
| GPT-5.4 | OpenAI | 1.05M | $2.50 | $15.00 | 2× input / 1.5× output above 272K tokens [4][5] |
| GPT-5.2 | OpenAI | 1M | $1.75 | $14.00 | Not documented [6] |
| Gemini 3.1 Pro | Google | 2M | $2.00 | $12.00 | 2× input / 1.5× output above 200K tokens [4][7] |
| Gemini 2.5 Pro | Google | 1M–2M | $1.25 | $10.00 | 2× input above 200K [4] |
| Gemini 3 Flash | Google | 1M | $0.50 | $3.00 | Not listed [6] |
| Grok 4.1 Fast | xAI | 2M | $0.20 | $0.50 | Not listed [2] |
| Llama 4 Maverick | Meta (via Together) | 1M | $0.27 | $0.27 | Not listed [2] |
| DeepSeek V3.2 | DeepSeek | 128K | $0.28 | $0.42 | 90% cache discount [4] |

Budget Tier (sub-$1/MTok input)

| Model | Input $/MTok | Context | Notes |
|---|---|---|---|
| GPT-5 nano | $0.05 | 128K | Cheapest OpenAI option [2] |
| Gemini 2.0 Flash-Lite | $0.075 | 1M | Ultra-budget with full 1M window [4] |
| Gemini 2.0 Flash | $0.10 | 1M | $0.10 to fill entire 1M window [2] |
| GPT-4.1-nano | $0.10 | 1M | 1M context at rock-bottom price [8] |
| Mistral Nemo | $0.02 | 128K | Cheapest commercial API [4] |

The Cost to Fill a Full Context Window

A single request filling the entire window illustrates the stakes at scale [2]:

- Grok 4.1 Fast (2M): $0.40

- Gemini 2.0 Flash (1M): $0.10

- Llama 4 Maverick (1M): $0.27

- Claude Sonnet 4.6 (1M): $3.00

- GPT-5.4 (1.05M): $2.63 at the base rate; $5.25 once the 2× input surcharge above 272K tokens is applied

- GPT-5.4 Pro (1.05M): $31.50

- Gemini 3 Pro (2M): $4.00 at a $2.00/MTok input rate (a 2× surcharge above 200K tokens, as listed for Gemini 3.1 Pro, would raise this to $8.00)

At 1,000 calls/day with a 900K-token context, Claude Sonnet 4.6 costs ~$2,700/day ($81K/month) in input alone. Claude Opus 4.6 reaches ~$4,500/day ($135K/month) [9].
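The arithmetic behind these figures is simple enough to keep in a helper. The following is a minimal sketch, not a billing tool: the function names are illustrative, and it assumes input-only cost, a flat per-token rate, and a 30-day month.

```python
def fill_cost_usd(tokens: int, input_price_per_mtok: float) -> float:
    """Input cost in USD for one request carrying `tokens` input tokens."""
    return tokens / 1_000_000 * input_price_per_mtok


def input_cost_over_days(calls_per_day: int, tokens_per_call: int,
                         input_price_per_mtok: float, days: int = 30) -> float:
    """Input-only spend over `days`, ignoring output tokens and discounts."""
    per_call = fill_cost_usd(tokens_per_call, input_price_per_mtok)
    return calls_per_day * days * per_call


if __name__ == "__main__":
    # Prices are the per-MTok input rates quoted in the table above.
    for name, price in [("Claude Sonnet 4.6", 3.00), ("Claude Opus 4.6", 5.00)]:
        daily = input_cost_over_days(1_000, 900_000, price, days=1)
        monthly = input_cost_over_days(1_000, 900_000, price)
        print(f"{name}: ${daily:,.0f}/day, ${monthly:,.0f}/month")
```

Output tokens, caching, and batch discounts are deliberately left out, so real bills will differ.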

2. Long-Context Surcharges: The Hidden Multiplier

Not all "1M context" claims are priced equally. The critical differentiator in 2026 is whether providers charge a premium once you cross a threshold:

Anthropic (Claude): Flat-rate pricing across the entire 1M window. A 900K-token request is billed at the same per-token rate as a 9K-token one. No multiplier, no tiers [3][10].

OpenAI (GPT-5.4): Input costs double beyond 272K tokens per session; output costs increase 1.5×. A sustained 500K-token workload pays $5.00/MTok input instead of $2.50 — making it more expensive than Claude Sonnet for long-context work despite a lower base rate [4][5].

Google (Gemini 3.1 Pro): 2× input premium and 1.5× output premium above 200K tokens. Gemini 2.5 Pro follows the same pattern. This means the effective input rate jumps from $2.00 to $4.00/MTok for long documents [4][7].

Practical impact: For workloads consistently above 200K–272K tokens, Claude's flat pricing becomes the most predictable cost structure. For workloads that stay under those thresholds, OpenAI and Google offer lower base rates [4][10].
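A small sketch makes the crossover concrete. It assumes that crossing the threshold reprices the entire request at the multiplied rate, which is how the 500K-token example above reads; providers may bill differently, so treat the `InputPricing` class and its fields as illustrative rather than as any vendor's billing logic.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class InputPricing:
    base_per_mtok: float              # USD per million input tokens
    threshold_tokens: Optional[int]   # None means flat pricing
    multiplier: float = 1.0           # input multiplier above the threshold


def input_cost_usd(pricing: InputPricing, tokens: int) -> float:
    """Input cost for one request under whole-request repricing (assumption)."""
    rate = pricing.base_per_mtok
    if pricing.threshold_tokens is not None and tokens > pricing.threshold_tokens:
        rate *= pricing.multiplier
    return tokens / 1_000_000 * rate


# Rates and thresholds as quoted in this section.
PRICING = {
    "Claude Sonnet 4.6": InputPricing(3.00, None),
    "GPT-5.4": InputPricing(2.50, 272_000, 2.0),
    "Gemini 3.1 Pro": InputPricing(2.00, 200_000, 2.0),
}

if __name__ == "__main__":
    for tokens in (150_000, 500_000):
        for name, pricing in PRICING.items():
            print(f"{tokens:>7} tokens  {name:<18} ${input_cost_usd(pricing, tokens):.2f}")
```

Under these assumptions the flat-rate model is the most expensive of the three at 150K tokens and the cheapest at 500K.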

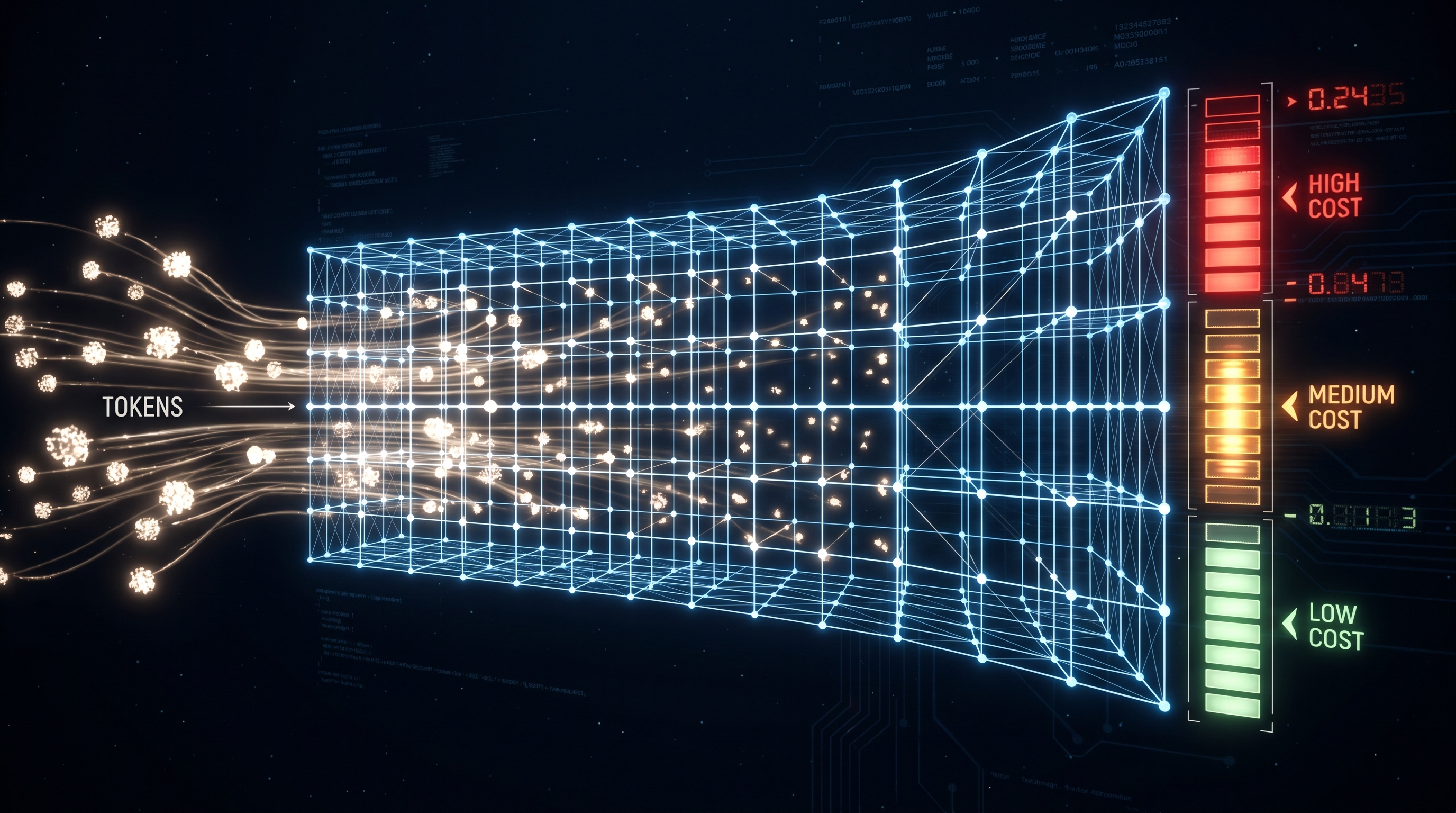

3. KV Cache: The Infrastructure Cost Behind the Price Tag

Every token in the context window requires storing key-value (KV) pairs in GPU memory during inference. This is the fundamental hardware cost that drives long-context pricing.

Memory Scaling

KV cache memory grows linearly with sequence length and batch size [11]:

Memory = batch_size × seq_length × num_layers × 2 × num_kv_heads × head_dim × precision_bytes

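For Llama 3.1-70B (FP16), counting all 64 attention heads as KV heads [11] (the model's grouped-query attention uses 8 KV heads, which makes each figure below 8× smaller):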

- Per token: ~2.5 MB of KV cache

- 8K context: ~20 GB per request

- Batch of 32 at 8K: ~640 GB total KV cache — often exceeding model weights

At 1M tokens, a single request's KV cache can require ~100 GB of GPU memory [12]. This is why long-context inference is expensive: it monopolizes GPU HBM that could otherwise serve many shorter requests.
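The formula above translates directly into code. In this sketch the model shape (80 layers, head dimension 128, 64 attention heads, 8 grouped-query KV heads) is an assumption taken from the publicly released Llama 3.1 70B configuration, not from the cited source. The ~2.5 MB per-token figure is reproduced when all 64 heads are counted; with 8 KV heads the same formula gives roughly 0.31 MB.

```python
def kv_cache_bytes(batch_size: int, seq_length: int, num_layers: int,
                   num_kv_heads: int, head_dim: int, precision_bytes: int) -> int:
    """KV cache size in bytes; the factor 2 covers keys and values."""
    return (batch_size * seq_length * num_layers * 2
            * num_kv_heads * head_dim * precision_bytes)


MIB = 2**20
GIB = 2**30

# Assumed model shape (see the note above); FP16 means 2 bytes per element.
LAYERS, HEAD_DIM, FP16 = 80, 128, 2

if __name__ == "__main__":
    for label, kv_heads in [("64 heads counted", 64), ("8 KV heads (GQA)", 8)]:
        per_token = kv_cache_bytes(1, 1, LAYERS, kv_heads, HEAD_DIM, FP16)
        per_8k = kv_cache_bytes(1, 8192, LAYERS, kv_heads, HEAD_DIM, FP16)
        batch_32 = kv_cache_bytes(32, 8192, LAYERS, kv_heads, HEAD_DIM, FP16)
        print(f"{label}: {per_token / MIB:.4f} MiB/token, "
              f"{per_8k / GIB:.1f} GiB at 8K, {batch_32 / GIB:.0f} GiB for batch 32")
```

Because every factor enters multiplicatively, halving `precision_bytes` or the number of KV heads halves the cache, which is why quantization and grouped-query attention matter so much at long sequence lengths.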

Fragmentation and Waste

Traditional inference systems waste 60–80% of allocated KV cache memory through fragmentation and over-allocation. vLLM's PagedAttention reduced this waste to under 4%, enabling 2–4× throughput improvements — equivalent to doubling GPU investment without buying hardware [11].

KV Cache Quantization

FP8 KV cache (supported on H100/H200 GPUs) halves memory requirements with minimal quality loss. INT4 quantization achieves 75% reduction but with moderate accuracy impact. These optimizations are essential for making 1M-token inference economically viable on existing hardware [11].

Self-Hosted KV Cache Economics

For self-hosted deployments, KV cache optimization directly translates to cost savings [11]:

- PagedAttention: 2–4× throughput gain (free, default in vLLM)

- FP8 quantization: 2× memory reduction per request

- Prefix caching: 87%+ cache hit rates for workloads with shared system prompts

- Cache-aware routing: 88% faster time-to-first-token for warm cache hits
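To see why a 2× memory reduction matters, it helps to express it as concurrency. The sketch below is illustrative only: the 40 GiB KV budget and the model shape (80 layers, 8 KV heads, head dimension 128) are hypothetical values chosen for round numbers, and it ignores fragmentation, paging granularity, and activation memory.

```python
def kv_bytes_per_sequence(seq_length: int, num_layers: int, num_kv_heads: int,
                          head_dim: int, precision_bytes: int) -> int:
    """KV cache bytes for one sequence (keys and values, hence the factor 2)."""
    return seq_length * num_layers * 2 * num_kv_heads * head_dim * precision_bytes


def max_concurrent_sequences(kv_budget_bytes: int, **shape: int) -> int:
    """How many full-length sequences fit in a fixed KV cache budget."""
    return kv_budget_bytes // kv_bytes_per_sequence(**shape)


GIB = 2**30
# Hypothetical deployment: 40 GiB of HBM left for KV cache after weights,
# serving 8K-token sequences.
SHAPE = dict(seq_length=8192, num_layers=80, num_kv_heads=8, head_dim=128)

fp16_slots = max_concurrent_sequences(40 * GIB, precision_bytes=2, **SHAPE)
fp8_slots = max_concurrent_sequences(40 * GIB, precision_bytes=1, **SHAPE)
print(f"FP16: {fp16_slots} sequences, FP8: {fp8_slots} sequences")
```

In this idealized setup, switching the cache from FP16 to FP8 doubles the number of sequences that fit in the same memory.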

4. Prompt Caching: The Great Equalizer

Prompt caching is the single highest-leverage cost optimization for long-context workloads. All three major providers offer it, but with very different economics [13][14][15].

Provider Comparison

| Feature | Anthropic | OpenAI | Google Gemini |
|---|---|---|---|
| Cache read discount | 90% off input | 50% off input | 75–90% off input |
| Cache write cost | 1.25× (5-min TTL) / 2× (1-hr TTL) | Free (automatic) | Free writes + $4.50/MTok/hr storage |
| Activation | Explicit cache_control | Automatic (zero code) | Implicit (auto) or explicit (manual) |
| Min cacheable tokens | 1,024–4,096 | 1,024 | 32,768 (explicit) |
| Cache TTL | 5 min or 1 hour | ~5–10 min (24 hr extended) | User-defined |
| Batch API stacking | 95% off (cache + batch) | 75% off (cache + batch) | N/A |
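For the explicit `cache_control` activation listed in the table, the sketch below shows roughly what a request body with a cached system prompt looks like. It only builds the JSON payload and makes no network call. The helper name, the `MODEL_ID` placeholder, and the `max_tokens` value are hypothetical, and the `ttl` field for the 1-hour option is an assumption to verify against the provider's current documentation.

```python
import json


def build_cached_request(model: str, shared_system_prompt: str,
                         user_message: str, one_hour_ttl: bool = False) -> dict:
    """Build a Messages-style request body whose system prompt is marked cacheable."""
    cache_control = {"type": "ephemeral"}
    if one_hour_ttl:
        # Assumption: the longer TTL is requested with a "ttl" field.
        cache_control["ttl"] = "1h"
    return {
        "model": model,
        "max_tokens": 1024,
        "system": [
            {
                "type": "text",
                "text": shared_system_prompt,
                # Everything up to and including this block is the cached prefix.
                "cache_control": cache_control,
            }
        ],
        "messages": [{"role": "user", "content": user_message}],
    }


if __name__ == "__main__":
    body = build_cached_request("MODEL_ID", "You are a contracts analyst.", "Summarize clause 4.")
    print(json.dumps(body, indent=2))
```

The design point is that the shared, stable part of the prompt goes first and carries the cache marker, while the per-user message stays outside the cached prefix.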

Real-world savings example (5,000 daily users, chatbot with shared system prompt) [15]:

| Model | Without Caching | With Caching | Monthly Savings |
|---|---|---|---|
| Claude Opus 4.6 | $4,612/mo | $481/mo | $4,131 (89%) |
| Claude Sonnet 4.6 | $2,767/mo | $289/mo | $2,478 (89%) |
| GPT-4.1 | $3,690/mo | $1,868/mo | $1,822 (49%) |

The stacking play: On Anthropic, combining the 90% cache discount with the 50% batch API discount yields an effective rate of 5% of base price. Claude Sonnet's $3.00/MTok input becomes $0.15/MTok — competitive with budget-tier models [13][14].
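The stacked rate is just the product of the two remaining fractions. This sketch assumes the discounts compose multiplicatively, which matches the 95% and 75% stacking figures in the comparison table; the function name is illustrative.

```python
def stacked_input_price(base_per_mtok: float, cache_discount: float,
                        batch_discount: float) -> float:
    """Effective $/MTok for cached input tokens sent through a batch API."""
    return base_per_mtok * (1.0 - cache_discount) * (1.0 - batch_discount)


if __name__ == "__main__":
    sonnet = stacked_input_price(3.00, cache_discount=0.90, batch_discount=0.50)
    print(f"Claude Sonnet 4.6 cached + batch: ${sonnet:.2f}/MTok")
    # Fraction of base price left with a 50% cache discount and a 50% batch discount.
    print(f"50% cache + 50% batch: {stacked_input_price(1.0, 0.50, 0.50):.0%} of base")
```

The effective rate applies only to tokens that are actually read from cache; cache writes and uncached tokens are billed at their own rates.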

Caching Caveats

- Anthropic's cache is workspace-isolated since February 2026; multi-workspace architectures lose cross-workspace sharing [14].

- Google's explicit caching storage fees ($4.50/MTok/hour for Pro) require ~40 full-context reads per hour to break even on a 10M-token cache [14].

- Bursty workloads with quiet periods see cache hit rates drop below 30%, eliminating most savings [8].

- OpenAI's automatic caching requires exact prefix matching — minor prompt variations cause cache misses [15].
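The hit-rate caveat can be quantified with a blended multiplier. The sketch below uses the Anthropic 5-minute figures from the comparison table (reads at 0.10× and writes at 1.25× the base input price) and makes a simplifying assumption: every miss on the cacheable prefix is billed as a cache write. The function names are illustrative.

```python
def blended_input_multiplier(hit_rate: float, read_multiplier: float = 0.10,
                             write_multiplier: float = 1.25) -> float:
    """Average price of the cacheable prefix relative to uncached input (1.0)."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return hit_rate * read_multiplier + (1.0 - hit_rate) * write_multiplier


def break_even_hit_rate(read_multiplier: float = 0.10,
                        write_multiplier: float = 1.25) -> float:
    """Hit rate at which caching costs exactly as much as not caching."""
    return (write_multiplier - 1.0) / (write_multiplier - read_multiplier)


if __name__ == "__main__":
    for hit_rate in (0.30, 0.87):
        multiplier = blended_input_multiplier(hit_rate)
        print(f"hit rate {hit_rate:.0%}: {multiplier:.3f}x base, "
              f"{1.0 - multiplier:.1%} saved")
    print(f"break-even hit rate: {break_even_hit_rate():.1%}")
```

Under these assumptions a 30% hit rate saves under 10% on the cached prefix, and below roughly a 22% hit rate caching costs more than it saves.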

5. When Big Windows Pay Off vs. RAG

The 1M-token context window is a specialized instrument, not a universal replacement for retrieval-augmented generation (RAG). Production data makes the tradeoffs stark.

The Cost and Latency Gap

| Metric | RAG Pipeline | 1M Long-Context Call |
|---|---|---|
| Per-query cost | ~$0.0001 | ~$2.00+ (GPT-4.1 at 1M tokens) |
| Cost ratio | 1× | ~1,250× [12] |
| End-to-end latency | ~1 second | 45–120 seconds [9][12] |
| Latency ratio | 1× | 30–60× |

At 10,000 queries/day, the cost difference between RAG and full-context becomes the dominant engineering constraint [12].

Retrieval Quality at Scale

Marketing benchmarks overstate long-context reliability. The MRCR v2 benchmark at 1M tokens reveals significant retrieval accuracy gaps [10]:

| Model | MRCR v2 Retrieval (1M tokens) |
|---|---|
| Claude Opus 4.6 | 78.3% |
| GPT-5.4 | 36.6% |
| Gemini 3.1 | 25.9% |

The "lost in the middle" problem persists: LLM performance follows a U-shaped curve across context position, with 20+ percentage point degradation for information buried in the middle of long contexts [12]. Most models experience measurable accuracy drops well before their advertised maximum context length — Llama-3.1-405B degrades after 32K tokens, GPT-4 after ~64K [12].

When Long Context Wins

Long context is the right choice for a specific set of tasks [12][16]:

- Global document understanding — legal contract review, codebase audits, cross-clause consistency checks where signal lives in interactions between parts, not individual chunks.

- Implicit/exploratory queries — "What are the most concerning parts of this agreement?" where the user doesn't know which section is relevant.

- Static small corpora (<100K tokens) — style guides, product specs, fixed reference documents that fit comfortably and benefit from full attention.

- One-off analytical tasks — due diligence, research synthesis where 30–60 second latency is acceptable and cost is secondary.

- Multi-hop reasoning within a single document — connecting facts from different sections that chunk-based retrieval might separate.

When RAG Wins

RAG remains the default for [12][16][17]:

- Large/dynamic corpora — anything over ~100K tokens or content that changes frequently.

- High query volume — the 1,250× cost gap makes long-context nonviable at thousands of queries/day.

- Interactive latency requirements — sub-2-second SLOs are incompatible with 45+ second long-context calls.

- Low relevance ratio — when <20% of the corpus is relevant to a typical query, sending everything wastes tokens and triggers the lost-in-the-middle effect.

- Auditability — RAG provides retrieval trails that compliance teams can inspect.

The Emerging Pattern: Intelligent Routing

The most cost-effective production architecture in 2026 combines both approaches [12][16]:

- Simple factual lookups → RAG (fast, cheap, precise)

- Complex synthesis requiring global understanding → Long context (if corpus fits)

- Routing classifier — even simple rule-based routing on query type and corpus size achieves 90%+ correct routing

Research from EMNLP 2024 on "Self-Route" demonstrated that letting the model decide whether it needs full context or focused retrieval improves accuracy while cutting computational cost [12].
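A rule-based router of the kind described above can be very small. The following is a hypothetical sketch, not the Self-Route method or any production system: the `Query` fields, the default thresholds (a 1M-token window and a 45-second minimum latency for long-context calls), and the rule order are all assumptions to tune per deployment.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    RAG = "rag"
    LONG_CONTEXT = "long_context"


@dataclass(frozen=True)
class Query:
    needs_global_synthesis: bool   # e.g. cross-clause review vs. a factual lookup
    corpus_tokens: int             # size of the material the query may need
    latency_budget_s: float        # end-to-end latency the caller can tolerate
    corpus_changes_often: bool = False


def route(query: Query, max_context_tokens: int = 1_000_000,
          min_long_context_latency_s: float = 45.0) -> Route:
    """Send a query to long context only when every precondition holds."""
    if not query.needs_global_synthesis:
        return Route.RAG                       # simple lookups: fast and cheap
    if query.corpus_changes_often:
        return Route.RAG                       # dynamic corpora favor retrieval
    if query.corpus_tokens > max_context_tokens:
        return Route.RAG                       # corpus does not fit the window
    if query.latency_budget_s < min_long_context_latency_s:
        return Route.RAG                       # interactive SLO rules out long calls
    return Route.LONG_CONTEXT


if __name__ == "__main__":
    print(route(Query(True, 80_000, 120.0)))   # contract review with a relaxed SLO
    print(route(Query(False, 80_000, 120.0)))  # factual lookup
```

Defaulting to RAG and treating long context as the exception keeps the expensive path opt-in, which fits the cost and latency gaps described earlier.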

6. Historical Price Trajectory

The cost of filling a context window has collapsed over three years [2]:

| Year | Model | Context | Input $/MTok | Cost to Fill |
|---|---|---|---|---|
| 2023 | GPT-4 | 8K | $30.00 | $0.24 |
| 2024 | GPT-4 Turbo | 128K | $10.00 | $1.28 |
| 2025 | GPT-5 | 1M | $1.25 | $1.25 |
| 2026 | Grok 4.1 Fast | 2M | $0.20 | $0.40 |

Context windows grew 250× while the cost to fill them grew less than 2×. Per-token prices fell roughly 150× over the same period. If the trend holds, 1M-token contexts at sub-$0.10/MTok input, today limited to budget models such as Gemini 2.0 Flash-Lite, should be available from multiple providers within 12–18 months.
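These ratios follow directly from the first and last rows of the table. The short sketch below recomputes them, treating 8K as 8,000 tokens and 2M as 2,000,000 tokens; the row tuples simply restate the table.

```python
# (year, model, context_tokens, input_price_per_mtok) restated from the table.
ROWS = [
    (2023, "GPT-4", 8_000, 30.00),
    (2024, "GPT-4 Turbo", 128_000, 10.00),
    (2025, "GPT-5", 1_000_000, 1.25),
    (2026, "Grok 4.1 Fast", 2_000_000, 0.20),
]


def cost_to_fill(context_tokens: int, price_per_mtok: float) -> float:
    """USD to fill the whole window once at the listed input price."""
    return context_tokens / 1_000_000 * price_per_mtok


first, last = ROWS[0], ROWS[-1]
context_growth = last[2] / first[2]
fill_cost_growth = cost_to_fill(last[2], last[3]) / cost_to_fill(first[2], first[3])
price_drop = first[3] / last[3]

print(f"context: {context_growth:.0f}x, cost to fill: {fill_cost_growth:.2f}x, "
      f"per-token price: {price_drop:.0f}x lower")
```

Note that the comparison mixes providers and model tiers (a 2023 flagship against a 2026 fast-tier model), so it describes the cheapest way to fill a large window rather than like-for-like price deflation.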

7. Practical Cost Optimization Playbook

Based on current pricing and tooling, the highest-impact optimizations in order of effort [8][13][14]:

| Tactic | Savings | Effort |
|---|---|---|
| Model routing (70% of calls to mini/nano tier) | 75–85% | Medium |
| Prompt caching (50–90% on repeated prefixes) | 50–90% on input | Low |
| Batch API (50% off, 24-hr turnaround) | 50% | Low |
| Cache + Batch stacking (Anthropic) | Up to 95% on input | Low |
| Context pruning (send only relevant tokens) | Variable, often 50%+ | Medium |
| Output optimization (structured outputs, max_tokens) | 20–30% on output | Low |

A team spending $15,000/month on LLM APIs can typically reduce to ~$3,600/month (76% reduction) by combining model routing, caching, and output optimization — without changing models or degrading quality [13].

8. Key Takeaways

- Flat-rate 1M context is now available from Anthropic at $3–5/MTok input with no surcharge. OpenAI and Google charge 1.5–2× premiums above 200–272K tokens.

- KV cache is the hardware bottleneck. A single 1M-token request can consume ~100 GB of GPU memory. PagedAttention and FP8 quantization are table-stakes optimizations for self-hosted inference.

- Prompt caching transforms the economics. Anthropic's 90% cache discount (stacking to 95% with batch) can make Claude Sonnet competitive with budget models at $0.15/MTok effective input.

- RAG remains cheaper by ~1,250× per query for retrieval workloads. Long context wins for global document understanding, implicit queries, and static corpora under 100K tokens.

- Retrieval quality varies wildly. Claude Opus 4.6 achieves 78% recall at 1M tokens on MRCR v2; GPT-5.4 manages 37%; Gemini 3.1 hits 26%. A context window you can't reliably retrieve from is a marketing number.

- The optimal architecture routes between RAG and long context based on query type, corpus size, and latency requirements.

References

[1] AI Cost Check, "Large Context Window Costs 2026" — https://aicostcheck.com/blog/large-context-window-costs-2026

[2] AI Cost Check, "Large Context Window Costs 2026: The Real Price of 1M+ Tokens" — https://aicostcheck.com/blog/large-context-window-costs-2026

[3] Anthropic, "1M context is now generally available for Opus 4.6 and Sonnet 4.6" — https://claude.com/blog/1m-context-ga

[4] APIScout, "LLM API Pricing 2026: GPT-5 vs Claude vs Gemini" — https://apiscout.dev/blog/llm-api-pricing-comparison-2026

[5] ScriptByAI, "GPT-5.4, Gemini 3.1, Claude 4.7, and More" — https://www.scriptbyai.com/gpt-gemini-claude-pricing/

[6] AI Cost Check, "Gemini vs GPT-5 vs Claude: 2026 Pricing Compared" — https://aicostcheck.com/blog/gemini-vs-gpt5-vs-claude

[7] IntuitionLabs, "AI API Pricing Comparison (2026)" — https://intuitionlabs.ai/articles/ai-api-pricing-comparison-grok-gemini-openai-claude

[8] UATGPT, "AI Model Pricing Decoded" — https://uatgpt.com/ai-model-comparison/ai-model-pricing/

[9] TokenMix, "1M Token Context Reality Check 2026" — https://tokenmix.ai/blog/1m-token-context-reality-check-2026

[10] ComputeLeap, "Claude's 1M Context Window Is Here" — https://www.computeleap.com/blog/claude-1m-context-window-guide-2026/

[11] Introl, "KV Cache Optimization: Memory Efficiency for Production LLMs" — https://introl.com/blog/kv-cache-optimization-memory-efficiency-production-llms-guide

[12] Tian Pan, "Long-Context Models vs. RAG: When the 1M-Token Window Is the Wrong Tool" — https://tianpan.co/blog/2026-04-09-long-context-vs-rag-production-decision-framework

[13] TokenMix, "Prompt Caching Guide 2026" — https://tokenmix.ai/blog/prompt-caching-guide

[14] TechPlained, "LLM Prompt Caching: Cut API Costs 90%" — https://www.techplained.com/llm-prompt-caching

[15] AI Cost Check, "Prompt Caching: Cut Your AI API Bill by 90%" — https://aicostcheck.com/blog/ai-prompt-caching-cost-savings

[16] AlphaCorp, "Is RAG Still Worth It in the Age of Million-Token Context Windows" — https://www.alphacorp.ai/blog/is-rag-still-worth-it-in-the-age-of-million-token-context-windows

[17] LightOn, "RAG to Riches" — https://www.lighton.ai/lighton-blogs/rag-to-riches