Agent Loop Cost Optimization

Agent systems are expensive not because any single call is costly, but because the loop that wraps tool use quietly rebills prior context on every turn. Understanding the compounding dynamics — and the countermeasures shipped by frontier labs in 2025–2026 — is now a core engineering skill. This note synthesizes public data on tool-call loop overhead, conversation trimming, summarization, and sub-agent spawning, with a focus on Anthropic's published numbers.

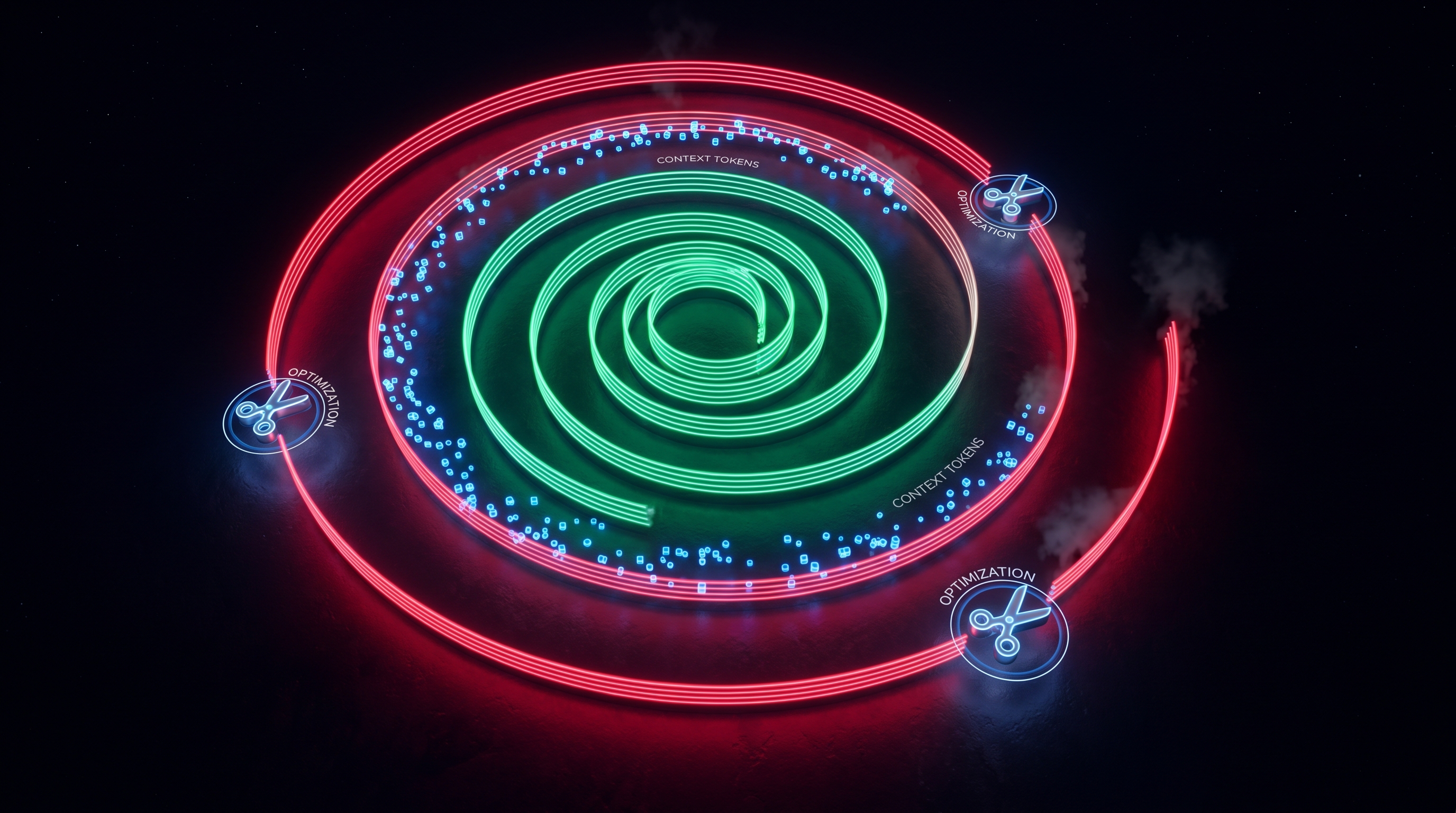

1. Why naive agent loops cost 5–40x more than they look

Every iteration of an agent loop re-sends the full conversation history to the model. That means tool outputs, reasoning traces, and previous assistant messages are paid for on each subsequent step. Augment Code describes this as a quadratic growth pattern: "Naive agent loops rebill prior context on every call, so input token cost grows quadratically as tool outputs and reasoning traces accumulate. A 20-step loop can consume over 10x the tokens a simple per-step estimate suggests" [1].

Tianpan models this concretely: a single-pass call with a 9,000-token context costs about $0.03, but running the agent for ten steps while naively appending tool results brings the cumulative context across all calls to roughly 472,000 tokens, which the post reports as a 43x increase over the single-call baseline [2]. Tool output is the primary driver. Average agent session length tripled from under 2,000 tokens in late 2023 to over 5,400 tokens by late 2025, and the bulk of that growth came from tool results accumulating in context [3].
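The compounding can be made concrete with a small sketch. It assumes, purely for illustration, that every step appends the same number of tokens to the context. The names `base_tokens` and `tokens_per_step` and the values used below are hypothetical and are not taken from the cited posts.

```python
def cumulative_input_tokens(base_tokens: int, tokens_per_step: int, steps: int) -> int:
    """Total input tokens billed when every call re-sends the full history.

    Call k (0-indexed) sends base_tokens + k * tokens_per_step tokens, so the
    total is steps * base_tokens + tokens_per_step * steps * (steps - 1) / 2,
    which is quadratic in the number of steps.
    """
    return sum(base_tokens + k * tokens_per_step for k in range(steps))


def billed_once_tokens(base_tokens: int, tokens_per_step: int, steps: int) -> int:
    """Naive estimate: every token counted once, as if history were never re-sent."""
    return base_tokens + tokens_per_step * (steps - 1)


if __name__ == "__main__":
    # Illustrative inputs only (assumed, not measured).
    for steps in (10, 20):
        rebilled = cumulative_input_tokens(9_000, 4_000, steps)
        once = billed_once_tokens(9_000, 4_000, steps)
        print(steps, rebilled, once, round(rebilled / once, 1))
```

With these made-up inputs the gap between the two estimates grows from 6x at 10 steps to about 11x at 20 steps, which is the shape of growth the quoted sources describe.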

A real-world case study from OpenClaw showed a single session burning 21.5 million tokens in a day, with cached prefix replay — not user input or model output — as the dominant cost. The model repeatedly reads massive historical context on each round, and without compaction this compounds dramatically [4].

2. The five-strategy pipeline: cheap defenses first

Claude Code treats context management as a pipeline ordered strictly by cost, running cheap local operations before resorting to expensive API calls [5]. The published layers, summarized across Anthropic's engineering posts and third-party analyses, look roughly like this:

- Tool-result clearing. Anthropic's cookbook explicitly calls out "tool-result clearing" as a distinct mechanism that "addresses the bloat from tool use itself" by dropping or truncating stale tool outputs once they are no longer referenced [6]. This is the cheapest defense because it is a deterministic, local string operation — zero extra API spend.

- Prefix caching. Anthropic's prompt caching turns the static system prompt, CLAUDE.md, and tool schemas into a cached prefix, so repeated loop iterations pay a discounted cache-read rate for that prefix and full input price only for the new tokens, rather than full price for the entire head of the conversation on every call.

- Conversation trimming. Sliding-window or relevance-scored trimming drops old turns before hitting the model. This is still local and free, but risks dropping information the agent later needs.

- Summarization / compaction. When trimming is too lossy, compaction distills the window into a high-fidelity summary. Anthropic describes it as letting "the agent continue with minimal performance degradation when the conversation gets long" [6]. This costs an extra model call and occasionally loses nuance, but preserves continuity on long tasks [7].

- Sub-agent spawning. The most expensive layer. The orchestrator delegates a verbose task to a sub-agent that runs in its own context window and returns only a summary, so verbose tool output never touches the main conversation [8].

The ordering matters: each later layer costs more per token saved, so cheap layers should always run first.
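As an illustration of the cheapest layer, here is a minimal sketch of tool-result clearing as a local operation. It is not Claude Code's implementation: the flat `role`/`content` message shape, the `PLACEHOLDER` text, and the `keep_last` parameter are all assumptions made for the example, and recency is used as a simple stand-in for "no longer referenced".

```python
from typing import Dict, List

PLACEHOLDER = "[tool result cleared]"  # hypothetical marker text


def clear_stale_tool_results(
    messages: List[Dict[str, str]], keep_last: int = 2
) -> List[Dict[str, str]]:
    """Return a copy of the history with all but the newest tool results blanked.

    Assumes a simplified message shape: {"role": ..., "content": ...},
    where tool outputs use role == "tool".
    """
    tool_indices = [i for i, m in enumerate(messages) if m["role"] == "tool"]
    # Everything except the newest `keep_last` tool results counts as stale.
    stale = set(tool_indices[:-keep_last]) if keep_last > 0 else set(tool_indices)
    return [
        {**m, "content": PLACEHOLDER} if i in stale else dict(m)
        for i, m in enumerate(messages)
    ]
```

Because the function only rewrites strings in a copied list, it adds no API spend, which is why a step like this can run before any of the more expensive layers.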

3. Conversation trimming and summarization: concrete numbers

Summarization has direct token cost (an extra LLM call) plus a quality tax — the summarizer may miss "stopping signals" that tell the agent it has enough information [7]. In practice, teams report 50–70% reductions in per-step input tokens when combining trimming with tool-output compression. One survey frames the headline figure as a "70% cost reduction" achievable from the combination of prompt compression, context-window management, model routing, caching, batch processing, output constraints, and early stopping [9].

Key operational knobs:

- Truncate verbose tool output before injection. Keep only the relevant slice (e.g., the failing test output, not the entire log) [3].

- Threshold-based compaction. Trigger compaction at, say, 70% of the context window rather than at overflow, so the agent still has headroom to reason.

- Avoid large tool outputs in long-term context. OpenClaw's post-mortem identifies this as the biggest lever [4].

- Model routing for summarization. Run the compaction call on a cheaper model (Haiku) even when the main loop uses Sonnet or Opus.
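Two of the knobs above, truncation before injection and threshold-based compaction, can be sketched as small local helpers. This is a hedged illustration: the function names, the 40-line default, and the choice to keep the tail of the output (on the assumption that failures tend to appear at the end of a log) are hypothetical, and the 70% default simply mirrors the example threshold in the list.

```python
def truncate_tool_output(text: str, max_lines: int = 40) -> str:
    """Keep only the last `max_lines` lines of a tool output.

    Assumes the most relevant part (for example a failing test) is at the end.
    """
    lines = text.splitlines()
    if len(lines) <= max_lines:
        return text
    dropped = len(lines) - max_lines
    return f"[{dropped} earlier lines omitted]\n" + "\n".join(lines[-max_lines:])


def should_compact(used_tokens: int, window_tokens: int, threshold: float = 0.70) -> bool:
    """True once the context reaches `threshold` of the window, leaving headroom."""
    return used_tokens >= threshold * window_tokens
```

A loop would call `truncate_tool_output` on each tool result before appending it, and check `should_compact` before each model call to decide whether to pay for a summarization pass.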

4. Sub-agent spawning: the 4x and 15x multipliers

Anthropic's multi-agent research system post anchors the public cost discussion. Two numbers are now canonical:

- Single agents use ~4x more tokens than a chat interaction.

- Multi-agent systems use ~15x more tokens than chat. [10][11]

Dividing the two gives the multi-agent coordination premium over a single-agent loop: about 3.75x. Ready Solutions frames this bluntly: "It is the unit-cost premium you pay for coordination. It is real. It is published. It does not go away with better prompting" [12].
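The arithmetic behind the premium is simple enough to keep in a budgeting helper. The sketch below only encodes the two published multipliers; the dictionary keys and function names are hypothetical, and the multipliers are rough averages rather than guarantees for any particular workload.

```python
# Approximate multipliers relative to a chat interaction, as quoted above.
TOKEN_MULTIPLIER = {"chat": 1.0, "single_agent": 4.0, "multi_agent": 15.0}


def estimated_tokens(chat_tokens: float, mode: str) -> float:
    """Scale a chat-sized token estimate by the multiplier for `mode`."""
    return chat_tokens * TOKEN_MULTIPLIER[mode]


def coordination_premium() -> float:
    """Multi-agent cost relative to a single-agent loop (15 / 4)."""
    return TOKEN_MULTIPLIER["multi_agent"] / TOKEN_MULTIPLIER["single_agent"]
```

For example, a task that would take 10,000 tokens as a chat exchange is estimated at 40,000 tokens for a single agent and 150,000 for a multi-agent system under these multipliers.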

Anthropic justifies the 15x hit with a 90.2% performance improvement on research-shaped tasks over single-agent Claude Opus 4 [10][11]. Three factors explained 95% of the performance variance in their testing: token usage (which alone accounted for 80%), number of tool calls, and model choice. Critically, upgrading from Sonnet 3.7 to Sonnet 4 beat doubling the token budget on the older model, so model quality matters as well as token quantity [11].

Anthropic's own sizing guidance in the same post: simple fact-finding uses 1 agent with 3–10 tool calls; direct comparisons use 2–4 sub-agents with 10–15 tool calls each; complex research uses 10+ sub-agents with clearly divided responsibilities. A lead agent typically runs 3–5 sub-agents in parallel [11].

5. Claude Code "agent teams" — the 7x number

In March 2026 Anthropic shipped agent teams in Claude Code, with an additional published multiplier: "Agent teams use approximately 7x more tokens than standard sessions when teammates run in plan mode, because each teammate maintains its own context window and runs as a separate Claude instance" [13]. A representative agent-team session from TokenCost reports ~1.5M input / ~200K output tokens, costing roughly $7.50 on Sonnet 4.6 and dropping to about $3.00 with Haiku subagents plus caching [14]. That is a cost cut of about 60% from changing only the subagent model and caching, consistent in direction with the $1/M vs $3/M input price delta between Haiku 4.5 and Sonnet 4.6 [14].
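The session figures can be checked with a short cost calculation. The $3/M input price comes from the text above; the $15/M output price is an assumption that is not stated in the source and is used here because it reproduces the quoted $7.50 total. The function name is hypothetical.

```python
def session_cost_usd(
    input_tokens: int,
    output_tokens: int,
    input_price_per_m: float,
    output_price_per_m: float,
) -> float:
    """Cost of one session given per-million-token prices in USD."""
    return (
        input_tokens / 1_000_000 * input_price_per_m
        + output_tokens / 1_000_000 * output_price_per_m
    )


# ~1.5M input and ~200K output tokens, as reported for the example session.
# The 15.00 output price is an assumed value, not a figure from the source.
sonnet_cost = session_cost_usd(1_500_000, 200_000, 3.00, 15.00)

# Relative saving when the same session is reported at about $3.00.
saving = (sonnet_cost - 3.00) / sonnet_cost
```

Under these assumptions the input side contributes $4.50 and the output side $3.00, and the drop from $7.50 to $3.00 works out to a 60% reduction.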

Anthropic's own cost-management docs give the operational checklist for teams [15]:

- Use Sonnet for teammates; reserve Opus for complex reasoning.

- Keep teams small — token usage is roughly proportional to team size.

- Keep spawn prompts focused; CLAUDE.md, MCP servers, and skills load automatically and inflate the starting context.

- Clean up teams when work is done; idle teammates keep consuming tokens.

- Delegate verbose operations (tests, docs fetches, log processing) so "the verbose output stays in the subagent's context while only a summary returns to your main conversation" [15].

6. A practical cost-optimization checklist

Combining the public guidance:

- Measure before optimizing. Track input/output tokens per loop step; the cost explosion is almost always in cached prefix replay, not final outputs [4].

- Compress tool outputs at injection time. Biggest single lever; addresses the source of the 5,400-token average session size [3].

- Cache the static prefix. System prompt, tool schemas, and stable project context should be cacheable across turns.

- Trim then compact. Cheap sliding-window trimming first; summarization only when trimming would lose required state [6][7].

- Route models by task. Haiku for summarization, file reads, simple extraction; Sonnet for coordination; Opus only for synthesis or hard reasoning [14][15].

- Spawn sub-agents only when the 3.75x coordination premium is justified — research-shaped, parallelizable tasks where result value clearly exceeds token cost [12][16]. For linear coding tasks, a single strong agent usually wins.

- Bound team size and lifetime. Each extra teammate adds roughly one full context window of cost; idle teammates still bill [13][15].

- Stop the loop. Explicit stopping criteria and output constraints contribute materially to the reported ~70% total savings [9].
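For the "cache the static prefix" item, the sketch below builds a request body that marks the system prompt as a cache breakpoint using the `cache_control` field from Anthropic's prompt caching feature. It only constructs a dictionary and sends nothing. The function name, the `max_tokens` value, and the model id passed in are placeholders chosen for illustration.

```python
from typing import Any, Dict, List


def build_cached_request(
    system_prompt: str,
    tools: List[Dict[str, Any]],
    messages: List[Dict[str, Any]],
    model: str,
) -> Dict[str, Any]:
    """Build a Messages API request body with the static prefix marked cacheable.

    The breakpoint sits on the system block; tool definitions come earlier in
    the prefix, so they fall inside the cached span as well.
    """
    return {
        "model": model,  # placeholder: pass whichever model id you actually use
        "max_tokens": 1024,  # arbitrary illustrative value
        "tools": tools,
        "system": [
            {
                "type": "text",
                "text": system_prompt,
                "cache_control": {"type": "ephemeral"},
            }
        ],
        "messages": messages,
    }
```

The design point is to keep everything before the breakpoint byte-for-byte stable across turns; anything that changes per step, such as timestamps or fresh tool output, belongs in `messages` after the cached prefix.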

The overall picture from 2025–2026 data is consistent: agent loops are expensive by default, but most of the cost is structural and avoidable. Tool-result clearing, compaction, prefix caching, and careful sub-agent scoping together turn "15x more than chat" from a default into a choice you make only when the task's value clearly clears that bar.

References

[1] AI Agent Loop Token Costs: How to Constrain Context — https://www.augmentcode.com/guides/ai-agent-loop-token-cost-context-constraints

[2] Why Your Agent Costs 5x More Than You Think — https://tianpan.co/blog/2026-04-20-token-economy-multi-turn-tool-use-agent-cost

[3] The Injection Decision That Shapes Context Quality — https://tianpan.co/blog/2026-04-20-tool-output-compression-context-injection

[4] OpenClaw Burns 21.5 Million Tokens in a Day — https://www.bee.com/67225.html

[5] Context Management at Scale (Claude Code vs. Hermes Agent) — https://kenhuangus.substack.com/p/chapter-6-context-management-at-scale

[6] Context engineering: memory, compaction, and tool clearing — https://platform.claude.com/cookbook/tool-use-context-engineering-context-engineering-tools

[7] Hybrid Context Compaction: Managing Token Growth in Agentic Loops — https://www.reinforcementcoding.com/blog/hybrid-context-compaction-agentic-loops

[8] How to Use Sub-Agents for Codebase Analysis Without Hitting Context Limits — https://www.mindstudio.ai/blog/sub-agents-codebase-analysis-context-limits

[9] Token Optimization: Reduce Agent Costs by 70% — https://theaiuniversity.com/docs/cost-optimization/token-optimization

[10] How Anthropic's Multi-Agent AI Beats Single Models by 90% — https://www.implicator.ai/inside-claudes-research-engine-are-teams-of-ai-agents-the-future-of-artificial-intelligence/

[11] Anthropic's Multi-Agent Secret: 15x Cost, 90% Better Results — https://www.youtube.com/watch?v=ob_c_pokSa0

[12] The Four Sub-Agent Orchestration Patterns — https://readysolutions.ai/blog/2026-04-18-sub-agent-orchestration-patterns-claude/

[13] Manage costs effectively — Claude Code Docs — https://docs.anthropic.com/en/docs/claude-code/costs

[14] Claude Code cost per session — https://tokencost.app/blog/claude-code-cost-per-session

[15] Manage costs effectively — Claude Code Docs (agent teams) — https://docs.anthropic.com/en/docs/claude-code/costs

[16] Anthropic Subagent: The Multi-Agent Architecture Revolution — https://www.myaiexp.com/en/insights/anthropic-subagent-multi-agent-revolution
